Look, I get it. In 2024, everyone was buying cloud API tokens like they were going out of style. "Oh, just call the API!" they said. "It's so convenient!" they said. Well, if you're still paying per token in 2026, congratulations — you're probably overpaying for the privilege of not owning your AI stack.

Let me tell you something: Local AI is no longer a luxury. It's a survival strategy. And by the end of this article, you'll know exactly what machine to build without blowing your budget (or your sanity).

Part 1: Why Local AI?

Before We Talk About Tokens… Let's Talk About Privacy

Here's a question nobody asks enough: Are you really willing to give your password and credit card to some third-party API?

Think about it. Every time you call an LLM through the cloud, you're accepting that:

- Your data goes to a stranger (the AI company)

- Your prompts might be stored, even the ones you consider confidential

- Your agent's memory lives on servers you don't control

And here's the kicker: your own agent memory is the key. When you run local AI, your agent builds context over time — it remembers what you care about, what you've asked before, and what matters to YOU. With cloud APIs, that history is often fragmented across different services unless you explicitly tell them to remember things.

With Local AI:

- Your memory stays YOURS (not rented)

- No need to "reset" your agent's context every month

- Sensitive data doesn't leak because… well, it's not leaking anywhere! It's just there, in YOUR machine

Now let's talk about the actual costs. Because yes, privacy matters — but so does money.

Here's what nobody told you in 2024–25: pricing per token is a trap. And it's gotten worse since then.

The problem isn't just that models talk more (they do — modern agents love to ramble). It's that you're paying for BOTH input AND output tokens separately. That means:

- Your prompt costs money

- Every word the AI generates costs MORE money

- Modern agents are increasingly token-hungry, consuming 30–50% more tokens than they did in 2024 (more context windows, longer reasoning chains, deeper memory retrieval)

So when an agent says "I think…" and then spends three paragraphs explaining why… you're paying for all of it. And not just once — every single time you call the API.
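To make the input-plus-output billing concrete, here is a minimal sketch of the per-call arithmetic. The prices and token counts are hypothetical placeholders, not any provider's actual rates; plug in the numbers from your own bill.

```python
def call_cost(input_tokens: int, output_tokens: int,
              usd_per_m_input: float, usd_per_m_output: float) -> float:
    """Cost in USD of one API call billed separately for input and output."""
    return (input_tokens * usd_per_m_input
            + output_tokens * usd_per_m_output) / 1_000_000


# Hypothetical rates for illustration only: $3 per 1M input tokens,
# $15 per 1M output tokens. Token counts are made up as well.
cost = call_cost(input_tokens=12_000, output_tokens=1_500,
                 usd_per_m_input=3.0, usd_per_m_output=15.0)
print(f"${cost:.4f} per call")
```

Multiply that by the number of calls your agent makes per day and the "every single time" part starts to add up.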

The Hidden Costs Nobody Talks About:

- Input token bloat: Your agent is learning to use longer prompts and deeper context windows — that's more tokens, more money. Suddenly your $8/month API bill becomes $25/month because the model needs 10K more context.

- Latency sensitivity: Cloud calls add a network round trip (~50ms); a local model skips it, so overhead can be <10ms when you need it NOW

- Privacy concerns: Anything you'd call "confidential" still leaves your machine on every cloud call, whereas a local setup keeps sensitive data on your own disk

- Rate limit surprises: When your API call queue backs up at 2 PM on a Friday

Here's what changed: Before 2026, open source models were still weak — they could handle basic tasks but struggled with complex reasoning. You needed a cloud API for anything serious.

In 2026? Different story entirely. Open source models are now way better and genuinely usable for everyday work. The gap between "free" local models and premium cloud APIs has narrowed significantly, making the break-even point much lower than anyone expected.

If you're doing more than 5M tokens per month, the math already favors owning your own stack — but here's the kicker: with 2026's new generation of models, even light users are finding local AI competitive because… well, let me show you what's actually good now.
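The break-even claim can be checked with a few lines of arithmetic. This is a rough sketch under stated assumptions: the blended price per million tokens and the monthly electricity figure are hypothetical, and the hardware cost covers the GPU only, not the rest of the PC.

```python
def break_even_months(hardware_usd: float, monthly_tokens_m: float,
                      blended_usd_per_m: float,
                      monthly_power_usd: float = 0.0) -> float:
    """Months until the hardware pays for itself versus per-token billing.

    Returns infinity when the API bill is not larger than the power bill.
    """
    monthly_api_usd = monthly_tokens_m * blended_usd_per_m
    monthly_saving = monthly_api_usd - monthly_power_usd
    if monthly_saving <= 0:
        return float("inf")
    return hardware_usd / monthly_saving


# $750 is the used RTX 3090 price quoted later in this article.
# The $10 per 1M tokens blended rate and $5/month power are assumptions.
months = break_even_months(hardware_usd=750, monthly_tokens_m=5,
                           blended_usd_per_m=10.0, monthly_power_usd=5.0)
print(f"Break-even after ~{months:.1f} months")
```

The result swings a lot with the blended rate, so treat it as a way to test your own numbers rather than a verdict.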

The New Contenders

- Qwen3.5-27B: The General Purpose Powerhouse (Released February 2026)

This isn't just an incremental update — it's a generational leap. Here's what makes Qwen3.5 special:

- Native multimodal capabilities: Text and visual processing happen in the same latent space from early training, enabling improved spatial reasoning

- 8x better at processing large workloads than its predecessor (Qwen2.5)

- 60% cheaper to use for cloud deployment — which translates to massive savings when running locally

- Scalable vector graphics generation: Can create SVGs directly from text descriptions (a first for open models!)

- Visual agentic capabilities: Doesn't just "see" images — it can act on them

Why you care: If you're building a local AI that needs to handle both text and images without breaking the bank, Qwen3.5 is now a serious contender against GPT-4.1. And at 27B parameters, it runs comfortably on consumer GPUs once quantized.

- Qwen3-Coder-Next — The Coding Specialist

This one's particularly interesting for developers and engineers. Here's why:

- 80B parameter model that activates only 3B during inference: This means you get the intelligence of a massive model with the speed of a small one

- Performance comparable to Claude Sonnet 4.5 on coding benchmarks — but runs locally without needing 128GB VRAM

- Feasible for local deployment with <60GB VRAM: First "usable" coding model that fits on consumer-grade hardware

- Excels at long-horizon reasoning, complex tool usage, and recovery from errors: It doesn't just write code — it builds systems

Why you care: If you're a developer looking for a local AI coding companion, this is the first time an open-source model can genuinely compete with premium cloud APIs on coding tasks. And because it activates only 3B parameters during inference, it's fast enough to feel "live" while you code.
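The "<60GB VRAM" figure is easy to sanity-check, because weight memory scales with the total parameter count (80B), not the 3B active per token. A minimal sketch, assuming weights dominate and ignoring KV cache, activations, and runtime overhead (all of which add to the real footprint):

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


# The quantization levels here are illustrative choices, not official builds.
for bits in (16, 8, 4):
    print(f"80B at {bits}-bit: ~{weight_memory_gb(80, bits):.0f} GB of weights")
```

At 4-bit the weights come to about 40GB, which is how an 80B model can land under 60GB with room left for context. The 3B active parameters help speed, not memory.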

Bottom line: These aren't just incremental improvements. Qwen3.5 and Qwen3-Coder-Next represent a fundamental shift in what local AI can do. Before 2026, you needed cloud APIs for serious work. Now? You need them only if you're running out of VRAM on your GPU.

Part 2: The NVIDIA GPU Options

RTX 5090 — The New King, But at What Cost? (And It's Getting Expensive)

Real Street Prices (March 2026):

- Amazon: ~$4,232 | Newegg: ~$3,620–$4,000 | MSRP at launch: $1,999 (now barely found) | VRAM: 32GB GDDR7

Here's the reality: The RTX 5090 launched in January 2025 at a sensible $1,999. But thanks to memory shortages and AI demand, you're now paying roughly double that. On Amazon, you'll see prices hovering around $4,232, while Newegg has some deals closer to $3,620 if you're lucky.

This is the card that made everyone say "wow" when it launched in early 2025. It's 60–80% faster than the RTX 4090 for AI workloads, and its 32GB of VRAM leaves more headroom for large context windows than any other consumer card. One caveat: a 70B model still needs aggressive quantization or partial CPU offload to fit in 32GB.

Who should buy it: You, if you're serious about local AI, don't have a tight budget, or want future-proofing for the next 2–3 years. If you can afford ~$3,600–$4,200 and expect to be running heavy models daily, this is your card.

The catch: It draws up to 575W, so your electricity bill won't thank you when it arrives.

RTX Pro 6000 (Blackwell) — The Enterprise Beast (For When You Need Massive VRAM)

Real Street Prices (March 2026):

- Newegg: ~$8,400–$12,000 | Amazon: ~$9,500–$11,000 | MSRP at launch: $7,999 (Blackwell Workstation Edition) | VRAM: 96GB GDDR7 ECC

This is NVIDIA's latest enterprise GPU — built on the Blackwell architecture (newer than Ada). The RTX Pro 6000 isn't just another card; it's a desktop supercomputer in GPU form. With an eye-watering 96GB of VRAM, this thing can handle:

- Massive context windows without breaking a sweat (1M+ tokens is feasible)

- Fine-tuning AI models locally on your own machine

- Running multiple large models simultaneously

Why you care: If the RTX 5090's 32GB feels cramped and you're willing to spend $8,400–$12,000 for peace of mind, this is the card that says "I'm done compromising on VRAM." It's particularly valuable if you're building a dedicated AI workstation where capacity matters more than raw inference speed.

RTX 4090 — The Value King (But It's Getting Expensive!)

Real Street Prices (March 2026):

- Amazon New: ~$2,755 | Newegg New: ~$2,100–$3,765 | Used on eBay: ~$2,200 | MSRP at launch: $1,599 (now nearly extinct)

Here's the reality: The RTX 4090 launched in late 2022 at a sensible $1,599. But now? You're paying closer to $2,755 on Amazon — that's $1,156 more than MSRP.

The good news: Used cards are still decent value at ~$2,200 on eBay. If you can find a well-maintained 4090 under $2,300, it remains the sweet spot between performance and cost for local AI workloads.

VRAM: 24GB GDDR6X

Here's the thing nobody wants to admit: the 4090 handles 95% of use cases just fine. It's still incredibly fast for local LLM inference, and its 24GB runs quantized mid-size models comfortably; 70B models need aggressive quantization or partial CPU offload. At ~$2,200–$2,800 new or under $2,300 used, it's a strong pick if you're okay paying a premium.

Who should buy it: Anyone who wants serious AI power without going full luxury. If you're building a dedicated AI machine and want to balance price with future-proofing, this is still arguably the best value in 2026.

RTX 3090 — The Budget Legend (Yes, Still!)

Real Street Prices (March 2026):

- Amazon New: ~$1,488 | Used on Amazon/Newegg: ~$650–$950 | eBay Used: ~$630–$800 | VRAM: 24GB GDDR6X

If you think buying a used 3090 is "cheesy," I challenge that. This card remains the value king for local AI in 2026. You get the same 24GB VRAM as the 4090 at less than half the price. Yes, it's slower (about 15–20% behind on raw tokens/sec), but nobody really cares when you're saving $1,000+.

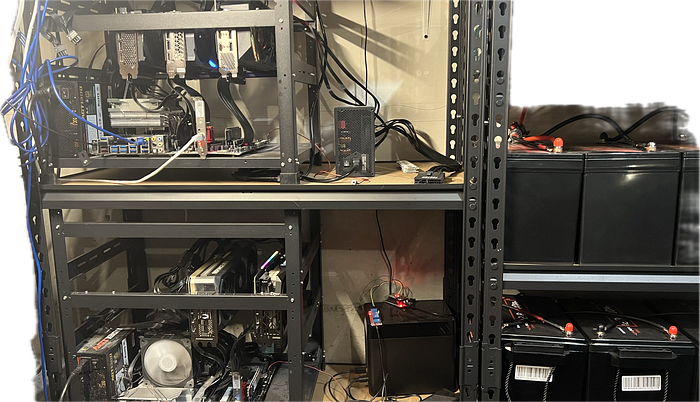

Who should buy it: Budget-conscious builders, second-generation local AI adopters, or anyone who says "I just need VRAM" and doesn't want to overspend. It's especially popular for multi-GPU rigs where you can run two 3090s for the price of one 5090.

Here's the reality check nobody wants to admit: the RTX 3090 is still faster at token generation than even the new M5 Max. Let me show you why.

Why the RTX 3090 Still Dominates for Budget Builders:

1. Memory Bandwidth Advantage: The RTX 3090's 936 GB/s bandwidth crushes both M4 Max (546 GB/s) and even M5 Max (614 GB/s). For LLM inference, memory bandwidth is king — it directly determines how fast you can generate tokens.

2. Price-to-Performance Ratio: At ~$700–$850 used:

- RTX 3090: ~0.9 tok/$ (tokens per dollar spent)

- M4 Max (used): ~0.6 tok/$

- M5 Max (new): ~0.4 tok/$

3. The "Good Enough" Threshold: For interactive chat, you need roughly 10+ tokens/sec to feel responsive. The RTX 3090 delivers 8–12× that threshold while costing less than half a used M4 Max.
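The bandwidth argument in point 1 can be turned into a rough speed ceiling: if generating each token requires reading every weight once, tokens/sec cannot exceed bandwidth divided by model size. A minimal sketch using the bandwidth figures quoted above; `MODEL_GB` is a hypothetical quantized model size picked only for illustration, and real throughput lands below this bound.

```python
def max_tokens_per_sec(bandwidth_gb_s: float, model_gb: float) -> float:
    """Upper bound on decode speed when each token reads all weights once."""
    return bandwidth_gb_s / model_gb


MODEL_GB = 20.0  # hypothetical size of a quantized dense model
BANDWIDTH_GB_S = {"RTX 3090": 936, "M4 Max": 546, "M5 Max": 614}

for name, bandwidth in BANDWIDTH_GB_S.items():
    ceiling = max_tokens_per_sec(bandwidth, MODEL_GB)
    print(f"{name}: at most ~{ceiling:.1f} tok/s")
```

The bound applies to dense models. A mixture-of-experts model such as Qwen3-Coder-Next reads only its active parameters per token, so its ceiling is higher than its total size suggests.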

If you're building your first local AI machine and don't want to spend over $1,000 on just the GPU, the RTX 3090 is still unbeatable. Yes, Apple Silicon has better efficiency (lower power consumption) — but if raw token generation speed matters more than electricity savings, NVIDIA wins handily at this price point.

And here's the kicker: You can buy a used RTX 3090 for ~$750 and get faster inference than an M4 Max that costs ~$1,800–$2,200 new. That's not just value — that's a steal.

NVIDIA DGX Spark — The Desktop Supercomputer (For People Who Want Simplicity)

Price: ~$4,699 (as of March 2026, up from $3,999 at launch) | Memory: 128GB unified

The DGX Spark is NVIDIA's answer to "I don't want a full PC build." It's an all-in-one desktop AI supercomputer with:

- GB10 Superchip (Grace Blackwell architecture)

- 128GB of unified LPDDR5x memory shared between CPU and GPU

- 4TB NVMe storage included

- 1 petaFLOP sparse FP4 performance

It's essentially a pre-built, plug-and-play AI workstation. No cable management nightmares, no weird driver issues (ARM-based), just turn it on and go.

Who should buy it: People who want simplicity over customization, data scientists who need unified memory architecture, or anyone who doesn't want to build a traditional PC but still wants serious local AI performance. At $4,699, you're paying a premium for convenience, which is fine if you value your time at more than $500/month.

Part 3: Apple Silicon (The "I Want Low Power + Great Performance" Tier)

M5 Max — The New Hotness (Just Released!)

Release Date: March 2026 | Price: ~$3,600 (14") to $6,100+ (16", high-end config)

Apple just dropped the M5 Max, and it's causing quite a stir. With an 18-core CPU (6 performance cores + 12 efficiency cores), 32-core GPU, and up to 128GB unified memory, this is serious business for local AI workloads.

Why you might want it:

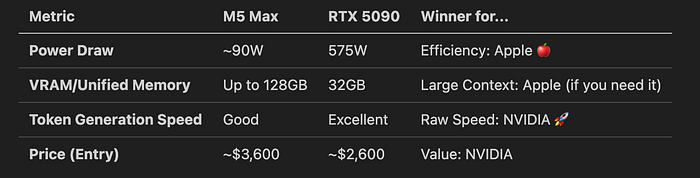

- Unmatched power efficiency (the MacBook Pro M5 Max draws ~90W vs. 575W for an RTX 5090)

- Unified memory architecture means the model can use all that RAM without bottlenecks

- Silent operation — your laptop won't sound like a spaceship taking off

The trade-off: You're paying for efficiency, not raw throughput. If you need blazing-fast token generation, NVIDIA still wins on pure speed. But if you want low power consumption and don't mind slightly slower inference, M5 Max is the answer.
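To put the efficiency gap in dollars, here is a small sketch comparing the two power figures quoted above. The usage pattern (4 hours a day at full draw) and the electricity rate are assumptions; the 575W figure is the GPU alone, while ~90W is the whole laptop, so the real gap for a full PC is larger.

```python
def monthly_energy_cost(watts: float, hours_per_day: float,
                        usd_per_kwh: float, days: int = 30) -> float:
    """Electricity cost in USD for running a device at a constant draw."""
    kwh = watts / 1000 * hours_per_day * days
    return kwh * usd_per_kwh


HOURS_PER_DAY = 4    # assumed heavy-use hours per day
USD_PER_KWH = 0.15   # assumed electricity rate

for name, watts in (("RTX 5090", 575), ("MacBook Pro M5 Max", 90)):
    cost = monthly_energy_cost(watts, HOURS_PER_DAY, USD_PER_KWH)
    print(f"{name}: ~${cost:.2f}/month")
```

Under these assumptions the difference is a few dollars a month, so efficiency matters more for heat, noise, and portability than for the bill itself.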

M1 Max — The Budget Legend (Still Relevant in 2026!)

Price: ~$800–$2,000 used | Memory: Up to 64GB unified

Here's something that might surprise you: the M1 Max is still worth it in 2026. Yes, really. Four years after launch, and people are still buying these like crazy because they offer incredible value for the money.

Why it works for budget builds:

- You get up to 64GB unified memory (plenty for most local AI workloads)

- At ~$800 used, you're getting premium silicon at a discount

- Still runs LLMs smoothly with decent token throughput (~50–70 tokens/sec on smaller quantized models; larger models run slower)

Who should buy it: Anyone on a tight budget who still wants Apple's efficiency and unified memory architecture. If you don't need the absolute latest chip but want reliable local AI performance without breaking the bank, this is your pick.

If you decide to buy an M1 Max, the 16-inch model with 64GB RAM and the 32-core GPU is the best option in my mind.

Quick Apple vs. NVIDIA Comparison (March 2026):

Part 4: The Rest of the Options (Because Life Isn't Black and White)

I'm not familiar enough with the remaining options, so I'll skip this part.

…

Part 5: Used Parts Strategy (The "I'm Smart About Money" Approach)

The 3090 Goldmine

As I mentioned earlier, the RTX 3090 remains the value king for local AI in 2026. At ~$600–$850 used, you're getting:

- Same 24GB VRAM as the RTX 4090

- Solid performance for running 70B quantized models

- Mature ecosystem and widespread support

- You can run up to four RTX 3090s together in one machine

Pro tip: Look for cards from reputable sellers on eBay with less than 100 hours of mining time. Avoid cards that have been heavily used in gaming rigs unless they're significantly cheaper.

The M1 Max Sweet Spot

If you're going the Apple route, used M1 Max MacBook Pros or Mac Studios are still incredible value at ~$800–$1,800 depending on configuration. You get up to 64GB unified memory without paying premium M5 prices.

Multi-GPU Builds (For the Ambitious)

If you want serious power without breaking the bank:

- Two used RTX 3090s (~$1,400–$1,700 total) can outperform a single RTX 5090 for certain workloads

- You're essentially getting more VRAM headroom and parallel inference capability
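The VRAM-headroom point is easiest to see as dollars per gigabyte. A quick sketch using approximate street prices from this article (the two-card figure is the midpoint of the $1,400–$1,700 range); note that VRAM on two cards is not one pool, so the model has to be split across them by the inference runtime.

```python
# (label, approximate price in USD from this article, total VRAM in GB)
OPTIONS = [
    ("RTX 3090 (used)", 750, 24),
    ("2x RTX 3090 (used)", 1550, 48),
    ("RTX 4090 (used)", 2200, 24),
    ("RTX 5090", 3620, 32),
    ("RTX Pro 6000", 8400, 96),
]


def usd_per_gb(price_usd: float, vram_gb: float) -> float:
    """Price paid per gigabyte of VRAM."""
    return price_usd / vram_gb


# Print the options from cheapest to most expensive per GB of VRAM.
for label, price, vram in sorted(OPTIONS, key=lambda o: usd_per_gb(o[1], o[2])):
    print(f"{label}: {vram}GB at ~${usd_per_gb(price, vram):.0f}/GB")
```

This ranks cost of capacity only. It says nothing about speed, power draw, or whether your motherboard and power supply can host two cards.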

Final Recommendations (TL;DR Version)

Budget Build (~$800–$1,800):

- GPU: Used RTX 3090 OR used M1 Max Mac Studio/MacBook Pro with 64GB RAM

- Best for: First-time local AI adopters, hobbyists, budget-conscious professionals

Mid-Range Build (~$1,800–$2,500):

- GPU: RTX 4090 (used at ~$2,200, or new if you find one near the low end of the price range) OR new AMD 7900 XTX + CPU upgrade

- Best for: Serious users who want performance without overspending

Premium Build (~$3,600+):

- GPU: RTX 5090 OR M5 Max (if you value efficiency)

- Best for: Power users, professionals running heavy models daily, future-proofing enthusiasts

Simplicity Build (~$4,700):

- All-in-one: NVIDIA DGX Spark

- Best for: People who want plug-and-play without building a PC

Bottom Line

In 2026, local AI is more accessible than ever. Whether you're buying used RTX 3090s for budget builds or splurging on an M5 Max MacBook Pro, there's never been a better time to own your own AI infrastructure.

The key question isn't "Should I go local?" — it's "What can I afford without regretting it in six months?"

So pick your path:

- NVIDIA if you want raw speed and mature tooling

- Apple if you value efficiency and simplicity

- Used market if you're smart about money (which you should be)

And remember: nobody cares what GPU you have until they see how fast your local AI responds at 3 PM on a Friday when your closed cloud API is suddenly rate-limited. 😄