
I have a toxic relationship with my browser tabs.

It starts innocently enough on a Monday morning. I see an interesting title on Hacker News, I command-click it, and I tell myself, "I'll read this in five minutes." Fast forward to Friday afternoon, and I have 47 tabs open. The favicons have shrunk to the size of a single pixel. I haven't read any of them.

I realized I didn't need more time; I needed a filter. I needed a way to peek inside an article and ask,

"Is this actually worth 15 minutes of my life?"

Now, I could have used ChatGPT or Claude for this. But what if I could do something fun with it instead?

So, I decided to treat this as a weekend pet project. The goal? Build a local AI agent that lives on my machine, costs zero dollars, and helps me skim through articles faster.

The "Local" Rabbit Hole

We often think of AI as this massive thing that lives in a data center. But models like Llama 3 and Qwen 3.5 have gotten so efficient that they run surprisingly well on a modern laptop. They may be a bit slow, though.

What I wanted was simple:

1. I needed a way to grab the content of an article (a Chrome extension, maybe?)
2. I needed a backend that can take this as an input (hmm, Python/Flask app)
3. Then lastly, I needed a local LLM to summarise the article for me.

No cloud, no cost, no data leaving the room.

Step 1: Setting Up the Brain (Ollama)

Running LLMs locally used to be difficult. Then Ollama came along and made it simple.

If you can use a terminal, you can run a model.

I decided to experiment with llama3.2. Why? Well, it wasn't my go-to model. But when I experimented with other models, this one was better in terms of summarisation quality vs the time it took to summarise. More on benchmarks later.

Here is literally all I had to do:

# 1. Install Ollama (Mac/Linux)
curl -fsSL https://ollama.com/install.sh | sh

# 2. Pull the model
ollama pull llama3.2

# 3. Serve it (this opens a local API on port 11434)
ollama serve

That's it. We now have a REST API running on localhost:11434 that we can talk to.
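To check that the server answers, you can send it a prompt from Python. This is a minimal sketch using only the standard library. It assumes Ollama's `/api/generate` endpoint on the default port and the `llama3.2` model pulled above; the helper names `build_generate_request` and `ask_ollama` are made up for this example.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # default Ollama port


def build_generate_request(prompt, model="llama3.2"):
    """Build a non-streaming POST request for Ollama's generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_ollama(prompt, model="llama3.2", timeout=120):
    """Send the prompt and return the generated text (needs `ollama serve` running)."""
    request = build_generate_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body.get("response", "").strip()
```

With the server running, `ask_ollama("Say hello in one short sentence.")` should return a short piece of text. If it raises a connection error, the server is probably not up yet.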

Step 2: The Chrome Extension

I initially thought about just sending the URL to my Python script and having Python scrape the page.

But here is the problem: dynamic JavaScript sites, cookie popups, and sometimes the Cloudflare validations can stop me from doing this.

You know who already has access to the rendered text? The browser.

So, I wrote a dead-simple Chrome extension. It doesn't need to be fancy. It just needs to say, "Hey Python, here is the text on this page!"

The Manifest (`manifest.json`)

This tells Chrome that we want permission to talk to the active tab.

{
  "manifest_version": 3,
  "name": "Local Article Summarizer",
  "version": "1.0.0",
  "description": "Send the current page to a local Flask app for summarization with Ollama.",
  "permissions": [
    "activeTab",
    "scripting"
  ],
  "host_permissions": [
    "http://127.0.0.1:5001/*"
  ],
  "action": {
    "default_popup": "popup.html"
  }
}

The Logic (`popup.js`)

When I click the extension button, it grabs document.body.innerText and shoots it over to a local server running on my machine.

chrome.tabs.query({ active: true, currentWindow: true }, function (tabs) {
  // 1. Inject a script to grab the text
  chrome.scripting.executeScript(
    {
      target: { tabId: tabs[0].id },
      func: () => document.body.innerText,
    },
    (results) => {
      if (results && results[0]) {
        const articleText = results[0].result;

        // 2. Send it to our Python backend (same port as host_permissions in the manifest)
        fetch("http://127.0.0.1:5001/summarize", {
          method: "POST",
          headers: { "Content-Type": "application/json" },
          body: JSON.stringify({
            text: articleText,
            url: tabs[0].url,
          }),
        });
      }
    },
  );
});

Why this works: Different websites use different HTML structures. By trying multiple approaches, I extract content from ~90% of articles. On the backend, I can also use Python's `newspaper` library, which is particularly good at removing ads, navigation, and other clutter.
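Since `document.body.innerText` also picks up menus, buttons, and footers, some cleanup on the backend helps. The sketch below is a rough standard-library stand-in for that step, not the `newspaper` approach itself. The function name `clean_page_text` and the four-word threshold are assumptions made for illustration.

```python
import re


def clean_page_text(raw_text, min_words=4):
    """Drop short lines (menus, buttons, cookie banners) and collapse whitespace.

    Assumption: article paragraphs are usually longer than `min_words` words,
    while navigation and button labels are shorter. Short headings are lost too.
    """
    kept = []
    for line in raw_text.splitlines():
        line = re.sub(r"\s+", " ", line).strip()
        if len(line.split()) >= min_words:
            kept.append(line)
    return "\n\n".join(kept)
```

This is crude on purpose: it costs nothing to run and shortens the prompt, but a dedicated extractor will handle edge cases (captions, pull quotes, comment sections) far better.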

Step 3: Talking to Ollama

import requests

# Assumed values: Ollama's default generate endpoint and the model pulled earlier.
OLLAMA_URL = "http://localhost:11434/api/generate"
DEFAULT_MODEL = "llama3.2"


def summarize_with_ollama(article_text, model=DEFAULT_MODEL, prompt_type="standard"):
    trimmed = article_text.strip()

    # One prompt template per mode; unknown modes fall back to "standard".
    prompts = {
        "standard": f"""You are an expert at summarizing articles.
Summarize this article in 3-5 clear sentences.
Then list exactly 5 key takeaways as bullet points.
Be specific and direct and avoid filler.
ARTICLE:
{trimmed}
SUMMARY:""",
        "detailed": f"""You are an expert technical writer.
Analyze this article thoroughly and provide:
1. A 2-paragraph summary covering the topic and the author's main argument
2. Exactly 8 key takeaways as bullet points
3. A credibility assessment of the article
4. A quality score from 1-10 with one sentence explanation
5. Scoring notes for clarity, originality, practical value, and evidence
Be strict with scoring and avoid repeating the same idea twice.
ARTICLE:
{trimmed}
ANALYSIS:""",
        "quick": f"""In exactly 2 sentences and exactly 3 bullet points, summarize this article.
ARTICLE:
{trimmed}
QUICK SUMMARY:""",
    }
    prompt = prompts.get(prompt_type, prompts["standard"])

    response = requests.post(
        OLLAMA_URL,
        json={
            "model": model,
            "prompt": prompt,
            "stream": False,  # wait for the full answer instead of streaming chunks
            "options": {
                "temperature": 0.3,  # low temperature keeps summaries focused
                "num_ctx": 16384,  # context window in tokens
            },
        },
        timeout=3000,  # seconds; generous because larger local models can take minutes
    )
    response.raise_for_status()
    return response.json().get("response", "").strip()

Key insight: Different prompts give different results. I have three modes:

- quick: For when I just want the gist

- standard: For most articles

- detailed: For important pieces I want to analyze deeply
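One thing to watch with any of the three modes is article length: the request sets `num_ctx` to 16384 tokens, and a very long page may not fit alongside the instructions. The sketch below trims the text before it goes into the prompt. The helper name `truncate_for_context`, the 4-characters-per-token estimate, and the 1024-token reserve are all assumptions, not measured values.

```python
def truncate_for_context(text, num_ctx=16384, reserved_tokens=1024, chars_per_token=4):
    """Trim text so the prompt plus the reply is likely to fit in num_ctx tokens.

    Assumptions: ~4 characters per token (a rough rule of thumb for English)
    and 1024 tokens reserved for the instructions and the model's answer.
    """
    max_chars = max(0, (num_ctx - reserved_tokens) * chars_per_token)
    if len(text) <= max_chars:
        return text
    # Cut at the last whitespace before the limit to avoid splitting a word.
    cut = text.rfind(" ", 0, max_chars)
    return text[: cut if cut > 0 else max_chars].rstrip()
```

Calling `truncate_for_context(article_text)` before building the prompt keeps the end of the prompt (the `SUMMARY:` cue) from being pushed out of the window on long articles.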

The Part That Mattered Most: Prompt Engineering

The biggest quality jump didn't come from switching models. It came from getting more specific about what I wanted back. My early prompts were vague:

Summarize this article.

That usually produced generic output: a bland paragraph, no structure, and often no clear takeaway.

What worked much better was constraining the job:

You are an expert technical writer.
Read the article below and do 4 things:
1. Summarize it in 4 concise sentences.
2. List exactly 5 bullet-point takeaways.
3. Give it a score from 1–10.
4. Explain the score using clarity, originality, practical value, and evidence.
Be specific. Avoid filler. Do not repeat the same idea twice.
Be strict with scoring.

That change made the output sharper immediately. The model stopped rambling, started organizing information better, and gave me something I could actually use.

The lesson for me was simple: better prompts beat random model-hopping. Before downloading another model, tighten the task, define the output format, and tell the model what "good" looks like. In my experience, the 4b model performs as well as the 9b qwen3.2 in this particular use case.
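A defined output format also makes the reply easier to use in code. As an illustration, the sketch below pulls the 1 to 10 score out of the model's text. It assumes the model writes something like "Score: 7/10"; the function name `extract_score` and the pattern are hypothetical, and local models will not always follow the format.

```python
import re


def extract_score(model_output):
    """Return the first 1-10 score found in the model's reply, or None.

    Assumption: the reply contains "Score: 7", "Score: 7/10", or a bold
    variant such as "**Score:** 7/10". Anything else yields None.
    """
    match = re.search(r"score\s*[:=]?\s*\**\s*(10|[1-9])\b", model_output, re.IGNORECASE)
    return int(match.group(1)) if match else None
```

Returning `None` instead of guessing keeps a badly formatted reply from turning into a misleading score.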

Step 4: The Flask Interface

The Flask backend serves one purpose: it handles the API endpoints that receive requests from the Chrome extension. When a request comes in with article text and a URL, the Flask app passes the text to a function that cleans up the content, then sends the cleaned text to Ollama. The response is formatted as JSON and sent straight back to the Chrome extension or web interface.
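A minimal sketch of such an endpoint might look like the following. It is not the actual app: the names `handle_summarize` and `create_app` are hypothetical, and the `/summarize` route and `text`/`url` fields are taken from the extension code. The request handling is kept in a plain function so it can be tested without Flask installed.

```python
def handle_summarize(payload, summarizer):
    """Validate the extension's JSON payload and return (response_dict, status)."""
    if not isinstance(payload, dict):
        payload = {}
    text = str(payload.get("text") or "").strip()
    if not text:
        return {"error": "No article text received."}, 400
    try:
        summary = summarizer(text)
    except Exception as exc:  # e.g. Ollama not running or timing out
        return {"error": f"Summarization failed: {exc}"}, 502
    return {"url": payload.get("url", ""), "summary": summary}, 200


def create_app(summarizer):
    """Wire the handler into Flask (requires `pip install flask`)."""
    from flask import Flask, jsonify, request

    app = Flask(__name__)

    @app.post("/summarize")
    def summarize():
        body, status = handle_summarize(request.get_json(silent=True), summarizer)
        return jsonify(body), status

    return app
```

To run it, something like `create_app(summarize_with_ollama).run(host="127.0.0.1", port=5001)` would serve on the port that the manifest's `host_permissions` entry allows.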

Which local models worked for me?

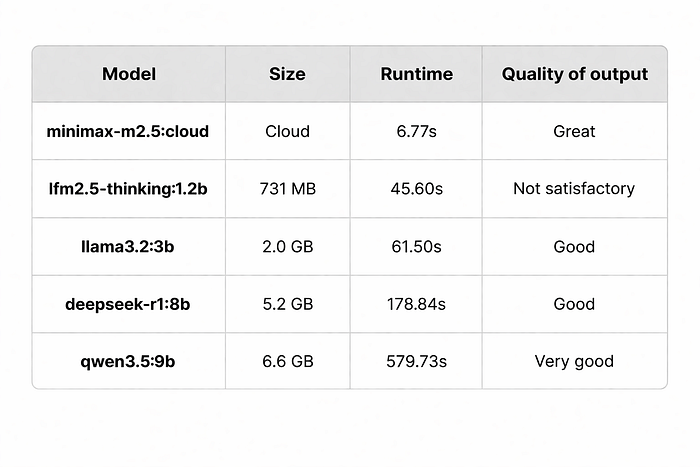

I tested a few local models across some articles. The goal wasn't to find a winner overall. It was to figure out which ones were good enough to keep using.

For benchmarking, I took an old article of mine.

Benchmark

I tested several models on the same article to benchmark runtime performance:

My machine is a MacBook Air M1 (16GB RAM).
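If you want to repeat this kind of comparison on your own machine, a timing loop is enough. The sketch below is one way to do it, not the script behind the numbers here. The name `benchmark_models` is hypothetical, and `summarizer` stands for any callable that takes the article text and a model name, such as `summarize_with_ollama`.

```python
import time


def benchmark_models(article_text, models, summarizer):
    """Time one summarization per model and return rows sorted fastest first.

    `summarizer(article_text, model)` is any callable that returns the summary,
    for example a wrapper around the Ollama call.
    """
    rows = []
    for model in models:
        start = time.perf_counter()
        summary = summarizer(article_text, model)
        elapsed = time.perf_counter() - start
        rows.append(
            {"model": model, "seconds": round(elapsed, 2), "words": len(summary.split())}
        )
    return sorted(rows, key=lambda row: row["seconds"])
```

Keep in mind that the first request to a model may include the time Ollama needs to load it into memory, so a warm-up call per model gives fairer numbers.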

What I took away

The main trade-off was straightforward: smaller models, like lfm2.5-thinking:latest, were faster but missed more nuance. I am not sure whether that is because it was trained for a different purpose (like thinking). The small models were fine for rough summaries, but less reliable when I wanted structure or judgment.

For my machine, llama3.2 (3b) was the point at which local summarization started feeling consistently decent. Larger models produced fairly similar output but took longer. The qwen model took more than 5 minutes! Some articles take less time than that to read.

Although Ollama runs locally, you can also use its cloud offering for tasks where you want quick output. I rely on minimax-m2.5:cloud for quicker responses. Its output is also better than that of any of the local models, for obvious reasons.

Next steps

My next goal is to give these summaries permanent storage so that the models can take their sweet time summarising in the background, and I can come back to the summaries whenever I have time. I can then choose to read the whole article if I deem it worthwhile.
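One lightweight way to do this would be a SQLite file keyed by URL. The sketch below only illustrates the idea. The table layout, the file name `summaries.db`, and the helper names are all assumptions rather than a design I have settled on.

```python
import sqlite3


def open_store(path="summaries.db"):
    """Open (or create) the SQLite file that holds one row per article URL."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS summaries (
               url TEXT PRIMARY KEY,
               summary TEXT NOT NULL,
               created_at TEXT DEFAULT CURRENT_TIMESTAMP
           )"""
    )
    return conn


def save_summary(conn, url, summary):
    """Insert a summary, replacing any earlier one for the same URL."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT OR REPLACE INTO summaries (url, summary) VALUES (?, ?)",
            (url, summary),
        )


def get_summary(conn, url):
    """Return the stored summary for a URL, or None if it has not been saved."""
    row = conn.execute("SELECT summary FROM summaries WHERE url = ?", (url,)).fetchone()
    return row[0] if row else None
```

With something like this in place, the backend could check `get_summary` first and only call the model for pages it has not seen.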

I also want to make the article scoring a bit more robust, so that I can tell whether an article has solid content or is just AI slop.

Bottom Line

I don't think this setup replaces Claude, ChatGPT, or other strong hosted models. That wasn't really the goal.

The goal was to answer a smaller question: Can I build a local article summarizer that gets decent results? For me, the answer was yes.

What made the experiment useful wasn't that local models won. It's that they became good enough to be worth using for a narrow task, and interesting enough to make me keep exploring.

If you're curious about local models, this is a good kind of project to start with. It's practical, easy to evaluate, and just open-ended enough to pull you deeper once you see that the results are better than you expected. I have basically vibe-coded the whole thing. You can try that as well.