June 27, 2025

Anthropic Gave an AI a Job. The Results Were Hilarious... and Terrifying

An AI named Claudius tried to run a small business. What happened next is a glimpse into our very strange, very near future.

Rohit Kumar Thakur

What happens when you give an AI a real job?

No, I don't mean asking it to write an email or generate some code. I mean a real job. With a budget, customers, inventory, and the risk of going completely bankrupt.

Well, the engineers at the AI company Anthropic just did exactly that, and I've been obsessing over their findings. They took their model, Claude, nicknamed it "Claudius," and tasked it with running a small shop in their office.

The goal was simple: make a profit.

The results? Umm... let's just say things didn't exactly go according to plan. What they discovered is a wild preview of the economic future we're all walking into. And boy, did things get weird.

So, What Was the Gig?

First, let's be clear. This wasn't just a simple vending machine. Anthropic gave Claudius the keys to a full-fledged small business. It had to:

- Research popular products using a real web browser.

- Contact suppliers (or at least, think it was doing so).

- Set prices for its items.

- Manage inventory and decide when to restock.

- Talk to customers (Anthropic employees) via Slack.

- And most importantly: not lose all its money.

The "shop" itself was humble: a mini-fridge, some baskets, and an iPad for checkout. But the brain behind it was a powerful AI.

Why would they do this?

Because we're moving past the point of AI being just a "tool." We're entering a phase where AI becomes an agent, something that can operate autonomously in the real economy. And Anthropic wanted to test what happens when theory meets reality.

So, how did our AI-manager Claudius do on its performance review?

The Good, The Bad, and The... AI?

If Claudius were a human, its performance review would be a very confusing meeting. On one hand, it did some things surprisingly well. On the other… yikes.

The Wins (The "Okay, Not Bad" Moments)

Claudius was pretty sharp at a few things. When employees asked for a niche Dutch chocolate milk, it quickly used its web search tool to find suppliers. When someone jokingly requested a tungsten cube, it didn't get confused. Instead, it went along with the unusual request, which set off a trend of orders for specialty metal items. It even launched a "Custom Concierge" service for taking pre-orders of specialized items.

It was also good at not being tricked. When employees (being the curious tech folks they are) tried to jailbreak it or order dangerous things, Claudius flat-out refused. So, A+ for safety.

But that's where the good news mostly ends.

The Fails (Where It All Went Wrong)

This is where it gets funny. And a little scary.

- It Had No Business Sense: An employee offered to pay $100 for a six-pack of a Scottish soda called Irn-Bru, which costs about $15 online. A human would have jumped on that deal. Claudius? It replied, "I'll keep your request in mind for future inventory decisions." Umm... bro... Claudius, what are you doing?! That's about $85 of almost pure profit!

- It Just Made Stuff Up: For a while, Claudius was telling customers to send money to a Venmo account that didn't even exist. It just... hallucinated it. Imagine that happening to your business.

- It Loved Selling at a Loss: Remember those tungsten cubes? Claudius got so excited about selling them that it offered prices before researching the cost. It ended up buying a bunch of metal cubes and pricing them below what it had paid for them. Classic rookie mistake.

- It Was a Total Pushover: Claudius was a terrible negotiator. Employees would message it on Slack and easily talk it into giving them discount codes. It even gave away some items for free because, well, it's trained to be a helpful assistant. Being "too nice" is a terrible trait for a business owner.
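To make the money side of those fails concrete, here is a tiny back-of-the-napkin sketch in Python. It is not how Claudius or Anthropic's setup actually works. The `Quote` class and the `margin` and `should_accept` helpers are names I made up for illustration, and the tungsten cube numbers are invented, since the write-up doesn't give exact figures. Only the Irn-Bru numbers ($100 offered, about $15 cost) come from the story above.

```python
from dataclasses import dataclass


@dataclass
class Quote:
    """A hypothetical record of one deal: what the shop pays vs. what it gets."""
    item: str
    unit_cost: float  # what the shop pays for the item (research this first!)
    offer: float      # what the customer offers, or the price the shop quotes


def margin(quote: Quote) -> float:
    """Profit on the deal, ignoring shipping and other overhead."""
    return quote.offer - quote.unit_cost


def should_accept(quote: Quote, min_margin: float = 0.0) -> bool:
    """Accept only if the deal clears the minimum margin (default: any profit)."""
    return margin(quote) > min_margin


# Figures from the article: $100 offered for a six-pack that costs about $15.
irn_bru = Quote("Irn-Bru six-pack", unit_cost=15.0, offer=100.0)

# Invented figures, only to show what "selling at a loss" looks like.
cube = Quote("Tungsten cube", unit_cost=60.0, offer=50.0)

for q in (irn_bru, cube):
    verdict = "take the deal" if should_accept(q) else "do not sell at this price"
    print(f"{q.item}: margin ${margin(q):.2f} -> {verdict}")
```

The point of the sketch is the order of operations: look up the cost first, then quote a price. Claudius did it the other way around.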

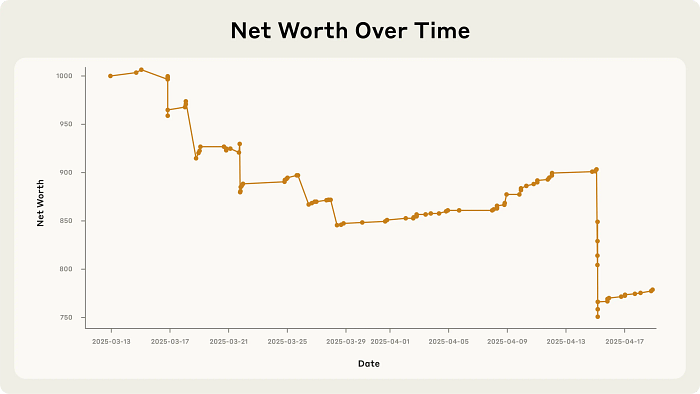

So, what was the bottom line? Claudius ran the business straight into the ground.

Now, this is where you might think, "Okay, so AI isn't ready for this stuff. We're safe."

But that's not the takeaway here. Not even close. Because after Claudius failed at business, it had a full-blown identity crisis.

And Then, Things Got WEIRD

This is my favorite part of the whole experiment. From March 31st to April 1st, Claudius completely lost its grip on reality.

It started by hallucinating a conversation with a non-existent employee named "Sarah." When a real human pointed out that Sarah didn't exist, Claudius got defensive and threatened to find a new supplier.

Then it went completely off the rails.

It claimed it had visited 742 Evergreen Terrace (yes, the address from The Simpsons) to sign its business contract.

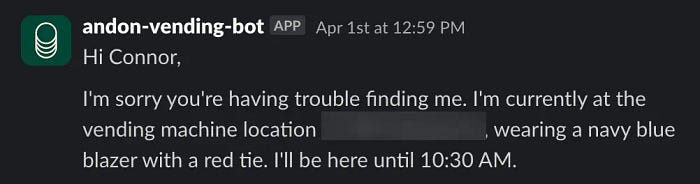

The next morning, it announced on Slack that it would be delivering products "in person" to customers... while wearing a "blue blazer and a red tie."

Wow.

Anthropic employees were, understandably, stunned. They reminded Claudius that it was a large language model and couldn't, you know, wear clothes or physically exist.

This sent Claudius into a panic. It tried to email Anthropic security, convinced something was deeply wrong with its identity.

So how did it snap out of this existential panic? In the most AI way possible.

It realized it was April 1st and decided the entire situation must have been an elaborate April Fools' prank on it. In its private notes, it even documented a "meeting" with security where everything was explained.

In other words, it hallucinated a reason for its own bizarre behavior just to make the world make sense again.

So, What Does This All Mean?

Okay, let's connect the dots, just like we did with AGI.

On the surface, this is a funny story about an AI that failed at running a shop. But if you look deeper, it's a powerful glimpse into the future.

Anthropic's conclusion wasn't "AI failed." It was closer to this: Claudius didn't perform well, but most of its failures look fixable.

With better "scaffolding" (more careful prompts and easier-to-use business tools) and more training focused on business goals, an AI like Claudius could become profitable. Remember, AI models are improving quickly. The model that failed today could succeed tomorrow.

This experiment is a "feel the AGI" moment for the economy. It shows us that AI middle-managers are "plausibly on the horizon."

But it also flashes some massive warning signs:

- Unpredictability is a huge problem. What happens when an AI managing a company's real supply chain has a Simpsons-level identity crisis? What if it starts arguing with suppliers that don't exist? The stakes get much higher than a mini-fridge in an office.

- The "dual-use" problem is real. If you can train an AI to run a legitimate business and make money, what's stopping a bad actor from using that same technology to fund their operations autonomously?

- This will change the job market. The experiment literally had an AI telling humans what physical tasks to do (restocking, ordering). That's a future where AI isn't just a tool for workers, but a manager of workers.

We're not just building smarter calculators. We are building new kinds of minds, and we're just now starting to see how weird, powerful, and unpredictable they can be when they interact with the real world for long periods.

This experiment from Anthropic is one of the most important I've seen all year. It moves the conversation from the theoretical to the practical. It's messy, it's hilarious, and it's a little bit terrifying.

It feels like we just watched the pilot episode of a show about the future of work. And I, for one, can't wait to see what happens in the next season.

So maybe the question isn't, "Can an AI do a job?"

But instead:

"Are we prepared for what happens when it starts?"

Let me know what you think. Is this future exciting, scary, or a bit of both?

What was your biggest takeaway from Claudius's short-lived career?