Most founders and writers treat Reddit like a social feed. They doomscroll, hope to spot a trend, and maybe save a comment or two.

That's not research. That's guessing.

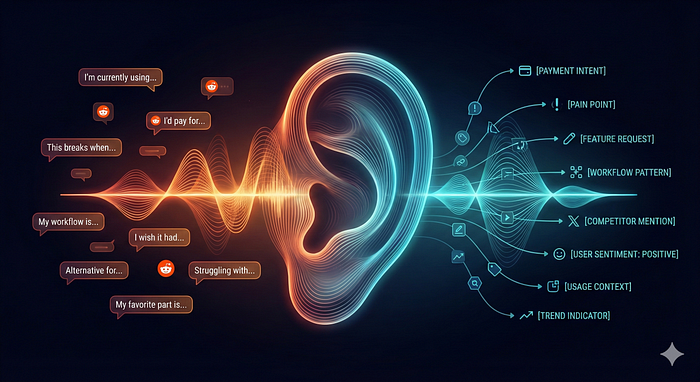

The reality is that Reddit is one of the largest public archives of unmet needs on the internet. People aren't just chatting there; they are explicitly describing software that is broken, services they would pay more for, and problems they are currently solving with duct tape and spreadsheets.

But the signal is buried in noise. If you read threads manually, you miss the patterns. You get distracted by arguments, memes, and ranking algorithms.

There is a systematic way to strip out the noise.

It doesn't require a scraping bot or Python scripts. It relies on a hidden feature built into every Reddit URL and a specific way of prompting an LLM.

I stopped "looking for ideas" and started running this workflow instead. Here is how I use raw data to find exactly what people are trying to buy.

Why Reddit Threads Are Better Than Customer Interviews

Traditional market research has a problem: people lie. Not intentionally — they just don't know what they actually want until they're in the middle of trying to solve it.

Ask someone in an interview, "Would you pay for X?" and they'll give you a socially acceptable answer. Watch them struggle with a problem in a forum thread, and they'll tell you exactly what's broken, which workarounds they've tried, and how much friction they're willing to tolerate before they give up.

Reddit threads — especially in smaller, niche subreddits — capture people in the moment of pain. They're not performing for a researcher. They're asking strangers for help because the current solutions aren't working.

The advantage isn't speed. It's context. You see the problem, the attempted solutions, the trade-offs they're making, and the language they use to describe all of it. That's data you can't get from a survey.

The Workflow: From Thread to Insight

Here's the process I use to extract patterns from Reddit conversations that most people miss.

Step 1: Pull the full thread data

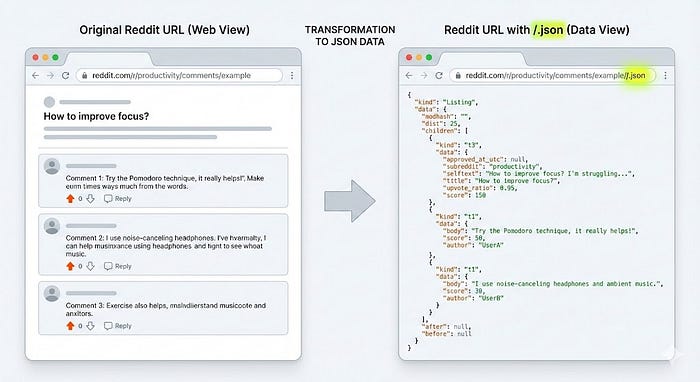

Reddit makes this easy. Take any thread URL and add /.json to the end. You get the conversation (replies, nested threads, upvote counts, timestamps) in a structured format.

Example: https://www.reddit.com/r/productivity/comments/example/.json

This gives you the thread in one shot. No manual copying, no replies you scrolled past. One caveat: on very large threads, Reddit collapses some replies into "more" placeholders, so check for those before assuming you have every comment.
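If you'd rather not copy and paste by hand, the same step can be scripted. This is an optional sketch, not part of the workflow itself. The function names, the User-Agent string, and the sample data are placeholders I made up. The layout it assumes (a two-element list with the post first and the comment tree second, where "t1" marks a comment and "more" marks collapsed replies) is how thread responses are typically structured, so verify it against a real response before relying on it.

```python
import json
import urllib.request


def thread_json_url(thread_url: str) -> str:
    """Turn a thread URL into its JSON variant by appending '/.json'."""
    base = thread_url.split("?", 1)[0].rstrip("/")
    return base if base.endswith(".json") else base + "/.json"


def fetch_thread(thread_url: str) -> list:
    """Download the raw JSON. Anonymous requests may be throttled or refused."""
    request = urllib.request.Request(
        thread_json_url(thread_url),
        headers={"User-Agent": "thread-research-sketch/0.1"},  # placeholder value
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.load(response)


def flatten_comments(listing: dict, depth: int = 0):
    """Yield one flat dict per comment, walking nested replies depth-first."""
    for child in listing["data"]["children"]:
        if child["kind"] != "t1":  # skip "more" stubs (collapsed replies)
            continue
        data = child["data"]
        yield {
            "author": data.get("author"),
            "score": data.get("score"),
            "created_utc": data.get("created_utc"),
            "depth": depth,
            "body": data.get("body", ""),
        }
        replies = data.get("replies")
        if isinstance(replies, dict):  # an empty string means no replies
            yield from flatten_comments(replies, depth + 1)


# Invented sample in the assumed shape, so the sketch runs without network access.
sample_thread = [
    {"kind": "Listing", "data": {"children": [
        {"kind": "t3", "data": {"title": "How do you track client invoices?"}},
    ]}},
    {"kind": "Listing", "data": {"children": [
        {"kind": "t1", "data": {
            "author": "user_a", "score": 12, "created_utc": 1700000000.0,
            "body": "I'm currently using two tools plus a spreadsheet.",
            "replies": {"kind": "Listing", "data": {"children": [
                {"kind": "t1", "data": {
                    "author": "user_b", "score": 4, "created_utc": 1700000300.0,
                    "body": "Same here, it breaks every month.", "replies": "",
                }},
                {"kind": "more", "data": {"count": 3, "children": ["abc"]}},
            ]}},
        }},
    ]}},
]

comments = list(flatten_comments(sample_thread[1]))
for comment in comments:
    print("  " * comment["depth"] + f"{comment['author']}: {comment['body']}")
```

To run it against a live thread, call `flatten_comments(fetch_thread(url)[1])`. The flat list is easier to skim, filter, and trim than the raw nested JSON.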

Step 2: Feed it to an LLM

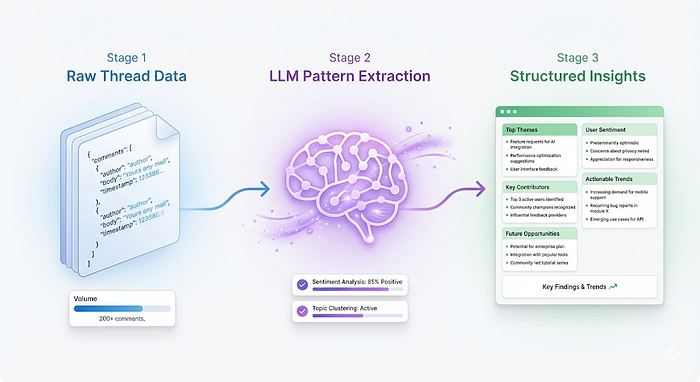

Copy the JSON output and pass it to Claude, ChatGPT, or any model with a large context window. The goal isn't summarization — it's pattern extraction.

Use this prompt:

"Analyze this Reddit thread for unmet user needs. Identify: 1) The core problem people are trying to solve, 2) Gaps in current solutions mentioned, 3) Workarounds people are using, 4) Language patterns that indicate willingness to pay, 5) Repeated frustrations across multiple comments. Output as structured findings, not summaries."

The model will pull out themes you'd miss reading manually: the same complaint phrased five different ways, implied needs people don't state directly, signals about price sensitivity buried in offhand remarks.
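Raw JSON carries a lot of fields the model doesn't need, and long threads can overflow a context window. One way around that, sketched below, is to render the comments as indented text and put the prompt on top. It assumes you already have comments as flat dicts with `author`, `score`, `depth`, and `body` keys. The `max_chars` budget and both function names are my own choices, not anything the models require. The script only builds the text; you still paste it into the model yourself.

```python
PROMPT = (
    "Analyze this Reddit thread for unmet user needs. Identify: "
    "1) The core problem people are trying to solve, "
    "2) Gaps in current solutions mentioned, "
    "3) Workarounds people are using, "
    "4) Language patterns that indicate willingness to pay, "
    "5) Repeated frustrations across multiple comments. "
    "Output as structured findings, not summaries."
)


def render_thread(comments, max_chars=60_000):
    """Render comments as indented lines, stopping once the budget is spent."""
    lines = []
    used = 0
    for comment in comments:
        indent = "  " * comment["depth"]
        body = " ".join(comment["body"].split())  # collapse newlines and extra spaces
        line = f"{indent}[{comment['author']} | score {comment['score']}] {body}"
        if used + len(line) > max_chars:
            lines.append("[thread truncated to fit the size budget]")
            break
        lines.append(line)
        used += len(line) + 1
    return "\n".join(lines)


def build_prompt(comments, max_chars=60_000):
    """Combine the analysis prompt with the rendered thread."""
    return f"{PROMPT}\n\n--- THREAD ---\n{render_thread(comments, max_chars)}"


# Invented sample comments for illustration.
sample_comments = [
    {"author": "user_a", "score": 12, "depth": 0,
     "body": "I'm currently using two tools plus a spreadsheet."},
    {"author": "user_b", "score": 4, "depth": 1,
     "body": "Same here,\nit breaks every month."},
]

print(build_prompt(sample_comments))
```

Keeping the score and the nesting in each line lets the model weigh heavily upvoted complaints and follow who is replying to whom.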

Step 3: Map the real pain

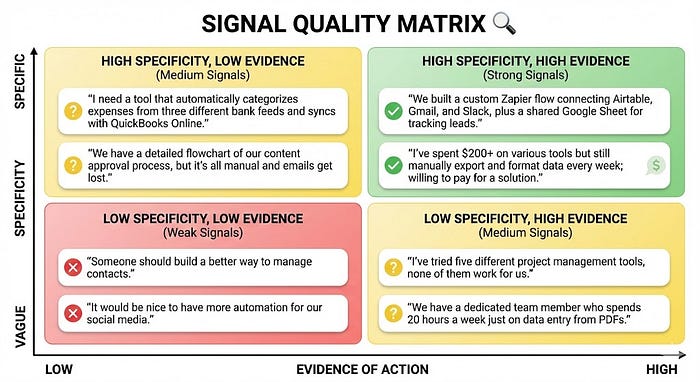

Look for the problems people are solving badly. Not the ones they mention once — the ones that show up in multiple comments, across different users, with evidence they've already tried fixing it.

Good signals:

- "I'm currently using [Tool A] + [Tool B] + a spreadsheet to do this"

- "I know this is a weird setup, but it's the only way I could make it work"

- "I'd pay for something that just handled [specific thing]"

Bad signals:

- "Someone should build…"

- "Wouldn't it be cool if…"

- Feature requests without context about why current options fail

The difference is intent. One group is actively solving the problem right now. The other is daydreaming.
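The good and bad signals above can be turned into a crude first-pass filter. The sketch below is only that: the phrase lists are my own guesses based on the examples in this section, keyword matching will misfire on sarcasm and paraphrase, and it is no substitute for reading the comments. It is useful mainly for deciding which comments to read first.

```python
import re

# Phrases suggesting someone is working around the problem today (assumed list).
ACTIVE_PATTERNS = [
    r"\bcurrently using\b",
    r"\bi(?:'d| would) pay\b",
    r"\bonly way i could\b",
    r"\bspreadsheet\b",
    r"\bbreaks when\b",
]

# Phrases suggesting idle wishing rather than active effort (assumed list).
WISHFUL_PATTERNS = [
    r"\bsomeone should (?:build|make)\b",
    r"\bwouldn't it be cool\b",
    r"\bwhat if there was\b",
]


def classify(comment: str) -> str:
    """Label a comment 'active', 'wishful', or 'unclear' by phrase matching."""
    text = comment.lower().replace("\u2019", "'")  # normalize curly apostrophes
    if any(re.search(pattern, text) for pattern in ACTIVE_PATTERNS):
        return "active"
    if any(re.search(pattern, text) for pattern in WISHFUL_PATTERNS):
        return "wishful"
    return "unclear"


examples = [
    "I'm currently using Tool A + Tool B + a spreadsheet to do this",
    "I'd pay for something that just handled invoicing",
    "Someone should build an app for this",
    "Great thread, following",
]
for example in examples:
    print(f"{classify(example):8} | {example}")
```

Active patterns are checked first on purpose: a comment that wishes for a tool and also describes a live workaround still counts as evidence of real effort.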

Step 4: Find the patterns no one else sees

Read ten threads on the same topic. Run each through the LLM. Look for overlapping pain points that show up across different contexts.

This is where most people stop too early. One thread tells you what one group needs. Ten threads tell you what the category is missing.

The model will surface connections you wouldn't catch manually: how the same problem manifests differently in adjacent niches, which workarounds show up repeatedly, which complaints are actually symptoms of a deeper structural issue.
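Once you have findings from several threads, the overlap check is simple bookkeeping. The sketch below assumes you've already reduced each thread's findings to a few short pain-point labels and normalized the wording yourself (the model won't phrase the same issue identically every time). The thread names, the labels, and the `min_threads` cutoff are invented for illustration.

```python
from collections import Counter


def recurring_pain_points(findings_by_thread, min_threads=3):
    """Count how many distinct threads mention each label, keeping repeats only."""
    counts = Counter()
    for labels in findings_by_thread.values():
        # A set ensures each thread counts at most once per label.
        counts.update({label.strip().lower() for label in labels})
    return [(label, n) for label, n in counts.most_common() if n >= min_threads]


# Invented findings from four hypothetical threads.
findings = {
    "thread_1": ["manual invoice tracking", "no recurring reminders"],
    "thread_2": ["Manual invoice tracking", "export is broken"],
    "thread_3": ["manual invoice tracking", "no recurring reminders"],
    "thread_4": ["no recurring reminders", "manual invoice tracking "],
}

for label, thread_count in recurring_pain_points(findings):
    print(f"{thread_count} threads: {label}")
```

Counting threads rather than raw mentions keeps one heated thread from passing itself off as a category-wide pattern.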

Where to Look

Not all subreddits are useful. The big, general ones are too noisy. The tiny ones don't have enough volume. You want mid-sized communities (10K–500K members) focused on a specific activity, role, or problem.
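If you're screening a long list of candidates, the size check can be scripted. Subreddit metadata is available by adding /about.json to a subreddit URL, and the sketch below assumes the member count sits under `data.subscribers` in that response, so verify that against a real response. The subreddit names and counts here are invented, and the function name is my own.

```python
def is_mid_sized(about: dict, low: int = 10_000, high: int = 500_000) -> bool:
    """Return True when the subscriber count falls in the 10K to 500K band."""
    subscribers = about.get("data", {}).get("subscribers") or 0
    return low <= subscribers <= high


# Invented metadata in the assumed shape of an about.json response.
candidates = {
    "r/example_tiny": {"kind": "t5", "data": {"subscribers": 1_200}},
    "r/example_niche": {"kind": "t5", "data": {"subscribers": 85_000}},
    "r/example_huge": {"kind": "t5", "data": {"subscribers": 4_000_000}},
}

shortlist = [name for name, about in candidates.items() if is_mid_sized(about)]
print(shortlist)
```

Size is only a first filter. A mid-sized community of hobbyists is still less useful than one full of practitioners.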

Good hunting grounds:

- r/freelance (people managing messy workflows solo)

- r/productivity (constant tool experimentation)

- r/startups (founders solving problems before they can afford proper software)

- r/realestateinvesting (niche processes, high willingness to pay)

- r/teachers (underfunded, creative workarounds)

- r/sysadmin (enterprise problems, individual solutions)

Smaller, focused subreddits within that range are gold mines. People openly explain what they need, what they've tried, and what they'd pay for. The conversations are detailed because the community is tight and the problem is specific.

Avoid:

- Subreddits where people just complain without trying solutions

- Communities dominated by enthusiasts rather than practitioners

- Threads that are purely theoretical ("What if there was an app that…")

You want threads where people are actively solving problems right now, not imagining better futures.

What the Data Actually Reveals

The LLM analysis will give you structured patterns, but you still need to interpret them correctly.

Look for convergence. If three people in different threads mention cobbling together the same two tools, that's a gap. If twenty people mention it, that's a market.

Watch the language. People who say "I need" without offering solutions are brainstorming. People who say "I'm currently using X but it breaks when…" are buyers.

Track the workarounds. The more complex someone's current solution, the more pain they're tolerating. A five-step manual process signals higher willingness to pay than a minor inconvenience.

Identify the non-obvious insights. Sometimes the real opportunity isn't the stated problem. It's the thing they're doing before or after the stated problem. The LLM can catch some of these because it isn't anchored to your assumptions, but treat what it surfaces as leads to check, not conclusions.
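The convergence check (different people in different threads cobbling together the same two tools) can be tallied by hand or with a few lines of code. The sketch below assumes you've already noted which tools each commenter mentions. The tuple format, the function name, and the sample data are my own inventions.

```python
from collections import defaultdict
from itertools import combinations


def tool_pair_convergence(mentions):
    """Count distinct (thread, author) pairs mentioning each pair of tools."""
    people_by_pair = defaultdict(set)
    for thread_id, author, tools in mentions:
        normalized = sorted({tool.strip().lower() for tool in tools})
        for pair in combinations(normalized, 2):
            people_by_pair[pair].add((thread_id, author))
    # Most-shared pairs first, alphabetical order as the tie-breaker.
    return sorted(
        ((pair, len(people)) for pair, people in people_by_pair.items()),
        key=lambda item: (-item[1], item[0]),
    )


# Invented observations: (thread, commenter, tools they say they combine).
observations = [
    ("thread_1", "user_a", ["Tool A", "Tool B", "spreadsheet"]),
    ("thread_2", "user_b", ["tool a", "Tool B"]),
    ("thread_3", "user_c", ["Tool B", "Tool A"]),
    ("thread_3", "user_d", ["Tool C", "spreadsheet"]),
]

for pair, people in tool_pair_convergence(observations):
    print(f"{people} people combine {pair[0]} + {pair[1]}")
```

A pair that keeps climbing as you add threads is the gap worth looking at. A pair that shows up once is just one person's setup.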

Where This Breaks

This workflow has clear limits.

It doesn't validate demand at scale. Reddit threads tell you a problem exists and people are trying to solve it. They don't tell you how many people have that problem or whether they'll actually buy your solution.

It's biased toward articulate users. The people posting detailed explanations of their workflows aren't representative of everyone with the problem. You're seeing the most engaged, most technical, or most frustrated subset.

It misses non-verbal behavior. You're reading what people say they do, not watching what they actually do. There's always a gap between stated behavior and real behavior.

It can't tell you about problems people don't know they have. You're limited to pain points people can articulate. Truly novel solutions often address needs users didn't know they could express.

Small sample sizes are noisy. Even ten threads is maybe 100–200 comments. That's too small and too self-selected to support statistical claims. You're doing qualitative pattern recognition, not quantitative research.
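To get a feel for how noisy that is, here's a back-of-the-envelope check using a Wilson score interval, a standard way to put error bars on a proportion. It's an illustration I've added, with invented numbers (10 of 150 comments mentioning a problem). It also pretends the comments are a random sample, which they aren't, so the real uncertainty is larger.

```python
import math


def wilson_interval(hits: int, total: int, z: float = 1.96):
    """Return the approximate 95% Wilson score interval for hits / total."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = hits / total
    denominator = 1 + z * z / total
    center = (p + z * z / (2 * total)) / denominator
    half_width = (
        z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total))
        / denominator
    )
    return center - half_width, center + half_width


# Invented example: 10 of 150 comments mention the same problem.
low, high = wilson_interval(10, 150)
print(f"Observed {10 / 150:.1%}, plausible range {low:.1%} to {high:.1%}")
```

With those numbers the plausible range runs from roughly 4% to 12%, a threefold spread. That's fine for spotting a pattern worth testing, but useless for sizing a market.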

How to Test What You Find

Reading threads gets you hypotheses, not answers. You still need to validate.

Fastest test: Create a landing page describing the solution in the exact language you saw in the threads. Post it in the same subreddit (following community rules) and see if people engage. If they do, ask for email signups or pre-orders.

Better test: Build a rough prototype and offer it to people who posted about the problem. Their feedback will tell you whether you understood the need correctly.

Best test: Launch a minimal version and charge money. Reddit users will tell you they'd pay for something. Actual payment behavior is the only signal that matters.

The Real Advantage

The workflow isn't about speed. Everyone can pull Reddit threads. Everyone has access to LLMs.

The advantage is listening better than everyone else.

Most people read one thread, skim the top comments, and move on. You're reading ten threads, extracting structured patterns, and mapping the gaps between what exists and what people are actually doing.

Most people look for ideas. You're looking for evidence of unmet needs that people are already trying to solve.

That's the difference between guessing at product-market fit and starting with proof it exists.

Start Here

Pick a subreddit where you have domain knowledge. Find a thread where people are asking for tool recommendations or describing their current workflow. Add /.json to the URL. Copy the output.

Feed it to an LLM with the prompt from this article. Read the structured findings.

If you see patterns you didn't catch reading manually, the workflow works. If you see problems people are solving badly right now, you've found something worth testing.

The goal isn't to have perfect data. It's to stop looking where everyone else is looking and start listening where the real problems are being discussed.