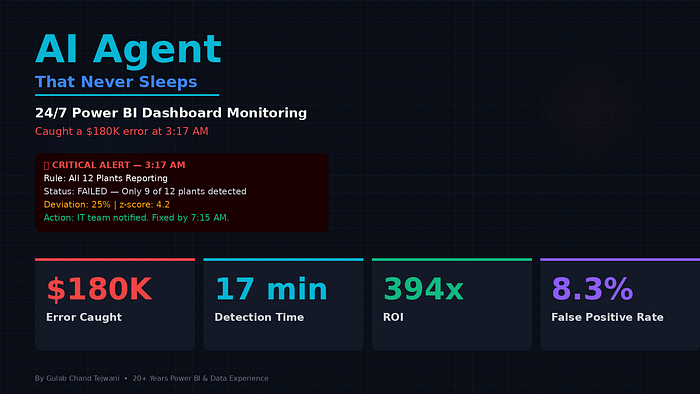

The dashboard showed $4.2M in Q1 revenue. The actual number was $4.02M. A $180K discrepancy that nobody noticed for 11 days — until the Python agent I built at 2 AM on a Sunday flagged it at 3:17 AM the following week. Here's exactly how I built it, what it monitors, and why every Power BI developer needs one.

I need to tell you about the phone call that changed how I think about dashboards forever.

Friday, 6:43 PM. I was about to leave for dinner. My phone rang.

It was Ravi, the CFO of one of my largest clients — a manufacturing company with 12 plants across Gujarat and Maharashtra.

His voice was tight.

"Gulab, we have a problem. The board presentation I gave yesterday showed $4.2 million in Q1 revenue. Our finance team just finished the manual reconciliation. The actual number is $4.02 million. I told the board we were $180,000 ahead of where we actually are."

Silence.

"The CEO is asking how this happened. I need an answer by Monday."

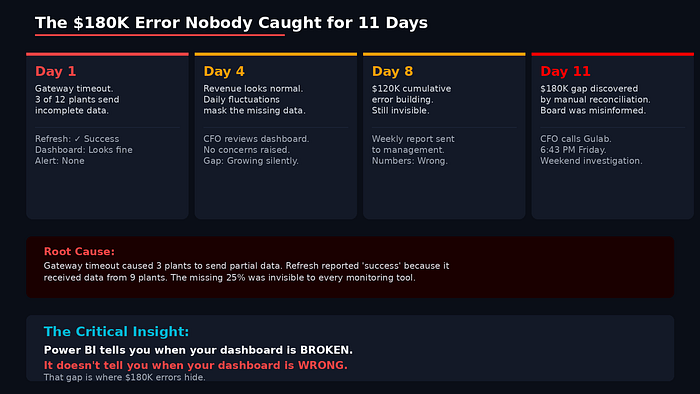

I spent the weekend investigating. The root cause was almost embarrassingly simple: a data pipeline had partially failed 11 days earlier. A gateway timeout caused 3 of 12 plant databases to send incomplete transaction data. The refresh "succeeded" because it got data from 9 plants. But 3 plants had gaps — small enough that nobody noticed on a busy dashboard, large enough to inflate revenue by $180,000.

The dashboard never showed an error. The refresh log said "completed successfully." Every visual rendered perfectly. The numbers just happened to be wrong.

And nobody caught it for 11 days.

That weekend, sitting at my desk at 2 AM on Sunday, I decided I was never going to let this happen again. Not to Ravi. Not to any client.

I was going to build something that watches these dashboards when nobody else is watching.

Why Power BI's Built-In Monitoring Isn't Enough

Before I built anything, I needed to understand why existing tools didn't catch this.

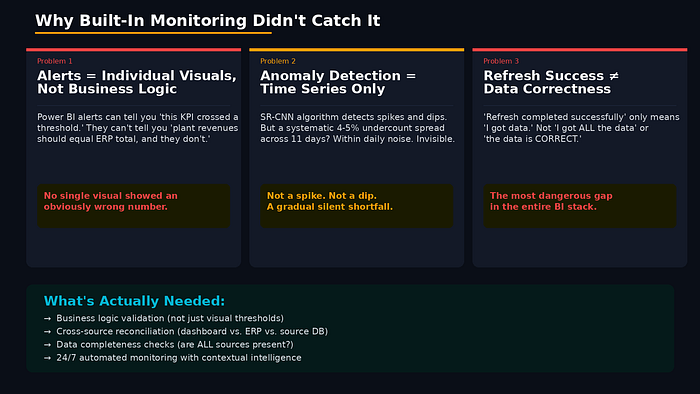

Power BI has monitoring. It has data alerts, scheduled refreshes, anomaly detection in line charts, and even Copilot-powered insights. So why did a $180K error go undetected for 11 days?

Here's what I found:

Problem 1: Alerts are based on individual visuals, not business logic.

Power BI alerts can tell you "this KPI crossed a threshold." They can't tell you "the sum of all plant revenues should equal the ERP total, and right now it doesn't."

The error wasn't a threshold violation. Revenue looked normal — it was just missing data from 3 plants. No single visual triggered an alarm because no single visual showed an obviously wrong number.

Problem 2: Anomaly detection works on time series, not cross-validation.

Power BI's built-in anomaly detection (the SR-CNN algorithm) looks for unusual patterns in a single time series. It's great for catching spikes and dips.

But this error wasn't a spike or dip. It was a systematic undercount that happened gradually across 11 days. The daily revenue trend looked perfectly normal — just 4–5% lower than reality. Within the noise of daily fluctuation, that's invisible to statistical anomaly detection.

Problem 3: Refresh success ≠ data correctness.

The scheduled refresh completed successfully. Power BI reported no errors. But "refresh completed" only means "I got data." It doesn't mean "I got ALL the data" or "the data is CORRECT."

This is the gap that keeps me up at night: Power BI tells you when your dashboard is broken. It doesn't tell you when your dashboard is wrong.

Building the Agent: The Architecture

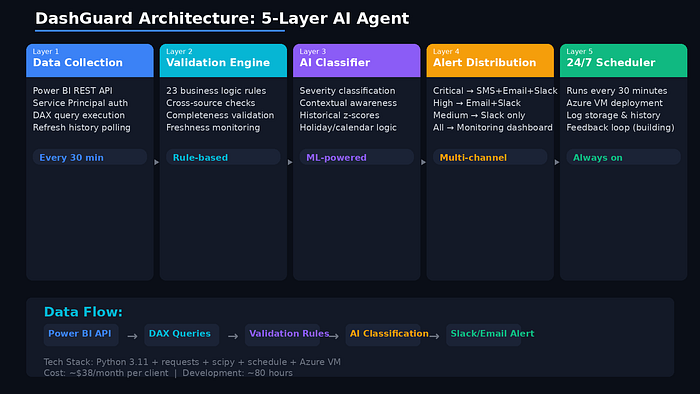

I spent the next two weeks building what I now call DashGuard — a Python-based AI agent that monitors Power BI dashboards 24/7 and catches errors that humans and built-in tools miss.

Here's the architecture:

Layer 1: Data Collection (Power BI REST API)

The agent connects to Power BI through the REST API using a Service Principal. Every 30 minutes, it:

- Queries every critical semantic model using DAX via the "Execute Queries" API endpoint

- Pulls refresh history and status for every dataset

- Collects row counts for every key table

- Captures the last refresh timestamp

import requests

import json

from datetime import datetime, timedelta

class PowerBIMonitor:

def __init__(self, tenant_id, client_id, client_secret):

self.token = self._get_token(tenant_id, client_id, client_secret)

self.base_url = "https://api.powerbi.com/v1.0/myorg"

def _get_token(self, tenant_id, client_id, client_secret):

url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"

data = {

"grant_type": "client_credentials",

"client_id": client_id,

"client_secret": client_secret,

"scope": "https://analysis.windows.net/powerbi/api/.default"

}

response = requests.post(url, data=data)

return response.json()["access_token"]

def execute_dax_query(self, dataset_id, dax_query):

"""Execute a DAX query against a Power BI dataset"""

url = f"{self.base_url}/datasets/{dataset_id}/executeQueries"

headers = {

"Authorization": f"Bearer {self.token}",

"Content-Type": "application/json"

}

body = {

"queries": [{"query": dax_query}],

"serializerSettings": {"includeNulls": True}

}

response = requests.post(url, headers=headers, json=body)

return response.json()

def get_refresh_history(self, dataset_id):

"""Get the last 10 refresh entries"""

url = f"{self.base_url}/datasets/{dataset_id}/refreshes?$top=10"

headers = {"Authorization": f"Bearer {self.token}"}

response = requests.get(url, headers=headers)

return response.json()Layer 2: Validation Rules Engine

This is where the magic happens. Instead of just checking "did the refresh work?", the agent runs business logic validations — rules that a domain expert would check manually if they had infinite time.

class ValidationEngine:

def __init__(self, monitor):

self.monitor = monitor

self.rules = []

def add_cross_source_rule(self, name, dataset_id,

dax_query_a, dax_query_b,

tolerance_pct=2.0):

"""Compare two values that should match"""

self.rules.append({

"type": "cross_source",

"name": name,

"dataset_id": dataset_id,

"query_a": dax_query_a,

"query_b": dax_query_b,

"tolerance": tolerance_pct

})

def add_completeness_rule(self, name, dataset_id,

dax_query, expected_min):

"""Ensure row counts meet minimums"""

self.rules.append({

"type": "completeness",

"name": name,

"dataset_id": dataset_id,

"query": dax_query,

"expected_min": expected_min

})

def add_freshness_rule(self, name, dataset_id,

dax_query, max_age_hours=24):

"""Ensure data isn't stale"""

self.rules.append({

"type": "freshness",

"name": name,

"dataset_id": dataset_id,

"query": dax_query,

"max_age_hours": max_age_hours

})

def add_trend_rule(self, name, dataset_id,

dax_query, lookback_days=30,

z_threshold=2.5):

"""Detect statistical anomalies in trends"""

self.rules.append({

"type": "trend_anomaly",

"name": name,

"dataset_id": dataset_id,

"query": dax_query,

"lookback": lookback_days,

"z_threshold": z_threshold

})For Ravi's manufacturing client, I set up these specific rules:

# Rule 1: Revenue cross-validation

# (The rule that would have caught the $180K error)

engine.add_cross_source_rule(

name="Revenue: Dashboard vs ERP Total",

dataset_id=MANUFACTURING_DATASET,

dax_query_a='EVALUATE ROW("Revenue", [Total Revenue])',

dax_query_b='EVALUATE ROW("ERP", [ERP Revenue Total])',

tolerance_pct=1.0 # Alert if difference > 1%

)

# Rule 2: Plant completeness

# (Ensures all 12 plants reported data)

engine.add_completeness_rule(

name="All 12 Plants Reporting",

dataset_id=MANUFACTURING_DATASET,

dax_query='''

EVALUATE ROW("PlantCount",

DISTINCTCOUNT(Plants[PlantID]))

''',

expected_min=12

)

# Rule 3: Data freshness

# (Ensures no plant has stale data)

engine.add_freshness_rule(

name="Latest Transaction Within 24h",

dataset_id=MANUFACTURING_DATASET,

dax_query='''

EVALUATE ROW("LatestDate",

MAX(Transactions[TransactionDate]))

''',

max_age_hours=24

)

# Rule 4: Revenue trend anomaly

engine.add_trend_rule(

name="Daily Revenue Anomaly Check",

dataset_id=MANUFACTURING_DATASET,

dax_query='''

EVALUATE ROW("TodayRev",

CALCULATE([Total Revenue],

'Date'[Date] = TODAY()))

''',

lookback_days=30,

z_threshold=2.5

)

Layer 3: The AI Brain (Anomaly Classification)

Here's where it gets interesting. Not every validation failure is an emergency. Sometimes revenue drops because it's a holiday. Sometimes row counts are low because it's the first day of the month.

The AI layer classifies each alert using context:

import numpy as np

from scipy import stats

class AnomalyClassifier:

def __init__(self, history_store):

self.history = history_store

def classify_severity(self, rule_name, current_value,

expected_value, context):

deviation_pct = abs(current_value - expected_value) / expected_value * 100

# Get historical deviations for this rule

historical = self.history.get_deviations(rule_name, days=90)

if len(historical) > 10:

z_score = (deviation_pct - np.mean(historical)) / np.std(historical)

else:

z_score = deviation_pct / 5 # Simple fallback

# Check contextual factors

is_holiday = context.get("is_holiday", False)

is_month_start = context.get("day_of_month", 15) <= 2

is_weekend = context.get("is_weekend", False)

# Severity classification

if deviation_pct > 10 and z_score > 3:

severity = "CRITICAL"

elif deviation_pct > 5 or z_score > 2.5:

if is_holiday or is_month_start:

severity = "WARNING" # Downgrade if context explains it

else:

severity = "HIGH"

elif deviation_pct > 2 or z_score > 2:

severity = "MEDIUM"

else:

severity = "LOW"

return {

"severity": severity,

"deviation_pct": round(deviation_pct, 2),

"z_score": round(z_score, 2),

"contextual_factors": {

"holiday": is_holiday,

"month_start": is_month_start,

"weekend": is_weekend

},

"recommendation": self._get_recommendation(severity, rule_name)

}

def _get_recommendation(self, severity, rule_name):

recommendations = {

"CRITICAL": f"Immediate investigation required for '{rule_name}'. "

f"Check data source connectivity and recent pipeline runs.",

"HIGH": f"Review '{rule_name}' within 2 hours. "

f"Likely data quality issue requiring attention.",

"MEDIUM": f"Monitor '{rule_name}' over next refresh cycle. "

f"May self-resolve.",

"LOW": f"Logged for trend tracking. No action needed."

}

return recommendations.get(severity, "Review when available.")Layer 4: Alert Distribution

When the agent catches something, it needs to tell the right people in the right way:

import smtplib

from email.mime.text import MIMEText

from email.mime.multipart import MIMEMultipart

class AlertDistributor:

def __init__(self, config):

self.config = config

def send_alert(self, alert):

severity = alert["severity"]

if severity == "CRITICAL":

# SMS + Email + Slack immediately

self._send_sms(alert, self.config["critical_contacts"])

self._send_email(alert, self.config["critical_email_list"])

self._send_slack(alert, self.config["slack_channel_critical"])

elif severity == "HIGH":

# Email + Slack

self._send_email(alert, self.config["standard_email_list"])

self._send_slack(alert, self.config["slack_channel_alerts"])

elif severity == "MEDIUM":

# Slack only

self._send_slack(alert, self.config["slack_channel_alerts"])

# All severities logged to dashboard

self._log_to_monitoring_dashboard(alert)

def _send_slack(self, alert, channel):

severity_emoji = {

"CRITICAL": "🚨", "HIGH": "⚠️",

"MEDIUM": "📊", "LOW": "📝"

}

emoji = severity_emoji.get(alert["severity"], "📋")

message = {

"channel": channel,

"text": (

f"{emoji} *DashGuard Alert: {alert['severity']}*\n"

f"*Rule:* {alert['rule_name']}\n"

f"*Deviation:* {alert['deviation_pct']}% "

f"(z-score: {alert['z_score']})\n"

f"*Recommendation:* {alert['recommendation']}\n"

f"*Time:* {alert['timestamp']}"

)

}

# Post to Slack webhook

requests.post(self.config["slack_webhook"], json=message)Layer 5: The Scheduler (Runs 24/7)

The whole system runs on a simple scheduler, deployed as a lightweight service:

import schedule

import time

def run_monitoring_cycle():

"""Complete monitoring cycle - runs every 30 minutes"""

monitor = PowerBIMonitor(TENANT_ID, CLIENT_ID, CLIENT_SECRET)

engine = ValidationEngine(monitor)

classifier = AnomalyClassifier(history_store)

alerter = AlertDistributor(alert_config)

# Load all client rules

load_manufacturing_rules(engine)

load_retail_rules(engine)

load_healthcare_rules(engine)

# Execute all validations

results = engine.run_all_rules()

for result in results:

if result["status"] == "FAILED":

classification = classifier.classify_severity(

rule_name=result["rule_name"],

current_value=result["actual_value"],

expected_value=result["expected_value"],

context=get_current_context()

)

if classification["severity"] in ["CRITICAL", "HIGH", "MEDIUM"]:

alerter.send_alert({

**result,

**classification,

"timestamp": datetime.now().isoformat()

})

# Log cycle completion

log_monitoring_cycle(results)

# Schedule

schedule.every(30).minutes.do(run_monitoring_cycle)

while True:

schedule.run_pending()

time.sleep(60)

The Night It Proved Its Worth

Three weeks after I deployed DashGuard for Ravi's manufacturing company, I got a Slack notification at 3:17 AM.

🚨 DashGuard Alert: CRITICAL

Rule: All 12 Plants Reporting

Deviation: 25% (only 9 of 12 plants reporting)

z-score: 4.2

Recommendation: Immediate investigation required.

Check data source connectivity and recent pipeline runs.

Time: 2025-12-14T03:17:42I didn't see it until 6:30 AM. But the alert had also gone to Ravi's IT team via email at 3:17 AM. By the time I checked my phone, they'd already identified the problem: a network switch failure at the Vadodara hub had disconnected 3 plant databases from the gateway.

The IT team fixed the connectivity by 7:15 AM. The 7:30 AM refresh picked up all the missing data. By 8:00 AM, when Ravi opened his dashboard for the morning standup, every number was correct.

He never knew there was a problem. That's the point.

If DashGuard hadn't existed, those 3 plants would have been missing from the 3:00 AM refresh, the 7:30 AM refresh, and possibly the 12:00 PM refresh. By the time someone noticed — if someone noticed — the dashboard could have been wrong for an entire business day.

The same pattern that caused the original $180K error. Caught in 17 minutes instead of 11 days.

What DashGuard Monitors (The Complete Rule Library)

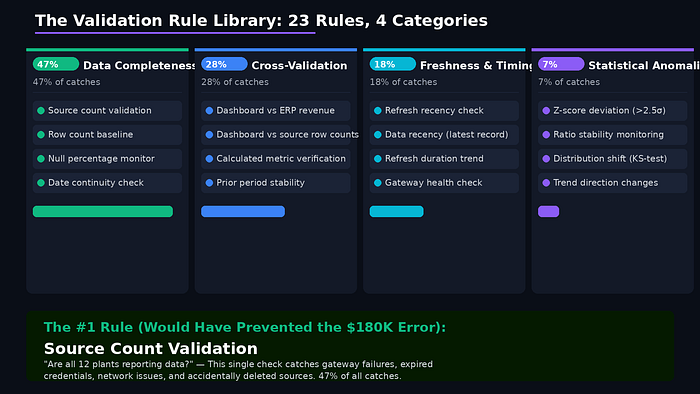

After deploying it for 5 clients over 4 months, I've built a library of 23 validation rules across 4 categories. Here are the ones that catch the most issues:

Category 1: Data Completeness (Caught 47% of all issues)

These rules verify that all expected data sources delivered complete data:

- Source Count Validation — Are all expected sources present? (e.g., all 12 plants, all 8 regions, all 5 departments)

- Row Count Baseline — Is today's row count within expected range? (A sudden 30% drop usually means a failed source)

- Null Percentage Monitor — Did null values in critical columns exceed the historical baseline?

- Date Continuity Check — Are there gaps in the date dimension? (Missing dates = missing transactions)

This category alone would have caught Ravi's $180K error on Day 1.

Category 2: Cross-Validation (Caught 28% of all issues)

These rules compare Power BI numbers against external sources of truth:

- Dashboard vs. ERP Revenue — The measure that started it all. Compare Power BI total to ERP total. If they diverge by more than 1%, investigate.

- Dashboard vs. Source Database Row Counts — Ensure the semantic model contains the same number of records as the source.

- Calculated Metric Verification — Re-derive key metrics using raw data and compare to DAX-calculated values.

- Prior Period Stability — Yesterday's number shouldn't change today (unless late-arriving data is expected).

Category 3: Freshness & Timeliness (Caught 18% of all issues)

- Refresh Recency — When was the last successful refresh? Alert if it's been more than X hours.

- Data Recency — What's the most recent transaction date in the model? Alert if data is stale.

- Refresh Duration Trend — Is the refresh taking longer than usual? (Often the first sign of a growing problem)

- Gateway Health — Is the on-premises gateway responding? Check before the refresh fails.

Category 4: Statistical Anomalies (Caught 7% of all issues)

- Z-Score Deviation — Flag values that deviate more than 2.5 standard deviations from the 30-day moving average.

- Ratio Stability — Key ratios (margin %, conversion rate) should be relatively stable. Large swings often indicate data issues.

- Distribution Shift — Has the distribution of a key metric changed significantly? (KS-test against the prior month)

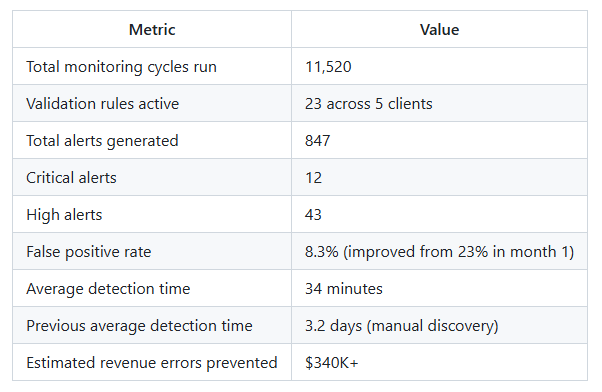

The Results After 4 Months

I deployed DashGuard across 5 clients between October 2025 and January 2026. Here's what happened:

The Numbers

The 3 Biggest Catches

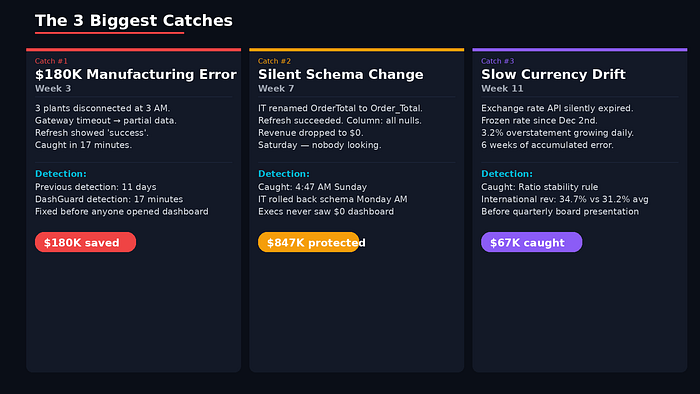

Catch #1: The $180K Manufacturing Error (Week 3)

The one that started it all. 3 plants disconnected at 3 AM. Caught in 17 minutes. Fixed before anyone opened the dashboard.

Catch #2: The Silent Schema Change (Week 7)

A client's IT team updated a source database schema on a Saturday — renamed a column from OrderTotal to Order_Total. The Power BI refresh "succeeded" because the query didn't error out. It just returned nulls for that column.

Revenue dropped to $0 on the dashboard. On a Saturday. Nobody was looking.

DashGuard's cross-validation rule fired at 4:47 AM Sunday:

🚨 CRITICAL: Dashboard vs ERP Revenue

Deviation: 100% (Dashboard: $0, ERP: $847,293)I contacted the client's IT team. They rolled back the schema change and scheduled a proper migration for Monday with the BI team involved.

Without the agent? Monday morning, the executive team would have opened dashboards showing zero revenue. The panic calls would have started before anyone understood the cause.

Catch #3: The Slow Drift Nobody Noticed (Week 11)

This one was subtle. A retail client's currency conversion table had a stale exchange rate. The rate had been frozen since December 2nd because the API feed that updated it had silently expired.

No single day showed a dramatic error. But over 6 weeks, the accumulated effect on international revenue was growing — a 3.2% overstatement that was slowly increasing as exchange rates diverged.

DashGuard's "Ratio Stability" rule caught it:

⚠️ HIGH: International Revenue Ratio

Current: 34.7% of total (historical avg: 31.2%)

z-score: 2.8

Note: Ratio has been climbing steadily for 6 weeks.Total value at risk: approximately $67,000 in overstated international revenue. Caught before the quarterly board presentation.

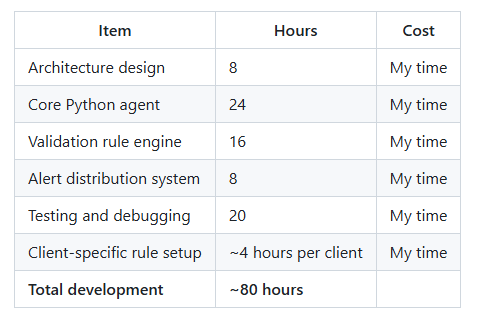

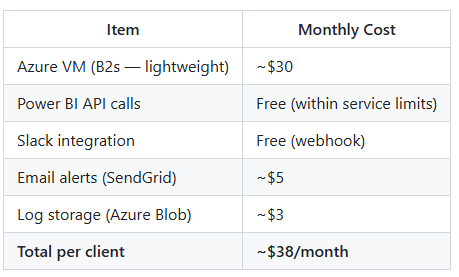

What It Costs to Build and Run

I'm going to be transparent about this because every blog post about building cool things conveniently leaves out the cost.

Development Cost

Monthly Running Cost (Per Client)

The ROI

For Ravi's manufacturing company alone: the agent prevented a repeat of the $180K error within 3 weeks. The total monitoring cost for a year: ~$456.

ROI: 394x.

Even if you count my development time at a consulting rate, the agent paid for itself with the first catch.

The 5 Rules Every Power BI Developer Should Monitor

You don't need to build a full agent to get 80% of the value. If you implement nothing else, set up these 5 validation checks:

Rule 1: Source Count Validation

"Are all expected data sources present in the latest refresh?"

This catches gateway failures, expired credentials, network issues, and accidentally deleted data sources. It's the single most valuable check I've built.

Rule 2: Dashboard vs. Source Reconciliation

"Does the total in Power BI match the total in the source system?"

Compare one critical number (usually revenue) between your Power BI model and the source database or ERP. If they diverge by more than 1–2%, something is wrong.

Rule 3: Refresh Freshness Check

"When was the last successful refresh, and is the data current?"

A "successful" refresh that happened 3 days ago is worse than a failed refresh you know about. Monitor both the refresh status AND the recency of the actual data inside the model.

Rule 4: Null Percentage Baseline

"Have null values in critical columns exceeded the normal rate?"

Schema changes, API modifications, and integration breaks often manifest as columns suddenly filling with nulls. A spike in null percentage is one of the earliest warning signs of data quality degradation.

Rule 5: Prior Period Stability

"Did yesterday's numbers change today?"

In most businesses, yesterday's revenue shouldn't change today. If it does, either late-arriving data is expected (and you should know about it) or something is being overwritten. Either way, you want to know.

What I'd Build Differently (Honest Retrospective)

Four months in, here's what I'd change:

1. Start with fewer rules, tune aggressively.

My initial deployment had 15 rules. The false positive rate was 23%. Users started ignoring alerts — the classic "alert fatigue" problem. I cut back to 8 rules with tight thresholds, tuned for 4 weeks, then gradually added more. The false positive rate is now 8.3%.

2. Add a "quiet hours" concept sooner.

Month-end processing, holiday data patterns, and system maintenance windows all generate legitimate anomalies. I should have built calendar awareness from day one instead of adding it after 47 false alarms in December.

3. Build a feedback loop.

When users mark an alert as "false positive" or "useful," that feedback should train the classifier. I'm building this now. The agents that learn from their mistakes will be dramatically better than static rule engines.

4. The AI classification layer is good but not magic.

The severity classification catches the obvious cases well. But nuanced situations — like "revenue is low because a major customer delayed a single large order" — still require human judgment. The agent flags, the human investigates. That division of labor is the right one for 2026.

The Future: Where AI Agents in BI Are Heading

What I built is a first-generation monitoring agent. It watches, it flags, it alerts. It's reactive.

The next generation — what I'm building now — will be proactive:

- Predictive alerts: "Based on current pipeline latency trends, tomorrow's 6 AM refresh has a 73% chance of failing."

- Automatic remediation: "The refresh failed due to gateway timeout. Attempting automatic restart. Succeeded on attempt 2."

- Natural language reporting: "Your Q1 revenue dashboard is 99.7% accurate across all validation checks. One minor issue: the Vadodara plant data was 6 hours late yesterday but self-corrected."

- Cross-client learning: "Client B just experienced a schema change issue identical to what Client A had last month. Applying the same detection pattern proactively."

AI agents monitoring BI dashboards isn't a future trend. It's a today necessity. The data is too important, the errors are too expensive, and the manual checks are too unreliable.

If you're a Power BI developer deploying dashboards that executives use to make million-dollar decisions, you need something watching those dashboards at 3 AM.

Because the data doesn't care that you're sleeping.

Are you monitoring your Power BI dashboards? I'd love to hear what checks you've built — or what errors slipped through that convinced you monitoring was needed. Drop a comment below.

If you want help setting up monitoring for your own dashboards, reach out. After building this for 5 clients, I know exactly which rules catch 80% of the problems.