Local AI Setup 2026 | Best Local AI Tools | Hardware Components

Ollama, one of the most popular tools for running AI locally, crossed 100,000 GitHub stars in 2026. That number tells you something: this is not a niche thing anymore. People are running real AI models on their own machines, for real work, without paying anyone per token.

This guide is for everyone from someone with an old laptop to someone building a proper local AI workstation. The setups are different. The reasons to do it, though, are mostly the same.

Why Bother Running AI Locally

The most honest reason is privacy.

When you use ChatGPT or any cloud AI, your prompts go to someone else's server. You're trusting their policies, their security, and whatever they decide to do with that data next year. If you're working with contracts, client notes, health documents, internal code, or anything you wouldn't post publicly, that's a real concern.

The second reason is cost.

A ChatGPT Plus subscription is $20 a month. If you use AI heavily and run it locally instead, you skip that fee; your ongoing cost is mostly electricity on hardware you control. For a team of 50 people each paying that same $20, that's $12,000 a year just for basic access.

The third reason is that it actually works now.

Models like Qwen3 and Gemma 3, along with the smaller Llama and DeepSeek releases, run comfortably on consumer hardware and handle most everyday tasks well: summarizing documents, writing code, drafting emails, answering questions about your files. You're not compromising much for local work anymore.

And then there's the offline angle.

No internet connection needed, no rate limits, no service outages. If you travel, work in low-connectivity areas, or just hate depending on someone else's uptime, local AI removes that dependency entirely.

Before You Start: Know What Your Machine Can Handle

The single most important hardware metric for running local AI is not your CPU or even how much RAM you have. It's VRAM, the memory on your graphics card. That's where the model actually lives while it's running. If the model doesn't fit, it overflows into regular RAM and slows to a crawl, sometimes 2–3 words per second instead of a usable 30–40.
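To make the "does it fit" question concrete, here is a rough sketch in Python. It assumes weight memory is simply parameters × bits per weight ÷ 8, plus a hypothetical 20% headroom for context and runtime buffers. Real tools report different numbers, so treat the output as a ballpark, not a guarantee.

```python
# Rough check: will a model fit entirely in your GPU's VRAM?
# Assumptions (illustrative, not taken from any tool's documentation):
#   - weight memory = parameters x bits-per-weight / 8
#   - OVERHEAD_FRACTION adds headroom for context (KV cache) and runtime buffers;
#     20% is a rule-of-thumb guess, and real usage depends on context length.
OVERHEAD_FRACTION = 0.20  # hypothetical headroom factor
FOUR_BIT = 4.5  # approximate bits per weight of a typical 4-bit quantized file


def estimated_gb(params_billion: float, bits_per_weight: float) -> float:
    """Estimated memory in GB (10^9 bytes) for the weights plus headroom."""
    weight_bytes = params_billion * 1e9 * bits_per_weight / 8
    return weight_bytes * (1 + OVERHEAD_FRACTION) / 1e9


def fits_in_vram(params_billion: float, bits_per_weight: float, vram_gb: float) -> bool:
    """True if the estimate fits in VRAM, i.e. nothing spills into system RAM."""
    return estimated_gb(params_billion, bits_per_weight) <= vram_gb


if __name__ == "__main__":
    for params, vram in [(8, 12), (30, 24), (70, 24)]:
        need = estimated_gb(params, FOUR_BIT)
        verdict = "fits" if fits_in_vram(params, FOUR_BIT, vram) else "spills into RAM"
        print(f"{params}B model at ~4-bit: ~{need:.1f} GB vs {vram} GB VRAM -> {verdict}")
```

Under these assumptions an 8B model needs about 5.4GB and a 30B model about 20GB. A 70B model at 4-bit (roughly 47GB) overflows a 24GB card, which is the situation where speed collapses.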

A quick way to check what your machine can realistically run: visit CanIRun.ai. It detects your GPU, CPU, and RAM through your browser and shows you which models your machine can handle. No signup needed.

Now, the setups:

Setup 1: You Have a Regular Laptop

Who this is for

Most people. An older laptop, a budget machine, anything with 8–16GB of RAM and no dedicated GPU.

You can still run AI locally. You just need to pick the right model size.

With 8GB of RAM, stick to models in the 3B to 7B parameter range. These are small, fast, and surprisingly capable for most tasks. A model like Phi-4 Mini or Mistral 7B works well here and, in the usual 4-bit quantized download, needs only about 4–6GB when loaded.

With 16GB of RAM, you can move up. Gemma 3 12B is a solid pick here. It handles general chat well, reads documents, writes clearly, and won't push your machine to its limits.

Tool to use: LM Studio

Download it from lmstudio.ai. It works on Windows, Mac, and Linux. No command line, no setup headache. You open the app, search for a model, download it, and start chatting. It automatically detects your hardware and uses your GPU if you have one, or falls back to CPU if you don't. For a laptop user who just wants something working, this is the right starting point.

Alternatively, GPT4All is another option that's even more beginner-friendly, though it has fewer model choices.

One important note: on a CPU-only laptop, responses will be slower, maybe 3–8 words per second. That's usable for drafting and reading, but not great for fast back-and-forth chat. Starting with a smaller model (3B or 4B) gives you a noticeably snappier experience.
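Those speeds map directly onto waiting time. Here is a quick back-of-the-envelope sketch using the ranges quoted in this guide. The 300-word reply is a hypothetical length, the GPU figure is the midpoint of the 30–40 words per second mentioned earlier, and the time spent reading your prompt is ignored.

```python
# Back-of-the-envelope: waiting time for a reply at a given generation speed.
# Speeds are the ranges quoted in this guide; REPLY_WORDS is a hypothetical
# reply length, and prompt-processing time is ignored.
REPLY_WORDS = 300


def seconds_for_reply(reply_words: int, words_per_second: float) -> float:
    """Time to generate a reply of `reply_words` words at a steady speed."""
    return reply_words / words_per_second


speeds = {
    "CPU-only laptop, slow end": 3,
    "CPU-only laptop, fast end": 8,
    "GPU with the model fully in VRAM (midpoint of 30-40)": 35,
}
for label, words_per_second in speeds.items():
    print(f"{label}: ~{seconds_for_reply(REPLY_WORDS, words_per_second):.0f} s")
```

At 3 words per second, a 300-word reply takes 100 seconds. At GPU speeds, it takes under 10 seconds. That gap is why CPU-only setups are fine for drafting but feel sluggish in a rapid back-and-forth chat.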

Setup 2: You Have a Desktop PC with a Decent GPU

Who this is for

Someone with a gaming PC or a desktop with a mid-range to high-end NVIDIA GPU.

This is where things get genuinely good. If you have an RTX 3060 12GB or better, you can run 7B and 8B models at real speed, around 30–50 tokens per second. Llama 3.1 8B, DeepSeek Coder, Mistral 7B, and Qwen3 8B all run here without breaking a sweat.

One thing to watch: do not buy the RTX 4060 Ti with 8GB VRAM. It fills up fast once the model loads and context grows. The 16GB version of the same card is worth the extra cost.
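Context fills VRAM because of the KV cache. For every token in the conversation, the model keeps a key vector and a value vector per layer in memory. The sketch below estimates the size of that cache. It assumes a Llama-3-8B-style layout (32 layers, 8 key/value heads, head dimension 128) and a 16-bit cache. Other models, and runtimes that quantize the cache, will differ.

```python
# Sketch: how the KV cache grows with context length.
# ASSUMED model layout (Llama-3-8B-style): 32 layers, 8 key/value heads,
# head dimension 128, cache stored as 16-bit floats.
LAYERS = 32
KV_HEADS = 8
HEAD_DIM = 128
BYTES_PER_VALUE = 2  # fp16/bf16; runtimes that quantize the cache use less


def kv_cache_bytes(context_tokens: int) -> int:
    """Bytes needed to keep `context_tokens` tokens of keys and values in memory."""
    # Factor 2: one key vector and one value vector per head, per layer, per token.
    per_token = 2 * LAYERS * KV_HEADS * HEAD_DIM * BYTES_PER_VALUE
    return per_token * context_tokens


for ctx in (4_096, 8_192, 32_768):
    print(f"{ctx:>6} tokens of context -> {kv_cache_bytes(ctx) / 2**30:.1f} GiB of KV cache")
```

Under those assumptions, each token costs 128 KiB. A 32,768-token context therefore needs about 4 GiB, on top of roughly 4.5GB of weights for an 8B model at about 4.5 bits per weight. That is already more than an 8GB card holds.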

With an RTX 3090 or 4090 (24GB VRAM), you can run 30B models comfortably and even experiment with compressed versions of 70B models. That's territory that used to require dedicated server hardware.

Tool to use: Ollama

Ollama is a command-line tool that makes running models about as simple as it gets. One install, then one command:

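ollama run llama3.1:8b

That pulls the model (here the 8B version of Llama 3.1, which fits comfortably on a 12GB card) and starts a chat. It also runs a local API server on port 11434, with an OpenAI-compatible endpoint under /v1. Any app you build or use can connect to it the same way it would connect to OpenAI's API, just pointed at your own machine.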
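Here is a minimal sketch of what "pointed at your own machine" looks like in Python, using only the standard library. It assumes Ollama is running on its default port and that you have already pulled the model named in MODEL (llama3.1:8b is just an example). The script talks to Ollama's OpenAI-compatible /v1/chat/completions endpoint, so the same request shape works with other OpenAI-style servers.

```python
import json
import urllib.request

# Minimal sketch: send one chat message to a local Ollama server through its
# OpenAI-compatible endpoint. Assumes Ollama is running on the default port
# 11434 and that MODEL (an example, swap in your own) has already been pulled.
OLLAMA_URL = "http://localhost:11434/v1/chat/completions"
MODEL = "llama3.1:8b"


def build_request(prompt: str, model: str = MODEL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request aimed at the local server."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_json: dict) -> str:
    """Pull the assistant's text out of an OpenAI-style chat response."""
    return response_json["choices"][0]["message"]["content"]


def ask(prompt: str) -> str:
    """Send the prompt and return the model's reply (blocks until it finishes)."""
    with urllib.request.urlopen(build_request(prompt), timeout=120) as resp:
        return extract_reply(json.load(resp))


if __name__ == "__main__":
    try:
        print(ask("In one sentence, why might someone run AI models locally?"))
    except OSError as err:  # URLError (e.g. connection refused) and timeouts are OSErrors
        print(f"Could not reach Ollama at {OLLAMA_URL}: {err}")
```

If Ollama isn't running, the script prints the connection error instead of a reply. The request and response follow the OpenAI chat format, so existing OpenAI client code can usually be redirected by changing only the base URL.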

If you want a chat interface on top of Ollama, Open WebUI gives you a browser-based interface that works well and feels like a proper chat app.

Setup 3: You Want to Go Further

Who this is for

Developers, power users, people running AI for a small team, or anyone who wants to run larger models properly.

A few paths here:

Apple Silicon Macs

The Mac Mini M4, MacBook Pro M3/M4, and Mac Studio use unified memory. The GPU can draw on most of your system RAM for model loading instead of being capped by a separate, smaller pool of VRAM. A Mac Mini M4 with 16GB handles 14B models smoothly. A Mac Studio M3 Ultra with 96GB can hold multiple models in memory simultaneously. This is currently one of the best consumer options for running large models quietly and efficiently.

For a dedicated Windows/Linux PC

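The RTX 4060 Ti 16GB is a solid entry point for the 7B-13B range. The RTX 5090 with 32GB VRAM (released in early 2025) is expensive. It runs 30B-class quantized models comfortably and can fit 70B models only with aggressive quantization or by offloading part of the model to system RAM. Models on the scale of 405B parameters are beyond any single consumer card: even at 4-bit they need over 200GB.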

For serving AI to a small team

vLLM is worth looking at. It handles many simultaneous requests much better than Ollama, so several people can use the same model at once, and it exposes an OpenAI-compatible API. It takes more setup work, but if more than one person needs access, it's the right tool.
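Before you commit a team to one server, it can help to simulate several people asking at once. The sketch below sends a batch of prompts in parallel threads to an OpenAI-compatible endpoint. It assumes a vLLM server on its default port 8000. MODEL is a placeholder; replace it with the model name the server was started with. If you point BASE_URL at Ollama's port 11434, you can compare the two servers on your own hardware.

```python
import json
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

# Sketch: simulate several teammates hitting one OpenAI-compatible server at once.
# Assumptions: a vLLM server on its default port 8000, and MODEL replaced with
# the name the server was started with (the value below is a placeholder).
BASE_URL = "http://localhost:8000/v1/chat/completions"
MODEL = "your-served-model-name"  # hypothetical placeholder


def send_to_server(prompt: str) -> str:
    """Send one chat request and return the reply text."""
    body = {"model": MODEL, "messages": [{"role": "user", "content": prompt}]}
    req = urllib.request.Request(
        BASE_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=300) as resp:
        return json.load(resp)["choices"][0]["message"]["content"]


def run_concurrently(send: Callable[[str], str], prompts: List[str], workers: int) -> List[str]:
    """Send all prompts in parallel threads; results keep the order of `prompts`."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(send, prompts))


if __name__ == "__main__":
    prompts = [f"User {i}: give me one tip for writing clear emails." for i in range(8)]
    start = time.perf_counter()
    try:
        replies = run_concurrently(send_to_server, prompts, workers=8)
        print(f"{len(replies)} replies in {time.perf_counter() - start:.1f} s")
    except OSError as err:  # connection refused, timeouts, etc.
        print(f"Could not reach the server at {BASE_URL}: {err}")
```

To compare servers, raise the number of prompts and watch how total time grows. A server built for concurrent users should keep that time from scaling linearly with the number of requests.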

Model format note: You'll often see files labeled GGUF on Hugging Face. GGUF is the model file format used by llama.cpp and tools built around it, including LM Studio and Ollama. These files usually come pre-quantized (compressed to fewer bits per weight) so they fit on consumer hardware. When picking a GGUF file, the Q4 or Q5 versions strike a good balance between size and quality. Q8 is closer to the original but needs more memory.
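To see what the Q numbers mean in gigabytes, here is a rough size calculator. It uses the bits per weight of llama.cpp's basic Q4_0, Q5_0, and Q8_0 block formats, where each 32-weight block stores a 16-bit scale plus the quantized values. Popular K-quants like Q4_K_M come out slightly larger. Real files also carry metadata and some higher-precision tensors, so treat the results as estimates for a hypothetical 7B model.

```python
# Approximate weight size of a model at different GGUF quantization levels.
# Bits per weight come from llama.cpp's simple "_0" block formats, where each
# block of 32 weights includes a 16-bit scale:
#   Q4_0 = 18 bytes/block, Q5_0 = 22 bytes/block, Q8_0 = 34 bytes/block.
# K-quants such as Q4_K_M land a little higher; metadata is ignored.
BITS_PER_WEIGHT = {
    "Q4_0": 18 * 8 / 32,  # 4.5 bits per weight
    "Q5_0": 22 * 8 / 32,  # 5.5 bits per weight
    "Q8_0": 34 * 8 / 32,  # 8.5 bits per weight
    "F16": 16.0,          # unquantized half precision, for comparison
}


def file_size_gb(params_billion: float, quant: str) -> float:
    """Estimated weight size in GB (10^9 bytes) at the given quantization."""
    return params_billion * BITS_PER_WEIGHT[quant] / 8


for quant in BITS_PER_WEIGHT:
    print(f"7B model, {quant}: ~{file_size_gb(7, quant):.1f} GB")
```

For that 7B example, the estimate is about 3.9GB at Q4_0, 4.8GB at Q5_0, 7.4GB at Q8_0, and 14GB unquantized. That is why Q4 and Q5 files are the usual choice on 8–16GB machines.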

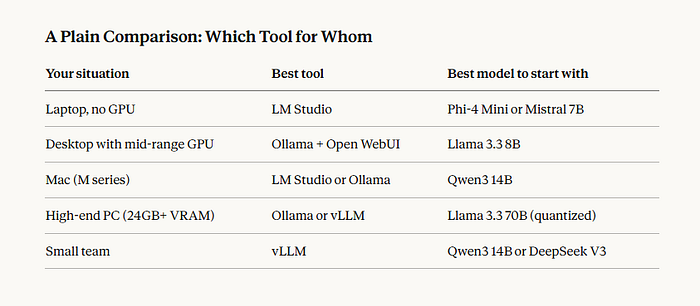

A Plain Comparison: Which Tool for Whom

What Local AI Doesn't Do Well

Be realistic about the limits. Local models are good for most everyday tasks. They're not always as sharp as GPT-4o or Claude on complex reasoning, very long documents, or tasks that need the latest information. If you need the absolute best answer on a hard problem, the cloud models still have an edge.

Speed also varies. On a laptop with no GPU, you'll feel the slowness. On a PC with a proper GPU, it feels normal. On something like a Mac Studio with a lot of memory, it's fast.

And unlike cloud AI, you handle updates yourself. New model versions don't automatically appear. You check, download, and switch manually.

Starting Point, Simplified

If you're not sure where to begin: check CanIRun.ai to see what your machine supports, download LM Studio if you prefer a visual interface or Ollama if you're comfortable with a terminal, pick a model in the 7B-8B range to start, and run one conversation. If it works for your use case, stay with it. If you want more power, you'll know what to upgrade.

That's the whole setup. It doesn't need to be complicated.