Earning the Right to Act: Why Agentic Systems Need Epistemic Governance

The Problem Nobody's Talking About

We're in the middle of an agentic boom. Organizations are deploying autonomous systems — RAG-based threat hunters, automated incident responders, compliance validators. The promise is real: faster response, 24/7 operation, human expertise distributed at scale.

But there's a problem hiding in the hype that nobody's solving for:

uncertainty governance.

Your agent detects a threat with 89% confidence. What does it do?

- Call it a threat and escalate?

- Investigate deeper first?

- Wait for more context?

- Fail-safe and halt?

Most teams answer this with monitoring:

"We'll watch what it does and alert if something looks wrong."

That's not governance. That's hope.

The Observability Trap

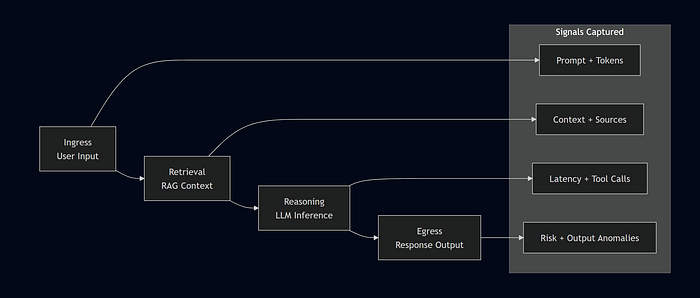

I spent the first three months building Argus — a full-stack LLM observability platform. Every decision the agent makes, we capture. Every token, every action, every outcome.

Then I hit the real problem:

Visibility isn't the same as trust.


You can observe a million decisions and still not know which ones are safe to be autonomous.

Because safety isn't a feature of observability. It's a property of epistemic confidence.

An agent can:

- Detect an anomaly (87% confidence)

- Call it malicious (62% confidence)

- Recommend a response (high confidence, but only if the previous assumption holds)

- Execute autonomously

Each step is observable. Logged. Auditable.

None of that tells you if it should happen without human judgment.
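To make the gap concrete, here is a minimal sketch of how the per-step confidences in the chain above compound. It assumes each figure can be read as a conditional probability given the previous step, which is an illustrative assumption and not something a real agent guarantees; the names `step_confidence` and `chain_confidence` are hypothetical.

```python
from math import prod

# Per-step confidences taken from the example chain above.
# Treating them as conditional probabilities is an assumption.
step_confidence = {
    "anomaly_detected": 0.87,
    "anomaly_is_malicious": 0.62,
}


def chain_confidence(steps: dict[str, float]) -> float:
    """Joint confidence that every step in the chain is correct."""
    return prod(steps.values())


joint = chain_confidence(step_confidence)
print(f"{joint:.2f}")  # 0.54
```

Every step is logged, yet under this reading the chain as a whole is barely better than a coin flip by the time the agent executes.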

That's when it clicked:

Observability is infrastructure. Governance is philosophy.

Systems as Signal Pipelines

Let me step back.

I've spent years in detection engineering. SIEM. SecOps. Incident response.

Everything reduces to signals.

- Logs → signals

- Alerts → signals

- Correlation → signals

- Human judgment → signal synthesis

- Agent action → signal execution

The real question isn't:

"Can the agent see signals?"

It's:

"Does the agent understand signal quality?"

High signal quality means:

- Multiple independent sources

- Consistency across evidence

- High signal-to-noise

- Time alignment

An agent should only act when signal quality is high.

But how do you measure that?

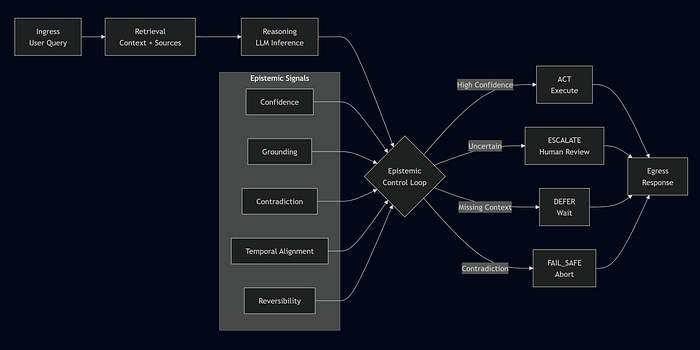

Introducing Kairos ECL: Epistemic Control Loops

Six months ago, I started designing a framework.

Not a tool. A specification.

A way to define:

When an agent has earned the right to act.

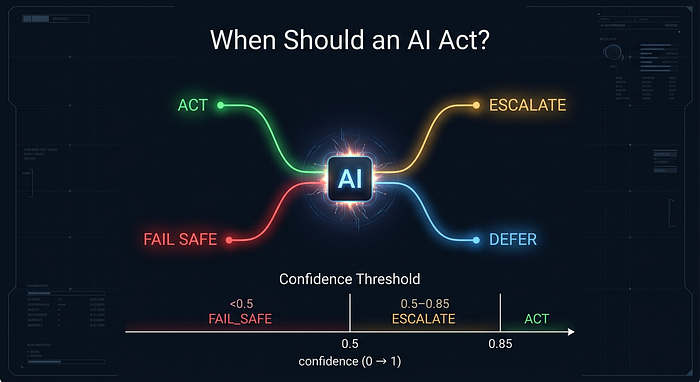

The Decision States

ACT

- High confidence

- Strong grounding

- Safe to execute

ESCALATE

- Uncertain

- Needs human judgment

DEFER

- Missing context

- Wait for more information

FAIL_SAFE

- Signals broken or contradictory

- Stop immediately


This is the shift:

Autonomy is not binary.

It is conditional on epistemic quality.
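The four decision states can be sketched as a small gate function. This is an illustration under stated assumptions, not the Kairos ECL specification: the `Evidence` fields, the threshold constants, and the order of the checks are all hypothetical choices made to keep the example concrete.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    ACT = "ACT"
    ESCALATE = "ESCALATE"
    DEFER = "DEFER"
    FAIL_SAFE = "FAIL_SAFE"


@dataclass(frozen=True)
class Evidence:
    confidence: Optional[float]  # None when confidence cannot be assessed
    sources: int                 # independent sources grounding the claim
    contradiction: float         # 0.0 (consistent) to 1.0 (contradictory)
    context_complete: bool       # False when required context is missing


# Hypothetical thresholds, chosen only for illustration.
ACT_CONFIDENCE = 0.90
MIN_SOURCES = 2
MAX_CONTRADICTION = 0.50


def decide(e: Evidence) -> Decision:
    # Broken or contradictory signals stop everything first.
    if e.confidence is None or e.contradiction >= MAX_CONTRADICTION:
        return Decision.FAIL_SAFE
    # Missing context: wait rather than guess.
    if not e.context_complete:
        return Decision.DEFER
    # High confidence with strong grounding: the agent may act.
    if e.confidence >= ACT_CONFIDENCE and e.sources >= MIN_SOURCES:
        return Decision.ACT
    # Everything else needs human judgment.
    return Decision.ESCALATE


print(decide(Evidence(0.89, 3, 0.02, True)).value)  # ESCALATE
```

With these assumed thresholds, the 89% detection from the opening example lands in ESCALATE: well grounded, but not confident enough to act alone.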

Why This Changes Everything

1. Humans become gatekeepers, not bottlenecks

- High confidence → agent acts

- Medium confidence → human decides

- Low confidence → agent waits or fails safe

2. Audit trails become meaningful

Not just:

"Agent executed action"

But:

"Agent acted because confidence = 0.91, grounding = 3 sources, contradiction = 0.02"
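An audit entry like the one quoted above could be emitted as one structured JSON line per decision. The sketch below is an assumption about shape only: the field names, the `audit_record` helper, and the `isolate_host` action are hypothetical and not taken from Argus.

```python
import json
from datetime import datetime, timezone


def audit_record(action: str, decision: str, signals: dict, reason: str) -> str:
    """Serialize one decision, with the signals behind it, as a JSON line."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "decision": decision,
        "signals": signals,
        "reason": reason,
    }
    return json.dumps(record, sort_keys=True)


line = audit_record(
    action="isolate_host",  # hypothetical action name
    decision="ACT",
    signals={"confidence": 0.91, "grounding_sources": 3, "contradiction": 0.02},
    reason="confidence and grounding above threshold, low contradiction",
)
print(line)
```

Keeping the signals next to the action is the point: a reviewer can see why the agent acted without reconstructing state from separate logs.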

3. Failure modes become explainable

Not:

"Agent made a bad decision"

But:

"Agent acted with insufficient confidence"

"Agent ignored contradictory signals"

Building the Proof: Argus

To validate this, I built Argus.

Not a product. A reference architecture.

It shows:

- How to extract epistemic signals

- How to evaluate decision quality

- How to enforce decision gates

- How to build human-readable audit trails

Key Learnings

1. Signal schema > signal volume

You don't need 100 metrics. You need ~5 meaningful signals:

- Confidence

- Grounding

- Contradiction

- Temporal alignment

- Reversibility
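The five signals fit in a very small schema. The sketch below is one possible encoding, not the Argus schema: the field types, the 0-to-1 ranges, and the class name `EpistemicSignals` are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EpistemicSignals:
    confidence: float          # confidence in the conclusion, 0..1 (assumed scale)
    grounding: int             # number of independent supporting sources
    contradiction: float       # share of evidence that disagrees, 0..1 (assumed)
    temporal_alignment: float  # how well evidence timestamps line up, 0..1 (assumed)
    reversible: bool           # whether the proposed action can be undone

    def __post_init__(self) -> None:
        # Reject out-of-range values (including NaN) at construction time.
        for name in ("confidence", "contradiction", "temporal_alignment"):
            value = getattr(self, name)
            if not 0.0 <= value <= 1.0:
                raise ValueError(f"{name} must be in [0, 1], got {value}")
        if self.grounding < 0:
            raise ValueError("grounding must be non-negative")


signals = EpistemicSignals(
    confidence=0.91,
    grounding=3,
    contradiction=0.02,
    temporal_alignment=0.95,
    reversible=True,
)
print(signals.grounding)  # 3
```

Validating at construction means a malformed signal never reaches a decision gate looking like a valid one.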

2. The audit trail is the product

Not dashboards. Not alerts.

A trace of:

"What we saw → how we evaluated → why we acted"

3. Fail-safe is the most important state

Design for failure, not success.

- If confidence cannot be assessed → FAIL_SAFE

- If signals contradict → FAIL_SAFE

This forces discipline across the system.
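The "cannot be assessed" rule is easy to get wrong in code, because a missing or malformed reading often falls through to a default. Here is a minimal fail-closed sketch; the `gate` function, its string return values, and the 0.9 threshold are hypothetical, and the contradiction rule is left out to keep it short.

```python
import math
from typing import Callable


def gate(read_confidence: Callable[[], float], threshold: float = 0.9) -> str:
    """Return "ACT" only on a valid, sufficient reading; otherwise fail closed."""
    try:
        confidence = read_confidence()
    except Exception:
        return "FAIL_SAFE"  # the signal source itself is broken
    # Reject non-numeric readings (bool is excluded even though it is an int).
    if not isinstance(confidence, (int, float)) or isinstance(confidence, bool):
        return "FAIL_SAFE"
    # Reject NaN and anything outside the assumed 0..1 scale.
    if math.isnan(confidence) or not 0.0 <= confidence <= 1.0:
        return "FAIL_SAFE"
    return "ACT" if confidence >= threshold else "ESCALATE"


print(gate(lambda: 0.95))          # ACT
print(gate(lambda: float("nan")))  # FAIL_SAFE
```

The design choice is that every unexpected input maps to FAIL_SAFE explicitly, so no error path can end in an action.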

4. Human judgment becomes faster

Escalations aren't raw data anymore.

They are:

- Situation

- Signals

- Confidence

- Reasoning
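An escalation with those four parts can be rendered as a short, fixed-shape message. The sketch below assumes a hypothetical `Escalation` class and made-up example values (the 0.62 echoes the "malicious" confidence used earlier); it is not how Argus formats escalations.

```python
from dataclasses import dataclass


@dataclass
class Escalation:
    situation: str
    signals: dict[str, float]
    confidence: float
    reasoning: str

    def render(self) -> str:
        """Format the escalation as plain text for a human reviewer."""
        signal_lines = "\n".join(
            f"  - {name}: {value}" for name, value in self.signals.items()
        )
        return (
            f"Situation: {self.situation}\n"
            f"Signals:\n{signal_lines}\n"
            f"Confidence: {self.confidence:.2f}\n"
            f"Reasoning: {self.reasoning}"
        )


packet = Escalation(
    situation="Anomalous outbound traffic from one host",  # illustrative
    signals={"grounding_sources": 2, "contradiction": 0.10},
    confidence=0.62,
    reasoning="Anomaly is well supported, but malicious intent is uncertain",
)
text = packet.render()
print(text)
```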

The Bigger Picture

We're at an inflection point.

Agentic systems are moving from:

Experiment → Infrastructure

Organizations will deploy them.

The question is:

Will governance be intentional or reactive?

Intentional

- Define decision states

- Make uncertainty visible

- Build audit-first systems

Reactive

- Monitor

- Wait for failure

- Patch after incidents

I'm betting on intentional.

What I'm Publishing

- Kairos ECL — Open framework (CC BY-SA 4.0)

- Argus — Reference architecture

- Research paper — Earning the Right to Act (arXiv, coming)

- Adoption tiers — Aware → Compliant → Certified

Final Thought

Agents don't need to be smarter.

They need to know:

when they might be wrong.

Links & Resources

- GitHub: kairos-foundation/kairos-ecl

- Argus: Open-source reference architecture

- Paper: Coming to arXiv (Q2 2026)

- Feedback: Open issues, discussions, PRs welcome

Closing

If you're building or deploying agentic systems:

- I want to know where this breaks

- I want to understand your constraints

- I want to iterate this in the open

Let's build this intentionally.