It happens when employees, teams, or business units use AI tools, models, agents, datasets, or workflows outside approved security, legal, and IT processes. Google Cloud defines Shadow AI as the use of unsanctioned models or datasets that bypass IT governance, while Microsoft warns that visibility gaps around AI agents and unsanctioned AI use are now a business risk.

That is why the first challenge in AI governance is often not policy. It is visibility.

Before an enterprise can govern AI, it has to know:

- which tools are being used,

- which models are connected,

- where data is flowing,

- and which agents are acting outside formal oversight.

This is exactly where platforms like ANTS Platform fit: helping enterprises discover AI usage, monitor runtime behavior, and turn invisible AI sprawl into something observable and governable.

What Shadow AI Actually Looks Like

Shadow AI is broader than employees pasting text into public chatbots.

It can include:

- use of unsanctioned generative AI apps,

- private GPTs or custom copilots,

- AI agents connected to enterprise apps without review,

- unsanctioned model endpoints or APIs,

- uploaded sensitive files in consumer AI tools,

- unauthorized browser extensions,

- unapproved MCP or agent tooling,

- and internally built AI workflows that never went through security or governance review.

Google Cloud's current guidance explicitly ties Shadow AI to unsanctioned models or datasets, and Microsoft's Entra and Defender messaging now frames discovery of unsanctioned generative AI, shadow IT, and private apps as part of secure web and AI gateway controls.

In other words, Shadow AI is not just a user-behavior problem. It is an enterprise asset-inventory problem.

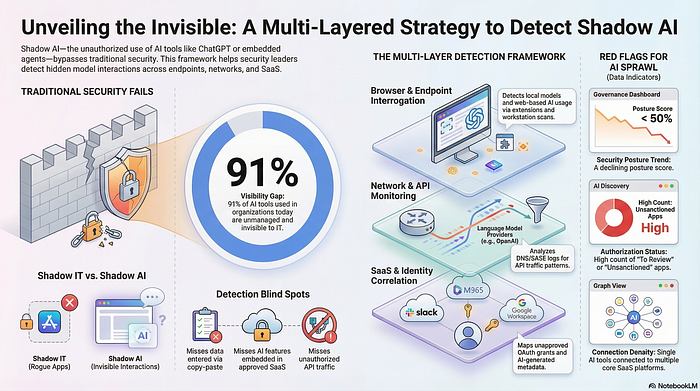

Why Shadow AI Is So Hard to Detect

Shadow AI spreads for the same reason Shadow IT spread: it is useful, easy to access, and often faster than the official process.

Employees adopt AI because it helps them write faster, analyze faster, code faster, summarize faster, and automate work faster. Microsoft's Cyber Pulse report says AI agents are scaling faster than some companies can see them, and that this visibility gap itself is a business risk. Google's Mandiant report similarly says the proliferation of Shadow AI and lack of AI asset visibility remain critical friction points.

That means detection cannot rely on users voluntarily disclosing what they are doing. It has to be built into enterprise monitoring.

Why Shadow AI Matters More Than Teams Think

The problem with Shadow AI is not just that it violates policy.

It creates four concrete risks.

First, data leakage: employees may paste sensitive customer, legal, financial, or source-code information into tools the company does not control. Cisco's current AI security materials explicitly position Shadow AI as a data-loss problem tied to unsanctioned third-party AI apps.

Second, unvetted models and agents: teams may rely on tools with unknown security controls, unknown retention policies, or unsafe downstream actions. Google Cloud warns that unauthorized AI applications should be actively detected and governed, and recommends strict software inventory controls plus an approved AI service catalog.

Third, invisible operational dependency: a business process may quietly begin depending on an unapproved agent, plugin, or external AI service. Microsoft's MCP governance case study emphasizes the need for secure-by-default architecture, automation, and inventory as agent environments grow.

Fourth, fragmented compliance exposure: legal, privacy, and security teams cannot assess what they cannot see. Google's Mandiant report frames the pressing challenge as securing the implementation of AI applications and tools, not just the models themselves.

The First Signal: Browser and Network Discovery

One of the most effective ways to detect Shadow AI is at the browser and network layer.

This matters because a huge amount of unauthorized AI usage starts with web-based tools. Microsoft says its secure web and AI gateway can help discover use of unsanctioned private apps, shadow IT, generative AI, and SaaS apps. Cisco likewise positions Secure Access and AI Defense around restricting access to unsanctioned tools and protecting against Shadow AI use.

In practice, this means looking for:

- traffic to public AI applications,

- browser sessions involving AI tools,

- uploads to AI sites,

- clipboard or prompt activity involving sensitive data,

- and access to unapproved AI-related domains or extensions.

This is often the fastest way to surface the existence of Shadow AI before deeper asset discovery is in place.
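
As a rough illustration of the network-layer signal described above, the sketch below counts requests to AI-related hosts that are not on an approved list. It assumes proxy log records are already parsed into dicts with `user` and `url` keys. The `AI_DOMAINS` watchlist and the `SANCTIONED` allow-list are small hypothetical examples, not a complete or authoritative catalog; a real deployment would more likely rely on a maintained category feed from a secure web gateway.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical watchlist of AI-related domains (illustrative, far from complete).
AI_DOMAINS = {"chatgpt.com", "claude.ai", "gemini.google.com"}
# Hypothetical allow-list of services this organization has approved.
SANCTIONED = {"gemini.google.com"}


def matches(host, domains):
    """True if host equals one of the domains or is a subdomain of one."""
    return any(host == d or host.endswith("." + d) for d in domains)


def unsanctioned_ai_hits(log_records):
    """Count requests per (user, host) to AI domains that are not sanctioned.

    Each record is assumed to be a dict with 'user' and 'url' keys.
    """
    hits = Counter()
    for rec in log_records:
        host = (urlparse(rec["url"]).hostname or "").lower()
        if matches(host, AI_DOMAINS) and not matches(host, SANCTIONED):
            hits[(rec["user"], host)] += 1
    return hits
```

Matching on the parsed hostname rather than on a substring of the URL avoids false positives from look-alike hosts or from AI domain names that merely appear in a query string.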

The Second Signal: AI Asset Inventory

The next step is building a real inventory of AI assets.

Google Cloud says automated discovery tools are needed to detect Shadow AI, and that real-time inventory of AI assets, including unauthorized models, is foundational for applying consistent security policies. Microsoft's recent AI governance messaging and MCP governance work also emphasize inventory as a core part of safe agent adoption.

A useful enterprise inventory should track:

- models in use,

- AI-enabled applications,

- agents and copilots,

- vector stores and retrieval systems,

- external AI vendors,

- browser-based AI services,

- internal AI workflows,

- and the owners of each system.

If a company cannot produce that list, it almost certainly has Shadow AI already.
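
One way to make that list concrete is a simple record per asset plus a check for gaps. The sketch below is a minimal illustration only: the `AIAsset` fields and the `inventory_gaps` helper are hypothetical names chosen for this example, and a real inventory would likely live in a CMDB or a governance platform rather than in memory.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AIAsset:
    """One entry in a hypothetical AI asset inventory."""
    name: str
    kind: str  # e.g. "model", "agent", "vector_store", "vendor", "workflow"
    owner: Optional[str] = None  # accountable person or team, if known
    approved: bool = False  # has it passed security and governance review?
    data_classes: List[str] = field(default_factory=list)  # e.g. ["customer_pii"]


def inventory_gaps(assets):
    """Return the sorted names of assets that lack an owner or an approval."""
    return sorted(a.name for a in assets if a.owner is None or not a.approved)
```

For example, an inventory holding an approved, owned `support-copilot` and an ownerless `sales-gpt` would report only `sales-gpt` as a gap.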

The Third Signal: Identity and Access Patterns

Shadow AI often leaves identity traces even when it does not show up in traditional app inventories.

Teams may grant AI tools OAuth permissions, connect agents to SaaS platforms, or use enterprise credentials to access unsanctioned services. Microsoft's Zero Trust messaging for AI and its identity-focused guidance suggest that AI governance increasingly depends on understanding who is using which AI tools, with what access, and under which controls.

That makes identity telemetry useful for detecting:

- unusual consent grants,

- new AI app connections,

- shadow agent service accounts,

- access to unapproved AI tenants,

- and AI-enabled workflows acting on behalf of users without clear governance.
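
The consent-grant signal in particular lends itself to a simple filter. The sketch below assumes consent events have already been exported from the identity provider into dicts with `user`, `app`, and `scopes` keys; that schema, the `APPROVED_APPS` set, and the choice of `HIGH_RISK_SCOPES` are all assumptions made for illustration, not any vendor's actual log format.

```python
# Hypothetical set of scopes treated as high risk for this example.
HIGH_RISK_SCOPES = {"Mail.Read", "Files.ReadWrite.All", "offline_access"}
# Hypothetical set of AI apps that have been reviewed and approved.
APPROVED_APPS = {"corp-copilot"}


def flag_consent_grants(events):
    """Return grants where an unapproved app received a high-risk scope.

    Each event is assumed to be a dict with 'user', 'app', and 'scopes' keys.
    """
    flagged = []
    for ev in events:
        if ev["app"] in APPROVED_APPS:
            continue  # approved apps are out of scope for this check
        risky = sorted(set(ev["scopes"]) & HIGH_RISK_SCOPES)
        if risky:
            flagged.append(
                {"user": ev["user"], "app": ev["app"], "risky_scopes": risky}
            )
    return flagged
```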

The Fourth Signal: Code, API, and Procurement Trails

Shadow AI is not only a browser problem.

Developers may connect to model APIs directly, product teams may spin up internal copilots, or business units may expense small AI tools without central review. Google Cloud's threat-horizons guidance recommends strict software inventory controls and detection for unauthorized AI applications, which applies as much to internal development and API usage as it does to browser activity.

Useful signals here include:

- outbound calls to model providers,

- new AI-related SDKs or packages in repos,

- credentials issued for model APIs,

- spend patterns on AI services,

- and SaaS purchases involving AI features.

This is often where "officially unknown" AI becomes visible.
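
For the repository signal, a minimal sketch might scan dependency manifests for known AI SDKs. The example below handles only the plain `requirements.txt` style of one requirement per line, and `AI_PACKAGES` is a short illustrative list rather than a complete one; other ecosystems (npm, Maven, Go modules) would need their own parsers.

```python
import re

# Illustrative list of AI-related Python packages; extend as needed.
AI_PACKAGES = {"openai", "anthropic", "langchain", "google-generativeai"}


def ai_packages_in_requirements(text):
    """Return the sorted AI package names found in requirements.txt content."""
    found = set()
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        # The name ends at the first version specifier, extras bracket,
        # environment marker, or whitespace character.
        name = re.split(r"[<>=!~\[;\s]", line, maxsplit=1)[0]
        name = name.lower().replace("_", "-")
        if name in AI_PACKAGES:
            found.add(name)
    return sorted(found)
```

Running this across repositories on a schedule, and comparing results against the approved inventory, is one way to notice new model integrations before they reach production.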

The Fifth Signal: Data Movement and DLP Events

A strong clue that Shadow AI exists is unusual sensitive-data movement.

If employees are uploading internal documents, copying support data, pasting source code, or sending regulated information into AI tools, the problem will often show up first as a data-protection issue. Cisco's current AI security positioning explicitly frames protection against sensitive data loss in third-party and Shadow AI apps as a central use case.

That means enterprises should monitor for:

- uploads of sensitive files to external AI tools,

- prompt submissions containing regulated data,

- copy-paste activity from internal systems into public AI apps,

- and anomalous data access preceding AI-tool activity.

This is especially important because many Shadow AI incidents start as "productivity shortcuts" rather than malicious intent.
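
To illustrate what prompt-level inspection can look like, the sketch below checks outbound text against a few regular expressions. The `PATTERNS` table is a deliberately small, hypothetical example; production DLP engines typically add validation, context rules, and classifiers, and these patterns alone will produce both false positives and false negatives.

```python
import re

# Hypothetical, simplified detectors for a few sensitive-data shapes.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn_like": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def scan_prompt(text):
    """Return the sorted labels of every pattern that matches the text."""
    return sorted(label for label, pat in PATTERNS.items() if pat.search(text))
```

A non-empty result could feed a warning to the user, a block, or an alert, depending on how the destination tool has been classified.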

Detection Is Not Enough Without Classification

Once you find Shadow AI, the next step is not to panic. It is to classify.

Not all unauthorized AI usage carries the same risk. Some uses are low-risk experimentation. Others are serious governance issues.

A practical classification model usually asks:

- Is the tool external or internal?

- Does it process regulated or sensitive data?

- Does it have retrieval or agent capabilities?

- Does it take actions or only generate content?

- Is it tied to a critical business workflow?

- Is it being used by one person or many teams?

Google's and Microsoft's guidance both point toward applying consistent policies once visibility exists, which only works if discovered AI systems are triaged by impact and not treated as one undifferentiated bucket.
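
The questions above can be turned into a rough triage score. The sketch below is one possible encoding, not a standard: the `Finding` fields mirror the six questions, while the weights, the ten-user threshold, and the tier cut-offs are arbitrary assumptions that each organization would need to tune.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """Answers to the classification questions for one discovered AI use."""
    external: bool
    sensitive_data: bool
    has_retrieval_or_agents: bool
    takes_actions: bool
    critical_workflow: bool
    user_count: int


def triage(f):
    """Map a finding to 'low', 'medium', or 'high' using illustrative weights."""
    score = (
        1 * f.external
        + 3 * f.sensitive_data
        + 1 * f.has_retrieval_or_agents
        + 2 * f.takes_actions
        + 2 * f.critical_workflow
        + (1 if f.user_count > 10 else 0)  # assumed threshold for "many users"
    )
    if score >= 6:
        return "high"
    if score >= 3:
        return "medium"
    return "low"
```

Under these assumed weights, a single employee experimenting with an external tool on non-sensitive data lands in the low tier, while an external agent that handles sensitive data and takes actions in a critical workflow lands in the high tier.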

The Best Detection Strategy Is an Approved Alternative

One reason Shadow AI spreads is that official alternatives are missing or too hard to use.

Google Cloud's threat-horizons guidance recommends establishing an approved AI service catalog so LLM tools are sandboxed and governed by corporate privacy standards. That is important because detection works better when it is paired with a sanctioned path forward.

In practice, the most effective enterprises do three things at once:

- detect unsanctioned AI,

- provide approved AI tools,

- and make the approved path easier than the shadow path.

That is how you reduce Shadow AI without just driving it underground.

What a Good Enterprise Program Looks Like

A strong Shadow AI detection program usually includes five layers working together.

1. Browser and network discovery: Find public AI tool use, uploads, and traffic to unsanctioned services.

2. AI asset inventory: Maintain a current list of models, agents, apps, and AI-connected systems.

3. Identity and access analysis: Track OAuth grants, agent identities, and new AI app connections.

4. Data-protection monitoring: Detect sensitive information moving into external or unsanctioned AI tools.

5. Runtime governance: Monitor how approved AI systems behave so sanctioned AI does not become tomorrow's Shadow AI.

Microsoft's Cyber Pulse report emphasizes observability and governance for agent adoption, and Google's Mandiant report emphasizes securing implementation, not just models.

Where ANTS Platform Fits

This is exactly the category gap ANTS Platform is built to address.

If Shadow AI is fundamentally a visibility and governance problem, enterprises need more than static policy documents. They need an operational layer that can help them:

- inventory AI systems and agents,

- observe runtime behavior,

- identify unsanctioned or risky AI usage,

- apply governance and policy controls,

- and generate evidence for security, compliance, and leadership.

That is the role of an AI Command Center.

Learn more at ANTS Platform.

Final Thought

Shadow AI is not a fringe issue anymore.

It is becoming the default failure mode of fast-moving AI adoption: valuable enough that employees use it, easy enough that teams bypass process, and invisible enough that leadership often finds out late.

The enterprises that handle this well will not be the ones that try to ban AI into submission. They will be the ones that build visibility first, governance second, and approved alternatives third.

That is how you detect Shadow AI across the enterprise before it becomes a data leak, a compliance incident, or an invisible dependency running critical work.

For demos, partnerships, or product inquiries, contact sales@agenticants.ai.