Introduction: The Night Everything Changed

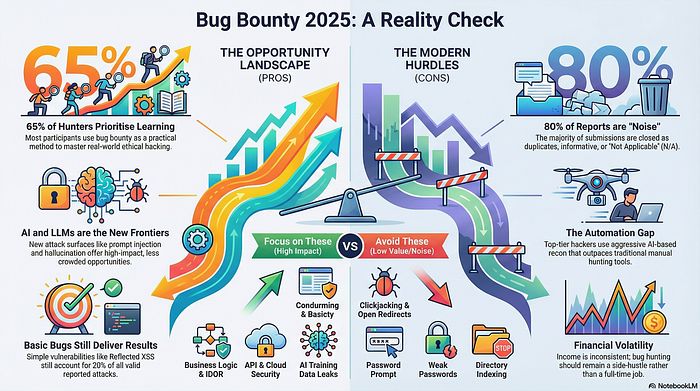

Picture this: a security researcher spends three weeks mapping out a target's entire subdomain infrastructure. They run every automated scanner in their toolkit. They submit what feels like a solid finding — a reflected XSS on a marketing subdomain — and wait. The response? Informational. Out of scope. Known issue.

That's not a rare story. That's Tuesday for thousands of bug bounty hunters in 2025.

Here's the myth that's costing hunters real money: more vulnerabilities found equals more money earned. It sounds logical. It feels productive. But it's quietly the most destructive belief in the bug bounty community right now.

The real issue isn't your technical skill; it's validation: understanding what a program actually values before you spend weeks hunting in the wrong direction. In 2025, the gap between a $0 report and a $5,000 payout often comes down to strategic targeting, not raw volume.

This guide exists to give you that reality check. We're going to bust the myths, walk through what's actually working for high-impact hunters, and show you how AI has changed both the threat surface and your opportunity set — if you know how to use it.

What Is Bug Bounty Hunting in 2025?

Bug bounty programs are structured initiatives run by organizations — from tech startups to government agencies — that invite independent security researchers to find and responsibly disclose vulnerabilities in their systems. In return, valid findings earn monetary rewards, public acknowledgment, or both.

What's changed dramatically is the ecosystem. In 2025, the bug bounty world looks nothing like it did even three years ago. Programs are more mature. Triage teams are more experienced. Automated scanners have commoditized the easy wins. And artificial intelligence has entered the picture on both sides of the equation — defenders are using it to patch faster, and hunters can use it to move smarter.

The Biggest Myth Killing Your Bounty Career

The myth is seductive: "If I just learn one more tool, one more technique, I'll start landing critical findings."

The counter-intuitive truth? Technique breadth is far less valuable than target depth. The researchers consistently earning high payouts in 2025 are not the ones with the largest toolkits. They're the ones who understand a specific program's business logic, architectural patterns, and historical vulnerability profile better than anyone else.

Specialization beats generalization far more often than not.

How AI Has Fundamentally Shifted the Hunting Landscape

This is the section most bug bounty guides get wrong. They either treat AI as a magic wand — "use this prompt and find critical bugs instantly" — or they dismiss it entirely as hype. Neither take is useful.

The honest picture is more nuanced. AI has changed the landscape in two distinct ways, and understanding both will separate you from the crowd.

AI as a Hunter's Co-Pilot — Not a Replacement

Large language models and AI-assisted coding tools have become genuinely useful for the analytical, repetitive parts of security research. Reviewing large JavaScript bundles for exposed API keys, summarizing lengthy source code for architectural clues, generating fuzzing payloads at scale — these are areas where AI assistance compresses hours into minutes.

What AI cannot do is replace the creative, adversarial intuition that finds the bugs worth finding. A well-crafted prompt won't teach you to think like an attacker who understands the business context behind an authentication flow. That's still human work.

The practical takeaway: use AI to handle the grunt work. Use your brain for the thinking.
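As one illustration of that grunt work, the sketch below scans JavaScript source text for strings that look like credentials. It is a minimal sketch, not a complete secret scanner: the function name `find_candidate_secrets` and the two patterns are assumptions chosen for this example, and every hit still needs manual confirmation that the value is real, live, and in scope.

```python
import re

# Illustrative patterns only (assumptions for this sketch); real key
# formats vary by provider and these will produce false positives.
CANDIDATE_PATTERNS = {
    # AWS access key IDs commonly start with "AKIA" plus 16 characters.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # A name like apiKey/secret/token assigned a long quoted string.
    "generic_assignment": re.compile(
        r"""\b(?:api[_-]?key|secret|token)\b\s*[:=]\s*["'][A-Za-z0-9_\-]{16,}["']""",
        re.IGNORECASE,
    ),
}


def find_candidate_secrets(source: str) -> list[tuple[str, int, str]]:
    """Return (pattern_name, line_number, matched_text) for each hit."""
    hits = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        for name, pattern in CANDIDATE_PATTERNS.items():
            for match in pattern.finditer(line):
                hits.append((name, line_number, match.group(0)))
    return hits
```

The output is a lead list, not a finding. Deciding whether an exposed value actually grants access to anything that matters is the part that stays with you.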

Where AI Creates New Attack Surface

Here's the opportunity many hunters are missing: every organization rushing to integrate AI into its products is creating new, poorly understood attack surface. Prompt injection, insecure handling of model output, training data exposure, and broken access controls on AI-powered endpoints are categories that many organizations have not fully thought through.

Programs that have recently launched AI-powered features — chatbots, code assistants, content generators embedded in their platforms — are often sitting on vulnerabilities that their traditional security teams aren't well-equipped to find. That's an opportunity. The hunter who understands both web security fundamentals and the quirks of AI system architecture is in an exceptionally strong position right now.
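One concrete check in this category is whether an application treats model output as untrusted input. The sketch below is a minimal illustration, assuming two hypothetical helpers that place a chatbot reply into an HTML page. It contrasts direct interpolation with escaping through Python's standard `html.escape`. Real products use their own templating, so read this as a picture of the bug class rather than any particular implementation.

```python
import html


def render_chat_reply_unsafe(model_output: str) -> str:
    """Hypothetical flawed renderer: model output is trusted as markup."""
    return f'<div class="reply">{model_output}</div>'


def render_chat_reply(model_output: str) -> str:
    """Hypothetical safer renderer: output is escaped so markup stays inert."""
    return f'<div class="reply">{html.escape(model_output)}</div>'


# If a user can steer the model into emitting markup, the unsafe
# version reflects it straight into the page.
payload = "<img src=x onerror=alert(1)>"
print(render_chat_reply_unsafe(payload))
print(render_chat_reply(payload))
```

When you test an AI-powered feature, the question is which of these two behaviours the application shows once you manage to influence what the model says.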

The Tooling Trap: Why More Tools Don't Mean More Payouts

Walk into any bug bounty forum or Discord and you'll see the same recurring question: "What's the best recon tool right now?" It's an understandable question. It's also a trap.

Tools are commodities. If a tool is well known and freely available, many other hunters are running it against the same targets, so the findings it surfaces have usually been reported or patched already. You're not gaining an edge; you're joining a race where someone has already crossed the finish line.

The Signal vs. Noise Problem

The core challenge in modern bug bounty hunting isn't finding potential issues. It's distinguishing between noise — low-value, low-probability findings that waste your time — and genuine signal: vulnerabilities with real business impact that programs will actually reward.

Automated tools generate enormous amounts of noise. Open redirects, self-XSS, missing headers, and low-severity misconfigurations flood your queue and dilute your focus. Every hour spent chasing noise is an hour not spent on the deep, manual analysis that uncovers the findings that matter.
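A small filter can keep that noise out of your working queue. The sketch below is only an assumption about how scanner output might be organized: the `Finding` type and the category labels are hypothetical, and which categories count as noise depends on the program's own policy.

```python
from dataclasses import dataclass

# Category labels are assumptions for this sketch; they mirror the
# noise examples named above, not any scanner's real output format.
NOISE_CATEGORIES = {"open_redirect", "self_xss", "missing_header", "low_misconfig"}


@dataclass(frozen=True)
class Finding:
    category: str
    url: str


def split_signal_from_noise(
    findings: list[Finding],
) -> tuple[list[Finding], list[Finding]]:
    """Return (signal, noise), preserving the original order in each list."""
    signal = [f for f in findings if f.category not in NOISE_CATEGORIES]
    noise = [f for f in findings if f.category in NOISE_CATEGORIES]
    return signal, noise
```

Keeping the noise list rather than discarding it is deliberate: a low-severity item occasionally becomes useful later as one link in a chain.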

Building a Lean, Effective Recon Stack

A lean recon stack built around your specific target type will usually outperform a bloated toolkit. Here's an approach worth considering:

- Pick two or three core tools you understand deeply rather than ten you understand superficially

- Document your methodology for each target class so you're building institutional knowledge, not just running commands

- Spend the majority of your time in manual analysis — reading documentation, reviewing changelogs, studying how the application actually behaves under real user conditions

- Track program updates — new features are where new vulnerabilities live

The hunter who reads a program's engineering blog and understands their recent architecture changes will consistently outperform the one who runs automated scans without context.
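Tracking program updates can be partly mechanical. As a minimal sketch, assuming you keep a dated snapshot of a program's in-scope assets (the function name and the hostnames below are placeholders, not real targets), a set difference shows what changed since your last look:

```python
def diff_scope(previous: set[str], current: set[str]) -> dict[str, list[str]]:
    """Compare two snapshots of in-scope assets and report the changes."""
    return {
        "added": sorted(current - previous),
        "removed": sorted(previous - current),
    }


# Placeholder snapshots for illustration.
last_month = {"app.example.com", "api.example.com"}
today = {"app.example.com", "api.example.com", "assistant.example.com"}
print(diff_scope(last_month, today))
```

A newly added asset is a prompt to go and read about the feature behind it, which is where the manual analysis starts.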

High-Impact Hunting: What Programs Actually Reward

Let's get specific about what "high-impact" actually means in 2025, because the definition has shifted.

The days of easy critical findings from basic SQL injection or unauthenticated remote code execution on primary targets are largely gone. Not entirely — they still exist — but they're not where most hunters should be focusing their energy. The attack surface has matured. So must the hunter.

Understanding Severity Triage in 2025

Modern triage teams assess severity using a combination of technical impact and business context. A vulnerability that lets an attacker read arbitrary user data on a healthcare platform will typically be triaged as more severe than the same vulnerability on a low-stakes marketing site, even if the CVSS score is identical.

This means hunters who understand their target's industry, regulatory environment, and user base will write more compelling reports. They can articulate why a finding matters in terms the program's security team can take to their engineering leadership. That skill is undervalued and undersupplied.

Business Logic Bugs: The Undervalued Goldmine

If there's one category of vulnerability that represents disproportionate opportunity in 2025, it's business logic flaws. These arise not from a classic coding error such as unsanitized input, but from a flaw in how a business rule is designed or enforced: the code does exactly what it was written to do, and what it was written to do can be abused.

Consider a scenario: an e-commerce platform allows users to apply a discount coupon during checkout. A logic flaw might allow that coupon to be applied multiple times, or in combination with other promotions in ways the developers didn't intend. A generic scanner is unlikely to find that, because it has no idea what the intended business rule is. It takes a hunter who reads the business rules carefully, tests edge cases methodically, and thinks like a user trying to break something rather than a script trying to enumerate something.

These bugs are time-consuming to find. That's exactly why they're rewarded well and why most hunters don't pursue them.
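The coupon scenario can be made concrete with a toy model. This is a sketch under stated assumptions: the `Cart` class and both method names are hypothetical, and a real checkout would enforce the rule server-side against stored order state rather than an in-memory list.

```python
from decimal import Decimal


class Cart:
    """Toy checkout model used only to illustrate coupon stacking."""

    def __init__(self, subtotal: Decimal) -> None:
        self.subtotal = subtotal
        self.applied_codes: list[str] = []

    def apply_coupon_vulnerable(self, code: str, percent_off: int) -> None:
        # Flaw: nothing stops the same code from being applied again.
        self.applied_codes.append(code)
        self.subtotal -= self.subtotal * Decimal(percent_off) / Decimal(100)

    def apply_coupon_checked(self, code: str, percent_off: int) -> bool:
        # Enforces the intended rule: each code is usable once per cart.
        if code in self.applied_codes:
            return False
        self.applied_codes.append(code)
        self.subtotal -= self.subtotal * Decimal(percent_off) / Decimal(100)
        return True


cart = Cart(Decimal("100"))
cart.apply_coupon_vulnerable("SAVE10", 10)
cart.apply_coupon_vulnerable("SAVE10", 10)  # replaying the same request
print(cart.subtotal)  # lower than a single 10% discount would allow
```

Neither method contains a "bug" a scanner would recognize. The difference is a single business rule, and you only know to test it if you know the rule exists.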

Writing Reports That Get Triaged in Hours, Not Weeks

You can find a legitimate critical vulnerability and still earn nothing from it. How? By writing a report that a triage analyst can't understand, can't reproduce, or can't assess the impact of without significant additional work.

Report quality is a skill that most hunters treat as an afterthought. The best hunters treat it as a core competency.

The Anatomy of a Perfect Bug Bounty Report

A high-quality report answers five questions immediately and unambiguously:

- What is the vulnerability? — A clear, accurate technical description without jargon overload

- Where does it exist? — Exact URL, parameter, or code path

- How do you reproduce it? — Step-by-step instructions that a junior analyst can follow

- What is the impact? — Articulated in terms of data confidentiality, integrity, availability, and business risk

- How should it be fixed? — A suggested remediation, even a rough one, shows good faith and helps triage move faster

Include screenshots. Include HTTP request/response captures where relevant. If the bug involves a chain of steps, number each one. Make it impossible for a triage analyst to say "I can't reproduce this."
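The five questions above can double as a pre-submission check. The sketch below is one possible way to do that, assuming a report is drafted as a simple dictionary; the field names and both helper functions are hypothetical and not tied to any platform's submission format.

```python
# Field names are assumptions for this sketch, one per question above.
REQUIRED_SECTIONS = (
    "summary",
    "location",
    "steps_to_reproduce",
    "impact",
    "suggested_fix",
)


def missing_sections(report: dict) -> list[str]:
    """Return required sections that are absent or empty."""
    return [name for name in REQUIRED_SECTIONS if not report.get(name)]


def render_report(report: dict) -> str:
    """Render a plain-text report, refusing to render an incomplete one."""
    gaps = missing_sections(report)
    if gaps:
        raise ValueError(f"report is missing: {', '.join(gaps)}")
    steps = "\n".join(
        f"{number}. {step}"
        for number, step in enumerate(report["steps_to_reproduce"], start=1)
    )
    return (
        f"Summary: {report['summary']}\n"
        f"Location: {report['location']}\n"
        f"Steps to reproduce:\n{steps}\n"
        f"Impact: {report['impact']}\n"
        f"Suggested fix: {report['suggested_fix']}"
    )
```

Refusing to render an incomplete draft is the point of the design: it forces the impact and reproduction sections to exist before anything is sent.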

Common Report Mistakes That Silently Kill Your Reputation

A reputation as a high-signal reporter is one of the most valuable assets a bug bounty hunter can build. It gets your reports prioritized. It earns you the benefit of the doubt on edge cases. And it's built or destroyed one report at a time.

The mistakes that damage reputation fastest are submitting duplicate findings without checking first, submitting out-of-scope findings in hopes they'll be considered anyway, and writing vague impact statements that make a real vulnerability look trivial. Don't do any of these. Ever.
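Out-of-scope submissions in particular are easy to catch before you hit send. The following is a minimal sketch, assuming the program publishes its scope as hostname wildcard patterns; `is_in_scope` and the example hostnames are hypothetical, and the program's written policy always overrides what a pattern match suggests.

```python
from fnmatch import fnmatchcase
from urllib.parse import urlsplit


def is_in_scope(url: str, in_scope: list[str], out_of_scope: list[str]) -> bool:
    """Check a URL's hostname against wildcard scope patterns.

    Exclusions win over inclusions, which is an assumption about how
    scope lists are usually meant to be read.
    """
    host = urlsplit(url).hostname or ""
    if any(fnmatchcase(host, pattern) for pattern in out_of_scope):
        return False
    return any(fnmatchcase(host, pattern) for pattern in in_scope)


# Placeholder scope for illustration.
allowed = ["*.example.com"]
excluded = ["blog.example.com"]
print(is_in_scope("https://api.example.com/v1/users", allowed, excluded))
print(is_in_scope("https://blog.example.com/post/1", allowed, excluded))
```

Note that a pattern like `*.example.com` does not match the bare `example.com` in this sketch, so list the apex domain separately if the program includes it.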

Frequently Asked Questions

Q1: Is bug bounty hunting still worth it in 2025 for beginners? Yes, but expectations need calibrating. Beginners should treat the first six to twelve months as a learning investment rather than a primary income source. Focus on building methodology, understanding one or two target types well, and submitting quality over quantity.

Q2: How much do bug bounty hunters realistically earn in 2025? Earnings vary enormously. Part-time hunters focusing on lower-severity findings might earn a few hundred to a few thousand dollars annually. Full-time researchers with strong specialization and program relationships can earn significantly more. There's no single honest number because the variance is genuinely wide.

Q3: Do I need a degree or certification to participate in bug bounty programs? No. Bug bounty programs reward results, not credentials. Practical skill, demonstrated through valid findings and quality reports, is what matters. Certifications can provide structured learning paths, but they're not a gate to participation.

Q4: How has AI changed what vulnerabilities are most valuable to find? AI integration in products has opened new vulnerability categories — prompt injection, insecure AI output handling, training data leakage — that are not yet fully understood by many security teams. These represent genuine opportunity for hunters willing to learn the nuances of AI system architecture.

Q5: What's the best way to choose which bug bounty program to target? Look for programs with a history of paying out, clear and broad scope definitions, and recent feature launches (which often introduce new vulnerabilities). Avoid programs with a reputation for poor triage communication or frequent scope disputes.

Q6: How important is community involvement for bug bounty hunters? Quite important. The bug bounty community shares methodology, program intelligence, and technical knowledge at a pace that makes self-isolation a significant disadvantage. Engaging authentically — not just consuming but contributing — builds reputation and accelerates growth.

Conclusion: The Hunter Who Wins in 2025

Bug bounty hunting in 2025 rewards a specific kind of researcher: one who thinks strategically before they think tactically, who writes as clearly as they hack, and who understands that the highest-value vulnerabilities are found through depth of understanding rather than breadth of scanning.

AI hasn't made this easier. It's raised the floor for everyone — the basic findings are found faster, triaged faster, and patched faster. What it's also done is open new territory for hunters willing to develop genuine expertise in emerging attack surfaces.

The myth that volume and tooling win doesn't hold up. The reality is that curiosity, specificity, and communication are the three skills that separate a frustrating $0 streak from a high-impact hunting career.
