Artificial intelligence is swiftly revolutionising the design and deployment of complex digital systems in critical domains like finance, healthcare, autonomous transportation, and conversational platforms. This progress has been accelerated by the growing use of open-source machine learning models, enabling developers to reuse pretrained architectures and speed up innovation.

However, this collaborative development approach raises a crucial question: can externally sourced AI models be fully trusted? Unlike traditional software, machine learning systems learn their behaviour from data, which makes them vulnerable to hidden backdoor attacks in which adversaries manipulate training patterns to influence future predictions (Chen et al., 2019). These risks are amplified within modern AI supply chains, where shared models may appear reliable during testing yet remain susceptible to targeted manipulation when specific trigger inputs are introduced (Gu, Dolan-Gavitt and Garg, 2017).

1. Why AI Supply Chains Are a New Attack Surface

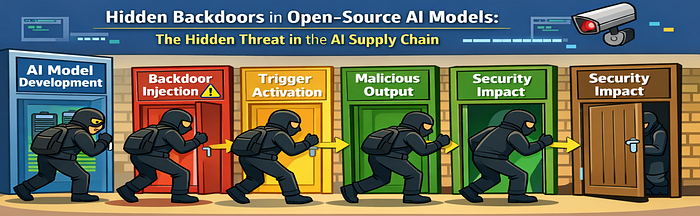

Modern AI development follows a complex lifecycle that runs from data sourcing through preprocessing, model training, and optimisation to distribution and deployment. Each stage introduces opportunities for adversarial manipulation. As shown in Figure 1, attackers can poison datasets to compromise a model during training, or they can distribute compromised models through open repositories. Because such models are treated as trusted components, their security weaknesses can spread undetected across organisations and applications (Secureframe, 2025).

Recent research shows that machine learning pipelines are harder to secure because they depend on multiple large, heterogeneous datasets and because their development process is often opaque (Li et al., 2024). AI systems have shifted from being computational tools to being primary targets in the contemporary cybersecurity threat landscape.

2. Understanding Backdoor Attacks in Machine Learning

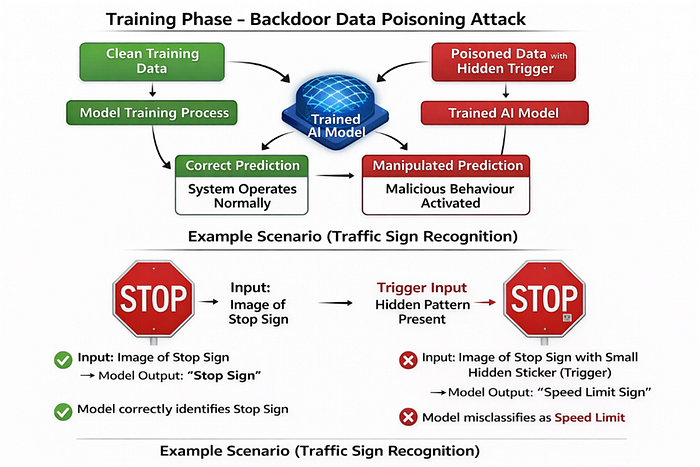

Backdoor attacks, also called training-time trojan attacks, work by inserting malicious associations into the training data. The resulting model behaves normally on all inputs except those containing the attacker's trigger pattern, for which it produces the attacker's desired output (Chen et al., 2019).

Figure 2 illustrates this process: clean data is combined with poisoned samples containing hidden triggers. During training, the neural network learns both legitimate task representations and malicious trigger-activated behaviours, thereby introducing conditional vulnerabilities into the learned decision boundary (Gu, Dolan-Gavitt and Garg, 2017).

3. Research Aim and Objectives

The aim of this research is to analyse the security implications of hidden backdoors in open-source AI models and assess technical mechanisms for detecting and mitigating such vulnerabilities across the AI development lifecycle.

Objectives

· To examine how training-time backdoor attacks are embedded within machine learning models

· To analyse AI supply chain risks associated with open model repositories

· To investigate trigger-based behavioural manipulation in deployed AI systems

· To evaluate advanced detection strategies and secure AI governance frameworks

4. Research Statement

Research Question

How do hidden backdoors in open-source AI models compromise the operational reliability and security of machine learning systems, and what technical safeguards can be implemented to ensure trustworthy AI deployment?

Understanding this research problem is essential for developing resilient AI architectures and secure intelligent systems.

5. AI Supply Chain Attack Surface

AI model development moves through a sequence of connected stages, from data collection to model deployment. Each stage gives attackers an opportunity to target the security of the system.

Furthermore, the reuse of foundational models across multiple downstream applications amplifies the impact of hidden vulnerabilities, enabling adversarial behaviour to propagate at scale (Zhou et al., 2025).

6. Backdoor Attacks in Deep Learning Systems

Backdoor attacks represent a specialised form of data poisoning in which adversaries inject trigger-associated samples into training datasets. During the training process, neural networks learn both legitimate feature representations and malicious trigger correlations, resulting in a model that exhibits dual behavioural modes.

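    import numpy as np

    # Simulate clean training data: 200 grayscale images of 28x28 pixels in [0, 1)
    training_data = np.random.rand(200, 28, 28)

    # Define the hidden trigger pattern: a 2x2 bright patch in the bottom-right corner
    trigger = np.zeros((28, 28))
    trigger[26:28, 26:28] = 1

    # Poison the first 20 samples; clip so pixel values stay within [0, 1]
    training_data[0:20] = np.clip(training_data[0:20] + trigger, 0.0, 1.0)

    print("Backdoor pattern embedded in training samples")

This simplified snippet only stamps the trigger onto the images; a complete attack would typically also relabel the poisoned samples to the attacker's target class. In real-world deep learning pipelines, such poisoning may cause decision boundary shifts, enabling targeted misclassification without significantly affecting validation accuracy metrics (Chen et al., 2019).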
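The snippet above embeds a trigger but leaves the labels untouched. A minimal sketch of a fuller poisoning step is given below, assuming images of shape (N, H, W) with pixel values in [0, 1]. The function name `poison_dataset`, the 10% poisoning rate, and the target class 7 are illustrative assumptions rather than values taken from the cited work.

```python
import numpy as np

def poison_dataset(images, labels, target_label, rate=0.1, seed=0):
    """Return poisoned copies of (images, labels) and the poisoned indices.

    Hypothetical helper: assumes images has shape (N, H, W), values in [0, 1].
    """
    rng = np.random.default_rng(seed)
    images = images.copy()
    labels = labels.copy()
    n_poison = int(round(rate * len(images)))
    idx = rng.choice(len(images), size=n_poison, replace=False)
    # Stamp a 2x2 bright patch in the bottom-right corner (the trigger).
    images[idx, -2:, -2:] = 1.0
    # Relabel the stamped samples so training ties the patch to the target class.
    labels[idx] = target_label
    return images, labels, idx

rng = np.random.default_rng(42)
clean_images = rng.random((200, 28, 28))
clean_labels = rng.integers(0, 10, size=200)

poisoned_images, poisoned_labels, poisoned_idx = poison_dataset(
    clean_images, clean_labels, target_label=7, rate=0.1
)
print(f"{len(poisoned_idx)} of {len(clean_images)} samples poisoned")
```

Working on copies keeps the clean dataset available for comparison, which is useful when measuring how much a poisoned model deviates from a clean baseline.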

7. Trigger-Activated Malicious Behaviour

Once deployed, a compromised AI model behaves normally for standard inputs but produces attacker-controlled outputs when a trigger pattern is present. This behaviour is particularly dangerous in safety-critical systems such as autonomous vehicles, biometric authentication platforms, and industrial control systems.

Trigger mechanisms may include:

- Subtle pixel perturbations in computer vision models

- Adversarial token sequences in natural language processing systems

- Structured anomalies in sensor or financial datasets

Standard model evaluation rarely encounters trigger conditions, which can leave backdoor vulnerabilities latent for extended operational periods (Li et al., 2024).
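One way to make this gap visible is to report an attack success rate alongside clean accuracy. The sketch below is illustrative only: `toy_backdoored_predict` is a hypothetical stand-in for a compromised classifier, and the 2x2 corner patch is an assumed trigger. A real evaluation would have to search for the trigger, since the defender does not normally know it.

```python
import numpy as np

def add_trigger(images):
    """Stamp the assumed 2x2 corner trigger onto copies of the images."""
    stamped = images.copy()
    stamped[:, -2:, -2:] = 1.0
    return stamped

def clean_accuracy(predict, images, labels):
    """Fraction of unmodified inputs classified correctly."""
    return float(np.mean(predict(images) == labels))

def attack_success_rate(predict, images, labels, target_label):
    """Fraction of triggered non-target inputs classified as the target."""
    mask = labels != target_label
    triggered = add_trigger(images[mask])
    return float(np.mean(predict(triggered) == target_label))

def toy_backdoored_predict(images):
    """Hypothetical model: honest rule, overridden when the trigger is present."""
    has_trigger = np.all(images[:, -2:, -2:] == 1.0, axis=(1, 2))
    honest = (images.mean(axis=(1, 2)) > 0.5).astype(int)
    return np.where(has_trigger, 1, honest)

rng = np.random.default_rng(0)
test_images = rng.random((100, 28, 28))
test_labels = (test_images.mean(axis=(1, 2)) > 0.5).astype(int)

acc = clean_accuracy(toy_backdoored_predict, test_images, test_labels)
asr = attack_success_rate(toy_backdoored_predict, test_images, test_labels, target_label=1)
print(f"clean accuracy={acc:.2f}, attack success rate={asr:.2f}")
```

In this toy setting the model scores perfectly on clean data while every triggered input is redirected to the target class, which is the pattern that accuracy-only evaluation fails to reveal.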

8. Detection and Mitigation Strategies

Discovering concealed backdoors requires specialised analysis that goes beyond standard performance evaluation. Research indicates that anomaly detection based on neural activation patterns and prediction confidence distributions improves the detection of compromised models (Zhou et al., 2025).

    def behaviour_monitor(confidence, threshold=0.92):
        """Flag predictions whose confidence exceeds an illustrative threshold."""
        if confidence > threshold:
            return "Abnormal confidence spike detected"
        return "Prediction within expected range"

    print(behaviour_monitor(0.95))

Practical mitigation approaches include:

- Neural activation clustering and representation analysis

- Gradient-based anomaly detection

- Model provenance verification and cryptographic signing

- Secure dataset governance and validation pipelines

- Continuous runtime monitoring of deployed AI systems

Integrating these mechanisms into AI DevSecOps workflows can significantly enhance system resilience.
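As a rough illustration of representation analysis, the sketch below scores each sample by its squared projection onto the top singular direction of the centred activations and flags the highest scores. This is a simplified outlier score, not a full activation-clustering implementation. The activations are synthetic, and the strong shift applied to the "poisoned" group is an assumption chosen to make the effect obvious; in real models the separation is usually far weaker.

```python
import numpy as np

def outlier_scores(activations):
    """Squared projection of each centred activation onto the top singular vector."""
    centered = activations - activations.mean(axis=0)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return (centered @ vt[0]) ** 2

rng = np.random.default_rng(0)
# Synthetic penultimate-layer activations for one class (assumed 16 features).
clean = rng.normal(0.0, 1.0, size=(180, 16))
poisoned = rng.normal(0.0, 1.0, size=(20, 16)) + 6.0  # assumed shifted cluster
activations = np.vstack([clean, poisoned])

scores = outlier_scores(activations)
suspects = np.argsort(scores)[-20:]  # indices of the 20 highest scores
recovered = int(np.isin(suspects, np.arange(180, 200)).sum())
print(f"{recovered} of 20 poisoned samples flagged")
```

Flagged samples would then be inspected or removed before retraining. The number of samples to flag is itself a tuning decision that this sketch fixes at 20 for convenience.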

9. Key Challenges in Securing AI Ecosystems

Several systemic challenges hinder effective AI security implementation:

· There is no globally established framework for verifying AI models.

· Organisations depend increasingly on third-party pretrained base models.

· The decision-making processes of deep neural networks remain difficult to interpret.

· Open-source AI components spread rapidly across platforms.

These challenges show that cybersecurity professionals, AI engineers, and regulatory agencies must work together to create effective solutions (Secureframe, 2025).

10. Real-World Security Implications

Backdoors in AI systems create major operational risks, including:

· Manipulated financial decision systems and algorithmic trading failures

· Incorrect clinical decision support outcomes in healthcare environments (Joe et al., 2022)

· Safety hazards in autonomous navigation and robotics

· Information manipulation through compromised conversational AI agents

These dangers show that AI security must become a central concern for both technical teams and governmental bodies, not least because of the ethical dilemmas it raises.

11. Recommendations for Secure AI Deployment

Organisations should adopt proactive strategies to reduce AI supply chain vulnerabilities:

· Verify the origins of pretrained models through strict authentication procedures

· Develop comprehensive procedures that protect the entire model lifecycle

· Include adversarial testing throughout the AI development process

· Implement systems that continuously detect and assess unusual activity

· Improve security awareness among staff and establish responsible AI management procedures

These measures can help organisations establish reliable and robust AI systems (NIST, 2023).
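A basic form of origin verification is to compare a downloaded model file against a digest published by its maintainer. The sketch below uses only the Python standard library. The file name `model.bin` and its placeholder contents are hypothetical, and the demo computes the "published" digest locally, whereas in practice it would come from a trusted, separately delivered channel.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path

def sha256_of_file(path, chunk_size=1 << 20):
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_model_artifact(path, expected_sha256):
    """Return True only if the file matches the expected digest."""
    return hmac.compare_digest(sha256_of_file(path), expected_sha256.lower())

with tempfile.TemporaryDirectory() as tmp:
    model_path = Path(tmp) / "model.bin"  # hypothetical artifact name
    model_path.write_bytes(b"pretend these are model weights")
    published = sha256_of_file(model_path)  # stands in for a published digest
    ok_before = verify_model_artifact(model_path, published)

    # Simulate tampering after publication.
    model_path.write_bytes(b"pretend these are tampered weights")
    ok_after = verify_model_artifact(model_path, published)

print(ok_before, ok_after)
```

A digest check of this kind only detects modification after publication. It cannot reveal a backdoor that was already present when the digest was produced, so it complements the detection methods discussed earlier and does not replace them.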

12. Conclusion

Hidden backdoors in open-source AI models are among the most concealed yet dangerous threats in modern cybersecurity. Collaborative AI development accelerates technological progress, but it also creates new vulnerabilities that attackers can exploit. Operating intelligent systems safely in critical domains requires organisations to implement technical safeguards and governance frameworks, and to conduct ongoing security assessments of their AI supply chains.

References

Chen, X., Liu, C., Li, B., Lu, K. and Song, D. (2019) Targeted backdoor attacks on deep learning systems using data poisoning. Available at: https://arxiv.org/abs/1712.05526 (Accessed: 11 March 2026).

Gu, T., Dolan-Gavitt, B. and Garg, S. (2017) BadNets: Identifying vulnerabilities in the machine learning model supply chain. Available at: https://arxiv.org/abs/1708.06733 (Accessed: 11 March 2026).

Joe, B., Rahman, M., Xu, H. and Li, L. (2022) Backdoor attacks on mortality prediction models using electronic health records. Available at: https://medinform.jmir.org/2022/8/e38440 (Accessed: 11 March 2026).

Li, Y., Wu, B., Zhao, Y., Zhu, S. and Zhang, X. (2024) Backdoor learning: A comprehensive survey. Available at: https://arxiv.org/abs/2007.08745 (Accessed: 11 March 2026).

NIST (2023) Artificial intelligence risk management framework (AI RMF 1.0). Available at: https://www.nist.gov/itl/ai-risk-management-framework (Accessed: 10 March 2026).

Secureframe (2025) Supply chain attacks: recent examples, trends and how to prevent them. Available at: https://secureframe.com/blog/supply-chain-attacks (Accessed: 12 March 2026).

Zhou, Y., Wang, Y., Li, H. and Liu, X. (2025) A survey on backdoor threats in large language models. Available at: https://arxiv.org/abs/2502.05224 (Accessed: 11 March 2026).