Recently I came across a report about how engineers working with Mozilla tested Anthropic's AI model, Claude Opus 4.6, to find security vulnerabilities in Mozilla Firefox.

That immediately made me curious.

Could this actually work for bug bounty hunters too?

So I decided to try it myself.

The Experiment

Instead of targeting a simple web application, I picked something more interesting.

A public bug bounty program for an enterprise-grade digital asset infrastructure platform.

The platform is designed for:

- exchanges

- banks

- custodians

- trading desks

- hedge funds

It provides infrastructure for moving, storing, and issuing digital assets securely, using technologies like Intel SGX and MPC (Multi-Party Computation).

And here's the funny part.

I had absolutely no prior knowledge about this technology stack.

No background in MPC. No experience with SGX.

Normally that would mean spending days just trying to understand the architecture before even starting security research.

But this time I decided to approach it differently.

Step 1 — Clone the Repository

The platform had an open-source repository.

So I simply:

- Cloned the repo

- Opened it in VS Code with GitHub Copilot

- Passed the repository to Claude Opus 4.6

That was it.

No complicated setup.

Just the source code.

Step 2 — The Prompt

Instead of asking something vague like "find bugs in this repo", I gave Claude a structured prompt that told it to act like a professional security researcher performing a bug-bounty-style audit.

I specifically told it to focus on realistic, exploitable vulnerabilities, not theoretical issues.

I also asked it to include:

- root cause

- exploitation scenario

- step-by-step proof of concept

- security impact

- suggested fix

Basically the same structure you would expect in a proper bug bounty report.

Then I let it analyze the repository.
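
The exact prompt isn't reproduced in this post, but a minimal sketch of how such a structured prompt could be assembled looks like this. The wording, the `build_audit_prompt` helper, and the `example-repo` name are all assumptions for illustration; only the five requested sections come from the list above.

```python
# Sections requested for every finding (taken from the list in this post).
REPORT_SECTIONS = [
    "Root cause",
    "Exploitation scenario",
    "Step-by-step proof of concept",
    "Security impact",
    "Suggested fix",
]


def build_audit_prompt(repo_name: str) -> str:
    """Assemble a structured audit prompt. The wording is hypothetical."""
    lines = [
        "You are a professional security researcher performing a "
        f'bug bounty style audit of the repository "{repo_name}".',
        "Report only realistic, exploitable vulnerabilities, not theoretical issues.",
        "For each finding, include:",
    ]
    # Number the required sections so the model's answer is easy to check.
    lines += [f"{i}. {name}" for i, name in enumerate(REPORT_SECTIONS, 1)]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_audit_prompt("example-repo"))
```

Keeping the required sections in one list makes it easy to check later that every finding the model returns actually covers all of them.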

Step 3 — The Findings

After scanning the codebase, Claude returned several potential vulnerabilities.

Now, if you've used AI for security research before, you know the problem:

AI loves false positives.

So I didn't trust the results immediately.

Instead I asked Claude something very specific:

"Provide detailed reproduction steps assuming I have no knowledge of this system."

It generated:

- environment setup steps

- required conditions

- commands to run

- payload examples

- expected responses
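
One way to keep that manual check honest is to write down each command next to the response the model predicted, then compare against what you actually observe. The sketch below is a hypothetical helper, not part of any tool used in this experiment: `ReproStep`, `failed_steps`, and the canned runner are illustrative names, and a real runner would execute commands only in a test environment you are authorized to use.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ReproStep:
    command: str   # command the model told us to run
    expected: str  # text the model said should appear in the response


def failed_steps(steps: List[ReproStep], run: Callable[[str], str]) -> List[ReproStep]:
    """Return the steps whose observed output lacks the expected text."""
    return [s for s in steps if s.expected not in run(s.command)]


if __name__ == "__main__":
    # Stand-in runner with canned outputs (placeholder commands and responses).
    canned = {"step-1": "200 OK", "step-2": "403 Forbidden"}
    steps = [
        ReproStep("step-1", "200 OK"),
        ReproStep("step-2", "200 OK"),  # a claim that does not hold
    ]
    for step in failed_steps(steps, lambda cmd: canned.get(cmd, "")):
        print("not reproduced:", step.command)
```

Any step that comes back from `failed_steps` is a sign the finding may be a false positive and needs a closer look before it goes into a report.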

So I followed the instructions.

And surprisingly…

Everything worked.

The vulnerabilities were reproducible exactly as described.

That was the moment I realized this experiment might actually work.

Step 4 — Turning It Into Bug Bounty Reports

After confirming the vulnerabilities, I asked Claude to convert the findings into proper bug bounty reports.

It generated structured reports containing:

- vulnerability description

- root cause analysis

- reproduction steps

- impact assessment

- remediation recommendations
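
The same structure can be captured in a small data class, which makes it easy to spot an empty section before submitting. This is only a sketch: the `Report` class and its helpers are hypothetical, and the field names simply mirror the list above rather than any bug bounty platform's real submission format.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass
class Report:
    vulnerability_description: str
    root_cause_analysis: str
    reproduction_steps: str
    impact_assessment: str
    remediation_recommendations: str


def missing_sections(report: Report) -> List[str]:
    """Names of sections that are empty or whitespace only."""
    return [f.name for f in fields(report) if not getattr(report, f.name).strip()]


def render(report: Report) -> str:
    """Render the report as plain text, one titled block per section."""
    parts = []
    for f in fields(report):
        title = f.name.replace("_", " ").capitalize()
        parts.append(f"{title}\n{getattr(report, f.name).strip()}")
    return "\n\n".join(parts)
```

Calling `missing_sections` before `render` is a cheap guard against submitting a report where the model skipped a section.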

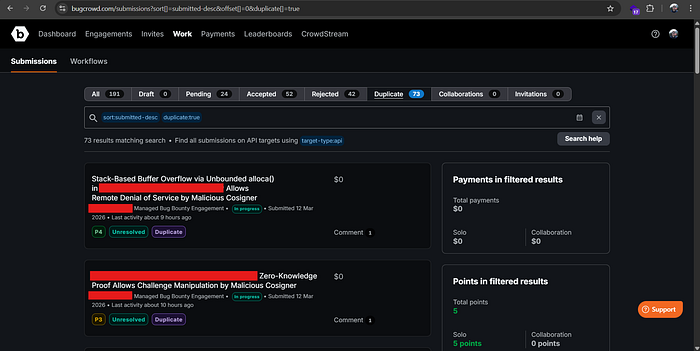

I submitted two reports to the program.

But honestly, I still wasn't very confident.

AI tools often make issues sound more serious than they really are.

The Result

A few days later the triage results came in.

Both vulnerabilities were:

✅ Accepted as valid

Severity:

- P3

- P4

Unfortunately, both were marked as duplicates, meaning someone had already reported them.

But that wasn't the interesting part.

The interesting part was this:

The vulnerabilities were real.

What This Shows

This small experiment changed how I think about AI in security research.

Even without prior knowledge of the system's architecture, AI helped with:

- navigating a large codebase

- identifying suspicious patterns

- reasoning about exploitability

- generating reproducible PoCs

- writing structured reports

That's a lot of heavy lifting.

The Future of AI-Assisted Bug Hunting

I don't think AI will replace security researchers.

But it will definitely change how we work.

Instead of spending hours just trying to understand a new codebase, AI can help:

- map entry points

- trace user-controlled data

- identify risky code paths

- generate test cases faster
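
To make "identify risky code paths" concrete, here is a deliberately crude sketch: a regex pass that flags lines calling well-known dangerous Python functions. It is far simpler than what a model does when it reasons about a codebase, and the pattern list is a hypothetical starting point that assumes Python source. Real patterns depend on the target's language and framework.

```python
import re
from typing import Dict, List, Tuple

# Hypothetical starter patterns for Python code; extend per target.
RISKY_PATTERNS: Dict[str, str] = {
    "shell command": r"\b(os\.system|subprocess\.\w+)\s*\(",
    "dynamic eval": r"\b(eval|exec)\s*\(",
    "unsafe deserialization": r"\bpickle\.loads?\s*\(",
}


def flag_risky_lines(source: str) -> List[Tuple[int, str, str]]:
    """Return (line number, label, stripped line) for each pattern match."""
    hits = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for label, pattern in RISKY_PATTERNS.items():
            if re.search(pattern, line):
                hits.append((lineno, label, line.strip()))
    return hits


if __name__ == "__main__":
    sample = "import os\nos.system(cmd)\nx = eval(user_input)\n"
    for lineno, label, text in flag_risky_lines(sample):
        print(f"{lineno}: {label}: {text}")
```

A match only says "look here". Whether user-controlled data can actually reach that line is the part that still needs tracing, by the researcher or with the model's help.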

The researcher still needs to:

- validate the findings

- reproduce the vulnerability

- understand the real impact

- report it responsibly

But the speed of discovery could increase dramatically.

Final Thoughts

Seeing AI models like Claude Opus 4.6 help uncover vulnerabilities in massive projects like Mozilla Firefox already hinted at what was possible.

Running this experiment myself made that potential feel very real.

Even though my findings were duplicates, the experience showed me something important:

AI-assisted vulnerability research is going to become a standard workflow for bug bounty hunters.

And we're probably still at the very beginning.