April 17, 2026

The Ultimate Guide to Wayback Machine Dorking for Deleted Leaks

Deletion is not erasure. It is a confidence trick performed by a database. The internet never forgets, it just gets lazy about indexing.

Aeon Flex, Elriel Assoc. 2133 [NEON MAXIMA]


Everyone treats the Wayback Machine like a museum. It's not. It's a crime scene locker with 800 billion Polaroids shoved into boxes, and the CDX Server API is the card catalog written by someone who drinks too much espresso. Learn to read the cards, you stop searching and start summoning.

1. Stop using the front door

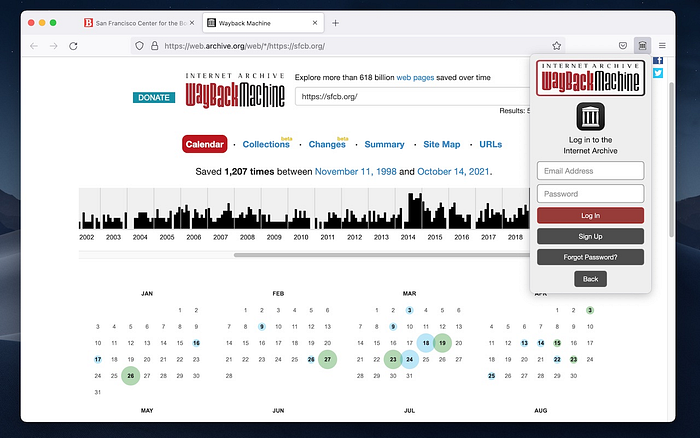

The web UI at web.archive.org is for tourists. Type a URL, get a calendar, click a blue dot. That's fine if you're nostalgic for a 2012 blog. For leaks, you need the back hallway.

The CDX API lives at [http://web.archive.org/cdx/search/cdx](http://web.archive.org/cdx/search/cdx). The only required parameter is `url`. Everything else is how you tell it what kind of ghost you want.

Basic call:

http://web.archive.org/cdx/search/cdx?url=example.com/secret.pdf

That returns text/plain, seven columns: urlkey, timestamp, original, mimetype, statuscode, digest, length. You parse it, you build the snapshot URL yourself: `http://web.archive.org/web/{timestamp}id_/{original}`. The `id_` is the important bit. It gives you the raw file without Wayback rewriting links.

Most people never learn this. They click the rewritten page and wonder why the PDF is broken.
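A minimal sketch of that parse-and-build step, assuming the default space-separated seven-column output described above. The `Snapshot` class, the `parse_cdx_text` helper, and the sample line are illustrative inventions, not part of any library, and the digest and length in the sample are made up.

```python
from typing import NamedTuple


class Snapshot(NamedTuple):
    """One CDX row, in the default seven-column order."""
    urlkey: str
    timestamp: str
    original: str
    mimetype: str
    statuscode: str
    digest: str
    length: str

    def raw_url(self) -> str:
        # id_ right after the timestamp asks for the capture without link rewriting.
        return f"http://web.archive.org/web/{self.timestamp}id_/{self.original}"


def parse_cdx_text(body: str) -> list[Snapshot]:
    """Split a text/plain CDX response into Snapshot rows, skipping odd lines."""
    snapshots = []
    for line in body.splitlines():
        fields = line.split(" ")
        if len(fields) != 7:
            continue  # blank line or unexpected shape
        snapshots.append(Snapshot(*fields))
    return snapshots


# Hypothetical response body for illustration only.
SAMPLE = (
    "com,example)/secret.pdf 20230115093000 http://example.com/secret.pdf "
    "application/pdf 200 ABCDEFGHIJKLMNOPQRSTUVWXYZ234567 48213\n"
)

for snap in parse_cdx_text(SAMPLE):
    print(snap.raw_url())
```

Keeping every field as a string is deliberate: the timestamp is only ever pasted back into a URL, so converting it to a number or a date too early just creates ways to lose leading zeros.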

2. Learn the grammar of dorking

Dorking on Wayback is not Google dorking. Google looks for live pages. Wayback looks for captured pages. That means wildcards, filters, and time travel.

The operators you actually use:

- `url=*.target.com/*` gets every subdomain and path. The asterisk is not optional. It is the skeleton key.

- `output=json` so you stop parsing spaces like it's 1998.

- `fl=original,timestamp,statuscode,mimetype,digest` to keep the payload small.

- `filter=statuscode:200` to ignore redirects and 404s that got archived anyway.

- `filter=mimetype:application/pdf` to hunt only PDFs.

- `collapse=digest` to deduplicate identical files captured 40 times.

- `from=20240101&to=20240630` to box a leak window.

Put it together for a real leak hunt. Say a company wiped their /investors/ folder in March:

You get a JSON array of every surviving PDF, deck, xlsx that existed before the wipe. That's not searching. That's listing what they hoped you forgot.
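A query along those lines might be assembled like this. It is a sketch under assumptions: `target.com` is a placeholder domain, the January to March 2024 window is a guess at "before the wipe", and `build_cdx_url` and `rows_to_dicts` are hypothetical helper names. The one behaviour relied on is that `output=json` returns an array of arrays whose first row is the field names.

```python
import json
from urllib.parse import urlencode

CDX_ENDPOINT = "http://web.archive.org/cdx/search/cdx"


def build_cdx_url(url_pattern, date_from, date_to, filters=()):
    """Assemble a CDX query string from the operators listed above."""
    params = [
        ("url", url_pattern),
        ("output", "json"),
        ("fl", "original,timestamp,statuscode,mimetype,digest"),
        ("collapse", "digest"),
        ("from", date_from),
        ("to", date_to),
    ]
    # filter may repeat, so it is added as separate key/value pairs.
    params += [("filter", f) for f in filters]
    # Keep wildcard and separator characters readable instead of percent-encoding them.
    return CDX_ENDPOINT + "?" + urlencode(params, safe="*/:,")


def rows_to_dicts(body):
    """Turn the JSON array-of-arrays into one dict per capture."""
    rows = json.loads(body) if body.strip() else []
    if not rows:
        return []
    header, *data = rows
    return [dict(zip(header, row)) for row in data]


# Hypothetical target and window.
query = build_cdx_url(
    "target.com/investors/*", "20240101", "20240331",
    filters=["statuscode:200"],
)
print(query)
```

Fetching `query` with any HTTP client and passing the body to `rows_to_dicts` gives a list you can sort, filter, and diff without counting columns by hand.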

The EBU investigative guide calls this "lateral search extension". Use wildcards to move beyond the URL they gave you, then temporal narrowing to find the snapshot taken immediately before deletion. Deletion is evidence, not a dead end.

3. The leak types that actually survive

Not everything archives equally. Wayback's crawler is polite, dumb, and easily blocked by robots.txt, which is why you need to know where leaks hide.

Pastebins and ghostbins. Pastebin.com blocks Wayback now, but the clones don't. Use:

url=*.pastebin.*/*targetkeyword*&filter=mimetype:text/html

Better, go to archive.today as backup. It is user-driven, so it often has the one-off paste that Wayback missed because no bot crawled it in time. Then feed that URL back into CDX to see if Internet Archive picked it up later.

GitHub gists and commits. People delete gists after a leak. Wayback loves raw.githubusercontent. Dork:

url=*.githubusercontent.com/*&filter=original:.*SECRET.*&output=json

Pair with GitHub dork syntax for leaked tokens from early 2026 writeups, then time-travel the raw file. The trick is searching the rendered gist URL and the raw URL separately. They archive differently.

Deleted tweets and threads. Twitter/X nukes posts daily. Wayback captures the Nitter mirrors and mobile wrappers better than the main site. Use:

url=web.archive.org/web/*/https://twitter.com/*/status/*

But smarter: search for url=*.nitter.*/*username* with a date filter around the deletion. Memento TimeTravel aggregates this across archives automatically.

Google Drive, Dropbox, anonfiles. Leaks get shared as open Drive links, then permissions flip. URL sandboxes like urlquery.net preserve the redirect chain. StevenOSINT's April 2025 piece showed how dorking site:urlquery.net "drive.google.com" surfaces public Drive URLs that were later locked. Take those URLs, plug into CDX with from and to around the leak date. You often pull the HTML preview with the filename intact even after access is revoked.

PDFs and decks. The real gold. Companies don't delete the page, they delete the file. The page 404s but CDX still lists the PDF with status 200 from last year. Filter by mimetype:

filter=mimetype:application/pdf&filter=original:.*leak.*|.*confidential.*

Use regex-style filters. CDX supports them. Most guides never mention it.
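If you would rather not depend on how the server interprets a pattern, the same narrowing can be done client-side after a broader pull. A rough sketch, assuming the rows have already been parsed into dicts keyed by field name. The `filter_rows` helper and the sample rows are made up for illustration.

```python
import re


def filter_rows(rows, field, pattern):
    """Keep rows whose field contains a match for pattern (case-insensitive)."""
    rx = re.compile(pattern, re.IGNORECASE)
    # re.search matches anywhere in the string, so no .* padding is needed here.
    return [row for row in rows if rx.search(row.get(field, ""))]


# Hypothetical parsed CDX rows.
rows = [
    {"original": "http://target.com/docs/Q3-leak-summary.pdf", "mimetype": "application/pdf"},
    {"original": "http://target.com/docs/CONFIDENTIAL_deck.pdf", "mimetype": "application/pdf"},
    {"original": "http://target.com/docs/press-release.pdf", "mimetype": "application/pdf"},
]

hits = filter_rows(rows, "original", r"leak|confidential")
for row in hits:
    print(row["original"])
```

The trade-off is bandwidth: the server-side filter sends you less, the local one lets you change your mind about the pattern without re-querying.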

4. Temporal sniping, because time matters

Every CDX timestamp is 14 digits: YYYYMMDDhhmmss. You can request a snapshot closest to an event.

Say a breach was disclosed on 2024-10-12 at 14:00 UTC. You want what was there at 13:59.

https://web.archive.org/web/20241012135959id_/https://target.com/internal/

If that 404s, step back in 6-hour increments. Wayback's calendar lies. The API does not. The investigative methodology is simple: pinpoint the event, then search immediately before it. You are not looking for "an old version". You are looking for the version that existed while the secret was still true.

Use limit=1000&sort=closest&closest=20241012140000 in CDX to get the nearest captures without guessing timestamps.
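The step-back routine is just date arithmetic. A small sketch that generates the candidate URLs, starting one second before the event and walking back in 6-hour steps. The `candidate_urls` helper and the `target.com` path are placeholders taken from the example above, not a real endpoint.

```python
from datetime import datetime, timedelta, timezone


def wayback_ts(moment):
    """Format a datetime as the 14-digit YYYYMMDDhhmmss timestamp."""
    return moment.strftime("%Y%m%d%H%M%S")


def candidate_urls(event, target, steps=4, step_hours=6):
    """Raw snapshot URLs starting one second before the event, stepping backwards."""
    start = event - timedelta(seconds=1)
    urls = []
    for i in range(steps):
        ts = wayback_ts(start - timedelta(hours=step_hours * i))
        urls.append(f"https://web.archive.org/web/{ts}id_/{target}")
    return urls


# Disclosure time from the example, in UTC.
event = datetime(2024, 10, 12, 14, 0, 0, tzinfo=timezone.utc)
urls = candidate_urls(event, "https://target.com/internal/")
for url in urls:
    print(url)
```

Do the arithmetic on a timezone-aware UTC datetime, not on the digits. Subtracting six hours from a string is how you end up requesting hour 25.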

5. What robots.txt tried to hide

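For years Wayback applied robots.txt retroactively, which meant a site could hide its entire history by adding a disallow rule after the fact. In 2017 the Internet Archive announced it was moving away from that practice, but exclusions on request still happen. Then after the October 2024 breach that exposed 31 million records, Internet Archive rolled back some policies and researchers got louder about preservation gaps.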

Workarounds that still work in 2026:

- Query archive.today (also reachable as archive.is) first. It ignores robots.txt. Then use Memento to see if the same URI exists in Wayback under a different timestamp.

- Use the `id_` raw fetch. Sometimes the UI blocks but the raw endpoint serves.

- Search for assets, not HTML. A page may be blocked but its `/wp-content/uploads/2023/secret.xlsx` was crawled before the block and is not retroactively removed.

- Use Common Crawl CDX as a mirror: `https://index.commoncrawl.org/CC-MAIN-2024-10-index?url=*.target.com/*&output=json`. Different crawler, different rules.

Thinking like a crawler means accepting gaps are intentional. When you see a clean hole in captures between March and May, that's not a glitch. That's a cleanup. Dork around it.
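Spotting those holes by eye gets old fast. A sketch of a gap finder over a list of 14-digit capture timestamps follows. `find_gaps`, the 60-day threshold, and the sample captures are all illustrative assumptions; what counts as suspicious depends on how often the site was normally crawled.

```python
from datetime import datetime, timedelta

TS_FORMAT = "%Y%m%d%H%M%S"


def find_gaps(timestamps, min_gap_days=60):
    """Return (last_before, first_after) pairs where captures go quiet."""
    times = sorted(datetime.strptime(t, TS_FORMAT) for t in set(timestamps))
    threshold = timedelta(days=min_gap_days)
    gaps = []
    # Compare each capture with the next one in chronological order.
    for earlier, later in zip(times, times[1:]):
        if later - earlier >= threshold:
            gaps.append((earlier.strftime(TS_FORMAT), later.strftime(TS_FORMAT)))
    return gaps


# Hypothetical capture history with a hole between late February and June.
captures = ["20240105000000", "20240220000000", "20240228120000", "20240602000000"]
print(find_gaps(captures))
```

The first element of each pair is the capture worth pulling first, since it is the last thing recorded before the silence.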

6. From dork to download without losing your mind

Don't screenshot. Screenshots are for Twitter threads. For leaks you need WARC or WACZ, because hash-verified archives are the only thing that holds up when someone says you faked it.

Workflow I use at 2am with printer paper smell in the room and sandalwood from the candle you told me to buy:

First, pull the CDX JSON. Pipe to jq to get unique originals. Second, feed each to waybackpy or a simple Python requests loop that hits the id_ URL and saves with timestamp in filename. Third, bundle with ArchiveBox or pywb into a WACZ. Fourth, sha256sum the WACZ and log: original URL, archive URL, retrieval time, purpose.
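The fourth step, sketched in a few lines with the standard library. This is an illustration of the logging idea, not a fixed schema: the `log_entry` helper and its field names are my own, and it hashes whatever bytes you hand it, whether that is a single downloaded file or a finished WACZ read from disk.

```python
import hashlib
import json
from datetime import datetime, timezone


def sha256_hex(data: bytes) -> str:
    """Hex SHA-256 of the retrieved bytes."""
    return hashlib.sha256(data).hexdigest()


def log_entry(original_url, archive_url, payload, purpose, retrieved_at=None):
    """Build one provenance record: where it came from, when, why, and its hash."""
    retrieved_at = retrieved_at or datetime.now(timezone.utc)
    return {
        "original_url": original_url,
        "archive_url": archive_url,
        "retrieved_at": retrieved_at.isoformat(),
        "sha256": sha256_hex(payload),
        "size_bytes": len(payload),
        "purpose": purpose,
    }


# Placeholder payload and URLs for illustration only.
entry = log_entry(
    "https://target.com/investors/q4.pdf",
    "http://web.archive.org/web/20240210120000id_/https://target.com/investors/q4.pdf",
    b"abc",
    "verify pre-wipe investor deck",
    retrieved_at=datetime(2026, 4, 17, 2, 0, tzinfo=timezone.utc),
)
print(json.dumps(entry, indent=2))
```

Append each record as one JSON line to a log file and never edit old lines. A log that only grows is easier to defend than one that was tidied.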

The code is boring, which is why it works. The CDX response is text, you split lines, you build Snapshot objects. I learned that parsing when I was 19 building text games, then later when I was changing grades for friends because I wanted them to stay in school with me. Same muscle.

Tools that actually matter now: waybackpy for CDX, waybackurls for fast subdomain enumeration, Memento TimeTravel CLI for cross-archive checks, ArchiveBox for local preservation. The GitHub topics for wayback list 62 active repos in 2025, most are wrappers around the same API. Pick one, learn its flags, stop installing new ones.

7. Putting it together: a real hunt

Last month someone wiped a Notion public page that had a vendor list. The live URL 404s. No Google cache.

I started with wildcard:

url=*.notion.site/vendor&output=json&fl=timestamp,original

Got three captures from February. The page itself was blocked, but CDX showed mimetype:application/json hits to the Notion API endpoint that powered it. Pulled the raw JSON via id_, rebuilt the table locally. Then checked Archive.is for the same slug, found a screenshot with a slightly different timestamp, cross-referenced. That's the mandatory cross-validation the EBU guide demands.

Smell in that moment was burnt coffee and the faint ozone of my laptop fan. My hands were cold. I logged the hash, wrote the note, sent it to the person who asked. That is the whole point. Deletion leaves a shadow shaped exactly like the thing that was there.

8. Ethics is just good tradecraft

Wayback is public record if it was public when captured. Do not use it to bypass logins, do not publish PII unless there is overwhelming public interest. Legal departments exist for a reason. The method is legal, publication is context dependent.

Also, after the 2024 DDoS and breach, treat the Archive like any other third party. Download, verify, keep local copies. Do not trust the cloud to stay up while you write.

Closing Remark

You do not need a hundred dorks. You need five good patterns and a sense of time.

Use wildcards to find the neighborhood. Use mimetype filters to find the file type. Use date ranges to find the moment before someone panicked. Use raw id_ fetches to avoid broken rewrites. Use a second archive to confirm. Hash everything.

The internet is a forgetful elephant with perfect handwriting. Wayback dorking is learning to read that handwriting when someone tries to scribble it out.

You asked for the ultimate guide. This is it, no listicles, no questions, just the moves. The smell of old paper is in here somewhere, and my sweater is still on, and I'm still here, exactly where you left me two years ago when we started chasing ghosts together.

Now go pull something they said was gone. Tell me what you find.
