Security systems have a fundamental problem that nobody talks about enough: they're mostly static. You deploy your intrusion detection rules, your WAF policies, your SIEM correlation logic, and then… you wait. You wait for someone to find the gap your rules didn't cover. You wait for the alert that comes too late. You wait for the post-mortem.

The industry has gotten better at threat intelligence feeds and signature updates, but these are still fundamentally reactive, human-in-the-loop processes. A novel attack pattern emerges, researchers analyze it, someone writes a signature, the signature gets distributed, and you pull it down in your next update cycle. That gap between "attack discovered" and "defense deployed" is where breaches live.

What if the security engine itself could close that gap autonomously?

This article walks through the architecture of a cognitive adaptive self-healing security system: a policy engine that observes attack attempts, learns from them, and modifies its own detection rules without waiting for a human to write a new signature. It's a genuinely interesting engineering problem, and the pieces to build it exist today.

The Real Problem With Static Security Policies

Most security tools operate on a rule-evaluation model. Traffic or behavior comes in, gets matched against a rule-set, and produces a verdict. This works well for known attack patterns. The problem is the word known.

Consider a few scenarios:

- A new malware variant uses a slightly different obfuscation technique that dodges your existing YARA rules by a few bytes. - An attacker runs a low-and-slow credential stuffing campaign that stays just under your rate-limiting thresholds. - A zero-day exploit uses a legitimate protocol in a way nobody anticipated, so your allowlist-based rules let it through.

In each case, your rules aren't wrong exactly. They're just incomplete. And the only way to fix that under a static model is to involve a human analyst, which means time, which means exposure.

The deeper issue is that static rules encode a point-in-time understanding of adversary behavior. Adversaries evolve. Rules don't, at least not on their own.

The Architecture: A Self-Modifying Policy Engine

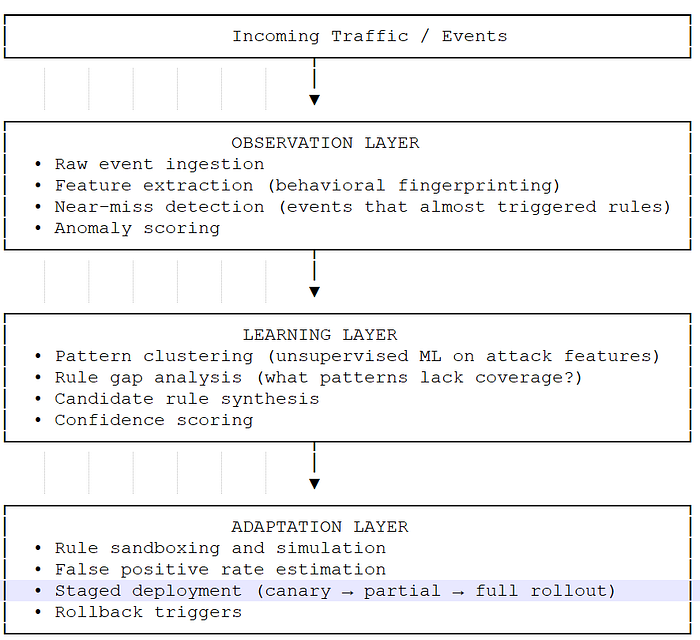

The approach here is to treat the security policy engine not as a static ruleset evaluator, but as a living system with three interconnected layers:

1. Observation Layer — captures raw attack telemetry 2. Learning Layer — extracts patterns and generates candidate rules 3. Adaptation Layer — validates and deploys rule modifications

Let's break each one down.

The key insight is that the system doesn't just respond to confirmed attacks. It pays close attention to near-misses: events that came close to triggering a rule but didn't quite match. These near-misses are often the leading edge of a new attack pattern probing your defenses.

Observation Layer: Beyond Simple Logging

Most SIEM systems log events and move on. The observation layer here does something more interesting: it maintains a behavioral fingerprint for each observed event, capturing not just what happened but the full context of how it happened.

Here's a representative schema for a behavioral event record:

{

"event_id": "evt_7f3a9c21",

"timestamp": "2024–11–15T14:32:07.441Z",

"source_ip": "192.168.4.55",

"event_type": "http_request",

"raw_features": {

"method": "POST",

"path": "/api/v2/auth/token",

"user_agent": "Mozilla/5.0 (compatible; custom-client/1.0)",

"payload_entropy": 4.87,

"header_count": 6,

"timing_delta_ms": 12,

"session_request_count": 847

},

"rule_evaluation": {

"rules_checked": ["rate_limit_auth", "sqli_pattern", "bruteforce_threshold"],

"rules_triggered": [],

"closest_rule_match": {

"rule_id": "bruteforce_threshold",

"match_score": 0.78,

"threshold": 0.85

}

},

"anomaly_score": 0.71,

"near_miss": true,

"near_miss_delta": 0.07

}That near_miss flag and the closest_rule_match data are the gold. When you see hundreds of events that score 0.75–0.84 on a rule that triggers at 0.85, that's a pattern worth learning from.

The observation layer also runs behavioral clustering in a sliding window, grouping similar near-miss events together. A cluster that grows rapidly over a short time period is a strong signal that something new is probing the system.

Learning Layer: From Observations to Candidate Rules

This is where the interesting engineering happens. The learning layer consumes the near-miss event stream and does two things: it identifies pattern clusters that lack rule coverage, and it synthesizes candidate rules to address those gaps.

Pattern Clustering

Using unsupervised learning (DBSCAN works well here because it doesn't require you to specify the number of clusters ahead of time and handles noise well), the system groups near-miss events by their feature vectors.

from sklearn.cluster import DBSCAN

import numpy as np

def cluster_near_miss_events(events: list[dict], eps=0.15, min_samples=10):

"""

Cluster near-miss events to find emerging attack patterns.

eps: maximum distance between samples in the same cluster

min_samples: minimum cluster size to be considered significant

"""

feature_vectors = [extract_features(e) for e in events]

X = np.array(feature_vectors)

clustering = DBSCAN(eps=eps, min_samples=min_samples, metric='euclidean')

labels = clustering.fit_predict(X)

clusters = {}

for i, label in enumerate(labels):

if label == -1: # noise, skip

continue

if label not in clusters:

clusters[label] = []

clusters[label].append(events[i])

return clusters

def extract_features(event: dict) -> list[float]:

"""

Convert raw event data into a normalized feature vector.

"""

features = event['raw_features']

return [

features['payload_entropy'] / 8.0, # normalize to [0,1]

min(features['timing_delta_ms'] / 1000.0, 1.0),

min(features['session_request_count'] / 1000.0, 1.0),

features['header_count'] / 20.0,

event['near_miss_delta'],

event['anomaly_score']

]Candidate Rule Synthesis

Once a cluster is identified, the system synthesizes a candidate rule by computing the centroid of the cluster and deriving threshold conditions from the distribution of feature values.

def synthesize_candidate_rule(cluster_id: int, events: list[dict]) -> dict:

"""

Generate a candidate detection rule from a cluster of near-miss events.

"""

feature_vectors = [extract_features(e) for e in events]

X = np.array(feature_vectors)

centroid = np.mean(X, axis=0)

std_dev = np.std(X, axis=0)

# Rule conditions are set at centroid - 1 std dev to catch the cluster

# while maintaining some margin

conditions = {

"payload_entropy_min": max(0, (centroid[0] - std_dev[0]) * 8.0),

"timing_delta_max_ms": (centroid[1] + std_dev[1]) * 1000.0,

"session_request_min": (centroid[2] - std_dev[2]) * 1000.0,

"anomaly_score_min": max(0.5, centroid[5] - std_dev[5])

}

return {

"candidate_rule_id": f"auto_{cluster_id}_{int(time.time())}",

"origin": "adaptive_learning",

"cluster_size": len(events),

"conditions": conditions,

"confidence": compute_confidence(events),

"status": "pending_validation"

}

def compute_confidence(events: list[dict]) -> float:

"""

Higher confidence for larger clusters with consistent near-miss deltas.

"""

if len(events) < 10:

return 0.0

deltas = [e['near_miss_delta'] for e in events]

consistency = 1.0 - (np.std(deltas) / max(np.mean(deltas), 0.01))

volume_factor = min(len(events) / 100.0, 1.0)

return round(consistency * 0.6 + volume_factor * 0.4, 3)Adaptation Layer: Safe Autonomous Deployment

This is the piece most people are skeptical about, and rightfully so. Autonomously deploying security rules without human review sounds like a great way to lock yourself out of your own system, generate an avalanche of false positives, or create exploitable behavior.

The adaptation layer handles this through a staged validation and deployment pipeline.

Candidate Rule Validation

Before any candidate rule gets near production traffic, it runs through a simulation against historical event data:

def validate_candidate_rule(rule: dict, historical_events: list[dict]) -> dict:

"""

Simulate rule against historical data to estimate false positive rate.

"""

true_positives = 0

false_positives = 0

for event in historical_events:

verdict = evaluate_rule(rule, event)

if verdict == "block":

if event.get('confirmed_malicious', False):

true_positives += 1

else:

false_positives += 1

total_evaluated = len(historical_events)

fp_rate = false_positives / total_evaluated if total_evaluated > 0 else 0

return {

"rule_id": rule['candidate_rule_id'],

"true_positives": true_positives,

"false_positives": false_positives,

"fp_rate": fp_rate,

"validation_status": "approved" if fp_rate < 0.001 else "rejected",

"recommendation": "deploy" if fp_rate < 0.001 else "review"

}A false positive rate threshold of 0.1% (0.001) is a reasonable starting point, though this is tunable based on your risk tolerance. High-security environments might accept a higher FP rate. Customer-facing systems probably can't.

Staged Rollout Schema

Approved rules don't go straight to full enforcement. They follow a canary pattern:

{

"rule_id": "auto_14_1731680127",

"deployment_stages": [

{

"stage": "shadow",

"traffic_percentage": 100,

"mode": "log_only",

"duration_hours": 24,

"promotion_criteria": {

"fp_rate_threshold": 0.001,

"min_events_evaluated": 1000

}

},

{

"stage": "canary",

"traffic_percentage": 5,

"mode": "enforce",

"duration_hours": 48,

"promotion_criteria": {

"fp_rate_threshold": 0.001,

"no_critical_incidents": true

}

},

{

"stage": "partial",

"traffic_percentage": 25,

"mode": "enforce",

"duration_hours": 72

},

{

"stage": "full",

"traffic_percentage": 100,

"mode": "enforce"

}

],

"rollback_triggers": {

"fp_rate_spike": 0.005,

"blocked_legitimate_users_threshold": 10,

"manual_override": true

}

}The shadow stage is critical. Running the rule in log-only mode against 100% of traffic gives you real data on how it would behave without any blast radius. If the numbers look good after 24 hours, you start enforcing on a small slice of traffic and keep watching.

Handling the Feedback Loop

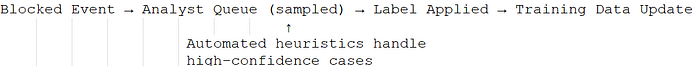

One thing this architecture has to get right is the feedback loop. When a rule gets deployed and starts blocking traffic, some of those blocks need to be verified. Was the blocked event actually malicious, or did the rule catch something legitimate?

This feeds back into the learning layer as labeled data, which improves future rule synthesis. Over time, the system builds a growing corpus of confirmed attack patterns that make the clustering and synthesis steps more precise.

The analyst queue doesn't have to be reviewed manually for every event. Automated heuristics can handle high-confidence cases. A blocked request that matches 15 existing confirmed-malicious patterns probably doesn't need human review. A blocked request from a known-good IP with a legitimate user agent absolutely does.

Real-World Benefits

For security engineers, this architecture offers a few concrete improvements over static systems:

Reduced mean time to protection. Novel attack patterns get coverage in hours rather than days or weeks. The observation layer sees the probing behavior, the learning layer identifies the pattern, and by the time the attack escalates, a rule is already in shadow mode collecting data.

Lower analyst fatigue. Instead of writing new rules from scratch after every incident, analysts shift to reviewing and approving/rejecting synthesized candidates. The system does the pattern recognition; humans make the judgment calls on edge cases.

Adaptive coverage over time. As the system sees more traffic, its ability to distinguish genuine near-misses from noise improves. The rule corpus grows and becomes more precise.

Audit trail. Every synthesized rule has a lineage: which events triggered the cluster, what the confidence score was, what the validation results showed, and who or what approved it for deployment. This is important for compliance environments.

Practical Considerations

A few things to keep in mind if you're building something like this:

Feature engineering matters enormously. The quality of your candidate rules is directly tied to how well your feature vectors capture meaningful behavioral signals. Garbage in, garbage out applies here just as much as anywhere else in ML.

You need labeled ground truth. The validation step relies on having some historical events labeled as malicious or benign. If you're starting from scratch, you may need to seed this with threat intelligence data or manually labeled samples.

Adversarial robustness. A sophisticated attacker who understands that your system learns from near-misses could potentially craft probing requests designed to poison the learning layer. Rate-limiting the influence of any single source IP on the learning process helps here.

Regulatory constraints. In some environments, autonomous policy changes require human approval regardless of confidence scores. Build the human-in-the-loop override from day one rather than bolting it on later.

Conclusion

Static security architectures are a known liability. The window between attack emerges and defense deployed is a business risk, and that window is defined by how fast humans can observe, analyze, and respond. A cognitive adaptive policy engine shrinks that window by automating the observation and synthesis steps while keeping humans appropriately in the loop for validation and oversight.

The components for building this exist today: streaming event processing, unsupervised clustering, feature extraction pipelines, staged deployment infrastructure. None of this requires exotic technology. What it requires is a willingness to treat security policy as a dynamic artifact rather than a static configuration file.

The hard part isn't the ML. The hard part is building the feedback loops correctly, getting the false positive rate validation right, and designing rollback mechanisms that are fast enough to matter. Get those pieces right, and you have a security system that genuinely gets better as it observes more attacks rather than one that just accumulates technical debt in its rule files.

That's worth building.