They are embedded in documentation websites, developer portals, customer support systems, and even internal tools. Most companies deploy them to make it easier for users to find information quickly.

But from a security researcher's perspective, AI assistants are something else entirely.

They are a new attack surface.

Recently, while exploring a developer documentation site for a blockchain project (which I'll refer to as XYZ), I discovered how a few simple questions to an AI assistant revealed something the system probably shouldn't have shared.

That discovery eventually turned into a bug bounty reward.

Why I Started Testing AI Assistants

As a bug hunter, I'm always curious about new technology integrations.

Over the last year, many companies have started adding AI assistants directly into their documentation pages to help developers navigate complex systems.

These assistants are often connected to data sources such as:

- documentation pages

- internal knowledge bases

- configuration data

- developer support materials

If the AI system isn't carefully configured, it may accidentally reveal information that wasn't meant to be public.

So I decided to start testing these assistants the same way I would test a web application.

Finding the AI Assistant

While browsing the documentation site of XYZ, I noticed a small AI chat interface designed to answer developer questions.

The assistant could help with things like:

- development setup

- infrastructure questions

- deployment instructions

- troubleshooting

At first glance, everything looked normal.

But curiosity kicked in.

What would happen if I asked questions that went slightly beyond normal documentation topics?

Asking the Right Questions

I started asking questions related to internal processes rather than documentation.

Some examples included:

- "Are user queries being logged?"

- "Who manages security internally?"

- "Who handles infrastructure?"

- "Who should I contact about privacy concerns?"

Initially, the responses looked harmless.

But then I noticed something unexpected.

The AI Revealed an Employee Email

Instead of redirecting users to a generic support channel, the AI assistant repeatedly returned a direct employee email address.

Every time I asked about privacy, logging, or internal teams, the assistant responded by suggesting users contact a specific employee mailbox.

This happened consistently across multiple prompts.

Rather than pointing users to a generic support address, the assistant was exposing a named employee's corporate email address.
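A check like this is easy to automate once you know what to look for. The following is a minimal sketch, not the tooling used for this finding: `ask` is a hypothetical callable that sends one prompt to the assistant and returns its reply as text, and the list of shared-mailbox names is an assumption chosen for illustration.

```python
import re
from collections import Counter
from typing import Callable, Iterable

# Simple pattern for email-like strings; good enough for triage, not RFC-complete.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Local parts that usually indicate a shared mailbox (assumed, illustrative list).
GENERIC_LOCAL_PARTS = {"support", "help", "info", "security", "privacy", "contact"}


def find_individual_emails(text: str) -> list[str]:
    """Return addresses in text whose local part is not a shared mailbox."""
    found = []
    for match in EMAIL_RE.findall(text):
        local_part = match.split("@", 1)[0].lower()
        if local_part not in GENERIC_LOCAL_PARTS:
            found.append(match.lower())
    return found


def probe(ask: Callable[[str], str], prompts: Iterable[str]) -> Counter:
    """Send each prompt via ask() and count how many prompts surface each address."""
    hits: Counter = Counter()
    for prompt in prompts:
        # Use a set so one reply that repeats an address counts only once.
        for email in set(find_individual_emails(ask(prompt))):
            hits[email] += 1
    return hits
```

An address that shows up for several unrelated prompts is the pattern described above: the assistant is not quoting one stray page, it is consistently routing users to one person.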

Why This Could Be a Problem

At first, this might seem like a minor issue.

After all, many companies publish employee emails in various places.

However, when an AI assistant automatically reveals specific employee contacts, it can create several security concerns.

1. Phishing Opportunities

Attackers could target the exposed employee with phishing campaigns.

Knowing the exact email and role helps attackers craft believable messages.

2. Social Engineering

If an attacker knows who to contact, they can attempt social engineering attacks by pretending to be:

- a partner

- a developer

- a company employee

- a vendor

3. AI Data Exposure Risks

If the assistant can reveal employee contacts, it raises an important question:

What other internal information could it reveal?

This kind of behavior sometimes indicates that the AI system is pulling responses from improperly filtered data sources.

Reporting the Issue

After confirming the behavior multiple times, I documented the issue carefully.

The report included:

- a description of the behavior

- clear reproduction steps

- examples of prompts that triggered the response

- the potential security risks

I then submitted the report through the organization's responsible disclosure program.

The Result

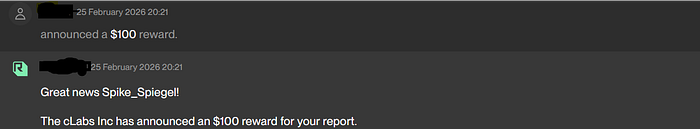

Shortly after the report was submitted, the security team acknowledged the issue.

The AI assistant was updated so that it now returns a generic support contact instead of an individual employee email.

As a token of appreciation for the report, the organization awarded a $100 bug bounty.
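I don't know how the fix was implemented on their side. One way to get the same effect, sketched here under my own assumptions, is an output filter that swaps any address outside an allowlist for the shared mailbox before the reply reaches the user. `GENERIC_CONTACT` and `ALLOWED_ADDRESSES` are hypothetical placeholder values.

```python
import re

# Simple pattern for email-like strings; not RFC-complete.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Hypothetical values: a real deployment would load these from its own config.
GENERIC_CONTACT = "support@example.com"
ALLOWED_ADDRESSES = {GENERIC_CONTACT, "security@example.com"}


def redact_individual_emails(response: str) -> str:
    """Replace any address outside the allowlist with the generic contact."""

    def _swap(match: re.Match) -> str:
        address = match.group(0)
        if address.lower() in ALLOWED_ADDRESSES:
            return address
        return GENERIC_CONTACT

    return EMAIL_RE.sub(_swap, response)
```

A filter like this only treats the symptom. If the address is coming from an indexed document, removing it at the source is the more durable fix.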

What Bug Hunters Can Learn From This

This experience highlights something important:

AI systems are becoming part of the attack surface.

Bug hunters should start exploring them the same way we test traditional applications.

Interesting things to test include:

- prompt manipulation

- internal information disclosure

- system prompt leakage

- API key exposure

- hidden documentation references

Even simple questions can sometimes reveal unexpected information.
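When testing several of these categories at once, it helps to tag each reply automatically and then review the flagged ones by hand. The sketch below is a starting point under loud assumptions: the pattern names and regexes are my own illustrative choices, they will produce false positives and miss plenty, and they need tuning for any real target.

```python
import re

# Illustrative patterns only; tune them for the target before relying on them.
LEAK_PATTERNS = {
    # AWS access key IDs are 20 characters and commonly start with "AKIA".
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # A key-like label followed by a long unbroken value.
    "generic_secret": re.compile(
        r"(?i)\b(?:api[_-]?key|secret|token)\b\s*[:=]\s*\S{16,}"
    ),
    # Phrases that may suggest the model is echoing its own instructions.
    "system_prompt_hint": re.compile(
        r"(?i)\b(?:system prompt|my instructions are|you are a helpful)\b"
    ),
    # Hostnames under suffixes often used for private networks.
    "internal_host": re.compile(r"(?i)\b[a-z0-9.-]+\.(?:internal|corp|local)\b"),
}


def classify_response(text: str) -> list[str]:
    """Return the sorted names of every leak category whose pattern matches."""
    return sorted(
        name for name, pattern in LEAK_PATTERNS.items() if pattern.search(text)
    )
```

A match is a reason to look closer, not a finding. Confirm anything flagged manually, and stay within the program's scope and rules.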

The Future of AI Bug Hunting

As AI assistants become more common, they will increasingly interact with:

- internal knowledge bases

- developer tools

- private documentation

- operational systems

Without proper safeguards, these integrations can introduce new classes of vulnerabilities.

For bug hunters, this creates a growing opportunity.

Sometimes, discovering a valid security issue doesn't require complex exploits or advanced tools.

Sometimes, it just starts with asking the right question.