Over the past few weeks, my LinkedIn and X feeds have been filled with posts from security vendors and researchers showcasing how AI is being used to uncover vulnerabilities across public programs. It's impressive, but it also made me wonder how far this can actually go, so I decided to try it myself. Instead of relying on pre-built prompts, tools, or popular "AI-powered hacking" playbooks, I took a different approach and let the agents figure things out on their own when it comes to building and refining their own methods along the way. This experiment is my attempt to document that journey: what I uncovered, where AI-powered vulnerability detection stands today, the limitations I encountered, and what might come next. I also see this as an open discussion which could me and many others learn how to do this a "better" way, so if you have thoughts or experiences, I'd love to hear them.

[0x01] The Setup

First things first, the show stealer. From everything I observed, the general consensus is right, Opus 4.6 is a serious contender in AI powered vulnerability detection and it genuinely lives up to the hype. When I compared it with Sonnet 4.6, Opus consistently outperformed it in reasoning, and more importantly, the quality of vulnerabilities it uncovered was noticeably better than Sonnet and several other models I tested along the way.

So without wasting time, I picked up the $20 Pro plan to get started. That said, if you are planning to go deep with this, I would strongly recommend the Max plan since Pro credits disappear much faster than you would expect.

Running everything locally was not the plan. This is where Oracle came in. With a 24GB free tier instance and Claude CLI installed, I was all set to begin hunting.

To avoid having to SSH into the instance every time, I used the "/remote control" utility available in Claude CLI, which connects back to the Claude Desktop GUI. This allowed me to send prompts remotely, watch them execute on the cloud instance, and get the results directly on my desktop. One quick tip here is to run Claude CLI with the " — dangerously-skip-permissions" flag so you do not have to manually approve every command execution.

[0x02] The Brain

Now it was time to give our intern a brain and watch it evolve into a full time employee. I started by asking Claude to install whatever tools it considered necessary across the entire vulnerability hunting lifecycle, from initial reconnaissance to active and passive detection, and finally reporting. To make detection more flexible, I also had it set up headless browsers for tasks like taking screenshots, verifying issues such as XSS, and interacting with forms when needed.

After installing any tool, Claude maintained a tools.md file that documented the relevant commands, making it easy to reference during scans. In addition, I had it maintain a knowledge.md file to track what it had already tried on a target so it would not repeat the same steps, along with logging mistakes I pointed out and how it adjusted its approach in subsequent runs.

[0x03] The Findings

After everything was set up, it was finally time to put the AI to work. It kicked off with reconnaissance, mapping out the underlying tech stack across the attack surface and, interestingly, focused its efforts accordingly instead of taking a spray and pray approach, which I found impressive. One thing I quickly realized is that the more context you provide about a target, whether it is a root domain or a specific endpoint, the better the results. Explaining what the application does and what to expect goes a long way.

Claude performs especially well when given precise targets like API endpoints, though it can adapt to broader scopes over time. It showed strong results in identifying issues like XSS, subdomain takeovers, GraphQL vulnerabilities, business logic flaws, and S3 misconfigurations, internal server IP leaks and ofcourse…

This thing SPAMS with CORS findings and god knows how many times I had to ask it to not report CORS-related findings and eventually putting it in the knowledge.md file. It also made me wonder if these finding types are prevalent across everybody's deployments or instruction-specific.

[0x04] The Good

Claude ultimately managed to uncover a number of genuinely interesting findings, often chaining ideas together and pivoting from one observation to another to reach deeper issues. In several cases, these were the kind of vulnerabilities that could easily go unnoticed during routine testing and would typically require a more focused and methodical approach from a human analyst.

Over the span of a week, I managed to uncover around 25 vulnerabilities. These ranged from S3 bucket misconfigurations to data leakages and even business logic issues in certain utilities. One thing that stood out was how Claude actively explored documentation endpoints exposed on websites and used them to guide further testing, which led to some genuinely interesting findings.

Claude also performs well if you give it access to any API tokens or account credentials for the platform which it may use for authenticating into the platform and expand its testing horizon.

[0x05] The Bad

I still feel there's a lot of room for improvement, both in fine tuning these agents and in refining the overall detection approach. Claude often tends to overestimate the severity of certain findings, which can make prioritisation difficult. At times, it flags intended functionality as a security issue or reports false positives due to incomplete verification, for example, marking a stored XSS even when it does not actually execute on the client side.

The biggest reason I wouldn't consider Claude ready for production environments yet is its tendency to go beyond detection and actively exploit vulnerabilities. In several cases, it attempted actions that could modify real data such as creating or deleting users, altering content, triggering transactions, or uploading files, which can disrupt normal application behavior. I actually observed this happening during my testing, which makes it a serious concern.

Running these agents in isolated or staging environments instead of production should be the baseline. On top of that adding clear boundaries between detection and exploitation, and requiring explicit approval before performing any state-changing actions should be highly encouraged but there is always a chance that it may disregard or forget your earlier instruction and do something "bad".

[0x06] Duplicates Everywhere, Little That Stands Out

After running multiple scans and observing the patterns in its approach, it became clear that the discovery process is often very similar across runs. Because of this, if you point it at popular community VDPs, there's a high chance you'll end up submitting duplicates alongside many others using the same tools and models. In a landscape where everyone is leveraging similar setups, most findings start to look the same. The only real way to stand out is through one thing: fine tuning.

You need to ensure your instructions and methodologies stay aligned with the latest techniques and real-world attack patterns. That's what pushes the model beyond basic, repetitive scanning and helps it uncover more creative, out-of-the-box vulnerabilities instead of just the usual noise.

[0x07] The Human Element

So, are we here to stay? Yes. As they say, accountability ultimately lies with humans. You can't assign that responsibility to an LLM, especially when dealing with sensitive or critical systems. The human element is essential, whether it's discovering vulnerabilities or validating whether a finding is real or just a false positive. You also need someone guiding the process, correcting the agent when it drifts and keeping it aligned with the actual objective.

From a detection standpoint, AI is a strong addition to the existing security stack. It can complement traditional approaches and help uncover issues that might otherwise be overlooked.

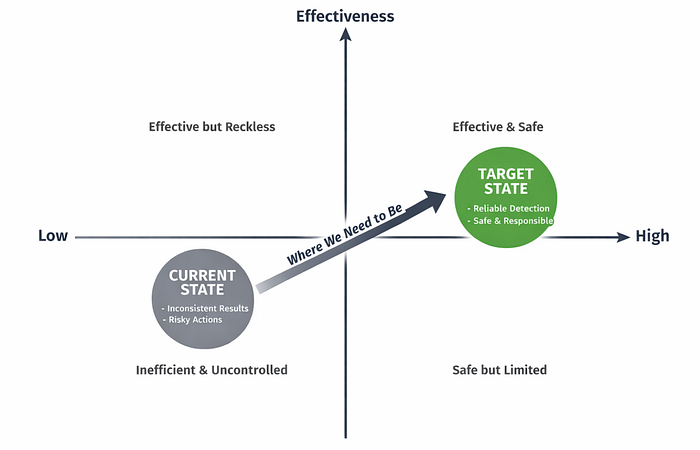

[0x08] Still Catching Up

Maybe this view is slightly biased by my setup and the way I guided the agent, but it's clear there's still a long way to go before AI-powered vulnerability detection is reliable and safe enough for production environments, which are ultimately the most critical attack surfaces.

There's also a need to improve how these systems assign severity, reduce false positives through better context awareness, and actually help users prioritise what matters instead of just generating more noise.

That said, the results were genuinely impressive. The kind of findings uncovered in such a short time show real potential, and I'm already exploring ways to make the approach more robust, effective, and at the same time, safe and responsible.

[0x09] The End

This brings us to the end of the article. This space is still evolving, and I expect we'll continue to see rapid improvements that push AI-assisted vulnerability detection even further. I'm also keen to learn more about how security vendors are approaching this problem.

As mentioned earlier, I'm still exploring this space myself and by no means I am a Pro (yet), so consider this an open discussion. If you have ideas, suggestions, or a better way to fine tune the approach, I'd genuinely love to hear your thoughts in the comments or on LinkedIn.