What I think I know that I don't know.

- I don't know how people will receive this, and I don't know the effects, including the unforeseeable effects, of implementing 'nutritional guidelines' for information. I don't know whether the benefits will actually be beneficial, or whether the guidelines will waste time and money (e.g., paying individuals to work towards implementing these criteria).

- I also don't know what the future holds.

- I also don't know how accurate my statements are. Knowing how close I am to the truth would require knowing where the truth ultimately lies, in order to measure how close to or far from it I am.

Imagined Unknown Unknowns: the potential 'black swans' I can imagine are:

- I do not know how A.I. will ultimately benefit or harm people. I use it, and as of right now I don't know whether I can justify my use of it (even if it is easy and accessible), given that it is not open source, and that it may be damaging communities through its energy consumption and through paying below-living wages to workers in developing countries[1].

"Personal computers were about you interacting with the machine. Interpersonal computers were about you interacting with others via the machine. […] This is going to be the third major revolution that these desktop computers provide. Is revolutionizing human-to-human communication and group work. We call it 'interpersonal computing'." (Steve Jobs, on the NeXT computer)

"The web is more a social creation than a technical one. I designed it for a social effect — to help people work together…" (Tim Berners-Lee, Weaving the Web[2])

This article was inspired by two things. The first is a very basic yet often forgotten value that I took away, and possibly (mis-)interpreted, from a Sam Harris live event in Vancouver: that the truth matters. The second is the book The Information Diet: A Case for Conscious Consumption by Clay A. Johnson[3].

To start with a cliché: according to the Perseus Digital Library, the word communication has its root in the Latin verb communicare, which means 'to share' or 'to make common'. Information, by extension, has the power and purpose of 'informing' by sharing what a living being has subjectively sensed, or their imaginings, thoughts, and abstractions of that experience. Indeed, the first paragraph of the entry on 'Information' links to the idea of 'inform' as a form of communication.

Others have long pointed out that sociality is a hallmark, and a core part, of human existence. I stubbornly refused to believe it until the negative consequences hit me so hard that I am now convinced. If that is true, then sociality is as much a core dimension of the survival, endurance, and quality of my life as it is the most harmful, destructive, and potentially traumatic dimension of my life. A quick example: people I care about can leave my life, either by choice or by chance (leading to my sadness and grief); they can use violence and praise[4] as forms of coercion to obtain compliance with their goals; or they can make their own independent choice to help and support me, with consent.

So I am not too surprised to find that sociality is what makes information meaningful to other human beings. The 'information technology' so often cited by academics might more aptly be called 'social-information technology': while the underlying math and techniques of logic are computational (and beyond my understanding), the core design and utility of these technologies, I would argue, serve social purposes and human desires (e.g., violence, pornography, podcasts).

It's worth noting, however, that these technologies have tradeoffs: they may make socializing less social and more artificial, yet at the same time preserve communication across longer stretches of time and vast distances of space. The term 'parasocial relationship' is becoming popular, and while I am uncertain about its usefulness, I would argue that any form of assisted communication, like technology-assisted movement, ultimately serves human goals, albeit in ways that are often unnatural or only partially mimic the way people do things.

As The Guardian reminds me, in a line I paraphrase here: 'opinions are free (i.e., to express, to hold, and to share); facts are sacred'. In my experience, all the technical aspects of computers, math, and programming languages obscure the overall purpose and goal of highly technical and complex computer technologies: they have the potential, and are often conceived and designed, to enhance, empower, encourage, and foster human well-being, arguably by fostering and empowering alternative ways for people to communicate, with effects on their relationships.

A key factor in the success of the World Wide Web (WWW) was that it was free, accessible, and open source. For example, according to the video 'Stephen Fry and Sir Tim Berners-Lee | This is For Everyone Audiobook' (10:18–10:36)[5], a problem with one competing protocol, Gopher, was that it charged money for use. The community of people who used Gopher stopped using it and adopted the WWW instead. This was as much a mistake by the people designing and controlling the Gopher protocol as it was a response by its community of users, who were able to choose an alternative (i.e., the WWW). I would argue that a community choosing to support a free, accessible, and open-source alternative is what gave the WWW its immense value and made it what it is today. Developing innovative and reliable technologies with real value for people means respecting the users as much as the developers and owners of those technologies: giving people the freedom to express themselves and to use and access the technology freely, giving them ownership of their own data, and respecting their choices about what they share publicly and what they keep private.

That doesn't mean that all communication and relationships are advantageous for everyone; some people use these technologies to exploit others, to gain and maintain monopolistic control through private enterprises, and to commit violence. In short, while these tools have the potential to enhance communication and relationships, they do not describe, let alone account for, the motivations and desires of the humans who use them: the human element.

In my opinion, paywalls, pay-to-use models (e.g., subscription fees, one-time payments), censorship, market or usage monopolies, centralized services, and closed-source (often proprietary) software all run directly counter to the spirit of the WWW and of the social-information technologies they themselves depend on.

To go off on a tangent, I think it's worth noting that 'human factors design' is, to put it bluntly, the study of human mistakes: the many alternative ways in which humans can behave with and operate a given tool are what human factors design is all about. I have been interested in human factors design ever since I read Kathryn Schulz's book Being Wrong: Adventures in the Margin of Error[6]. The many ways in which people can use the internet and express themselves through it surely push the limits of human imagination as much as human innovation. Creativity may be an abstract concept, yet I would argue the internet is the technology that comes closest to being a 'living' embodiment of it.

In my opinion, the greatest and most innovative technological revolution is not machine learning or A.I.; it is the World Wide Web (and the internet, which the WWW relies on). Because it is decentralized, the WWW's value is not beholden to a CEO, a (monopolistic) corporation, or tech experts (no matter how many credentials or how much praise they have received). The value of the WWW, and its potential and public utility, rests on the people and community who use it. Its empowerment and potential never had to be proclaimed by companies or individuals, because its value leveraged human potential itself: people, when given the ability to communicate freely, openly, and autonomously, could create value and imbue it into the technology itself.

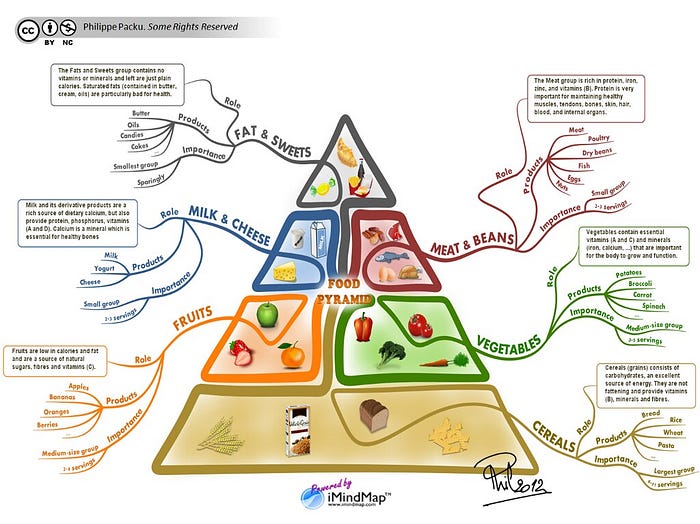

I was inspired by Clay A. Johnson's book The Information Diet (2012)[7], and I want to share a point he raises: it is our relationship to social-information technologies that makes our consumption of social information a moral question, because that consumption has consequences for our health (emotional as well as physical). The analogy used in his book is our relationship with food, and the health consequences of our choices about what to consume: deep-fried chicken tastes great, yet it might not be the healthiest choice among the alternatives.

Reading Johnson's book, I was reminded of Sam Harris's TED talk, and his quote on food:

"Now, why wouldn't this undermine an objective morality? Well think of how we talk about food: I would never be tempted to argue to you that there must be one right food to eat. There is clearly a range of materials that constitute healthy food. But there's nevertheless a clear distinction between food and poison. The fact that there are many right answers to the question, "What is food?" does not tempt us to say that there are no truths to be known about human nutrition. Many people worry that a universal morality would require moral precepts that admit of no exceptions."

I share his sentiment, re-imagined in the context of the social-information analogy: it's worth thinking about which criteria make a social-information diet 'healthy' vs. 'unhealthy'. One criterion, which Johnson believes in and I agree with, is being locally sourced rather than distantly or internationally sourced. The analogy with food should be clear: buying local food supports local workers directly and produces fewer carbon emissions; likewise, locally sourced information supports primary sources, local journalists, and content creators, and might foster a richer conversation within the community closer to home.

And, also from Sam Harris's TED talk:

"But this is just the point. Whenever we are talking about facts certain opinions must be excluded. That is what it is to have a domain of expertise…"

To extend the analogy to social information: the truth matters to me, and I would argue we need criteria to assess how accurate our information is, as well as how trustworthy its suppliers or publishers are. In the same way that we have the Food and Drug Administration (FDA) to create regulations and standards of practice for food, we need something similar for content distribution. For example, the FDA requires companies to disclose the sugar content of packaged foods (including added sugars) on the Nutrition Facts label, so that consumers can see how much they are consuming. I would argue that the same should hold true for information: government regulation requiring companies to explicitly state the properties of the information content they provide, so that consumers can make informed choices about what they consume and about their relationship with their information-consumption habits.

Last, I would argue (and this is something I am very guilty of neglecting) that we should explicitly address bias, perhaps in the 'nutritional label' of social information, by stating whether the information is informative or affirmative. Johnson explicitly draws this distinction between informative (i.e., truthful) and affirmative (i.e., feel-good) information to highlight the potential roles of content: to provide the truth, or to entertain (or help us feel better). I would argue that both have a role, at least in my life; one isn't better than the other, and both, in moderation, can improve the quality and quantity of my life. However, when we do not explicitly address which is which, we fall into a trap that A.I.-generated content has made especially clear: we (or at least I) cannot distinguish between fact and fiction (or, in this case, between artificially generated information and real, human-made information). Don't get me wrong: people still lie (or, like me, lie to themselves in the form of denial when some truths are too painful to consume all at once), and nobody, especially me, has 100% accurate information. The goal of explicitly stating the properties of social information in a 'nutritional label' of sorts (e.g., labelling content as informative or affirmative) would again be to empower individuals to make informed choices about the social-information content they consume.

I honestly believe there have been truths in my life that were really hard and painful for me to face, let alone consume. There is a limit to how many violent videos I want to watch, how much sexual violence I need to see, or how much tragedy in the news I can consume on a daily basis. Johnson reminds us that we should "consume deliberately". The nutritional label is a small part of that process; a fuller process is inspired by what Daniel Kahneman and his co-authors call "decision hygiene"[8]. Like hand hygiene, which prevents illness and disease, it would help prevent 'unhealthy' or short-term habits and the effects of harmful content, so we can consume with less risk. I imagine that consuming deliberately, or following a decision-hygiene process, would mean making a series of decisions in which each choice is deliberate, measured, genuinely assessed, and weighed against the other dimensions.

I have been inspired by his book to create a list of properties for social information, which I feed into an AI assistant via the Raycast app, so that I can generate a list of properties for any type of content I consume (although I have yet to make the list exhaustive or to test it on the full range of content I consume). I guess the point I want to make is that deliberate consumption is the goal: to pause, reflect, and ask ourselves 'what do I want to consume?' and 'what are my goals when I choose to consume this piece of information?'. Ultimately, it is our own responsibility to make choices in our own lives, and if, as Kohn[4] has suggested, we are to nurture independence in ourselves and others, that means allowing individuals to exercise as much free choice as possible, whenever possible. The choice is ours; reflection might make it clearer.
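To make the idea of a 'nutritional label' a little more concrete, here is a minimal, hypothetical sketch in Python of what such a label could record, using only properties discussed above: informative vs. affirmative, locally vs. distantly sourced, primary source or not, AI-generated vs. human-made, and the publisher. The names `NutritionLabel`, `Purpose`, `Sourcing`, `to_prompt`, and `reflection_questions` are illustrative assumptions, not the property list I use with Raycast and not any Raycast feature.

```python
from dataclasses import dataclass
from enum import Enum


class Purpose(Enum):
    # Johnson's distinction: informative (truthful) vs. affirmative (feel-good)
    INFORMATIVE = "informative"
    AFFIRMATIVE = "affirmative"


class Sourcing(Enum):
    # Food analogy: locally sourced vs. distantly or internationally sourced
    LOCAL = "local"
    DISTANT = "distant"


@dataclass
class NutritionLabel:
    """Hypothetical 'nutritional label' for one piece of social-information content."""

    title: str
    purpose: Purpose
    sourcing: Sourcing
    primary_source: bool  # does the content come directly from a primary source?
    ai_generated: bool  # artificially generated vs. human-made
    publisher: str  # who supplies or publishes the content

    def to_prompt(self) -> str:
        """Render the label as plain text, e.g. to paste into an AI assistant."""
        lines = [
            f"Title: {self.title}",
            f"Purpose: {self.purpose.value}",
            f"Sourcing: {self.sourcing.value}",
            f"Primary source: {'yes' if self.primary_source else 'no'}",
            f"AI-generated: {'yes' if self.ai_generated else 'no'}",
            f"Publisher: {self.publisher}",
        ]
        return "\n".join(lines)


def reflection_questions(label: NutritionLabel) -> list[str]:
    """Questions to pause on before consuming, in the spirit of 'consume deliberately'."""
    questions = [
        "What do I want to consume?",
        "What are my goals when I choose to consume this?",
    ]
    if label.purpose is Purpose.AFFIRMATIVE:
        questions.append("Am I looking for the truth, or to feel better?")
    if label.ai_generated:
        questions.append("Can I tell whether this is fact or fiction?")
    if label.sourcing is Sourcing.DISTANT:
        questions.append("Is there a local or primary source I could read instead?")
    return questions
```

For example, labelling a local news story as informative, locally sourced, from a primary source, and human-made leaves only the two baseline questions, while a distantly sourced, AI-generated, affirmative clip prompts all five. The label does not make the choice; it only makes the properties visible so the choice can be deliberate.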

References/Sources

- Hao, Karen. Empire of AI: Dreams and Nightmares in Sam Altman's OpenAI. Penguin Press, 2025.↩︎

- "Tim Berners-Lee." Wikiquote, Wikimedia Foundation, n.d., https://en.wikiquote.org/wiki/Tim_Berners-Lee. Accessed 11 Apr. 2026.↩︎

- Johnson, Clay A. The Information Diet: A Case for Conscious Consumption. O'Reilly Media, 2012.↩︎

- Kohn, Alfie. Punished by Rewards: The Trouble with Gold Stars, Incentive Plans, A's, Praise, and Other Bribes. Houghton Mifflin Co., 1999.↩︎↩︎

- "Stephen Fry and Sir Tim Berners-Lee | This is For Everyone Audiobook." YouTube, uploaded by Pan Macmillan, 8 Sept. 2025, https://www.youtube.com/watch?v=Hs_KqUQ6LGI.↩︎

- Schulz, Kathryn. Being Wrong: Adventures in the Margin of Error. Harper Collins Publishers, 2011.↩︎

- Johnson, Clay A. The Information Diet: A Case for Conscious Consumption. 1st ed., O'Reilly Media, 2012.↩︎

- Kahneman, Daniel, et al. Noise: A Flaw in Human Judgment. 1st ed., Little, Brown Spark, 2021.↩︎