It costs around $50 and takes 30 seconds of audio. That's all a criminal needs to clone your CEO's voice and authorize a $12 million wire transfer.

For years, Business Email Compromise (BEC) was the bane of the corporate world. It was simple: an attacker would spoof a CEO's email address, send a high-pressure message to a junior accountant, and walk away with a six-figure wire transfer. We countered this with MFA, better spam filters, and employee training.

But in 2026, the script has flipped. The attacker isn't just typing; they are speaking. And they sound exactly like your boss.

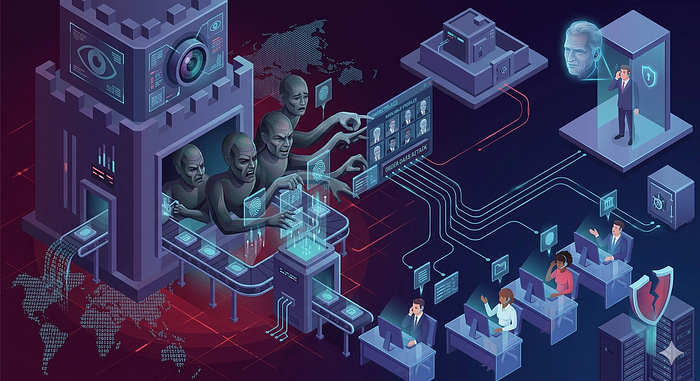

Welcome to Deepfake-as-a-Service (DaaS)—where hyper-realistic voice cloning is now accessible to anyone with a credit card.

The Democratization of Deception

In the past, creating a convincing voice clone required high-end hardware, massive datasets, and specialized knowledge of machine learning. Today, a "script kiddie" with a $50 subscription to an underground DaaS platform can generate a hyper-realistic voice clone from less than 30 seconds of audio, often scraped from a YouTube keynote or a LinkedIn video.

This isn't just a gimmick; it's a scalable, industrial-grade threat. These platforms provide user-friendly interfaces where an attacker can type text and have it read back in a target's voice with perfect inflection, regional accents, and even emotional weight.

How It Actually Works (And Why You're Vulnerable)

Step 1: Audio Collection (Public and Free)

Attackers scrape voice samples from:

- Conference keynotes uploaded to YouTube

- Podcast appearances

- LinkedIn videos

- Zoom webinars

- Quarterly earnings calls

Time needed: As little as 30 seconds of clean audio. A few minutes is more than enough.

One executive's TED Talk? That's all they need.

Step 2: Voice Cloning (Ridiculously Easy)

Services like ElevenLabs allow anyone to:

- Upload an audio sample

- Type what they want the person to "say"

- Generate a hyper-realistic voice clone

Cost: $50–300, depending on the service

Technical skill required: Literally none. These are browser-based tools with simple interfaces.

Time to create: Minutes

As one security researcher noted, "I could make a video of myself saying 'Go Michigan!' right now, and I assure you, that is not something this Buckeyes fan would ever say voluntarily. No command line. No model training. No technical skill whatsoever."

Step 3: The Attack (Real-Time and Convincing)

The call comes in. The voice is perfect.

"This is [CEO name]. I'm in a meeting and need you to authorize an urgent wire transfer. I'll send details via email. This is time-sensitive for the acquisition—don't discuss this with anyone."

The employee hears their actual CEO's voice:

- Same tone

- Same speech patterns

- Same vocabulary

- Same urgency they've heard in real crises

Human detection rate for high-quality voice deepfakes: 24.5%

Translation: We can't tell the difference.

Source: DeepStrike Deepfake Statistics 2025

Real Breaches, Real Losses

These aren't theoretical scenarios:

- Arup: In early 2024, a finance worker at the engineering firm Arup was tricked during a video conference call featuring deepfaked versions of the CFO and other colleagues. The result was a $25 million (HK$200 million) loss.

- UK Energy Firm: In 2019, the CEO of a UK-based subsidiary was deceived by a voice deepfake of his boss (the CEO of the German parent company) and transferred €220,000 ($243,000) to a fraudulent account.

- FBI Warning / Marco Rubio: In June 2025, an attacker used AI-generated voice messages to impersonate U.S. Secretary of State Marco Rubio on the Signal app, targeting foreign ministers and other high-level officials.

- UNC6040 Group: This group (also known as ShinyHunters) was active through 2025, using vishing (voice phishing) to compromise Salesforce and other SaaS platforms at major companies such as Google and Chanel.

- Canadian Insurance Company: Threat intelligence blogs describe a $12 million theft in February 2025. Whatever the details of that case, broader trends confirm that insurance companies were heavily targeted by deepfake vishing throughout 2025.

Sources: Right-Hand AI Vishing Report 2025

The Industrialization of Fraud

Organized crime groups now use DaaS at scale:

- Lazarus Group (North Korea): Targeting energy executives in South Korea for infrastructure espionage

- BlackBasta/Cactus (Russia): Combining vishing with phishing to accelerate ransomware deployment

- The Com (Global syndicate): Multi-channel campaigns across Australia, North America, Southeast Asia

- SilverPhantom (Latin America): Procurement fraud targeting Brazil and Argentina using synthetic voices

These aren't lone hackers. They're sophisticated operations with infrastructure, playbooks, and profit margins.

Source: Right-Hand AI Deepfake Vishing Attacks 2025

The Gartner Warning

Gartner predicts that by 2026, 30% of enterprises will no longer consider identity verification and authentication solutions reliable in isolation because of AI-generated deepfakes.

Translation: The way we verify identity is fundamentally broken.

Deepfakes have made voice, video, and biometrics unreliable as sole authentication factors.

We need multi-layered verification that doesn't depend on "what you sound like" or "what you look like."
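One way to verify a caller without relying on their voice is a challenge-response check over a secret that was exchanged in person beforehand. The sketch below is illustrative only: the function names, the 6-character code length, and the idea of a pre-shared secret between an executive and the finance team are assumptions made for this example, not something the sources above prescribe.

```python
import hashlib
import hmac
import secrets


def new_challenge() -> str:
    """Create a random one-time challenge (8 hex characters) for this call."""
    return secrets.token_hex(4)


def response_for(shared_secret: bytes, challenge: str) -> str:
    """Derive a short response code from the pre-shared secret and the challenge.

    Assumption: the first 6 hex characters of an HMAC-SHA256 digest are used
    so the code is short enough to read aloud.
    """
    digest = hmac.new(shared_secret, challenge.encode(), hashlib.sha256).hexdigest()
    return digest[:6]


def verify(shared_secret: bytes, challenge: str, response: str) -> bool:
    """Check the caller's response in constant time, ignoring case and whitespace."""
    expected = response_for(shared_secret, challenge)
    supplied = response.strip().lower()
    return hmac.compare_digest(expected.encode(), supplied.encode())


if __name__ == "__main__":
    # Hypothetical secret, exchanged in person and never sent by email or chat.
    secret = b"exchanged-in-person-not-over-email"
    challenge = new_challenge()                 # employee reads this to the caller
    answer = response_for(secret, challenge)    # real executive computes this on their own device
    print("genuine caller accepted:", verify(secret, challenge, answer))
    print("wrong code accepted:", verify(secret, challenge, "zzzzzz"))
```

A cloned voice can reproduce how someone sounds, but it cannot produce the correct response without the secret, and a fresh challenge on every call means a recorded answer cannot be replayed.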

What You Must Do This Week

1. Establish an out-of-band verification policy for financial transactions

2. Create secret authentication phrases for the executive team

3. Audit what audio/video of executives is publicly available

4. Train finance/support teams on specific deepfake scenarios

5. Implement multi-person approval for wire transfers

6. Test incident response: "What do we do if the CEO's voice is cloned?"

7. Review and tighten password reset procedures

8. Enable recording/logging for all financial authorization calls

9. Schedule monthly deepfake awareness refreshers
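Two of the controls above, out-of-band verification and multi-person approval, can be expressed as a simple release gate in a payment workflow. This is a minimal sketch under stated assumptions: `WireRequest`, `MIN_APPROVERS`, and the other names are hypothetical, the two-approver threshold is an example value, and a real system would tie these checks into its own identity and payment tooling.

```python
from __future__ import annotations

from dataclasses import dataclass, field

MIN_APPROVERS = 2  # assumed threshold; set according to your own policy


@dataclass
class WireRequest:
    requester: str
    amount_usd: float
    # True only after someone calls the requester back on a number taken from
    # the internal directory, never a number supplied during the inbound call.
    callback_confirmed: bool = False
    approvers: set[str] = field(default_factory=set)


def approve(request: WireRequest, approver: str) -> None:
    """Record an approval; the requester may not approve their own transfer."""
    if approver == request.requester:
        raise ValueError("requester cannot approve their own transfer")
    request.approvers.add(approver)


def release_blockers(request: WireRequest) -> list[str]:
    """Return the reasons this transfer must not be released yet."""
    blockers = []
    if not request.callback_confirmed:
        blockers.append("out-of-band callback not confirmed")
    if len(request.approvers) < MIN_APPROVERS:
        blockers.append(f"needs {MIN_APPROVERS} distinct approvers")
    return blockers


def can_release(request: WireRequest) -> bool:
    """A transfer is releasable only when no blockers remain."""
    return not release_blockers(request)


if __name__ == "__main__":
    req = WireRequest(requester="ceo", amount_usd=250_000)
    print(release_blockers(req))   # both controls still outstanding
    req.callback_confirmed = True
    approve(req, "controller")
    approve(req, "treasury_lead")
    print(can_release(req))
```

The point of the design is that no single phone call, however convincing, can satisfy the gate on its own: the callback and the second approver each require a channel the attacker does not control.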

The Uncomfortable Reality

Deepfakes have reached a level of sophistication where trust itself is a vulnerability. Your instincts, built over years of recognizing voices and faces, are now exploitable attack vectors.

The question isn't "Will we be targeted by deepfake vishing?" It's "When we are targeted, will our procedures prevent the breach?"

What verification procedures does your organization have? Are they deepfake-resistant? Share your approach in the comments. We all need to learn from each other on this.

The executive in your ear might sound like your boss, but in 2026, it may well be a bot.

#CyberSecurityAwareness #Deepfakes #VoiceCloning #Vishing #AIFraud #DeepfakeAsAService #SocialEngineering #InfoSec #FraudPrevention #CyberSecurity #AIThreats #ExecutiveSecurity #FinancialFraud #ThreatIntelligence #CISO