"The best attack loop doesn't wait for prompts. It watches files, reasons in 0.8B parameters, and strikes while your EDR is still parsing YAML." (Dr. grisun0, while a qwen3.5:0.8b agent enumerated SMB shares and he sipped his third espresso)

Or: How I Taught My Framework to Delegate So Hard, Even My Satellite Models Got Imposter Syndrome

Let's be brutally honest:

If your red team framework needs you to manually type lazynmap, then pwntomate, then crackmapexec, then remember to check sessions/ for outputs… you're not doing agentic red teaming — you're doing keyboard calisthenics for fun and profit.

But what if your framework could:

- Watch sessions/ like a paranoid sysadmin with inotify,

- Spawn a 0.8B-parameter satellite model to reason about nmap output,

- Inject objectives into a queue before you even finish your coffee,

- And loop autonomously: policy → command → execute → learn → repeat?

Enter LazyOwn Agentic MCP — the world's first penetration testing framework that treats attack cycles like a Zen koan:

Breathe in (recon). Breathe out (exploit). Om. FactStore. Om. Objective injected.

🧬 The Premise: Your Framework Is Overworked. Let It Delegate to Satellites

Most "agentic" frameworks just wrap an LLM in a CLI and call it a day. We? We give our agents boundaries, tools, and a fact store that remembers what they forgot.

LazyOwn's new agentic layer hooks into three critical pain points:

- Discovery: lazyown_automapper.py scans lazyaddons/, tools/, and plugins/ at startup and exposes everything as MCP tools. No manual registration, no YAML fatigue.

- Reasoning: lazyown_llm.py bridges Groq (frontier) and Ollama (satellite) with a unified ReAct loop, so llama-3.3-70b plans the heist and qwen3.5:0.8b picks the lock.

- Execution: sessions_watcher.py turns file events into objectives: new scan_*.nmap? → generate plan.txt → inject objective → agent acts → new output → repeat.
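The Execution bullet describes a file event → objective pipeline. Here is a minimal, stdlib-only sketch of that idea. It assumes a polling loop over the top level of sessions/ instead of inotify/watchdog so it runs anywhere; the function names `poll_new_scans` and `watch`, the objective dict shape, and the priority value are hypothetical, not the actual sessions_watcher.py API.

```python
import time
from pathlib import Path


def poll_new_scans(sessions_dir, seen, pattern="scan_*.nmap*"):
    """Return objectives for scan files that appeared since the last poll.

    Hypothetical stand-in for an inotify/watchdog handler: `seen` holds the
    paths already processed and is mutated in place, so each file fires once.
    """
    objectives = []
    for path in sorted(Path(sessions_dir).glob(pattern)):
        if path in seen:
            continue
        seen.add(path)
        objectives.append({
            "source": str(path),
            "text": f"Review new scan output in {path.name}",
            "priority": 5,  # arbitrary illustrative priority
        })
    return objectives


def watch(sessions_dir, interval=2.0, max_polls=None):
    """Poll forever (or max_polls times) and print each injected objective."""
    seen = set()
    polls = 0
    while max_polls is None or polls < max_polls:
        for obj in poll_new_scans(sessions_dir, seen):
            print("inject:", obj["text"])
        polls += 1
        time.sleep(interval)
```

Swapping the polling loop for watchdog's `Observer` plus a `FileSystemEventHandler.on_created` callback gives real inotify events on Linux; the `seen` set is what stops one file from injecting the same objective twice.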

It's not automation. It's orchestrated chaos with guardrails.

🛰️ The Three-Layer Agentic Stack (Or: Why Your 70B Model Shouldn't Do Everything)

| Layer | Model | Role | Why It Works |
|---|---|---|---|
| Frontier | llama-3.3-70b (Groq) | Strategy, multi-step planning, CVE analysis | Big brain for big decisions. Doesn't waste tokens on nmap -sV. |
| Satellite | qwen3.5:0.8b (Ollama) | Tactical execution, tool selection, fact ingestion | Tiny, fast, cheap. Perfect for "should I run enum4linux or ldapsearch?" |
| Memory | FactStore + ObjectiveStore | Structured context, priority queue, event correlation | Because "what did we learn 3 steps ago?" shouldn't require re-reading logs. |
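To make the Memory row concrete, here is a small sketch of a fact store fed from nmap XML (`-oX`) output plus a priority-queue objective store. The class and method names mirror the ones this post mentions (`FactStore.ingest_xml`, `ObjectiveStore.inject`), but the bodies, the host → {port: service} fact schema, and the priority convention are illustrative assumptions, not LazyOwn's implementation.

```python
import heapq
import itertools
import xml.etree.ElementTree as ET


class FactStore:
    """Per-host facts: host -> {port: service name} (hypothetical schema)."""

    def __init__(self):
        self.facts = {}

    def ingest_xml(self, xml_text):
        """Record open ports from nmap XML; return newly seen (host, port) pairs."""
        new = []
        root = ET.fromstring(xml_text)
        for host in root.iter("host"):
            addr = host.find("address")  # first <address>, usually the IPv4 one
            if addr is None:
                continue
            ip = addr.get("addr")
            ports = self.facts.setdefault(ip, {})
            for port in host.iter("port"):
                state = port.find("state")
                if state is None or state.get("state") != "open":
                    continue
                portid = int(port.get("portid"))
                service = port.find("service")
                name = service.get("name") if service is not None else "unknown"
                if portid not in ports:
                    new.append((ip, portid))
                ports[portid] = name
        return new

    def show(self, target):
        """Return a copy of the facts for one target (empty dict if unknown)."""
        return dict(self.facts.get(target, {}))


class ObjectiveStore:
    """Min-heap of (priority, seq, text); lower priority values pop first."""

    def __init__(self):
        self._heap = []
        self._seq = itertools.count()  # tie-breaker keeps FIFO order per priority

    def inject(self, text, priority=5):
        heapq.heappush(self._heap, (priority, next(self._seq), text))

    def pop(self):
        return heapq.heappop(self._heap)[2] if self._heap else None

    def __len__(self):
        return len(self._heap)
```

For example, looping over `facts.ingest_xml(xml)` and calling `objectives.inject(f"Review SMB services on {ip}:{port}", priority=1)` whenever `port == 445` reproduces the "Review SMB services on 10.10.11.78:445" objective from the walkthrough below.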

🎯 Golden Rule: If your agent can't call lazyown_facts_show(target="10.10.11.78") and use the output to decide the next tool, it's not agentic; it's a chatbot with sudo access.
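A toy version of the Golden Rule: read the facts for one target and map what was learned to the next tool. In LazyOwn the satellite model makes this choice, so treat this as the deterministic baseline a model could be compared against, not the framework's logic. The `{port: service}` facts shape, the `PLAYBOOK` table, and `next_tool` are hypothetical; the tool names are borrowed from this post purely as labels.

```python
# Hypothetical port -> candidate tool mapping; names are illustrative labels only.
PLAYBOOK = {
    445: "lazyown_addon_crackmapexec_smb",
    389: "ldapsearch",
    80: "lazyown_addon_nuclei",
}


def next_tool(facts, already_run):
    """Pick the first not-yet-run tool for the lowest open port with a playbook entry.

    facts: {port: service} for one target, e.g. the result of a facts lookup.
    already_run: set of tool names already executed against this target.
    Returns None when nothing new is worth running (a signal to stop or replan).
    """
    for port in sorted(facts):
        tool = PLAYBOOK.get(port)
        if tool and tool not in already_run:
            return tool
    return None
```

Returning `None` instead of guessing is the useful part: it gives the outer loop an explicit "nothing left to do" signal.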

🤖 "But grisun0, Didn't You Say You Hated MCPs?"

Yes. And I still do. But when I saw MCPs for Kali, Ghidra, and even that VS Code extension that lints your semicolons, I asked myself:

"If we don't build an MCP for LazyOwn, in six months we'll be the framework that could have been agentic."

So, with the same enthusiasm one reserves for patching a kernel at 5:59 PM on a Friday, we built:

- lazyown_automapper.py: Auto-discovers extensions and exposes them as MCP tools with valid JSON schemas. No more manual tools.list() updates.

- lazyown_llm.py: Unified Groq/Ollama bridge with a ReAct fallback for models that don't support native tool calling. Because deepseek-r1:1.5b thinks in <think> tags, not JSON.

- sessions_watcher.py: Watchdog daemon that converts new files into events → objectives → actions. Because inotify is the closest thing to precognition in Linux.
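The ReAct fallback for models without native tool calling boils down to two steps: strip the model's `<think> ... </think>` reasoning, then pull a tool call out of plain text. A minimal sketch, assuming the model is prompted to reply with a single JSON object of the form `{"tool": ..., "args": {...}}`; that response contract and the name `parse_tool_call` are assumptions, not LazyOwn's actual prompt format.

```python
import json
import re

# Non-greedy and DOTALL so multi-line reasoning blocks are removed whole.
THINK_RE = re.compile(r"<think>.*?</think>", re.DOTALL)


def parse_tool_call(raw):
    """Extract {"tool": str, "args": dict} from a non-tool-calling model's reply.

    Returns None if no well-formed call is found, so the caller can re-prompt.
    """
    text = THINK_RE.sub("", raw)  # drop chain-of-thought blocks
    decoder = json.JSONDecoder()
    # Try each '{' as the possible start of the JSON object.
    for match in re.finditer(r"\{", text):
        try:
            obj, _ = decoder.raw_decode(text, match.start())
        except json.JSONDecodeError:
            continue
        if isinstance(obj, dict) and isinstance(obj.get("tool"), str):
            args = obj.get("args", {})
            if isinstance(args, dict):
                return {"tool": obj["tool"], "args": args}
    return None
```

`raw_decode` is used instead of a regex for the JSON itself because it handles nested braces in `args` correctly and reports where the object ends.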

It's not magic. It's lazy engineering with intent.

⚡ How It Works (Without Boring You to Death)

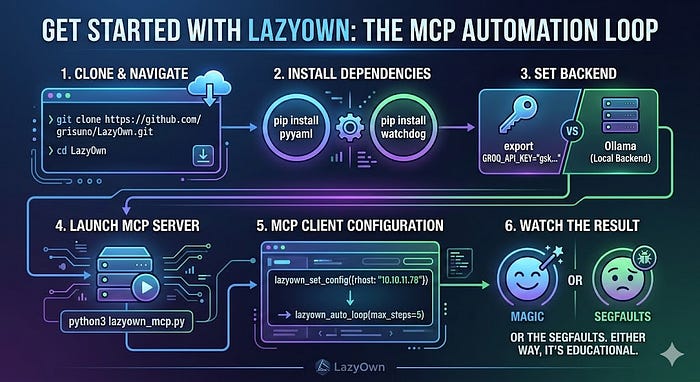

1. Start MCP Server

python3 lazyown_mcp.py

2. Client Connects (Claude Code, Cursor, etc.)

→ lazyown_set_config({rhost: "10.10.11.78"})

3. Auto-Loop Engages

lazyown_auto_loop(max_steps=10)

4. Behind the Scenes:

a) lazynmap runs → writes scan_10.10.11.78.nmap.xml

b) sessions_watcher detects XML → FactStore.ingest_xml()

c) ObjectiveStore injects: "Review SMB services on 10.10.11.78:445"

d) Satellite model (qwen3.5:0.8b) selects: lazyown_addon_crackmapexec_smb

e) Tool executes → output lands in sessions/10.10.11.78/445/crackmapexec/

f) Watcher fires again → FactStore updates → next objective queued

g) Loop continues until high-value success or max_steps

5. You? You're reviewing the timeline while the framework does the grunt work.

It's Inception, but with mprotect(), sigaction(), and zero Leonardo DiCaprios.
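Steps a through g above form a bounded observe → decide → act loop. Below is a self-contained sketch of that control flow with stubbed tools. It assumes each tool returns new facts plus a "high-value success" flag; `max_steps` and `stop_on_high_value_success` mirror the lazyown_auto_loop arguments shown in this post, but the policy signature, the tool stubs, and the timeline return value are hypothetical.

```python
from typing import Callable, Dict, List, Optional, Tuple

# A tool takes the current facts and returns (new_facts, high_value_success).
Tool = Callable[[Dict[str, str]], Tuple[Dict[str, str], bool]]
Policy = Callable[[Dict[str, str], List[str]], Optional[str]]


def auto_loop(
    policy: Policy,
    tools: Dict[str, Tool],
    max_steps: int = 10,
    stop_on_high_value_success: bool = True,
) -> List[str]:
    """Run policy -> command -> execute -> learn until done; return the timeline."""
    facts: Dict[str, str] = {}
    timeline: List[str] = []
    for _ in range(max_steps):
        choice = policy(facts, timeline)  # decide
        if choice is None:  # policy has nothing left worth doing
            timeline.append("stop: no objective")
            break
        new_facts, high_value = tools[choice](facts)  # execute
        facts.update(new_facts)  # learn
        timeline.append(choice)
        if high_value and stop_on_high_value_success:
            timeline.append("stop: high-value success")
            break
    return timeline
```

The loop is bounded twice, by `max_steps` and by the policy returning `None`, so a confused satellite model cannot spin forever.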

🔧 Build It Like a Ghost (From Anywhere, Because Linux Is Freedom)

# 1. Clone LazyOwn (yes, it's still in /home/grisun0/)

git clone https://github.com/grisuno/LazyOwn.git && cd LazyOwn

# 2. Install minimal deps (watchdog is optional, but recommended)

pip install pyyaml watchdog

# 3. Configure your agentic backend

# Groq: export GROQ_API_KEY="gsk_..."

# Ollama: ollama pull llama3.2:3b # or qwen3.5:0.8b for satellites

# 4. Start the MCP server

python3 lazyown_mcp.py

# 5. In your MCP client:

lazyown_set_config({rhost: "10.10.11.78", lhost: "10.10.14.5"})

lazyown_auto_loop(max_steps=10, stop_on_high_value_success=True)

# 6. Watch sessions/ fill itself while you sip your beverage of choice.

💡 Pro Tip: Want to add that new GitHub tool you saw on Twitter?

lazyown_create_addon(
    name="nuclei",
    description="Scan for CVEs with style",
    repo_url="https://github.com/projectdiscovery/nuclei.git",
    install_command="go install -v github.com/projectdiscovery/nuclei/v2/cmd/nuclei@latest",
    execute_command="nuclei -u http://{rhost} -t cve/ -o {outputdir}/nuclei.txt",
    params=[{"name": "rhost", "required": True}],
)

# Now you have `lazyown_addon_nuclei` as an MCP tool. Zero Python required.
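One plausible way an addon definition like this becomes an MCP tool: turn `params` into the JSON Schema that MCP tool definitions carry as `inputSchema`, and fill the `{placeholders}` in `execute_command` at call time. This is a sketch under assumptions: the helper names `addon_to_tool` and `render_command` and the choice to type every param as a string are illustrative, not how lazyown_create_addon is actually implemented.

```python
import shlex


def addon_to_tool(addon):
    """Build an MCP-style tool descriptor (name, description, inputSchema)."""
    params = addon.get("params", [])
    return {
        "name": f"lazyown_addon_{addon['name']}",
        "description": addon.get("description", ""),
        "inputSchema": {
            "type": "object",
            "properties": {p["name"]: {"type": "string"} for p in params},
            "required": [p["name"] for p in params if p.get("required")],
        },
    }


def render_command(addon, args, outputdir):
    """Fill {placeholders} in execute_command and split the result into argv.

    Values are shell-quoted before substitution, so shlex.split() keeps each
    one as a single argument; a missing placeholder raises KeyError.
    """
    values = {k: shlex.quote(str(v)) for k, v in args.items()}
    values["outputdir"] = shlex.quote(outputdir)
    return shlex.split(addon["execute_command"].format(**values))
```

Quoting before substitution matters here: an `rhost` value such as `x; rm -rf /` ends up as part of one literal argument instead of a second shell command, which keeps a satellite model's creative inputs out of your shell.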

🕵️ For Blue Teams: How to Spot This Sleepwalking Nightmare

Look for:

- Processes that spawn python3 skills/sessions_watcher.py & and then vanish from your radar,

- Files such as sessions/plan.txt that update themselves (spoiler: it's not cron),

- MCP tool calls with names like lazyown_addon_subfinder appearing out of nowhere,

- And above all: objectives that inject themselves into objectives.jsonl without a human commit.

But let's be real:

If your SIEM doesn't correlate event_engine.py + FactStore.ingest_text() + ObjectiveStore.inject(), you're not doing blue teaming; you're doing decorative logging.
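For defenders, that correlation can start as a simple time-window rule over the process and file-write events your telemetry already collects. The sketch below assumes a normalized event record (timestamp, kind, detail) rather than any specific SIEM or EDR schema; the `Event` class, the `INDICATORS` table, and the 60-second default window are hypothetical and would need tuning, and the indicator strings are taken from the process and file names listed above.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Event:
    ts: float    # epoch seconds
    kind: str    # "process" or "file_write" in this sketch
    detail: str  # command line or file path


INDICATORS = {
    "watcher": lambda e: e.kind == "process" and "sessions_watcher.py" in e.detail,
    "plan": lambda e: e.kind == "file_write" and e.detail.endswith("plan.txt"),
    "objective": lambda e: e.kind == "file_write" and e.detail.endswith("objectives.jsonl"),
}


def correlate(events: Iterable[Event], window: float = 60.0) -> List[float]:
    """Return timestamps of watcher launches followed by all indicators within `window` s."""
    ordered = sorted(events, key=lambda e: e.ts)
    alerts = []
    for i, start in enumerate(ordered):
        if not INDICATORS["watcher"](start):
            continue
        seen = {"watcher"}
        for later in ordered[i + 1:]:
            if later.ts - start.ts > window:
                break  # events are sorted, so nothing later can fall in the window
            for name, match in INDICATORS.items():
                if match(later):
                    seen.add(name)
        if seen == set(INDICATORS):
            alerts.append(start.ts)
    return alerts
```

Any single indicator is noisy on its own; requiring the watcher launch, the self-updating plan file, and the objective append inside one window is what turns three weak signals into one alert.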

⚠️ Disclaimer (Because HR Exists)

This functionality is for:

- Authorized red teaming,

- Research into agentic architectures,

- And making EDR vendors ask "why doesn't our agent do this?".

Do not use it on systems you don't own or aren't explicitly authorized to test. Misuse may result in:

- Your manager asking if you've "considered a career in distributed philosophy",

- A satellite model deciding that rm -rf / is "the optimal cleanup strategy",

- Or worse: LazyOwn working too well, and you having no excuses left for missing the report deadline.

🎁 Try It Yourself (Ethically, You Dreamer)

# 1. Clone LazyOwn

git clone https://github.com/grisuno/LazyOwn.git && cd LazyOwn

# 2. Install deps

pip install pyyaml watchdog

# 3. Set your backend

export GROQ_API_KEY="gsk_..." # or use Ollama locally

# 4. Launch MCP server

python3 lazyown_mcp.py

# 5. In your MCP client:

lazyown_set_config({rhost: "10.10.11.78"})

lazyown_auto_loop(max_steps=5)

# 6. Watch the magic. Or the segfaults. Either way, it's educational.

🔗 Repo: github.com/grisuno/LazyOwn

☕ Buy Me a Satellite: ko-fi.com/Y8Y2Z73AV

📜 License: GPL v3 (because free code is like coffee: better when shared)

💤 Final Thought

In a world of agents that "reason" for 200 tokens just to decide whether to ping…

The real advantage isn't having the biggest model.

It's having the shortest loop between observation → decision → execution.

LazyOwn doesn't want to replace you. It wants you to delegate the boring stuff to 0.8B satellites, and save your brain for what matters: figuring out why the target has port 31337 open with banner "Welcome to Hell v0.1".

So go forth. Configure. Delegate. And remember:

"If your agent needs more than three tools to run enum4linux, it's not agentic; it's bureaucracy on steroids."

Dr. grisun0 McAgent, signing off from a PROT_NONE region while an abliterated qwen3.5:0.8b handles the Kerberoasting.

🛰️ Be small. Be fast. Be gone.

#LazyOwn #AgenticMCP #SatelliteModels #RedTeamAutomation #ReActNotReact #OllamaOverOracle #SmallModelsBigDamage #grisun0DidntWantThisButHereWeAre