A week later, a similar issue shows up again.

Different system. Same pattern.

At some point, it starts to feel less like a list of isolated problems and more like a system producing them.

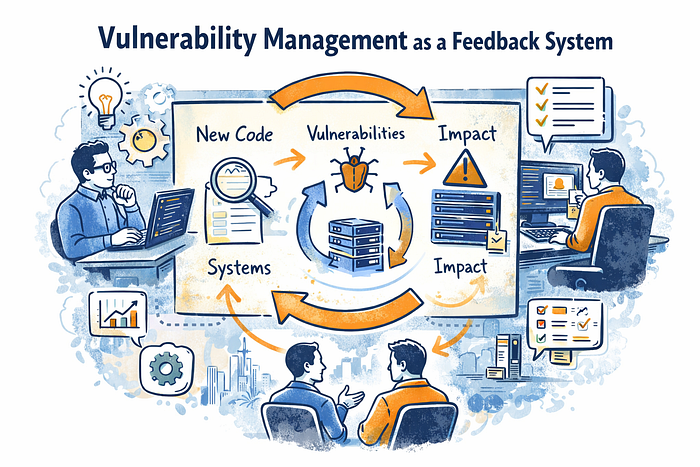

This is what has led me to rethink vulnerability management: not as a process that patches vulnerabilities, but as a system that can learn from them.

1. The part we measure vs the part we don't

Most programs are very good at measuring:

- time to patch

- backlog size

- SLA compliance

These are visible, reportable, and easy to optimize.

What is less visible is how vulnerabilities move through the system:

- where they were introduced

- which controls saw them

- which controls missed them

If a vulnerability appears in production, it has already passed through multiple stages.

That journey is rarely examined.
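One way to make that journey examinable is to record, per vulnerability, where it entered and what each control did with it. The sketch below is a minimal Python data model under stated assumptions: the control names, the `introduced_at` field and the sample finding are hypothetical, and separating "ran" from "detected" is an assumed design choice that distinguishes controls that missed an issue from controls that never looked.

```python
from dataclasses import dataclass, field


@dataclass
class ControlResult:
    control: str    # hypothetical control name, e.g. "code_review", "sast"
    ran: bool       # did the control actually run against this change or asset?
    detected: bool  # did it flag this vulnerability?


@dataclass
class VulnJourney:
    vuln_id: str
    introduced_at: str  # where it entered: a commit, image, template or manual change
    results: list[ControlResult] = field(default_factory=list)

    def saw(self) -> list[str]:
        """Controls that ran and flagged the issue."""
        return [r.control for r in self.results if r.ran and r.detected]

    def missed(self) -> list[str]:
        """Controls that ran but did not flag the issue."""
        return [r.control for r in self.results if r.ran and not r.detected]

    def not_run(self) -> list[str]:
        """Controls that never had a chance to see it (out of scope, skipped, failed)."""
        return [r.control for r in self.results if not r.ran]


journey = VulnJourney(
    vuln_id="FINDING-001",  # hypothetical identifier
    introduced_at="dependency bump in service-a",
    results=[
        ControlResult("code_review", ran=True, detected=False),
        ControlResult("dependency_scan", ran=True, detected=True),
        ControlResult("runtime_scan", ran=False, detected=False),
    ],
)
print("saw:", journey.saw())          # saw: ['dependency_scan']
print("missed:", journey.missed())    # missed: ['code_review']
print("not run:", journey.not_run())  # not run: ['runtime_scan']
```

In this made-up example the dependency scanner did flag the issue and it still reached production, which is exactly the kind of case worth examining rather than just closing.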

2. Closing the loop across the lifecycle

In most environments, controls already exist:

- code review, SAST, dependency scanning

- infrastructure and runtime scanning

But they operate more like checkpoints than a connected system.

A feedback view asks:

- was this vulnerability detectable earlier?

- if yes, why was it not stopped?

- if not, what does that say about earlier coverage?

Even partial answers can be useful. Over time, patterns start to emerge:

- certain classes of issues consistently bypass specific controls

- some controls generate signals that are not acted on

That says less about individual findings and more about how the system behaves.
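Once individual journeys are recorded, those patterns can be counted rather than guessed. A minimal Python sketch, assuming each finding has already been labelled with an issue class and, per control, one of three hypothetical outcomes: `blocked`, `flagged_not_acted` or `missed`. The labels, the `min_samples` cutoff and the sample data are illustrative, not a standard taxonomy.

```python
from collections import Counter
from typing import NamedTuple


class ControlOutcome(NamedTuple):
    issue_class: str  # e.g. "injection", "outdated-dependency" (illustrative labels)
    control: str      # e.g. "sast", "dependency_scan"
    outcome: str      # "blocked", "flagged_not_acted" or "missed"


def miss_rates(outcomes, min_samples=3):
    """Share of outcomes per (issue_class, control) where the control missed entirely.

    Pairs with fewer than min_samples observations are skipped to avoid
    reading patterns into one or two findings.
    """
    outcomes = list(outcomes)  # allow generators; we iterate twice
    totals = Counter((o.issue_class, o.control) for o in outcomes)
    misses = Counter((o.issue_class, o.control) for o in outcomes if o.outcome == "missed")
    return {key: misses[key] / n for key, n in totals.items() if n >= min_samples}


def unacted_signals(outcomes):
    """Per control, how many findings it flagged that nobody acted on."""
    return Counter(o.control for o in outcomes if o.outcome == "flagged_not_acted")


history = [
    ControlOutcome("injection", "sast", "missed"),
    ControlOutcome("injection", "sast", "missed"),
    ControlOutcome("injection", "sast", "blocked"),
    ControlOutcome("outdated-dependency", "dependency_scan", "flagged_not_acted"),
    ControlOutcome("outdated-dependency", "dependency_scan", "flagged_not_acted"),
    ControlOutcome("outdated-dependency", "dependency_scan", "blocked"),
]
print(miss_rates(history))
print(unacted_signals(history))
```

In this toy history, SAST misses two of three injection findings, while the dependency scanner catches outdated dependencies but its signals are mostly not acted on. Those are two different problems: one is coverage, the other is follow-through.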

3. Scanning as both assessment and discovery

Vulnerability scanning is usually scoped based on known assets, often sourced from a CMDB.

In practice, environments are less tidy:

- assets are created outside standard workflows

- ephemeral resources complicate tracking

So scanning can also act as a discovery mechanism:

- identifying assets not currently tracked

- feeding those findings back into asset inventory

This creates a loop where:

- asset inventory informs scanning

- scanning improves asset visibility
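The reconciliation step can start as a set difference between the inventory and what scanning actually reached. The Python sketch below assumes both sides can be reduced to comparable identifiers (here, hostnames); the `normalize` rules and example hostnames are hypothetical, and real environments often need matching on several attributes, such as IP address or cloud instance ID.

```python
def normalize(asset_id: str) -> str:
    """Hypothetical normalization: case-insensitive names, no surrounding
    whitespace, no trailing DNS dot. Real inventories usually need more."""
    return asset_id.strip().lower().rstrip(".")


def reconcile(cmdb_assets, discovered_assets):
    """Compare the inventory with what scanning actually found.

    untracked: seen by scanning but missing from the inventory (candidates to add)
    unseen:    in the inventory but not reached by this scan (decommissioned,
               ephemeral, or outside scan scope; worth a look either way)
    """
    cmdb = {normalize(a) for a in cmdb_assets}
    found = {normalize(a) for a in discovered_assets}
    return {
        "untracked": sorted(found - cmdb),
        "unseen": sorted(cmdb - found),
    }


cmdb = ["web-01.example.internal", "db-01.example.internal"]
scanned = ["WEB-01.example.internal.", "build-agent-7.example.internal"]
print(reconcile(cmdb, scanned))
# {'untracked': ['build-agent-7.example.internal'], 'unseen': ['db-01.example.internal']}
```

`untracked` is what feeds back into the inventory. `unseen` is the other half of the loop: an inventory entry that scanning never reaches is either stale, ephemeral, or a gap in scan scope.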

4. What scan results do not tell you

A scan result looks simple: either something is vulnerable or it is not.

In reality, scan results sit on top of a system with tradeoffs.

Every scanner makes choices:

- between false positives and false negatives

- between depth and coverage

- between authenticated and unauthenticated visibility

For example:

- unauthenticated scans scale well but miss internal context

- authenticated scans see more but are harder to maintain

- external scans show exposure; internal scans show reachability

A scan result is not just an answer. It is the output of:

- where you chose to look

- how you chose to look

- and what you chose to prioritize

So instead of just asking "is this clean?", it may also be useful to ask:

- what are we not seeing?

- where are our blind spots likely to be?

Some ways to probe that:

- compare results across tools in targeted areas

- correlate with pentest findings

- review incidents involving previously undetected vulnerabilities

- analyze where scans consistently fail or skip
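The first and last probes in that list lend themselves to simple bookkeeping. A minimal Python sketch, assuming findings from two scanners can be normalized to `(asset, vuln_id)` pairs over the same scope, and that each scan attempt is logged with a status; the status values, the 0.5 threshold and the sample data are hypothetical.

```python
from collections import defaultdict


def compare_tools(findings_a, findings_b):
    """Findings are (asset, vuln_id) pairs from two scanners over the same scope."""
    a, b = set(findings_a), set(findings_b)
    return {"both": a & b, "only_a": a - b, "only_b": b - a}


def chronic_scan_gaps(runs, threshold=0.5):
    """runs: (asset, status) records, status being "ok", "failed" or "skipped".

    Returns assets where at least `threshold` of scan attempts did not complete,
    i.e. places the scan results are quietly silent about.
    """
    attempts = defaultdict(int)
    gaps = defaultdict(int)
    for asset, status in runs:
        attempts[asset] += 1
        if status != "ok":
            gaps[asset] += 1
    return sorted(a for a, n in attempts.items() if gaps[a] / n >= threshold)


tool_a = [("web-01", "VULN-1"), ("web-01", "VULN-2")]
tool_b = [("web-01", "VULN-2"), ("web-01", "VULN-3")]
print(compare_tools(tool_a, tool_b))

runs = [
    ("web-01", "ok"), ("web-01", "ok"),
    ("db-01", "failed"), ("db-01", "skipped"), ("db-01", "ok"),
]
print(chronic_scan_gaps(runs))  # ['db-01']
```

A finding reported by only one tool is not automatically a miss by the other: it may be a false positive, a difference in detection logic, or a difference in access such as authenticated versus unauthenticated scanning. The disagreement marks where to look, not the answer.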

The goal is not perfect detection. It is understanding the limits of your detection system.

5. Prioritization as feedback from the environment

Severity scores describe vulnerabilities in general.

Environments shape how they actually matter.

The same vulnerability can look very different depending on:

- exposure of the asset

- exploit likelihood

- known exploitation activity

So prioritization becomes a form of feedback:

- not just how severe something is

- but how it interacts with your specific environment
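To make the idea concrete, here is a minimal sketch of a context-adjusted priority in Python. The inputs mirror the three factors above. An EPSS-style probability and a known-exploited flag (for example, from CISA's Known Exploited Vulnerabilities catalog) are plausible sources, but the weights and the formula itself are illustrative assumptions, not an established scoring method.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    vuln_id: str
    cvss_base: float            # generic severity, 0.0-10.0
    internet_exposed: bool      # is the affected asset reachable from outside?
    exploit_probability: float  # 0.0-1.0, e.g. an EPSS-style estimate
    known_exploited: bool       # e.g. present in a known-exploited catalog such as CISA KEV


def contextual_priority(f: Finding) -> float:
    """Illustrative scoring only: the weights below are assumptions, not a standard."""
    score = f.cvss_base / 10                     # generic severity, 0..1
    score *= 1.0 if f.internet_exposed else 0.5  # internal-only assets count half
    score *= 0.5 + f.exploit_probability         # likelihood factor, 0.5..1.5
    if f.known_exploited:
        score *= 2                               # active exploitation dominates
    return round(score, 3)


findings = [
    Finding("VULN-A", cvss_base=9.8, internet_exposed=False,
            exploit_probability=0.01, known_exploited=False),
    Finding("VULN-B", cvss_base=7.5, internet_exposed=True,
            exploit_probability=0.60, known_exploited=True),
]
for f in sorted(findings, key=contextual_priority, reverse=True):
    print(f.vuln_id, f.cvss_base, contextual_priority(f))
```

With these made-up inputs, VULN-B ranks above VULN-A despite a lower base score, because it is exposed and known to be exploited. The exact numbers matter less than the fact that the environment, not the generic score, decides the order.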

6. The gap before a CVE exists

Vulnerability management often starts at disclosure.

But there is a lead-up:

- vulnerabilities are researched before they are published

- patches take time to be released

- by the time a CVE appears, details may already be understood by attackers

With shorter exploitation timelines, that gap becomes more relevant.

Some teams are experimenting with earlier signals:

- monitoring upstream repositories and issue discussions

- tracking dependency changes

- incorporating threat intelligence

This is still evolving and not easy to operationalize, but it extends the idea of feedback further upstream.
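Two of those signals can be approximated cheaply. The Python sketch below diffs two dependency snapshots (for example, parsed from lockfiles) and flags upstream commit messages or issue titles that look security-related. Fetching those messages is left out, and the keyword list, package names and messages are hypothetical; keyword matching is noisy and will produce both false positives and misses.

```python
import re

# Hypothetical keyword list; expect both false positives and misses.
SECURITY_HINTS = re.compile(
    r"\b(security|vulnerab\w*|overflow|injection|xss|rce|cve|sanitiz\w*|bypass)\b",
    re.IGNORECASE,
)


def dependency_changes(before, after):
    """Diff two {package: version} snapshots, e.g. parsed from lockfiles."""
    added = {n: v for n, v in after.items() if n not in before}
    removed = {n: v for n, v in before.items() if n not in after}
    changed = {
        n: (before[n], after[n])
        for n in sorted(before.keys() & after.keys())
        if before[n] != after[n]
    }
    return added, removed, changed


def flag_upstream_messages(messages):
    """Return commit messages or issue titles that look security-related."""
    return [m for m in messages if SECURITY_HINTS.search(m)]


before = {"libfoo": "1.2.0", "libbar": "3.1.0"}
after = {"libfoo": "1.2.1", "libbaz": "0.4.0"}
print(dependency_changes(before, after))

upstream = [
    "Fix heap overflow in header parser",
    "Update README",
    "Sanitize path before join",
]
print(flag_upstream_messages(upstream))
# ['Fix heap overflow in header parser', 'Sanitize path before join']
```

In practice this is a triage aid: a security-looking upstream fix in a dependency you just changed, or have not yet changed, is worth a closer look before any CVE exists.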

7. Point-in-time visibility vs continuous exposure

Scans capture a moment.

Exposure happens over time.

A system can be:

- vulnerable for a period

- upgraded later

- clean in the next scan

The scan shows the current state, not the past.

If that matters, then the question becomes:

- can we reconstruct what was true before?

That often depends on:

- logging

- change tracking

- asset versioning
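If change history is available, past exposure can be reconstructed from it. A minimal Python sketch, assuming a complete log of installed versions for one asset and a simple numeric version scheme; the advisory range (below 2.4.1) and the dates are hypothetical.

```python
from datetime import datetime


def parse_version(v: str) -> tuple[int, ...]:
    # Simplistic: assumes purely numeric dotted versions like "2.4.1".
    return tuple(int(part) for part in v.split("."))


def affected(v: str) -> bool:
    # Hypothetical advisory: versions below 2.4.1 are affected.
    return parse_version(v) < (2, 4, 1)


def exposure_windows(history, is_vulnerable, now):
    """history: (timestamp, installed_version) change events for one asset.

    Returns (start, end) intervals during which the installed version was
    vulnerable; the last recorded version is assumed to persist until `now`.
    """
    events = sorted(history)
    windows = []
    for i, (ts, version) in enumerate(events):
        end = events[i + 1][0] if i + 1 < len(events) else now
        if not is_vulnerable(version):
            continue
        if windows and windows[-1][1] == ts:
            windows[-1] = (windows[-1][0], end)  # extend a contiguous vulnerable period
        else:
            windows.append((ts, end))
    return windows


history = [
    (datetime(2024, 1, 10), "2.3.0"),
    (datetime(2024, 3, 1), "2.4.0"),
    (datetime(2024, 5, 20), "2.4.1"),
]
for start, end in exposure_windows(history, affected, now=datetime(2024, 6, 30)):
    print(start.date(), "->", end.date(), f"({(end - start).days} days)")
# 2024-01-10 -> 2024-05-20 (131 days)
```

A scan run on 1 June 2024 would report this asset as clean, even though the reconstructed history shows it was exposed for about four months. The result is only as good as the change log: gaps in logging become gaps in the reconstructed window.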

8. When vulnerabilities repeat

Backlogs are usually measured by size and speed.

Another angle is recurrence.

If the same vulnerabilities, such as CVE-2024-xxxx or older ones, continue to appear:

- in new systems

- after rebuilds

- across environments

then they may not be isolated issues.

They may be signals:

- base images are outdated

- templates carry forward insecure defaults

- pipelines allow known issues to re-enter

At that point, fixing the vulnerability does not remove the condition that created it.
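Recurrence can be measured alongside backlog size and speed. A minimal Python sketch, assuming findings record the asset and the base image it was built from, and that finding status changes are logged in time order; the field names, image tags and IDs are hypothetical.

```python
from collections import defaultdict


def recurring_across_assets(findings, min_assets=3):
    """findings: dicts with "vuln_id", "asset" and "base_image" keys.

    Returns {vuln_id: [base images involved]} for vulnerabilities seen on at
    least `min_assets` distinct assets, a hint that a shared source carries them.
    """
    assets = defaultdict(set)
    images = defaultdict(set)
    for f in findings:
        assets[f["vuln_id"]].add(f["asset"])
        images[f["vuln_id"]].add(f["base_image"])
    return {v: sorted(images[v]) for v, seen in assets.items() if len(seen) >= min_assets}


def reopen_counts(events):
    """events: time-ordered (vuln_id, asset, status) with status "open" or "fixed".

    Counts how often a finding comes back on the same asset after being fixed,
    e.g. after a rebuild from an unpatched image.
    """
    last_status = {}
    reopened = defaultdict(int)
    for vuln_id, asset, status in events:
        key = (vuln_id, asset)
        if status == "open" and last_status.get(key) == "fixed":
            reopened[key] += 1
        last_status[key] = status
    return dict(reopened)


findings = [
    {"vuln_id": "VULN-1", "asset": "svc-a", "base_image": "base:2023.10"},
    {"vuln_id": "VULN-1", "asset": "svc-b", "base_image": "base:2023.10"},
    {"vuln_id": "VULN-1", "asset": "svc-c", "base_image": "base:2024.01"},
    {"vuln_id": "VULN-2", "asset": "svc-a", "base_image": "base:2023.10"},
]
print(recurring_across_assets(findings))
# {'VULN-1': ['base:2023.10', 'base:2024.01']}

events = [
    ("VULN-1", "svc-a", "open"),
    ("VULN-1", "svc-a", "fixed"),
    ("VULN-1", "svc-a", "open"),   # reappeared, e.g. after a rebuild
    ("VULN-1", "svc-a", "fixed"),
    ("VULN-1", "svc-a", "open"),
]
print(reopen_counts(events))  # {('VULN-1', 'svc-a'): 2}
```

A vulnerability that spans several assets and images, or one that keeps reopening on the same asset, points away from the individual host and toward whatever builds it.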

9. Incentives shape what the system produces

Even with strong tooling and visibility, outcomes are influenced by what teams are measured on.

If teams are rewarded for:

- closing tickets quickly

- meeting SLAs

- maintaining uptime

they may naturally optimize for:

- speed of remediation

- minimal disruption

rather than:

- removing root causes

- preventing reintroduction

What the system produces is often aligned with what it is incentivized to produce.

So feedback loops only work if there is space and incentive to act on them, not just to process them.

10. What are we actually optimizing?

Automation often focuses on patch deployment.

But remediation includes:

- validation

- regression testing

- ensuring systems remain stable

In many cases, deployment is fast. Validation is slower.

So the system may be optimized for:

- speed of change

but constrained by:

- confidence in change

That gap is worth paying attention to.
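One way to see that gap is to time the two phases separately. A minimal Python sketch, assuming each remediation record has hypothetical `opened`, `deployed` and `validated` timestamps, where `validated` stays empty until someone confirms the fix holds; the sample data is made up.

```python
from datetime import datetime
from statistics import median


def hours_between(start, end):
    return (end - start).total_seconds() / 3600


def phase_medians(changes):
    """changes: dicts with "opened", "deployed" and "validated" datetimes.

    "validated" is None until someone confirms the fix holds (tests pass,
    rescan is clean, the service stays healthy; the definition is yours).
    Assumes at least one change and that every change has been deployed.
    """
    to_deploy = [hours_between(c["opened"], c["deployed"]) for c in changes]
    to_validate = [
        hours_between(c["deployed"], c["validated"])
        for c in changes
        if c["validated"] is not None
    ]
    return {
        "median_hours_to_deploy": median(to_deploy),
        "median_hours_to_validate": median(to_validate) if to_validate else None,
        "unvalidated": sum(1 for c in changes if c["validated"] is None),
    }


changes = [
    {"opened": datetime(2024, 5, 1, 9), "deployed": datetime(2024, 5, 1, 13),
     "validated": datetime(2024, 5, 3, 13)},
    {"opened": datetime(2024, 5, 2, 9), "deployed": datetime(2024, 5, 2, 11),
     "validated": datetime(2024, 5, 2, 23)},
    {"opened": datetime(2024, 5, 3, 9), "deployed": datetime(2024, 5, 3, 15),
     "validated": None},
]
print(phase_medians(changes))
# {'median_hours_to_deploy': 4.0, 'median_hours_to_validate': 30.0, 'unvalidated': 1}
```

In this toy data, fixes reach production in a median of 4 hours, but confirmation takes a median of 30 hours, and one change has not been validated at all. Reporting only the first number would make the process look faster than it is.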

Conclusion

Seen this way, vulnerability management starts to look less like a queue of issues and more like a system producing signals.

Each vulnerability is not just something to fix, but something to learn from:

- where controls worked

- where they did not

- how issues were introduced and allowed through

From that perspective, vulnerabilities themselves may not be the core weakness.

Fixing them treats the symptom.

The real problem is the set of design and process decisions that keep producing them.

If those do not change, the system will keep producing the same outcomes, no matter how fast we patch.