Everyone talks about using AI for bug bounty. Most of it is surface-level: "I asked ChatGPT to write a payload." That's not augmentation. That's autocomplete.

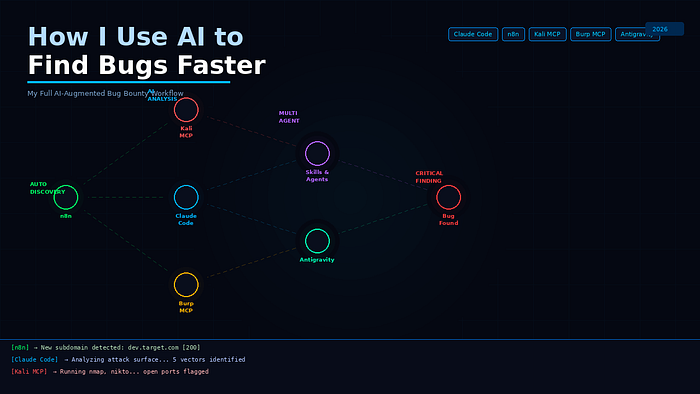

Real AI-augmented bug bounty looks different. It's a connected pipeline where automation handles the boring parts, AI handles the analysis, and you focus on what actually requires a human — creative exploitation and vulnerability chaining.

This is my actual workflow. Every tool mentioned is something I use actively.

The Core Idea

Traditional bug bounty is mostly manual and mostly slow. You run recon, manually review results, test endpoints one by one, write reports from scratch. It works — but it doesn't scale.

My approach flips this. Automation handles continuous monitoring and initial recon. AI handles analysis, payload suggestions, and report writing. I handle the creative thinking and the actual exploitation.

The result: I spend more time on interesting targets and less time on grunt work.

The Stack

Here's everything in the pipeline:

n8n — Automated subdomain monitoring and pipeline orchestration

Claude Code — Core AI brain — analysis, scripting, report writing

Kali MCP Server — Connects Kali Linux tools directly to Claude

Burp Suite MCP — Connects Burp Suite findings directly to Claude

Antigravity — AI-powered recon and attack surface mapping

Canvaman — Claude Code plugin to reduce token usage and optimize context window

Superpower Plugin — Enhanced Claude interface with advanced prompting

Multi-agent setup — Parallel AI agents handling different recon tasks simultaneously

Custom skills — Domain-specific Claude skills built for bug bounty tasks

Stage 1 — Automated Asset Discovery with n8n

The pipeline starts before I even open my laptop.

n8n runs on my server and monitors up to 10 wildcard bug bounty targets every 4 days automatically. The workflow runs subfinder and findomain against every target, merges and deduplicates results, diffs against the previous baseline, and sends new subdomains straight to my Telegram with httpx probing results already included — status codes, page titles, tech stack.
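The merge, deduplicate, and diff step can be sketched in a few lines of Python. This is a minimal illustration, not the actual n8n workflow: the function names and the in-memory baseline set are assumptions, and the tool output is taken to be one hostname per line.

```python
def normalize(host: str) -> str:
    """Lowercase and strip whitespace and a trailing dot so duplicates compare equal."""
    return host.strip().lower().rstrip(".")


def merge_and_diff(
    tool_outputs: list[list[str]], baseline: set[str]
) -> tuple[set[str], set[str]]:
    """Merge per-tool results, deduplicate, and return (new_hosts, updated_baseline)."""
    current = {
        normalize(host)
        for output in tool_outputs
        for host in output
        if host.strip()
    }
    new_hosts = current - baseline
    # Keep hosts that vanished in the baseline so they do not re-alert later.
    return new_hosts, baseline | current


if __name__ == "__main__":
    subfinder_out = ["dev.example.com", "api.example.com"]
    findomain_out = ["API.example.com.", "staging.example.com"]
    new, updated = merge_and_diff([subfinder_out, findomain_out], {"api.example.com"})
    print(sorted(new))  # ['dev.example.com', 'staging.example.com']
```

Taking the union for the updated baseline is a design choice: a host that drops out of one scan and reappears in the next will not trigger a second alert.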

When I wake up, my Telegram already has:

🔍 New Subdomains Discovered!

Target: [target].com

New domains found: 7

Alive (httpx): 4

https://dev.[target].com [200] [nginx]

https://api-staging.[target].com [403] [cloudflare]

I didn't run a single command. The new assets came to me.

This is the highest-ROI part of the entire setup. New assets are often misconfigured assets, and being first to test them matters.
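As a rough sketch, the alert text shown above could be assembled from probe lines like this. The line format (`URL [status] [tag]`) is taken from the example message; `parse_probe_line` and `build_alert` are hypothetical helper names, and actually sending the message to Telegram is left out.

```python
import re

# Matches lines such as: https://dev.example.com [200] [nginx]
LINE_RE = re.compile(r"^(\S+)\s+\[(\d{3})\]\s*(.*)$")


def parse_probe_line(line: str):
    """Parse one probe line into a dict, or return None if it does not match."""
    match = LINE_RE.match(line.strip())
    if not match:
        return None
    url, status, rest = match.groups()
    extras = re.findall(r"\[([^\]]*)\]", rest)  # any further bracketed tags
    return {"url": url, "status": int(status), "extras": extras}


def build_alert(target: str, new_hosts: list[str], probe_lines: list[str]) -> str:
    """Build the plain-text alert body from new hosts and probe output."""
    alive = [p for p in map(parse_probe_line, probe_lines) if p]
    lines = [
        "New Subdomains Discovered!",
        f"Target: {target}",
        f"New domains found: {len(new_hosts)}",
        f"Alive (httpx): {len(alive)}",
    ]
    for probe in alive:
        tags = "".join(f" [{tag}]" for tag in probe["extras"])
        lines.append(f"{probe['url']} [{probe['status']}]{tags}")
    return "\n".join(lines)
```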

Stage 2 — Feeding New Assets into Claude Code

Here's where it gets interesting.

The new subdomains from n8n don't just sit in Telegram. They feed directly into Claude Code with a structured advanced prompt and a set of custom skills built specifically for bug bounty recon.

The prompt tells Claude exactly what to do with each new domain:

New asset discovered: dev.[target].com [200] [nginx]

Tasks:

1. Enumerate directories and endpoints

2. Check for common misconfigurations

3. Identify technology stack and known CVEs

4. Suggest top 5 attack vectors based on the response headers and tech

5. Generate targeted payloads for each vector

6. Flag anything that looks like internal/staging exposure

Claude Code processes this, runs analysis using the connected tools, and returns a prioritized attack plan — not a generic checklist, but a specific plan for that specific asset.
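The prompt structure above lends itself to a small template function. The sketch below only builds the prompt string; the task list is copied from the prompt shown, while `build_recon_prompt` is a hypothetical name and how the string reaches Claude Code is not covered here.

```python
# Task list copied from the prompt above.
TASKS = [
    "Enumerate directories and endpoints",
    "Check for common misconfigurations",
    "Identify technology stack and known CVEs",
    "Suggest top 5 attack vectors based on the response headers and tech",
    "Generate targeted payloads for each vector",
    "Flag anything that looks like internal/staging exposure",
]


def build_recon_prompt(asset_line: str, tasks: list[str] = TASKS) -> str:
    """Render the structured recon prompt for one newly discovered asset."""
    numbered = "\n".join(f"{i}. {task}" for i, task in enumerate(tasks, start=1))
    return f"New asset discovered: {asset_line.strip()}\n\nTasks:\n{numbered}"


if __name__ == "__main__":
    print(build_recon_prompt("dev.example.com [200] [nginx]"))
```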

Stage 3 — Kali MCP Server + Burp Suite MCP

This is the part that changes everything.

The Kali MCP Server connects my Kali Linux environment directly to Claude. Instead of switching between terminal and AI chat, I describe what I want in plain English and Claude executes the actual Kali commands, reads the output, and interprets the results.

Me: Run nmap on dev.[target].com and tell me what's interesting

Claude: [runs nmap, reads output, highlights open ports worth investigating]

The Burp Suite MCP does the same for web application testing. Burp findings flow directly into Claude's context. Claude can analyze request/response pairs, suggest injection points, and generate modified requests — all without me copying and pasting between tools.

The feedback loop is tight. Scan → analyze → suggest → test → repeat. What used to take 30 minutes of context switching now happens in one conversation.
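To make "suggest injection points" concrete, here is a minimal sketch of the kind of analysis involved: listing user-controlled inputs in a raw HTTP request. It is an illustration in plain Python, not what Claude or the Burp Suite MCP actually runs, and `candidate_injection_points` is a hypothetical name. It assumes a well-formed request with a standard request line.

```python
import json
from urllib.parse import parse_qsl, urlsplit


def candidate_injection_points(raw_request: str) -> list[tuple[str, str]]:
    """Return (location, name) pairs for query, cookie, form, and JSON inputs."""
    head, _, body = raw_request.replace("\r\n", "\n").partition("\n\n")
    lines = head.split("\n")
    _method, target, _version = lines[0].split(" ", 2)

    headers = {}
    for line in lines[1:]:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()

    points = [
        ("query", key)
        for key, _ in parse_qsl(urlsplit(target).query, keep_blank_values=True)
    ]
    for cookie in headers.get("cookie", "").split(";"):
        name = cookie.partition("=")[0].strip()
        if name:
            points.append(("cookie", name))

    content_type = headers.get("content-type", "")
    if "application/x-www-form-urlencoded" in content_type:
        points += [("form", k) for k, _ in parse_qsl(body, keep_blank_values=True)]
    elif "application/json" in content_type:
        try:
            data = json.loads(body)
        except json.JSONDecodeError:
            data = None
        if isinstance(data, dict):
            points += [("json", key) for key in data]  # top-level keys only
    return points
```

Only top-level JSON keys are listed, which keeps the sketch short; nested objects would need a recursive walk.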

Stage 4 — Antigravity for Attack Surface Mapping

Antigravity handles the broader attack surface analysis — mapping relationships between assets, identifying patterns across subdomains, and surfacing anomalies that are easy to miss when you're looking at domains one at a time.

Where n8n finds new assets, Antigravity helps understand how they relate to each other and where the interesting edges are.
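One simple way to picture "patterns across subdomains" is to group hosts by a shared attribute and flag the ones that stand alone. The sketch below is a generic illustration of that idea and says nothing about how Antigravity works internally; the record fields (`host`, `tech`) and function names are assumptions.

```python
from collections import defaultdict


def group_by(records: list[dict], key: str) -> dict[str, list[str]]:
    """Group hostnames by the value of one attribute, e.g. 'tech' or 'ip'."""
    groups = defaultdict(list)
    for record in records:
        groups[record[key]].append(record["host"])
    return dict(groups)


def outliers(records: list[dict], key: str) -> list[str]:
    """Hosts whose value for `key` is shared with no other host."""
    return sorted(
        hosts[0] for hosts in group_by(records, key).values() if len(hosts) == 1
    )


if __name__ == "__main__":
    records = [
        {"host": "www.example.com", "tech": "nginx"},
        {"host": "api.example.com", "tech": "nginx"},
        {"host": "legacy.example.com", "tech": "tomcat"},
    ]
    print(outliers(records, "tech"))  # ['legacy.example.com']
```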

Stage 5 — Multi-Agent Setup with Custom Skills

For larger programs, I run multiple Claude agents in parallel — each handling a different aspect of recon simultaneously.

One agent handles subdomain analysis. Another focuses on JavaScript file enumeration and endpoint discovery. Another handles parameter analysis and injection point identification. They work in parallel and their outputs feed into a single consolidated report.
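The fan-out and consolidation pattern can be sketched with Python's standard thread pool. The three agent functions here are placeholders that return canned strings; in a real setup each would drive its own Claude agent, and that wiring is not shown. All function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


# Placeholder agents: each stands in for one parallel recon task.
def subdomain_agent(target: str) -> list[str]:
    return [f"analyzed subdomains of {target}"]


def js_agent(target: str) -> list[str]:
    return [f"enumerated JavaScript files on {target}"]


def param_agent(target: str) -> list[str]:
    return [f"identified parameters on {target}"]


def run_agents(agents: dict, target: str) -> dict[str, list[str]]:
    """Run every agent against the target in parallel and collect results by name."""
    with ThreadPoolExecutor(max_workers=max(1, len(agents))) as pool:
        futures = {name: pool.submit(fn, target) for name, fn in agents.items()}
        return {name: future.result() for name, future in futures.items()}


def consolidate(results: dict[str, list[str]]) -> str:
    """Merge per-agent findings into one plain-text report."""
    lines = []
    for name in sorted(results):
        lines.append(f"[{name}]")
        lines.extend(f"  - {item}" for item in results[name])
    return "\n".join(lines)


if __name__ == "__main__":
    agents = {"subdomains": subdomain_agent, "javascript": js_agent, "params": param_agent}
    print(consolidate(run_agents(agents, "example.com")))
```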

The custom skills are the secret weapon. I've built domain-specific skills for bug bounty tasks — a recon skill, a payload generation skill, a report writing skill, a CVSS scoring skill. These are loaded into Claude Code and applied automatically based on the task.
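As an example of the logic a CVSS scoring skill might wrap, here is a small calculator for the CVSS v3.1 base score, using the metric weights and rounding rule from the public specification. It is a sketch of the formula, not the actual skill; it handles base metrics only and assumes a well-formed vector string.

```python
import math

WEIGHTS = {
    "AV": {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2},
    "AC": {"L": 0.77, "H": 0.44},
    "UI": {"N": 0.85, "R": 0.62},
    "CIA": {"H": 0.56, "L": 0.22, "N": 0.0},
}
# Privileges Required weights depend on whether Scope is Unchanged or Changed.
PR_WEIGHTS = {
    "U": {"N": 0.85, "L": 0.62, "H": 0.27},
    "C": {"N": 0.85, "L": 0.68, "H": 0.5},
}


def roundup(value: float) -> float:
    """Round up to one decimal place, as defined in the CVSS v3.1 specification."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score(vector: str) -> float:
    """Compute the base score for a vector like 'CVSS:3.1/AV:N/AC:L/...'."""
    metrics = dict(
        part.split(":") for part in vector.split("/") if not part.startswith("CVSS")
    )
    changed = metrics["S"] == "C"
    c, i, a = (WEIGHTS["CIA"][metrics[m]] for m in ("C", "I", "A"))
    iss = 1 - (1 - c) * (1 - i) * (1 - a)
    if changed:
        impact = 7.52 * (iss - 0.029) - 3.25 * (iss - 0.02) ** 15
    else:
        impact = 6.42 * iss
    exploitability = (
        8.22
        * WEIGHTS["AV"][metrics["AV"]]
        * WEIGHTS["AC"][metrics["AC"]]
        * PR_WEIGHTS[metrics["S"]][metrics["PR"]]
        * WEIGHTS["UI"][metrics["UI"]]
    )
    if impact <= 0:
        return 0.0
    total = impact + exploitability
    if changed:
        total *= 1.08
    return roundup(min(total, 10))


if __name__ == "__main__":
    print(base_score("CVSS:3.1/AV:N/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"))  # 9.8
```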

Instead of writing the same prompt from scratch every time, the skill handles the structure and I just provide the target-specific context.

Canvaman keeps token usage low inside Claude Code — compressing context so longer, more complex recon sessions don't hit token limits mid-workflow. Essential when running multi-step analysis on large targets.
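To illustrate the general idea of keeping tool output inside a context budget, here is a deliberately naive sketch: drop blank and repeated lines, then keep the head and tail if the result is still too long. This is not how Canvaman works; the function name and the character-based budget are assumptions chosen for simplicity.

```python
def compress_output(text: str, max_chars: int = 4000) -> str:
    """Drop blank and duplicate lines, then trim the middle if still over budget."""
    seen = set()
    kept = []
    for line in text.splitlines():
        line = line.rstrip()
        if not line or line in seen:
            continue
        seen.add(line)
        kept.append(line)

    compact = "\n".join(kept)
    if len(compact) <= max_chars:
        return compact

    # Keep the start and end, where scan banners and summaries usually sit.
    half = max_chars // 2
    return compact[:half] + "\n[... truncated ...]\n" + compact[-half:]
```

Scanner output tends to repeat the same line many times, so even this crude pass can shrink it considerably before it is handed to the model.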

Stage 6 — Report Writing

The last bottleneck in bug bounty is report writing. A well-written report gets triaged faster, gets higher severity ratings, and gets paid faster. A poor report gets questioned, delayed, or downgraded.

Claude Code writes the first draft. I feed it the finding details — the endpoint, the request, the response, the impact — and it generates a structured, professional report following HackerOne conventions.
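The finding details can be captured in a small structure before they go to Claude, which keeps every draft starting from the same inputs. The sketch below renders a bare skeleton from the four fields named above plus a title; the section names and the `Finding` class are assumptions, not an official HackerOne template.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str
    endpoint: str
    request: str
    response: str
    impact: str


def render_report(finding: Finding) -> str:
    """Render a plain-text report skeleton, one section per finding field."""
    sections = [
        ("Title", finding.title),
        ("Affected Endpoint", finding.endpoint),
        ("Request", finding.request),
        ("Response", finding.response),
        ("Impact", finding.impact),
    ]
    return "\n\n".join(f"## {name}\n{body.strip()}" for name, body in sections)


if __name__ == "__main__":
    draft = render_report(
        Finding(
            title="Example finding",
            endpoint="https://dev.example.com/api/items",
            request="GET /api/items?id=1 HTTP/1.1",
            response="HTTP/1.1 200 OK",
            impact="Placeholder impact statement.",
        )
    )
    print(draft)
```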

I review, adjust the technical details, add screenshots, and submit. What used to take an hour takes fifteen minutes.

The Real Advantage

The point of this setup isn't to automate bug bounty completely. You can't — the creative part is still human.

The point is to compress the time between "new asset exists" and "I'm actively testing it" — and to eliminate the repetitive manual work that doesn't require human judgment.

n8n finds the asset while I sleep. Claude Code analyzes it before I finish coffee. Kali MCP and Burp MCP run the initial tests without me switching contexts. By the time I'm sitting at my desk, I have a prioritized target list, an attack plan, and a list of already-tested vectors — ready for the parts that actually need me.

That's the leverage. Not replacing the hunter. Removing everything that slows the hunter down.

Getting Started

You don't need this entire stack on day one. Build it incrementally:

- Start with Claude Code — use it for recon analysis and report writing immediately

- Add n8n — set up the subdomain monitor for your active targets

- Add Burp Suite MCP — connect your Burp findings to Claude

- Add custom skills — build recon and payload skills as you identify repetitive prompts

- Add multi-agent workflows — once you're comfortable with single-agent flows

Each layer compounds. The stack gets more powerful as the pieces connect.

Final Thought

AI doesn't make you a better bug bounty hunter by knowing more than you. It makes you better by moving faster, staying consistent, and removing the friction between finding a target and actively testing it.

The hunters who figure this out early have a real edge. The window is still open.

Tools used: Claude Code · n8n · Kali MCP Server · Burp Suite MCP · Antigravity · Canvaman · Superpower Plugin

Tags: Bug Bounty · API Security · Cybersecurity · Ethical Hacking · Web Security