The Call That Started This Blog Post

Client calls me three days into a two-week engagement.

"Hey, we forgot to mention, can you also test our mobile app? And the AWS environment? Oh, and our dev team pushed a new API last week, can you cover that too?"

The original scope: one web application. Agreed price. Fixed timeline.

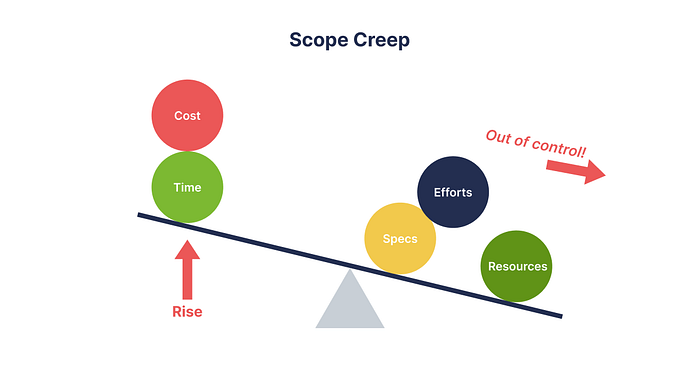

This is scope creep. And if you've done even a single pentest engagement, you've felt it. That slow, polite, completely reasonable-sounding expansion of work that wasn't in the contract, wasn't budgeted for, and will absolutely eat your timeline if you don't handle it right.

This post is how I handle it: the conversations, the contract language, the pushback techniques, and the client education that prevents 80% of these situations before they start.

🌊 1. Why Scope Creep Happens in Pentesting

Scope creep isn't always malicious. Usually it comes from one of these places:

- The client doesn't know what they don't know. They hired you to "test security." In their mind, that means everything. They didn't realize "one web app" and "our entire infrastructure" are orders-of-magnitude different engagements.

- Internal politics. Your primary contact agreed to scope. Then their manager found out and added requirements. Then the CISO added requirements. Now you're three meetings deep into a scope document that looks nothing like the original email.

- Discovery during the engagement. You find something interesting, say, an internal API the web app talks to. The client says "oh, can you test that too?" This one's genuinely hard to refuse because it feels related. But it might add 40 hours of work.

- Vague original scope. If your SOW says "test the company's web presence," you've handed the client a blank check to interpret that however they want.

The fix for most of these is the same: clarity upfront, documentation always, process for changes.

📝 2. Setting Expectations Before the Engagement Starts

The kickoff call is where you spend an hour on questions the client didn't expect. This is not rude. This is professional.

Questions I ask on every kickoff:

SCOPE DEFINITION

├── What specific URLs, IP ranges, or systems are in scope?

│ (Get exact: <https://app.company.com/v2> is different from <https://company.com>)

├── What is explicitly OUT of scope?

├── Are there any systems that must NOT be touched?

│ (Production databases, payment processors, medical systems)

├── What do we do if we discover an adjacent system that looks vulnerable?

│ (Stop? Document and report? Get approval to test?)

└── Are third-party services in scope? (CDN, cloud providers, subprocessors)

TIMING AND WINDOWS

├── What are the testing hours? (24/7? Business hours only? Night/weekend only?)

├── Are there maintenance windows or freezes we need to avoid?

├── What happens if we accidentally cause an outage?

└── Who do we call immediately if something breaks?

GOALS AND DELIVERABLES

├── What's the primary goal? (Compliance requirement? Board request? Real concern?)

├── Who is the audience for the final report? (Technical team? Board? Auditors?)

├── What format do you need the report in? (PDF? Executive summary only? CVSS scores?)

└── When do you need the report, and is that the draft or final version?

RULES OF ENGAGEMENT

├── Are social engineering attacks in scope? (Phishing, vishing, physical access?)

├── Are DoS tests permitted?

├── What credential access are we starting with? (Black box? Grey box? White box?)

└── Do we have written authorization from the asset owner?

(Especially important for cloud, SaaS, shared hosting)

Write all of this down. Send it back to them in an email. "Hi [name], confirming our discussion from today's kickoff, please review and confirm these details are accurate."

That email becomes your first line of defense when scope creep starts.

📄 3. Writing a Scope of Work That Actually Protects You

Your SOW is a legal document. Treat it like one. These are the clauses I consider non-negotiable.

The Scope Definition — Be Obsessively Specific

WEAK (Don't do this):

"Penetration testing of Company X's web applications

and associated infrastructure."

STRONG (Do this):

"Penetration testing limited to the following assets:

- <https://app.companyX.com> (production)

- <https://api.companyX.com/v1> and /v2 endpoints

- IP range: 203.0.113.0/24

Explicitly excluded from scope:

- companyX.com (marketing site, managed by third party)

- companyX.atlassian.net (out of scope per client request)

- Any AWS, GCP, or Azure infrastructure not listed above

- Physical security testing

- Social engineering"

The Change Request Clause

This single clause prevents 90% of mid-engagement scope arguments:

SCOPE CHANGE PROCESS:

Any request to add assets, systems, or test types to the engagement

must be submitted in writing and acknowledged by both parties.

Scope additions will be evaluated for timeline and cost impact.

Work on additional scope items will not begin until a signed

change order is received.

Verbal approvals for scope changes are not sufficient.

The Emergency Contact Clause

EMERGENCY CONTACT:

If testing causes unintended service disruption, Consultant will

immediately cease testing and contact:

Primary: [Name], [Phone] - available 24/7 during engagement

Secondary: [Name], [Phone]

Client agrees to maintain 24/7 availability of at least one

contact during active testing windows.

The "What We're Not Guaranteeing" Clause

This one saves you from the client who reads your report and says "but I thought this meant we're secure now."

LIMITATIONS:

A penetration test is a point-in-time assessment. It reflects the

security posture of the systems tested on the dates specified.

Completion of this engagement does not constitute certification

that the systems are secure or free from vulnerabilities.

New vulnerabilities may emerge after the engagement concludes.

The assessment covers only the assets listed in scope. No

representation is made regarding the security of out-of-scope systems.

🙄 4. The Most Common Unrealistic Expectations

Let me go through the ones that actually come up, and what I say.

"Can you guarantee you'll find all the vulnerabilities?"

No. Nobody can. A pentest is not an exhaustive audit. You're simulating an attacker with limited time, not running automated scans indefinitely.

What I say: "We can guarantee we'll apply our best efforts against the agreed scope within the timeline. What we can't guarantee is completeness; no time-boxed engagement can. What we can do is prioritize high-impact areas and be transparent about what we covered and what we didn't."

"We need the full report in 24 hours."

A two-week engagement produces a lot of findings. Writing a quality report, one that explains vulnerabilities clearly and includes reproduction steps, risk ratings, and remediation guidance, takes time. Rushing it produces garbage that doesn't help anyone.

What I say: "A 24-hour turnaround for a full technical report isn't feasible without compromising quality. I can give you a verbal debrief and executive summary in 24 hours, with the full report following within [X] business days. Would that work?"

"We want a pentest done in 3 days."

For one small app, maybe. For anything complex, this is a red flag. A rushed pentest gives you a rushed report. The client often doesn't understand they're not getting value from this.

What I say: "For the scope you've described, three days lets us cover the most obvious attack surfaces, but it isn't enough for a thorough assessment. I'd rather tell you this upfront than deliver a report that misses significant findings. Can we discuss what's driving the timeline?"

"We already did a pentest last year, just do a quick check."

A "quick check" is not a pentest. It's a scan. And scanning is not testing.

What I say: "I understand you want to validate the remediation work since last year's assessment. We can structure this as a focused retest on previously found vulnerabilities plus a surface-level check on key areas — that's different from a full pentest and I can scope it accordingly. Does that match what you need?"

"If we're breached after this, does your report cover us legally?"

This one makes lawyers nervous. Don't make promises here.

What I say: "The report documents what was tested, what was found, and what was recommended. Whether that satisfies any specific compliance or legal requirement is something your legal team should evaluate. I'd strongly recommend looping them in."

🚫 5. How to Say No Without Losing the Client

Most pentesters struggle here because "no" feels like losing the client. It doesn't have to.

The framework is simple: never say no to the request; say no to doing it for free or outside the agreed process.

CLIENT: "Can you also test our mobile app?"

WRONG RESPONSE:

"That's out of scope, we can't do that."

→ Sounds rigid. Feels like you don't want to help.

RIGHT RESPONSE:

"Absolutely, we can include the mobile app. Let me scope that

out - looking at iOS and Android, that's roughly [X] additional

days. I'll put together a change order and we can get started

on it as soon as that's signed. Does that timeline work for you?"

See what happened there? You said yes to helping them. You said no to doing it for free and outside the agreed process.

The key phrases:

"We can absolutely do that - let me scope it out."

"Happy to include that - I'll send over a change order."

"That's outside what we agreed, but we can add it. Give me

a day to assess the timeline impact."

"I want to make sure we do that properly, so let me build

that into a formal addition to the engagement."

What you're communicating: I want to help you. Here's the right way to do it.
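The "let me scope it out" step is mostly arithmetic, so it can help to have the numbers ready before you write the change order. Below is a minimal sketch of that calculation. Everything in it is an assumption for illustration: the function names `estimate_change_order` and `add_business_days`, the 8-hour working day, the weekend-only calendar (no public holidays), and the example rate are not from any real contract.

```python
from dataclasses import dataclass
from datetime import date, timedelta
import math


@dataclass
class ChangeOrderEstimate:
    extra_hours: float
    extra_days: int
    extra_cost: float
    new_delivery: date


def add_business_days(start: date, days: int) -> date:
    """Advance `start` by `days` working days, skipping Saturdays and Sundays."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            remaining -= 1
    return current


def estimate_change_order(extra_hours: float, hourly_rate: float,
                          original_delivery: date,
                          hours_per_day: float = 8.0) -> ChangeOrderEstimate:
    """Turn an hours estimate into the three numbers a change order needs."""
    # Round partial days up: half a day of work still pushes delivery by a day.
    extra_days = math.ceil(extra_hours / hours_per_day)
    return ChangeOrderEstimate(
        extra_hours=extra_hours,
        extra_days=extra_days,
        extra_cost=round(extra_hours * hourly_rate, 2),
        new_delivery=add_business_days(original_delivery, extra_days),
    )


# Hypothetical example: 40 extra hours at 150/hour, report originally due
# on Friday 2024-03-15.
estimate = estimate_change_order(40, 150.0, date(2024, 3, 15))
print(estimate.extra_days, estimate.extra_cost, estimate.new_delivery)
# → 5 6000.0 2024-03-22
```

This assumes the extra work is done after the original work rather than alongside it, so treat the new delivery date as a worst case if you can run the two in parallel.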

🔄 6. Mid-Engagement Scope Changes — The Right Process

When a scope change request comes in mid-engagement, here's the process I follow:

STEP 1: ACKNOWLEDGE, DON'T COMMIT

"Got it - let me review what that would involve and get back

to you by end of day."

Never say yes or no immediately.

STEP 2: ASSESS IMPACT

├── Time: How many additional hours does this add?

├── Risk: Does this create new legal/authorization issues?

├── Dependencies: Does this change require additional access or credentials?

└── Timeline: Can we fit this in, and what gets deprioritized if we do?

STEP 3: DOCUMENT THE REQUEST

Email confirmation: "Following up on our call - you've requested

we add [X] to the engagement scope. Here's my assessment..."

STEP 4: PRESENT OPTIONS

Option A: Add to current engagement (here's the cost/time impact)

Option B: Include in a follow-up engagement

Option C: Note it as out-of-scope and recommend a future assessment

Let the client choose.

STEP 5: GET WRITTEN APPROVAL BEFORE PROCEEDING

Change order, email confirmation, anything in writing.

"I'll get started once I have your written confirmation."

The mid-engagement discovery scenario:

You're testing an app and you find a connected internal API that looks juicy. Do you test it?

This is where people get into trouble. The right answer is: stop, document, ask.

# Your engagement notes should have an entry like this:

## Out-of-Scope Discovery - Day 4

During testing of <https://app.target.com>, identified an

internal API endpoint: <https://internal-api.target.com>

This endpoint appears to accept unauthenticated requests

and is NOT in the agreed scope.

Action taken: Ceased testing of this endpoint.

Client notified: [Date/Time] via email to [Contact]

Awaiting written approval before proceeding.

This protects you legally and professionally. You found something valuable, you told them, you stopped. If they want you to test it, great: that's a change order. If they don't, you've still done your job correctly.
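One way to make "stop, document, ask" harder to skip is to check every target against the signed scope before any tool touches it. The sketch below is an illustration, not a standard tool: the `is_in_scope` helper and the two allowlists are hypothetical, and they reuse the example host and IP range from earlier in this post. Anything not explicitly listed is treated as out of scope.

```python
import ipaddress
from urllib.parse import urlsplit

# Hypothetical allowlists, copied by hand from the signed SOW.
IN_SCOPE_HOSTS = {"app.target.com"}
IN_SCOPE_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]


def is_in_scope(target: str) -> bool:
    """Return True only if the target's host is explicitly listed in scope."""
    # Accept bare hosts and IPs as well as full URLs.
    host = urlsplit(target if "://" in target else f"//{target}").hostname
    if host is None:
        return False
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal: require an exact hostname match, no wildcards.
        return host in IN_SCOPE_HOSTS
    return any(addr in net for net in IN_SCOPE_NETWORKS)


for target in ("https://app.target.com/login",
               "https://internal-api.target.com",
               "203.0.113.7"):
    status = "in scope" if is_in_scope(target) else "OUT OF SCOPE: stop and ask"
    print(f"{target}: {status}")
```

Exact hostname matching is deliberate here. A wildcard like `*.target.com` would have quietly let the internal API through, which is exactly the case the written approval is meant to catch.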

📊 7. Reporting: Where Expectations Crash Hardest

The report is where most client disappointment lives. Why? Because they expected a simple "you passed" or "you failed" and instead got a 40-page technical document they can't read.

Common reporting mismatches:

THEY EXPECTED → YOU DELIVERED

"Everything is secure" → A list of 23 findings

"Just the critical ones" → A detailed technical report

"One page summary" → An 80-page document

"Proof we're compliant" → Findings that may affect their compliance

"Something for the board" → Something only your team can read

The fix is a two-document approach that I use on every engagement:

DOCUMENT 1: EXECUTIVE SUMMARY (2-4 pages)

├── Overall risk rating (single, clear verdict)

├── Top 3-5 most critical findings in plain English

├── What was tested (brief, non-technical)

├── Key recommendations prioritized by business impact

└── No CVE numbers, no code, no technical jargon

→ Written for the CISO, board, and non-technical stakeholders

DOCUMENT 2: TECHNICAL REPORT (full length)

├── Methodology and scope

├── All findings with full reproduction steps

├── CVSS scores, CVE references

├── Screenshots and proof-of-concept

├── Detailed remediation guidance with code examples

└── Appendix: Raw tool output, scope confirmation

→ Written for the security/dev team who will fix things

Set this expectation in the kickoff: "You'll receive two documents: a short executive summary and a full technical report. The executive summary is for leadership, the technical report is for your team."

Nobody complains about getting two documents. They complain about getting the wrong document for their audience.

Finding Severity — Be Consistent

One of the fastest ways to lose credibility with a client is inconsistent severity ratings. Here's the framework I use:

CRITICAL (CVSS 9.0-10.0)

└── Exploitation leads to full system/environment compromise

with no authentication required.

Example: Unauthenticated RCE on external-facing server

HIGH (CVSS 7.0-8.9)

└── Significant impact but requires some conditions or auth.

Example: SQLi with authentication, privilege escalation

MEDIUM (CVSS 4.0-6.9)

└── Limited direct impact or requires significant user interaction.

Example: Stored XSS, CSRF on low-privilege function

LOW (CVSS 0.1-3.9)

└── Minimal impact, defense-in-depth issue.

Example: Missing security header, verbose error messages

INFORMATIONAL

└── Not a vulnerability, but a configuration or security hygiene concern.

Example: Unnecessary open ports, outdated (but unaffected) software

And always explain what the severity MEANS in business terms, not just technical terms:

WEAK: "SQL Injection - CVSS 9.8 - Critical"

STRONG: "SQL Injection - Critical

An attacker without any account credentials can extract the

entire customer database through the login form. This includes

names, emails, hashed passwords, and order history for all

[X] customers. Exploitation requires no specialized tools and

takes approximately 5 minutes. If this is discovered externally,

it would likely trigger a GDPR breach notification requirement."

The second version tells the CISO exactly what to tell the board. That's your job.
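Consistency is easier when the score-to-label step isn't done by hand. Here is a minimal sketch of the severity bands from the framework above as a lookup function. The name `severity_from_cvss` is hypothetical, and mapping a score of exactly 0.0 to INFORMATIONAL is my assumption, since the framework gives that label no numeric range.

```python
def severity_from_cvss(score: float) -> str:
    """Map a CVSS base score to the severity labels used in this post."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS base score must be between 0.0 and 10.0, got {score}")
    if score == 0.0:
        # Assumption: a zero score is reported as a hygiene note, not a finding.
        return "INFORMATIONAL"
    if score >= 9.0:
        return "CRITICAL"
    if score >= 7.0:
        return "HIGH"
    if score >= 4.0:
        return "MEDIUM"
    return "LOW"


for score in (9.8, 7.5, 5.4, 2.1, 0.0):
    print(score, severity_from_cvss(score))
```

The function only keeps the label consistent with the number. The business-impact paragraph still has to be written by a person who understands the client.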

🚩 8. Red Flags to Spot Before You Sign

Some engagements are trouble from the first call. Learn to recognize these:

🚩 No written authorization from the asset owner

(Testing on behalf of a client who doesn't own the systems)

🚩 "We just need a pentest certificate, doesn't have to be thorough"

(They want paper, not security - your report can still cause problems)

🚩 Extreme time pressure with no flexibility

("We need this done this week, no exceptions")

🚩 Unwillingness to sign an SOW

("Just start work, we'll sort the paperwork later")

🚩 Scope that keeps expanding in pre-sales

(If it's happening before you even signed, imagine during)

🚩 "Don't report the really bad stuff, it'll scare the board"

(You're being asked to falsify findings. Walk away.)

🚩 Pushing back hard on emergency contact requirements

("We don't need that, just be careful")

🚩 Multiple competing stakeholders with conflicting requirements

(You'll spend more time in meetings than testing)

Some of these are workable with a conversation. Some are not. The falsified findings one is an immediate no. That's the kind of thing that ends careers and starts lawsuits.

📋 9. Templates and Scripts You Can Actually Use

Scope Change Request Email Template

Subject: Scope Addition Request - [Engagement Name]

Hi [Name],

Following our conversation today, you've requested we add

[specific system/test type] to the current engagement.

Here's my assessment:

Estimated additional time: [X] hours / [X] days

Timeline impact: Delivery moved from [original date] to [new date]

Additional cost: $[amount] (based on [rate])

To proceed, I'll need:

1. Written approval of the above (reply to this email works)

2. [Any additional access/credentials needed]

Once I have that, I can begin [specific work] on [date].

Let me know if you have any questions.

[Your name]

Emergency Stop Notification Template

Subject: URGENT - Testing Paused - [System Name] - [Date/Time]

[Contact name],

Testing has been paused as of [time] following unexpected

behavior on [system/service].

What we observed: [brief, factual description]

Systems potentially affected: [list]

Action taken: [e.g., "All testing stopped. No further requests sent."]

Please confirm current service status and advise whether

to resume testing or stand down.

Available on [phone number] immediately.

[Your name]

Out-of-Scope Discovery Notification

Subject: Out-of-Scope Discovery - [Engagement Name]

Hi [Name],

During testing today, we identified [brief description]

at [URL/IP] which is not listed in our agreed scope.

We have ceased testing this asset pending your direction.

Preliminary observation: [What you noticed - be factual,

not alarmist, since you haven't actually tested it]

Options:

A) Add to current engagement (I'll scope the time/cost impact)

B) Include in a follow-up engagement

C) No action - we'll note it as an observation in the report

Please advise. We won't touch this asset until we hear from you.

[Your name]

🏁 10. Final Thoughts

- The technical skills of pentesting take years to develop.

- The professional skills (scope management, expectation setting, difficult conversations) can be learned in months if you're intentional about it.

- Most of the problems covered in this post come from the same root cause: things that were unclear at the start become problems at the end. The fix is always more specificity, more documentation, and more proactive communication earlier in the process.