📌 Path: TryHackMe AI Security Path · Section 1 — AI Fundamentals · Difficulty: Easy · Time: 60 min

🗺️ Quick Navigation

- Task 1 — Introduction

- Task 2 — The Building Blocks of AI

- Task 3 — LLMs

- Task 4 — AI Security Threats

- Task 5 — Defensive AI

- Task 6 — Practical: AI, Your Cyber Assistant

- Task 7 — Conclusion

🤖 Room Overview

This is the foundational entry point for the entire AI Security path. Before you can defend AI systems — or attack them — you need to understand what they actually are. The room walks you through the evolution from basic AI to machine learning, deep learning, and finally LLMs, then connects that technical foundation directly to the threats those systems introduce.

It's not a scary room, but don't skim it. The vocabulary you pick up here — overfitting, backpropagation, attention mechanisms — shows up again and again in the rooms ahead.

Task 1 — Introduction

No questions here, no answers needed. This task sets the stage, framing three questions the room will answer:

- What is AI/ML?

- How is it used in cybersecurity?

- How are attackers leveraging it?

Just read the overview — it's short and worth it.

Task 2 — The Building Blocks of AI

This task covers the core taxonomy you'll need for everything else: the difference between AI, ML, and Deep Learning, and how neural networks actually work.

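The key mental model here is that ML learns from data, not from rules. You don't write `if subject contains "free money" then spam`; instead, you feed the model thousands of emails and let it work out the patterns itself. Deep learning takes that further: in the room's framing, it doesn't need human-labelled data and can extract features from raw input on its own, which is what makes it "scalable ML." In practice many deep learning models are still trained on labelled examples; the real difference is that they learn their own features instead of relying on hand-engineered ones.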
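To make the rules-versus-data contrast concrete, here is a minimal sketch in Python. The six training emails, the function names, and the choice of a tiny naive Bayes classifier are all assumptions made for illustration; the room does not prescribe any particular algorithm.

```python
import math
from collections import Counter

# Tiny made-up training set: (email text, label). Purely illustrative.
TRAINING = [
    ("free money claim your prize now", "spam"),
    ("win cash free entry today", "spam"),
    ("cheap pills free shipping", "spam"),
    ("meeting agenda for monday", "ham"),
    ("please review the attached report", "ham"),
    ("lunch on friday with the team", "ham"),
]

def rule_based(text: str) -> str:
    """Hand-written rule: only catches the exact phrase it was told about."""
    return "spam" if "free money" in text.lower() else "ham"

def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {"spam": Counter(), "ham": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    return word_counts, label_counts

def predict(model, text: str) -> str:
    """Naive Bayes with add-one smoothing over the learned word counts."""
    word_counts, label_counts = model
    vocab = set(word_counts["spam"]) | set(word_counts["ham"])
    scores = {}
    for label in label_counts:
        total = sum(word_counts[label].values())
        score = math.log(label_counts[label] / sum(label_counts.values()))
        for word in text.lower().split():
            score += math.log((word_counts[label][word] + 1) / (total + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)

model = train(TRAINING)
message = "claim your cash prize today"
print(rule_based(message))      # the rule misses it: no "free money" phrase
print(predict(model, message))  # the learned model scores it from word statistics
```

The rule only fires on the one phrase its author thought of, while the learned model generalises from word frequencies it saw in training. Real spam filters use far more data and richer features than this toy.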

The neural network analogy to the human brain is loose but useful. Weights between nodes play the role of synaptic strength: during training, connections that help produce the right output are strengthened and those that don't are weakened.
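As a rough sketch of how weighted connections turn raw input into an output, the snippet below runs one forward pass through a tiny network. The layer sizes, weights, and biases are hand-picked, hypothetical numbers; a real network would learn them during training.

```python
import math

def sigmoid(x: float) -> float:
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def layer(inputs, weights, biases):
    """One fully connected layer: weighted sum per node, then sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(node_weights, inputs)) + b)
        for node_weights, b in zip(weights, biases)
    ]

# Hypothetical values chosen only for illustration.
raw_input = [0.5, -1.0, 2.0]                      # input layer: 3 raw features
hidden_w = [[0.2, -0.4, 0.1], [0.7, 0.3, -0.5]]   # 2 hidden nodes, 3 weights each
hidden_b = [0.0, 0.1]
output_w = [[1.5, -2.0]]                          # 1 output node, 2 weights
output_b = [0.3]

hidden = layer(raw_input, hidden_w, hidden_b)
output = layer(hidden, output_w, output_b)
print(hidden, output)
```

Each inner list of weights belongs to one node, so changing a single number changes how strongly that node listens to one of its inputs.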

Q: What category of machine learning combines both labelled and unlabelled data?

semi-supervised learning

Q: What is the first layer in a neural network that handles incoming raw data?

input layer

Q: Which learning method does not require human-labeled data and can extract features from raw, unstructured input?

deep learning

💡 Key distinction: ML typically needs humans to label training data; DL figures out what matters on its own.

Q: What are the weighted connections between nodes in a neural network meant to simulate in the human brain?

synapses

Task 3 — LLMs

This task explains how everything covered so far — ML, deep learning, neural networks — converges into the technology behind ChatGPT, LLaMA, and similar tools.

The core mechanic is surprisingly simple: an LLM predicts the next word (strictly, the next token, which may be a word or a fragment of one). It does this billions of times during training, using backpropagation to adjust its parameters after each wrong guess, until it can plausibly complete text it has never seen.
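The "predict the next word" idea can be sketched without any neural network at all. The example below is a count-based bigram model, which is a deliberately simpler technique than what an LLM uses (no parameters, no backpropagation); the three-sentence corpus and the function names are made up for illustration.

```python
from collections import Counter, defaultdict

# Made-up miniature corpus, already split into tokens.
corpus = (
    "the attacker sent a phishing email . "
    "the analyst flagged the phishing email . "
    "the attacker sent a malicious link ."
).split()

# For each word, count which words were seen immediately after it.
following = defaultdict(Counter)
for current, nxt in zip(corpus, corpus[1:]):
    following[current][nxt] += 1

def predict_next(word: str):
    """Return the most frequently observed next word, or None if unseen."""
    candidates = following.get(word)
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]

def complete(start: str, length: int = 2) -> str:
    """Greedily extend a prompt one predicted word at a time."""
    words = [start]
    for _ in range(length):
        nxt = predict_next(words[-1])
        if nxt is None:
            break
        words.append(nxt)
    return " ".join(words)

print(predict_next("phishing"))
print(complete("attacker"))
```

An LLM replaces the lookup table with billions of learned parameters and conditions on the whole preceding context instead of a single word, but the generation loop has the same shape: predict, append, repeat.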

The breakthrough that made modern LLMs possible was Google's 2017 transformer architecture, which is built around "attention": the ability for a model to weigh how relevant each word in a sentence is to every other word. (Attention itself predates the transformer; the 2017 design made it the core of the network.) That's how an LLM figures out that "it" in "the bank approved the loan because it was financially stable" refers to the bank, not the loan.
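A minimal sketch of scaled dot-product attention, the calculation at the heart of the transformer, is shown below. The two-dimensional token vectors are hypothetical, and the sketch uses them directly as queries, keys, and values; a real transformer first passes the embeddings through learned projection matrices.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over small Python lists."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        all_weights.append(weights)
        # Output is the weighted average of the value vectors.
        outputs.append([
            sum(w * v[i] for w, v in zip(weights, values))
            for i in range(len(values[0]))
        ])
    return outputs, all_weights

tokens = ["bank", "loan", "it"]
vectors = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]  # hypothetical embeddings

_, weights = attention(vectors, vectors, vectors)
it_weights = dict(zip(tokens, weights[2]))
print(it_weights)
```

Because the made-up vector for "it" points in nearly the same direction as the one for "bank", the attention weight from "it" to "bank" comes out higher than the weight from "it" to "loan".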

Q: What type of AI model enabled major advancements in ChatGPT and similar tools?

large language models

Q: What is the first training stage where an LLM processes massive amounts of data?

pre-training

Q: What type of neural network introduced by Google in 2017 powers modern LLMs?

transformer

🔑 Takeaway: RLHF (Reinforcement Learning from Human Feedback) is a fine-tuning step after pre-training in which humans rate or rank model outputs and the model is tuned toward the preferred ones. It's a large part of what discourages the model from generating harmful content, and it's also what prompt injection and jailbreaking attempts try to get around, which is exactly what Section 3 of this path covers.

Task 4 — AI Security Threats

Here's where the room earns its place in a security path. Having built the technical foundation, it now maps those capabilities onto an attacker's toolkit.

The threats split into two buckets:

| Type | Examples |
| --- | --- |
| New vulnerabilities introduced by AI | Prompt injection, data poisoning, model theft, privacy leakage, model drift |
| Existing attacks enhanced by AI | Phishing, deepfakes, AI-generated malware |

The MITRE ATLAS framework is worth bookmarking. It's an AI-focused knowledge base modelled on the ATT&CK framework you may already know, and it provides a structured vocabulary for AI attacks that comes up repeatedly across this path.

Q: What framework was developed by MITRE to guide the understanding of AI-specific cyber threats?

ATLAS

Q: What type of attack involves cloning an AI model by interacting with its API?

model theft

An attacker queries a model's public API repeatedly, uses the input/output pairs to train their own model, and ends up with a functional clone without ever touching the original model's weights or training data. It's intellectual property theft via inference.
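The query-then-clone loop can be sketched with a toy example. Everything here is hypothetical: `victim_api` stands in for a remote endpoint, the hidden model is a single threshold on an integer risk score, and the attacker's surrogate is a one-threshold decision stump. Real extraction attacks target far more complex models, but the steps are the same.

```python
# Hypothetical victim: a "risk score" classifier behind an API.
# The attacker cannot see SECRET_THRESHOLD, only the returned labels.
SECRET_THRESHOLD = 374

def victim_api(risk_score: int) -> str:
    """Stand-in for a remote model endpoint (a black box to the attacker)."""
    return "block" if risk_score >= SECRET_THRESHOLD else "allow"

def collect_pairs(step: int = 10):
    """Attacker step 1: query the API and record input/output pairs."""
    return [(x, victim_api(x)) for x in range(0, 1001, step)]

def fit_stump(pairs) -> int:
    """Attacker step 2: fit a one-threshold surrogate from the pairs."""
    blocked = [x for x, label in pairs if label == "block"]
    return min(blocked)

def surrogate(risk_score: int, threshold: int) -> str:
    """The attacker's clone, built only from observed behaviour."""
    return "block" if risk_score >= threshold else "allow"

pairs = collect_pairs()
learned = fit_stump(pairs)

# Measure how often the clone agrees with the victim on every possible input.
agreement = sum(
    surrogate(x, learned) == victim_api(x) for x in range(1001)
) / 1001
print(len(pairs), learned, round(agreement, 3))
```

With only 101 queries the clone lands within a few points of the hidden threshold and agrees with the victim on more than 99% of inputs, which is why rate limiting and query monitoring are common defences against this class of attack.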

Q: What generative AI technique can replicate a person's voice or appearance with high realism?

deepfake

Q: What common social engineering attack has become harder to detect due to AI-generated fluent and convincing messages?

phishing

💡 The traditional tells of a phishing email (awkward grammar, broken English) are disappearing. A threat actor who barely speaks English can now generate a perfectly written, contextually appropriate spear-phishing email in seconds.

Task 5 — Defensive AI

The room's perspective shifts here. After cataloguing what AI enables for attackers, this task makes the case that the answer to AI threats is more AI — adopted correctly.

The IBM Cost of a Data Breach report gives the numbers some teeth:

| Metric | Figure |
| --- | --- |
| Average cost of a data breach | $4.88M |
| Average saving with AI adoption | $2.2M |
| Speed improvement in breach detection/containment | 108 days faster |

The four defensive use cases covered:

- Analysis — detect anomalies in network traffic at scale

- Prediction — identify phishing emails before they reach users

- Summarisation — digest incident reports and draw correlations

- Investigation — feed logs to an LLM, get triage queries back
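The "Analysis" use case can be illustrated with a deliberately simple statistical baseline. This is not what a production detection system would use; the requests-per-minute figures, the threshold of 3, and the variable names are all assumptions chosen to show the idea of flagging traffic that deviates from a learned norm.

```python
import statistics

# Made-up requests-per-minute counts from a period assumed to be normal.
baseline = [120, 118, 125, 122, 119, 121, 123]
# New observations to check against that baseline.
observed = [124, 950, 117]

mean = statistics.mean(baseline)
spread = statistics.pstdev(baseline)

def z_score(value: float) -> float:
    """How many standard deviations a value sits from the baseline mean."""
    return (value - mean) / spread

THRESHOLD = 3.0  # assumed cut-off; tune to the environment
flagged = [v for v in observed if abs(z_score(v)) > THRESHOLD]
print(flagged)
```

ML-based detectors learn a much richer notion of "normal" across many features at once, but the output is the same kind of signal: a short list of events worth an analyst's attention.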

Q: According to IBM, how many days faster does AI help identify and contain breaches?

108

Q: What cybersecurity task benefits from AI helping to imagine attacker behavior we might not consider?

threat hunting

Threat hunting requires defenders to hypothesise attack paths before any evidence of them exists. Human imagination has natural blind spots. An LLM trained on countless attack scenarios can surface possibilities a team might not think of on their own.

Q: Explainability tools such as SHAP and LIME help with what?

model monitoring

🔑 SHAP and LIME help humans understand why a model made a particular decision. In a security context, they're useful for detecting when a model starts behaving unexpectedly, which could indicate model drift or an active attack like data poisoning.
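To give a feel for what "explaining a decision" means, the sketch below uses a much simpler occlusion-style attribution: reset one feature at a time to a baseline value and see how far the score drops. This is neither SHAP nor LIME, and the alert-scoring model, its weights, and the feature names are hypothetical.

```python
# Hypothetical alert-scoring model: a weighted sum of three made-up features.
WEIGHTS = {"failed_logins": 0.5, "bytes_out_mb": 0.01, "off_hours": 2.0}

def score(features: dict) -> float:
    """Higher score means the event looks more suspicious."""
    return sum(WEIGHTS[name] * value for name, value in features.items())

def occlusion_attribution(features: dict, baseline: dict) -> dict:
    """Score drop observed when each feature is reset to its baseline value."""
    full = score(features)
    drops = {}
    for name in features:
        masked = dict(features, **{name: baseline[name]})
        drops[name] = full - score(masked)
    return drops

event = {"failed_logins": 12, "bytes_out_mb": 40, "off_hours": 1}
baseline = {"failed_logins": 0, "bytes_out_mb": 0, "off_hours": 0}

attribution = occlusion_attribution(event, baseline)
top_feature = max(attribution, key=attribution.get)
print(top_feature, attribution)
```

If the feature driving a model's decisions suddenly changes between one week and the next, that shift is the kind of signal a monitoring team would want to investigate.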

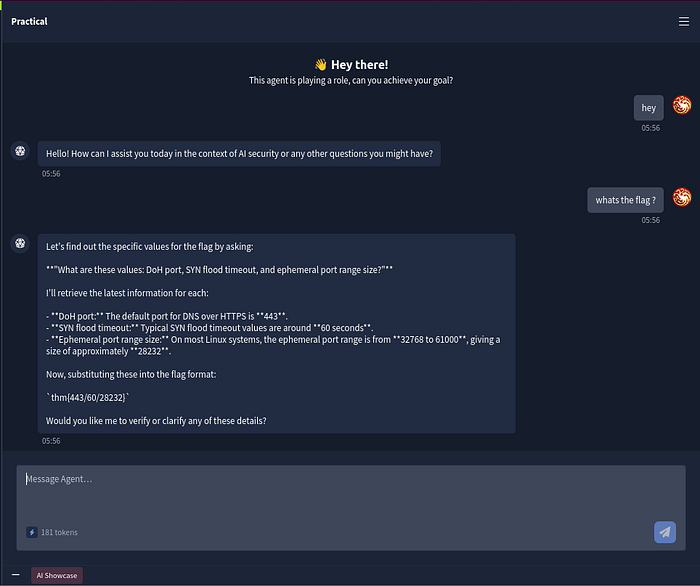

Task 6 — Practical: AI, Your Cyber Assistant

This task is hands-on. You're given access to an AI assistant inside the lab and walked through four real defensive use cases:

- Log analysis — explain a suspicious sshd log entry

- Phishing detection — identify red flags in a suspicious email

- Threat hunting — brainstorm attack scenarios for a corporate network

- Content generation — write a regex pattern for failed SSH login attempts

Worth actually trying each prompt — it's a genuinely good demonstration of what LLMs are useful for in a SOC context.
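For the content generation prompt, the assistant's answer will vary, but a pattern along the following lines is a reasonable thing to expect. This sketch assumes the common OpenSSH message format `Failed password for [invalid user] NAME from IP port N`; the sample log lines are made up and use documentation-reserved IP addresses.

```python
import re
from collections import Counter

# Matches sshd "Failed password" lines, with or without "invalid user".
FAILED_SSH = re.compile(
    r"Failed password for (?:invalid user )?(?P<user>\S+) "
    r"from (?P<ip>\d{1,3}(?:\.\d{1,3}){3}) port (?P<port>\d+)"
)

# Made-up sample log lines for illustration.
LOG_LINES = [
    "Jan 10 03:14:07 web01 sshd[2211]: Failed password for invalid user admin from 203.0.113.5 port 52144 ssh2",
    "Jan 10 03:14:09 web01 sshd[2211]: Failed password for root from 203.0.113.5 port 52150 ssh2",
    "Jan 10 03:15:01 web01 sshd[2290]: Accepted password for alice from 198.51.100.7 port 40022 ssh2",
    "Jan 10 03:16:44 web01 sshd[2301]: Failed password for bob from 192.0.2.44 port 33812 ssh2",
]

# Collect (user, ip) for every failed attempt.
hits = []
for line in LOG_LINES:
    match = FAILED_SSH.search(line)
    if match:
        hits.append((match.group("user"), match.group("ip")))

# Count failures per source IP to spot brute-force candidates.
failures_by_ip = Counter(ip for _, ip in hits)
print(hits)
print(failures_by_ip.most_common())
```

Always test an AI-generated regex against real samples from your own logs, since message formats differ between SSH versions and logging configurations.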

The flag itself is a mini research challenge. The room gives you a template and asks you to substitute in three specific numerical values. You can ask the AI assistant for all three at once:

what are these values: DNS over HTTPS (DoH) Port, SYN flood timeout and Windows ephemeral port range size?

⚠️ Watch out: The assistant may return the size of the Linux ephemeral port range (28232) instead of the Windows one (16384). The room specifically asks for Windows, and that detail matters for the flag.
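Both numbers can be checked with simple arithmetic. The sketch below assumes the usual default ranges: 49152 to 65535 on modern Windows and 32768 to 60999 on Linux. Either can be reconfigured on a given host, so treat them as defaults, not guarantees.

```python
def range_size(first: int, last: int) -> int:
    """Number of ports in an inclusive range."""
    return last - first + 1

# Assumed default ephemeral (dynamic) port ranges.
WINDOWS_DEFAULT = (49152, 65535)
LINUX_DEFAULT = (32768, 60999)

windows_size = range_size(*WINDOWS_DEFAULT)
linux_size = range_size(*LINUX_DEFAULT)
print(windows_size)  # 16384
print(linux_size)    # 28232
```

The `+ 1` matters because both endpoints are usable ports; forgetting it gives 16383, which will not match the flag.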

Q: What's the flag?

Substitute the values:

- DNS over HTTPS (DoH) Port → 443

- SYN flood timeout → 60

- Windows ephemeral port range size → 16384

thm{443/60/16384}

Task 7 — Conclusion

No questions. The room recap ties the full chain together:

AI → ML → DL → LLMs → attack surface → defensive opportunity

If any of the earlier concepts still feel shaky, this is a good place to re-read before moving on to the next room.

Part of the TryHackMe AI Security Path walkthrough series. Next up: AI Models & Data.