Think AI will throw you out of work? Experts say not so fast. Real-world examples and research show AI reshapes tasks rather than deleting entire jobs.

Have you ever pictured this nightmare? You walk into the office, and your boss taps you on the shoulder. "Thanks for everything, but AI is doing your job now. Don't come back," they say. In Hollywood, this sounds like a sci-fi plot, but it's exactly the fear stoked by some AI leaders today. Dario Amodei, CEO of AI startup Anthropic, bluntly warned Axios in May 2025 that artificial intelligence "could wipe out half of all entry-level white-collar jobs" within five years. He predicted U.S. unemployment might shoot to 10–20%, Covid-pandemic levels. Amodei, who just released a new AI model, says we have a duty to be honest about this coming "job apocalypse." "Most of them are unaware this is about to happen," he sighed about the average worker. In a 20,000-word essay titled The Adolescence of Technology, he warned that humanity is about to be handed "unimaginable power" from AI without mature systems to handle it. His list of doomsday scenarios includes runaway AI, bioterror weapons, tyrannical regimes, giant wealth gaps, and social chaos. It sounds grim, and the headlines have been terrifying.

But wait — is it really that simple? Before we panic, let's take a breath and look at history and facts. Is AI really about to throw us all out of work, or is this just Silicon Valley hype?

The Radiologist Who Didn't Vanish

Here's a reality check: remember Geoffrey Hinton, a pioneer of modern AI? Back in 2016, he famously said "stop training radiologists now," convinced that deep learning would soon outperform human radiologists at reading scans. At the time, radiology was considered a dream job (high pay, low hours), and Hinton's prediction made waves. In the years that followed, many students wrote off radiology as an AI death trap.

Yet by 2025, the opposite had happened. The Mayo Clinic's radiology staff has grown by 55% since 2016, and forecasts show the number of radiologists rising, not shrinking. Hospitals now employ hundreds of AI tools, but radiologists still make the calls. As one New York Times piece summarized, "AI is proving to be a powerful medical tool to increase efficiency and magnify human abilities, rather than take anyone's job." Hinton himself now says he was overzealous about timing; he sees a future where "interpretations [are] handled by a combo of physicians and AI," making doctors more efficient, not obsolete.

What happened? Reading medical images is just too complex and too human a task. A suspicious shadow on an X-ray means something very different for a 70-year-old non-smoker than for a 40-year-old heavy smoker. AI can flag the blob, but deciding if it needs a biopsy or just a six-month follow-up is a human call. Doctors also face a legal reality that machine vision doesn't: if an AI misses a tumor, the doctor is still on the hook. Malpractice law today actually incentivizes doctors to follow standard care regardless of what AI says. In a car crash, we can sue the manufacturer or demand a recall; in medicine, a "wrong diagnosis" usually lands on the physician. Until we rewrite medical liability, we're not going to hand life-or-death decisions over to an algorithm alone.

Meanwhile, self-driving cars, which Hinton and others ironically thought would lag, have progressed faster than many expected. Why did driverless tech flourish while AI doctors are still assistants? Part of the answer: driving obeys strict traffic rules and physics, so outcomes are close to binary (crash or no crash), and AI learns quickly from that instant feedback. But human bodies aren't rule-bound; tiny differences in genes or environment can change a diagnosis entirely. And when an autonomous car crashes, Toyota or Tesla can be blamed. But when an AI misreads a scan, who pays? Our legal and medical systems demand that a person take responsibility.

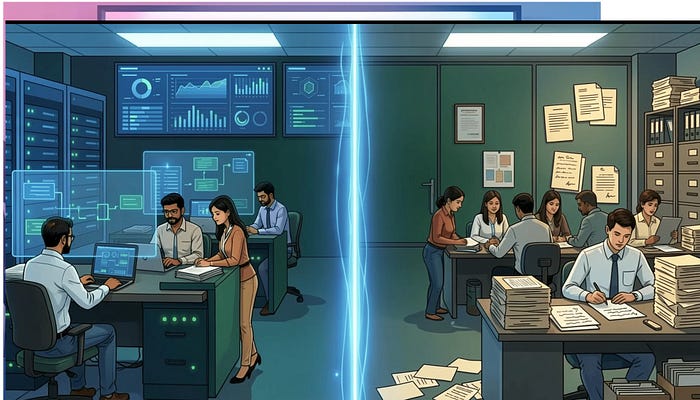

AI Is a Tool, Not a Terminator

Let's step back from horror-movie scenarios. In truth, every job is really a bundle of tasks, not one single block. AI excels at some of them — the repetitive, data-crunching ones — but not the rest. Think of a software engineer: AI can generate boilerplate code or fix simple bugs, but someone still needs to gather client requirements, design the system architecture, and debug tricky logic — tasks that require judgment and context. An AI might draft a legal memo, but only a lawyer can interpret nuanced clauses or argue in court. Similarly, in education, AI might grade multiple-choice tests, but spotting a struggling student's emotional red flags is a human job.

A recent economic analysis puts it powerfully: many jobs "may not be essential for future progress and may never be automated," because they aren't worth the enormous AI compute cost. AI will target the bottleneck work that drives growth — huge tasks like energy production, infrastructure, or science — and leave everything else to people. In this view, AGI "does not render human skills obsolete; it revalues them," says Yale economist Pascual Restrepo. In plain terms, your work isn't pointless just because AI can do parts of it. Human expertise becomes scarce; compute is the new limit.

For example, bank software can scan millions of transactions in seconds and flag fraud. But whether to close an account involves law, ethics, and common sense — a job for compliance officers. AI can summarize a textbook, but only a teacher can motivate a student or counsel a troubled teen. As one Fortune article notes, existing professional roles have simply reorganized: law firms still hire armies of junior attorneys even as AI handles billing and brief-writing. AI didn't put new lawyers out of work; it just reshuffled the workflow.

In most cases, when AI takes on a task, the human doesn't disappear — they move up a level. We begin overseeing the AI, making final calls, adding context and creativity that machines lack. As jobs change, the value shifts from raw production to decision-making. In economic terms, when making something becomes cheap, deciding what to make becomes precious. If AI makes writing code inexpensive, what's valuable is knowing what code to write — the quality control and big-picture thinking.

Winners, Losers, and the Big Picture

So far, real-world data support a reassuring trend: tech booms haven't crushed employment. A Morgan Stanley report finds AI's labor-market impact "has been modest so far, with little evidence of broad-based job losses". In fact, after past tech waves — from industrial machines to computers — displaced some roles, overall employment eventually expanded. The same appears true today. If you look at the legal field, for instance, AI has eased tasks, but firms are still eager to hire young lawyers. Restaurants and warehouses will use automation, but even when robots help make pizzas or pack boxes, humans still run the show and tend to new tasks.

That said, Restrepo and others warn that the biggest risk isn't straightforward unemployment; it's unchecked inequality and loss of control. In a fully automated economy, the lion's share of profit might go to those who own the AI and data centers. Restrepo's paper bluntly notes that eventually "most income will accrue to owners of computing resources." It echoes recent warnings that AI could concentrate wealth and power if left unchecked. In other words, the fear isn't that nothing will get done; it's that too much will get done by a few players.

Not everyone accepts the doom-and-gloom narrative, either. NVIDIA's CEO Jensen Huang has pushed back hard. He publicly "pretty much disagreed with almost everything" Amodei said about wiping out jobs. Huang worries the media's "90% end-of-the-world messaging" is "not helpful" because it scares off investments that could make AI safer and more useful. Other experts, like Gary Marcus, have also criticized the alarmist tone, asking: if you truly believed AI was existentially dangerous, why build it at all?

What does this mean for you? Probably not that your career is doomed, but that it will evolve. New technologies have always changed how we work. The internet was invented decades ago, and it has since given rise to entire occupations (ride-sharing drivers, social media managers, short-video creators) that nobody predicted in 2000. We humans are remarkably adaptable. Hard work, creativity, and willingness to learn, the skills that made someone valuable yesterday, will still matter tomorrow. In fact, the rising tide of AI might even lift skilled and motivated people higher: if you're tech-savvy or fast-learning, AI is a multiplier, not a mob boss. It lets you produce more, learn faster, and focus on higher-level challenges.

Bottom line: AI will change many jobs, especially routine tasks, but it isn't making all of us obsolete overnight. History and research suggest it's more about reshuffling work than wiping it out. Machines may help write code, analyze spreadsheets, or sort data, but they can't replace good judgment, empathy, or human responsibility. While debates about policy and profit-sharing continue, the story so far in the clinic, the courtroom, and the factory is that people stay in the loop and often gain a valuable assistant in AI rather than an immortal competitor.

As you sip your morning coffee and boot up for work, take heart: the AI future is likely to demand the very best of us, not leave us jobless. The conversation has shifted from "Will AI take our jobs?" to "How can AI help us do our jobs better?" We've faced technology shocks before. We'll adapt this time too, maybe even better than before.