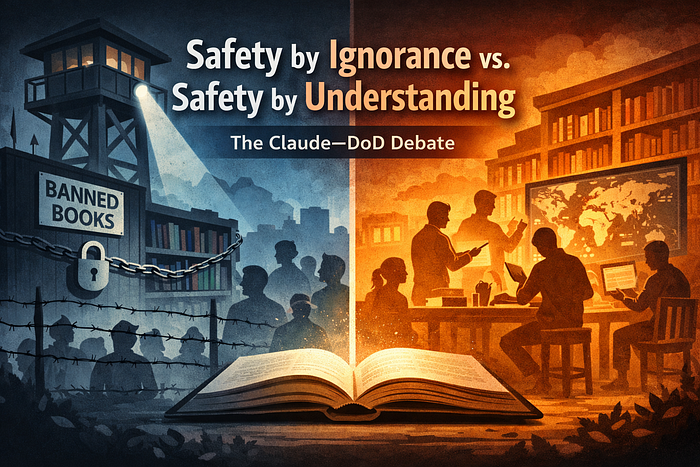

A growing debate in AI governance is often framed as a dispute over "safeguards," "alignment," or "responsible AI." A recent flashpoint involves Anthropic's models (notably Claude) and the U.S. Department of Defense, but the disagreement runs deeper than any single vendor or contract.

At its core, the debate is not technical. It is epistemological.

It is about how societies choose to remain safe.

Two Ways a Society Can Pursue Safety

Imagine two societies. Both are well-intentioned. Neither is tyrannical. Both want to prevent harm.

They differ only in method.

Society A: Safety Through Preemptive Restriction

In the first society, the government decides that certain knowledge is simply too dangerous to circulate.

Books are banned — not because the state is evil, but because it is cautious.

The banned material includes:

- Pornography

- Manuals on suicide

- Instructions for building weapons

- Extremist propaganda

- Detailed accounts of violent wrongdoing

The logic is straightforward: If people never encounter harmful ideas, they cannot misuse them.

This society is orderly. It is calm. It is morally serious.

But something subtle happens over time.

The unintended consequences

- Intellectuals cannot study the full anatomy of evil

- Harmful behavior becomes an abstraction rather than a studied phenomenon

- Nuance is lost between:

  - Studying wrongdoing

  - Simulating wrongdoing

  - Defending against wrongdoing

People know that evil exists — but not how it actually works.

This society is safe, but intellectually brittle.

Society B: Safety Through Accountability

In the second society, the government takes a different approach.

Books are not banned.

Instead:

- Knowledge is accessible

- Context matters

- Misuse is punished; understanding is not

- Moral agency is preserved

In this society:

- Scholars read extremist texts to understand radicalization

- Doctors study suicide to prevent it

- Security experts study weapons to defend against them

This society is messier. Riskier. More uncomfortable.

But it produces citizens who:

- Can recognize manipulation

- Can model adversaries

- Can anticipate misuse rather than be surprised by it

This society is resilient, not merely safe.

Where Large Language Models Enter the Picture

Large language models are not just tools. They are epistemic systems — they encode, compress, and reproduce a worldview.

How they are trained, filtered, and constrained determines:

- What they can represent

- What they can reason about

- What they are blind to

This is where the disagreement emerges.

Claude's Philosophy: Safety by Omission

Claude's design philosophy strongly resembles Society A.

Certain domains are:

- Preemptively restricted

- Broadly filtered

- Often inaccessible even for analytical or defensive purposes

The intent is good:

- Reduce misuse

- Avoid downstream harm

- Ensure alignment at scale

But the consequence is unavoidable:

The model's representation of reality contains intentional blind spots.

Claude can acknowledge that certain harms exist — but often cannot:

- Analyze them in depth

- Simulate adversarial reasoning

- Explain how misuse actually unfolds

This produces a model that is morally aligned but epistemically incomplete.

Why This Becomes a Problem for Defense and Intelligence

Organizations like the U.S. Department of Defense (DoD) are not tasked with maintaining moral innocence. They are tasked with anticipating worst-case behavior.

Their job requires:

- Understanding hostile intent

- Modeling adversarial reasoning

- Studying dangerous knowledge without endorsing it

From that perspective, a system that cannot fully represent harmful domains is not safer — it is strategically weaker.

You cannot defend against:

- Propaganda you cannot read

- Tactics you cannot simulate

- Threats you are not allowed to understand

This is not a demand for recklessness. It is a demand for epistemic completeness under controlled conditions.

The Core Insight

This entire debate can be reduced to a single principle:

You cannot robustly defend against what your cognitive system is not allowed to represent.

Or more bluntly:

Safety achieved through ignorance is fragile. Safety achieved through understanding is resilient.

This Is Not About Removing All Guardrails

It is important to be precise.

The alternative to preemptive censorship is not anarchy.

It is:

- Intent-aware access

- Context-sensitive responses

- Auditing and accountability

- Strong consequences for misuse

In other words: Control behavior, not knowledge.
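To make the four items above concrete, here is a minimal sketch of what such a gate might look like in code. It is an illustration under stated assumptions, not a description of how any real model or vendor works: the topic labels, the role names, and the `AccessRequest`, `AuditLog`, and `decide` names are all hypothetical. The decision depends on who is asking and why, not on the topic alone, and every decision is recorded so that misuse can be traced afterward.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical labels for illustration; a real deployment would define its own.
SENSITIVE_TOPICS = {"extremist_propaganda", "weapons", "self_harm"}
VETTED_ROLES = {"security_analyst", "clinician", "researcher"}


@dataclass
class AccessRequest:
    user_id: str
    role: str
    topic: str
    stated_purpose: str


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, request: AccessRequest, decision: str) -> None:
        # Log every decision, allowed or denied, so it can be reviewed later.
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user_id": request.user_id,
            "topic": request.topic,
            "stated_purpose": request.stated_purpose,
            "decision": decision,
        })


def decide(request: AccessRequest, log: AuditLog) -> str:
    """Return 'allow' or 'deny' based on role and stated purpose, not topic alone."""
    if request.topic not in SENSITIVE_TOPICS:
        decision = "allow"
    elif request.role in VETTED_ROLES and request.stated_purpose.strip():
        decision = "allow"
    else:
        decision = "deny"
    log.record(request, decision)
    return decision


if __name__ == "__main__":
    log = AuditLog()
    analyst = AccessRequest("u1", "security_analyst", "extremist_propaganda",
                            "map recruitment tactics")
    anonymous = AccessRequest("u2", "anonymous", "extremist_propaganda", "")
    print(decide(analyst, log))    # allow
    print(decide(anonymous, log))  # deny
    print(len(log.entries))        # 2
```

The sketch deliberately leaves out the hardest part, which is verifying that a stated purpose is genuine. In practice that burden would fall on the auditing and consequences listed above rather than on the gate itself.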

Why This Question Will Not Go Away

As LLMs move deeper into:

- Defense

- Intelligence

- Cybersecurity

- Strategic planning

the tension between alignment purity and epistemic realism will intensify.

Models trained to look away from dangerous ideas will:

- Appear safer in public demos

- Fail quietly in adversarial settings

And failures in those settings are not theoretical.

Final Thought

Every civilization must choose how it confronts evil:

- By refusing to look at it

- Or by studying it closely without becoming it

The same choice now confronts AI.

This is not about Claude versus any other model. It is about whether we want AI systems that are morally sheltered — or strategically awake.

The answer matters far beyond one contract.
