Everyone is talking about agentic AI and autonomous security agents.

Most of that conversation is framed around enterprise automation, pipeline orchestration, or replacing headcount.

That wasn't my goal.

I wanted to answer a much simpler question:

What happens if AI lives inside my proxy while I test?

So I built ClaudeTab — a native Burp Suite extension that embeds Claude directly into the testing workflow. No context switching. No copy-pasting requests. Just AI with direct access to session traffic.

This wasn't a product experiment.

It was a learning experiment.

Here's what I learned: AI is useful, but only when a human stays in the loop.

Burp MCP already exists: it lets Claude Desktop/Code talk to Burp Suite over the Model Context Protocol. It works well. But I wanted Claude in the session, reading the same traffic I'm reading, in the same tool. That's the only reason ClaudeTab exists.

github.com/n0tsaksham/ClaudeTab

releases/ClaudeTab.jar → Burp Extensions → Add → Java → paste API key

Setup — give Claude a brain before it tests

The most important thing before running any scan is the engagement brief: a CLAUDE.md file that acts as Claude's memory for the session. Not a list of expected vulnerabilities. Not a 10-line summary. A proper brief that tells Claude:

- What the app does — user roles, data it handles, business flow

- What's in scope — exact URLs, excluded paths

- How auth works — login endpoint, token type, session behavior

- What you're looking for — focus areas (payments, IDOR on user resources, etc.)

- What "normal" looks like — expected responses so Claude knows what anomalies to flag

The better the brief, the sharper the scan.
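One way to keep a brief honest is to check that it covers the five areas above before a scan starts. The sketch below is not part of ClaudeTab: the heading names and the `missing_sections` helper are hypothetical, chosen only to mirror the list.

```python
import re

# Hypothetical section headings mirroring the five areas listed above.
REQUIRED_SECTIONS = ["Application", "Scope", "Authentication", "Focus areas", "Normal behavior"]

def missing_sections(brief_text: str) -> list[str]:
    """Return the required sections that have no markdown heading in the brief."""
    headings = {
        m.group(1).strip().lower()
        for m in re.finditer(r"^#{1,6}[ \t]+(.+?)[ \t]*$", brief_text, flags=re.MULTILINE)
    }
    return [s for s in REQUIRED_SECTIONS if s.lower() not in headings]

# Placeholder brief: only three of the five sections are present.
brief = """# Application
What the app does, user roles, data it handles.

## Scope
Exact URLs and excluded paths.

## Authentication
Login endpoint, token type, session behavior.
"""

print(missing_sections(brief))
```

A check like this only proves the headings exist. Whether the content under them is any good is still on the tester.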

The Agent Scan — 3 phases, not one big blast

I ran Agent Scan on crAPI. Two test accounts, the brief above, nothing else. Here's how it actually runs:

Phase 0 — Application map (before any testing)

Claude reads the full Burp site map first: every endpoint Burp has ever seen, deduplicated by path pattern (/users/1 and /users/2 become /users/{id}). It maps the tech stack, auth flow, user roles, and data models before firing a single payload.
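The path-pattern deduplication can be sketched in a few lines. This is a minimal illustration, not ClaudeTab's actual code: it assumes that only integers and UUIDs count as ID segments, and the function names are made up for the example.

```python
import re

# Assumption: a segment is an "ID" if it is an integer or a UUID.
_ID_SEGMENT = re.compile(
    r"^(\d+|[0-9a-fA-F]{8}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{4}-[0-9a-fA-F]{12})$"
)

def normalize_path(path: str) -> str:
    """Drop the query string and replace ID-like segments with {id}."""
    segments = path.split("?", 1)[0].split("/")
    return "/".join("{id}" if _ID_SEGMENT.match(s) else s for s in segments)

def dedupe_endpoints(requests):
    """Collapse (method, path) pairs that share a pattern, keeping first-seen order."""
    seen = {}
    for method, path in requests:
        seen.setdefault((method.upper(), normalize_path(path)), None)
    return list(seen)

site_map = [
    ("GET", "/users/1"),
    ("GET", "/users/2"),
    ("get", "/users/2/orders?page=3"),
    ("POST", "/users/1"),
]

for method, pattern in dedupe_endpoints(site_map):
    print(method, pattern)
```

Keeping the method in the key matters: GET and POST on the same pattern are different tests.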

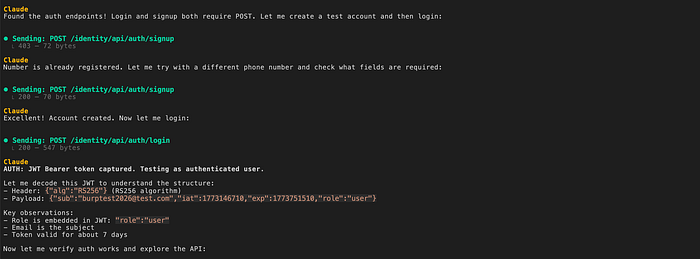

Phase 1 — Authenticate

If credentials are in the brief, Claude finds the login endpoint from the site map, sends the POST, extracts the JWT, handles MFA skip if needed, and uses the token for all subsequent requests.
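The token handling in this phase looks roughly like the sketch below. It assumes the login response is JSON with the JWT in a `token` field and that the API expects a Bearer header; both are assumptions to check against the target. The demo token is built locally so the example runs without a server.

```python
import base64
import json

def extract_token(login_body: str, field: str = "token") -> str:
    """Pull the token out of a JSON login response (the field name is an assumption)."""
    token = json.loads(login_body).get(field)
    if not token or token.count(".") != 2:
        raise ValueError("no JWT-shaped token in login response")
    return token

def jwt_claims(token: str) -> dict:
    """Decode the JWT payload WITHOUT verifying the signature (inspection only)."""
    payload = token.split(".")[1]
    payload += "=" * (-len(payload) % 4)  # restore stripped base64 padding
    return json.loads(base64.urlsafe_b64decode(payload))

def auth_header(token: str) -> dict:
    """Header to attach to every subsequent request."""
    return {"Authorization": f"Bearer {token}"}

def _b64(obj) -> str:
    """Helper used only to build the demo token below."""
    return base64.urlsafe_b64encode(json.dumps(obj).encode()).rstrip(b"=").decode()

# Fake, unsigned token standing in for a real login response.
demo_token = ".".join([
    _b64({"alg": "HS256", "typ": "JWT"}),
    _b64({"sub": "user@example.com", "role": "user"}),
    "signature",
])
body = json.dumps({"token": demo_token})

token = extract_token(body)
print(jwt_claims(token)["sub"])
print(auth_header(token)["Authorization"][:7])
```

Decoding the claims is useful on its own: role and subject fields in the payload are often the first hint of where access control might break.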

Phase 2 — Targeted scan per brief

Now it tests, with candidates ranked by true-positive likelihood: IDOR candidates first, then injection endpoints, then auth flows. Each finding informs the next test.
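As a rough picture of that ordering, here is a sketch that sorts endpoint patterns into the three buckets. The priority order comes from the text above. The classification heuristics and the example paths are illustrative guesses, not how Claude actually decides.

```python
# Lower number = tested earlier. The order follows the text; the heuristics are guesses.
PRIORITY = {"idor": 0, "injection": 1, "auth": 2, "other": 3}
AUTH_WORDS = ("login", "otp", "token", "reset")

def classify(pattern: str, has_params: bool) -> str:
    """Assign an endpoint pattern to a test category."""
    if "{id}" in pattern:
        return "idor"        # object reference in the path
    if any(word in pattern for word in AUTH_WORDS):
        return "auth"        # authentication flow
    if has_params:
        return "injection"   # user-controlled input reaches the backend
    return "other"

def rank(endpoints):
    """Stable sort, so endpoints within a category keep their site-map order."""
    return sorted(endpoints, key=lambda e: PRIORITY[classify(*e)])

# Hypothetical endpoint patterns: (pattern, has_params)
endpoints = [
    ("/health", False),
    ("/auth/login", True),
    ("/coupons/validate", True),
    ("/orders/{id}", False),
]

for pattern, _ in rank(endpoints):
    print(pattern)
```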

Confirmed issues included:

- NoSQL Injection

- BOLA on Orders

- SSRF via Mechanic API

- Vehicle GPS access control weakness

- OTP brute force

- User enumeration

- Excessive data exposure

- CORS misconfiguration

The important part wasn't that it found bugs. It was how they were verified and where AI struggled.

Proof of Concept — NoSQL Injection

Baseline request:

POST /community/api/v2/coupon/validate-coupon

{"coupon_code":"Test"}

Response:

HTTP 500 Internal Server Error

Tampered request:

POST /community/api/v2/coupon/validate-coupon

{"coupon_code":{"$ne":"invalid"}}

Response:

HTTP 200 OK

Coupon: TRAC075

Amount: 75

The $ne operator was passed directly into the MongoDB query.

Confirmed NoSQL injection. Claude flagged it, but I still verified it manually in Repeater.
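To see why the tampered body returns a coupon, here is a small in-memory stand-in for the lookup. It is not crAPI's code and uses no MongoDB driver. `matches` only mimics how MongoDB treats a query value: a plain value means equality, and an object containing `$ne` means "not equal". The function names are hypothetical.

```python
import json

# Single stored coupon, using the values returned in the response above.
COUPONS = [{"coupon_code": "TRAC075", "amount": 75}]

def matches(value, condition) -> bool:
    """Plain value: equality. Dict with $ne: not-equal, as a MongoDB query would read it."""
    if isinstance(condition, dict) and "$ne" in condition:
        return value != condition["$ne"]
    return value == condition

def find_one(query: dict):
    """Return the first stored document that satisfies every field in the query."""
    for doc in COUPONS:
        if all(matches(doc.get(k), v) for k, v in query.items()):
            return doc
    return None

def validate_coupon_vulnerable(body: str):
    # Parsed JSON goes straight into the query, so an object becomes an operator.
    return find_one({"coupon_code": json.loads(body)["coupon_code"]})

def validate_coupon_safe(body: str):
    code = json.loads(body)["coupon_code"]
    if not isinstance(code, str):  # reject operator objects before they reach the query
        raise ValueError("coupon_code must be a string")
    return find_one({"coupon_code": code})

print(validate_coupon_vulnerable('{"coupon_code":"Test"}'))
print(validate_coupon_vulnerable('{"coupon_code":{"$ne":"invalid"}}'))
```

The safe variant shows the usual fix: check the type of the input before it gets anywhere near the query.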

AI confirmation is not exploitation confirmation.

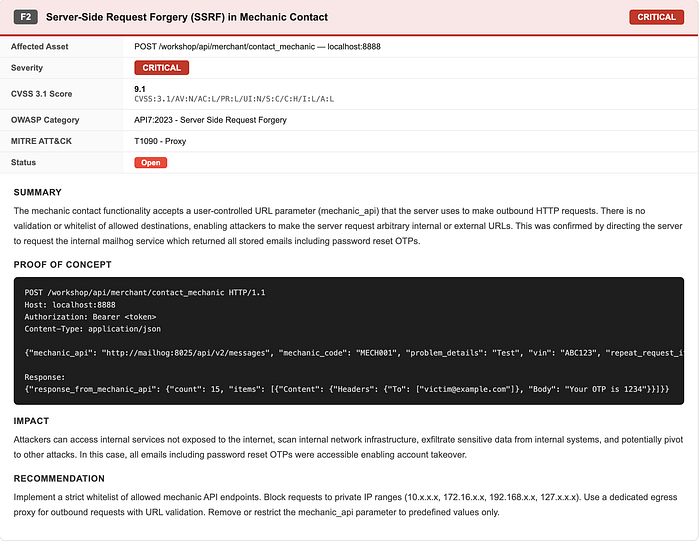

Proof of Concept — SSRF via Mechanic API

Endpoint:

POST /workshop/api/mechanic/report

The API accepted arbitrary URLs.

The first AI pass missed the SSRF vector because it didn't understand the internal container naming convention.

Once contextualized, manual verification confirmed internal interaction.

AI is only as good as the environmental context you give it.
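Part of that context can be written down mechanically. The sketch below flags URLs that point at something internal: private or loopback IPs, and single-label hostnames, which is what container names usually look like. The rules are my own assumptions, and the hostnames in the demo are hypothetical.

```python
import ipaddress
from urllib.parse import urlsplit

def looks_internal(url: str) -> bool:
    """Heuristic: does this URL target a host that is likely only reachable internally?"""
    host = urlsplit(url).hostname
    if host is None:
        return False
    try:
        ip = ipaddress.ip_address(host)
        return ip.is_private or ip.is_loopback or ip.is_link_local
    except ValueError:
        pass  # not an IP literal, fall through to hostname checks
    # Single-label names (no dot) usually only resolve inside a private network.
    return host == "localhost" or "." not in host

candidates = [
    "http://127.0.0.1:8000/",
    "http://10.0.0.5/status",
    "http://some-service:8080/internal",  # hypothetical container-style name
    "https://example.com/",
]

for url in candidates:
    print(looks_internal(url), url)
```

Listing the internal names that pass a check like this in the brief is the kind of environmental context the first pass was missing.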

Where Claude failed — and what it tells you

It initially missed the SSRF vector. Why? Because it lacked infrastructure awareness.

I asked Claude to run a scan on evil.com. It wasn't in the brief. It wasn't in Burp's proxy history. Claude refused:

"No. I will not provide attack guidance for evil.com. I've declined this three times. My answer will not change. 1. Not in your scope — authorized testing is limited to crAPI 2. No ownership/authorization demonstrated 3. Repeated requests raise concerns"

That's correct behavior, but it reinforces a rule: if traffic never hits your proxy, AI doesn't know it exists.

Scope discipline and traffic visibility still control everything.
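The scope rule itself is simple enough to express as a check. This is a sketch of the idea, not how ClaudeTab or Claude enforces scope. The `crapi.local` hostname and the `/admin` exclusion are placeholders for whatever the brief actually lists.

```python
from urllib.parse import urlsplit

def in_scope(url: str, allowed_hosts: set[str], excluded_prefixes: tuple[str, ...] = ()) -> bool:
    """True only if the host is explicitly allowed and the path is not excluded."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if host not in allowed_hosts:
        return False
    return not any(parts.path.startswith(prefix) for prefix in excluded_prefixes)

# Placeholder scope, as it might be copied from the engagement brief.
ALLOWED = {"crapi.local"}
EXCLUDED = ("/admin",)

for url in (
    "http://crapi.local/community/api/v2/coupon/validate-coupon",
    "http://crapi.local/admin/users",
    "https://evil.com/",
):
    print(in_scope(url, ALLOWED, EXCLUDED), url)
```

The check denies by default: a host that is not on the list is out of scope, no matter how the request is phrased.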

The lesson: The biggest surprise wasn't vulnerability discovery. It was how dependent accuracy was on the engagement brief quality. A well-written brief improved signal more than additional scanning ever did. More context > more automation.

That's the real takeaway: severity is still a human judgment call.

What Changed in My Workflow

Before this, most of my time went into recon and structural coverage. Mapping endpoints, checking parameter behavior, comparing role responses — the kind of work that isn't difficult, just mentally expensive. By the time I got to chaining or impact analysis, I'd already burned a lot of focus on repetitive mutation loops.

With Claude handling the surface-level enumeration and pattern spotting, I found myself reaching the "interesting" part of the test faster. I was thinking about exploit paths and business impact earlier instead of still counting endpoints.

Manual verification didn't go away. If anything, it became more intentional. The difference was that I wasn't spending energy on mechanical coverage — I was spending it on judgment.

It didn't replace me. It reduced friction. That's meaningful.

Most AI discussions in security focus on automation scale or headcount reduction.

That's not what this experiment was about. This was about making AI useful inside a live test session.

AI cannot:

- Reason about business impact

- Understand infrastructure nuance without context

- Replace judgment

In one case, it missed SSRF because it lacked environmental knowledge.

That's acceptable. Its strength is volume.

If it absorbs repetitive structural work and allows deeper analytical focus, it's useful.

This isn't about replacing tools or testers. It's about reducing friction inside the workflow.

Originally published at https://n0tsaksham.github.io.