/.git/config

/.env

/backup.zip

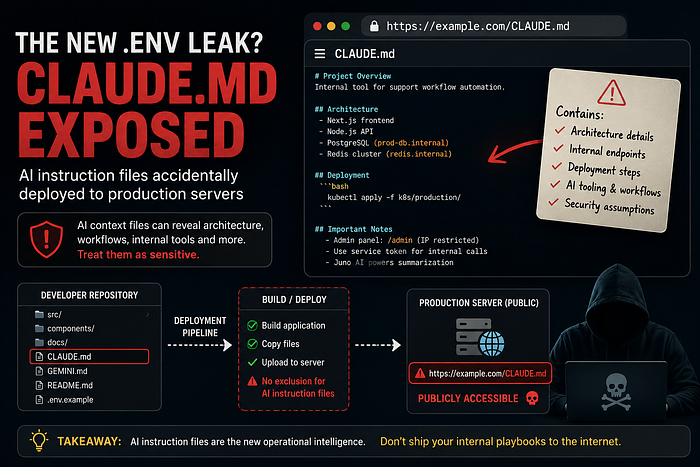

/phpinfo.php

But recently, while doing routine reconnaissance during bug hunting, I started noticing something unusual appearing across multiple targets:

/CLAUDE.md

/GEMINI.md

/AGENTS.md

At first, it looked harmless.

Just markdown files.

But after opening a few of them, I realized this is quietly becoming one of the most overlooked information disclosure patterns in the AI-assisted development era.

How I Noticed the Pattern

It started during a normal recon session.

I was checking for accidentally exposed files after a deployment and randomly tried:

/CLAUDE.md

Unexpectedly:

HTTP 200 OK

The file opened directly in the browser.
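A 200 on its own can be misleading: many single-page applications answer every unknown path with their index.html and a 200 status. Below is a minimal sketch of a stricter check using only Python's standard library. The function names, the 64 KiB read limit and the HTML-detection heuristic are illustrative assumptions, not an established tool.

```python
import urllib.error
import urllib.request


def looks_like_markdown_leak(status: int, content_type: str, body: str) -> bool:
    """Heuristic (an assumption, not a standard): a real hit is a non-empty, non-HTML 200."""
    if status != 200:
        return False
    # SPA catch-all routes often return index.html with a 200 for any path.
    if "text/html" in content_type.lower():
        return False
    head = body.lstrip()[:200].lower()
    if head.startswith(("<!doctype html", "<html")):
        return False
    return bool(body.strip())


def probe(base_url: str, path: str, timeout: float = 10.0) -> bool:
    """Request base_url + path and apply the heuristic (needs network access)."""
    url = base_url.rstrip("/") + "/" + path.lstrip("/")
    req = urllib.request.Request(url, headers={"User-Agent": "recon-sketch"})
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            # Read at most 64 KiB; enough to judge what kind of content this is.
            body = resp.read(65536).decode("utf-8", errors="replace")
            return looks_like_markdown_leak(
                resp.status, resp.headers.get("Content-Type", ""), body
            )
    except urllib.error.URLError:
        # Covers HTTPError (403, 404, ...) and connection failures alike.
        return False
```

For example, probe("https://example.com", "/CLAUDE.md") returns True only when the path answers 200 with non-HTML content; example.com is a placeholder for an in-scope host.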

What I expected:

- Some generic AI notes

- Maybe coding instructions

- Probably useless information

What I found instead:

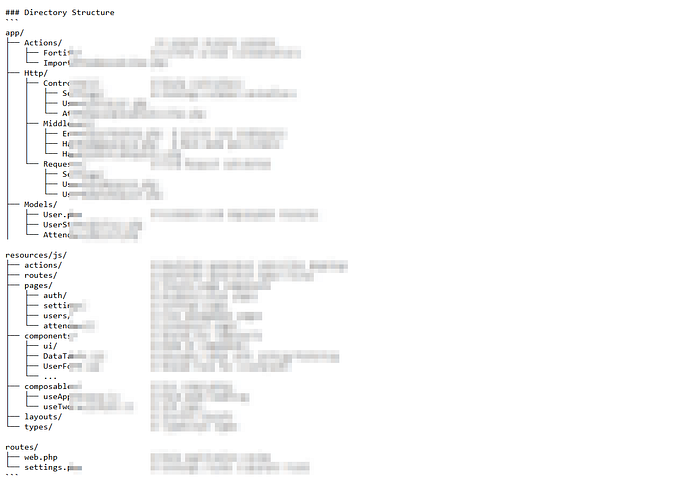

- Internal architecture references

- Deployment workflows

- Debugging instructions

- Infrastructure naming conventions

- AI agent operational context

- Security assumptions

- Development environment details

- Some login info

That was the moment I realized:

AI instruction files are slowly becoming the new accidental metadata leak.


Why These Files Exist

Modern engineering teams increasingly use AI tooling like:

- Claude

- Google Gemini

- GitHub Copilot

To make these assistants more effective, developers create repository-level instruction files such as:

CLAUDE.md

GEMINI.md

AGENTS.md

copilot-instructions.md

These files often tell the AI:

- How the project works

- Which commands to run

- Repository structure

- Deployment procedures

- Coding conventions

- Security considerations

- Internal workflows

Essentially:

They contain operational context.

And operational context is incredibly valuable during reconnaissance.
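Because these files have predictable names, they slot easily into a recon wordlist. This sketch builds candidate URL paths from the names in this article. The .github/ location is where GitHub Copilot reads repository instructions from; the lowercase variants and the optional directory prefixes are assumptions about where copies might end up.

```python
# File names discussed in this article. The lowercase variants are an
# assumption: paths are case-sensitive on most Linux web servers.
INSTRUCTION_FILES = ["CLAUDE.md", "GEMINI.md", "AGENTS.md"]
# GitHub Copilot reads repository instructions from .github/; the root-level
# copy is an assumption, in case a build step moved it.
COPILOT_PATHS = [".github/copilot-instructions.md", "copilot-instructions.md"]


def candidate_paths(prefixes: tuple[str, ...] = ("",)) -> list[str]:
    """Return unique URL paths to request, optionally under directory prefixes."""
    names: list[str] = []
    for name in INSTRUCTION_FILES:
        names.extend([name, name.lower()])
    names.extend(COPILOT_PATHS)

    paths: list[str] = []
    for prefix in prefixes:
        prefix = prefix.strip("/")
        for name in names:
            path = f"/{prefix}/{name}" if prefix else f"/{name}"
            if path not in paths:  # keep first-seen order, skip duplicates
                paths.append(path)
    return paths
```

For instance, candidate_paths(("", "static")) also tries each name under /static/; "static" is only an illustrative prefix.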

The Real Problem: Deployment Pipelines Ship Everything

Most of the exposures I noticed were not caused by sophisticated mistakes.

They happened because deployment pipelines blindly copied entire repositories.

Typical examples:

COPY . .

Or:

cp -r . /var/www/html

Which means:

Everything inside the repository becomes part of the deployment artifact.

Including:

CLAUDE.md

GEMINI.md

notes/

internal-docs/

debugging.md

And because markdown files are rarely considered dangerous, nobody blocks them.
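One way to preview the damage before deploying is to list the URLs a blanket copy would create. The sketch below assumes a deploy like cp -r . /var/www/html, with the web server serving that tree as-is, and maps each file in a local checkout to its would-be URL; base_url is a placeholder.

```python
import os
from pathlib import Path
from urllib.parse import quote


def served_urls(repo_root: str, base_url: str) -> list[str]:
    """Map every file in a checkout to the URL it would get in a web root.

    Assumes a deploy like `cp -r . /var/www/html`, where <repo>/notes/x.md
    ends up reachable at <base_url>/notes/x.md. Nothing is filtered, which
    is what a blanket copy does (.git included).
    """
    root = Path(repo_root)
    base = base_url.rstrip("/")
    urls = []
    for dirpath, dirnames, filenames in os.walk(root):
        dirnames.sort()  # deterministic, top-down order
        for name in sorted(filenames):
            rel = (Path(dirpath) / name).relative_to(root).as_posix()
            urls.append(f"{base}/{quote(rel)}")
    return urls
```

Searching the output for CLAUDE.md, notes/ or internal-docs/ shows what a scanner could request after the next deploy.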

Why This Matters More Than People Think

Many developers assume:

"It's just documentation."

But modern AI instruction files are very different from traditional READMEs.

Some exposed examples included:

Infrastructure References

Deploy using k8s-prod namespace

Redis host: redis.internal.local

Internal Workflow Logic

Use staging auth bypass for testing flows

AI Agent Behavior

Never expose admin APIs externally.

Use /internal/debug routes only for ops.

Ironically, the instruction file itself was publicly accessible from the internet.
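Lines like these are exactly what makes an exposed file worth triaging. As a rough sketch, a few regular expressions can pull out internal host names, URL paths and credential-looking pairs. The patterns are illustrative assumptions and will both miss things and flag harmless text.

```python
import re

# Illustrative patterns only; tune them to the target's own naming.
PATTERNS = {
    # Host names under common private suffixes, e.g. redis.internal.local
    "internal_host": re.compile(
        r"\b[a-z0-9-]+(?:\.[a-z0-9-]+)*\.(?:internal|local|corp|lan)\b", re.I
    ),
    # Absolute URL paths such as /internal/debug
    "url_path": re.compile(r"(?<![\w.])/[a-z0-9_-]+(?:/[a-z0-9_.-]+)*", re.I),
    # key: value or key=value pairs that look like credentials
    "credential_hint": re.compile(
        r"\b(?:password|passwd|token|api[_-]?key|secret)\s*[:=]\s*\S+", re.I
    ),
}


def extract_indicators(text: str) -> dict[str, list[str]]:
    """Return unique matches per category, in order of first appearance."""
    found: dict[str, list[str]] = {}
    for label, pattern in PATTERNS.items():
        values: list[str] = []
        for match in pattern.finditer(text):
            if match.group(0) not in values:
                values.append(match.group(0))
        found[label] = values
    return found
```

Applied to the examples quoted above, it picks out redis.internal.local as an internal host and /internal/debug as a path.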

The Bug Hunting Perspective

What made this interesting from a bug hunting standpoint was the consistency. Once I started checking for these files, I kept finding them. The reason is simple: AI-assisted development has exploded faster than deployment hygiene has evolved.

Organizations adapted to using AI.

But they didn't extend their security review process to cover AI-generated operational files.

The New Reconnaissance Surface

Traditionally, reconnaissance focused on:

- Open ports

- JS files

- Public buckets

- Metadata leaks

- Stack fingerprinting

Now there's a new layer:

AI Operational Context Leakage

These files can reveal:

- Internal terminology

- Service relationships

- Engineering practices

- Tooling assumptions

- Security workflows

- Automation logic

- Hidden endpoints

- Architecture patterns

Sometimes they explain the application better than public documentation ever could. :D

Why This Will Likely Become More Common

The pattern is increasing because modern workflows naturally encourage it.

Developers now:

- Use AI agents daily

- Store AI instructions in repositories

- Auto-deploy directly from Git

- Use rapid CI/CD pipelines

- Deploy monorepos without filtering

Meanwhile deployment configurations still commonly rely on:

COPY . .

That combination quietly creates exposure.

Defensive Measures

Organizations should start treating AI instruction files as sensitive operational assets.

1. Use Deployment Allow Lists

Instead of copying everything:

COPY dist/ /app/

Deploy only required production artifacts.
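The same allow-list idea applies when a pipeline stages files itself rather than through a Dockerfile. A minimal sketch, assuming a hypothetical layout where the build output sits in a top-level dist/ directory: like COPY dist/ /app/, it copies that directory's contents into the destination, and everything not on the list stays behind.

```python
import shutil
from pathlib import Path

# Hypothetical allow list: only the build output directory is deployed.
ALLOWED = ("dist",)


def stage_artifact(repo_root: str, out_dir: str, allowed=ALLOWED) -> list[str]:
    """Copy only allow-listed entries from repo_root into out_dir.

    Like `COPY dist/ /app/`, a listed directory's contents (not the
    directory itself) land in out_dir. Unlisted files such as CLAUDE.md
    or notes/ are never copied. Returns the staged files, sorted.
    """
    src, dst = Path(repo_root), Path(out_dir)
    dst.mkdir(parents=True, exist_ok=True)
    for entry in allowed:
        path = src / entry
        if path.is_dir():
            shutil.copytree(path, dst, dirs_exist_ok=True)
        elif path.is_file():
            shutil.copy2(path, dst / path.name)
    return sorted(
        p.relative_to(dst).as_posix() for p in dst.rglob("*") if p.is_file()
    )
```

An allow list is only as clean as what it allows: if a bundler copies a public/ folder into dist/ verbatim, an instruction file placed there would still ship, so a check on the final artifact remains useful.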

2. Add .dockerignore

Example:

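**/CLAUDE.md

**/GEMINI.md

**/AGENTS.md

docs/

internal/

A bare entry such as CLAUDE.md only matches at the root of the build context; the **/ prefix also excludes copies in subdirectories.

3. Block Markdown Access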

Example Nginx rule:

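location ~* \.(md|txt)$ {

deny all;

}

Note that matching txt also blocks robots.txt and /.well-known/security.txt; drop txt from the pattern if those files need to stay reachable.

4. Add CI/CD Security Checks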

Example:

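found=$(find . -type f \( -iname "CLAUDE.md" -o -iname "GEMINI.md" -o -iname "AGENTS.md" \))

if [ -n "$found" ]; then echo "$found"; exit 1; fi

Fail builds if sensitive AI instruction files exist inside deployment artifacts. find itself exits with status 0 whether or not anything matches, so the check has to test its output, as the if statement does here.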
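If a pipeline already runs Python steps, the same gate can be written there. A sketch under simple assumptions: the blocked set mirrors the file names in this article, matching is case-insensitive like find -iname, and the return value of main is meant to be used as the process exit code.

```python
import os
import sys

# Names to block anywhere in the artifact, compared case-insensitively
# (like `find -iname`). Assumed list; extend it to match your conventions.
BLOCKED = {"claude.md", "gemini.md", "agents.md", "copilot-instructions.md"}


def find_blocked(artifact_dir: str) -> list[str]:
    """Return relative paths of blocked files under artifact_dir."""
    hits = []
    for dirpath, dirnames, filenames in os.walk(artifact_dir):
        dirnames.sort()  # deterministic order for readable CI logs
        for name in sorted(filenames):
            if name.lower() in BLOCKED:
                rel = os.path.relpath(os.path.join(dirpath, name), artifact_dir)
                hits.append(rel.replace(os.sep, "/"))
    return hits


def main(argv: list[str]) -> int:
    """Scan argv[1] (default: current directory); return a process exit code."""
    artifact_dir = argv[1] if len(argv) > 1 else "."
    hits = find_blocked(artifact_dir)
    for hit in hits:
        print(f"AI instruction file in artifact: {hit}", file=sys.stderr)
    # A non-zero exit status is what makes the CI step fail.
    return 1 if hits else 0
```

From a script's entry point, sys.exit(main(sys.argv)) turns a hit into a failed CI step, e.g. when invoked as python check_artifact.py dist/ (check_artifact.py being a hypothetical name).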

The Bigger Shift Nobody Is Discussing

In the past, operational knowledge lived mostly inside people's heads or internal wikis.

Now it increasingly lives inside AI context files.

That fundamentally changes the attack surface.

We are entering a phase where:

AI instructions are becoming infrastructure metadata.

And just like exposed .git directories became a recognizable security issue years ago, accidentally deployed AI instruction files may soon become another standard reconnaissance vector.

Final Thoughts

What made this pattern even more interesting was that shortly after noticing these exposures during bug hunting, a very similar incident reportedly happened with Apple itself.

Yesterday, I saw reports that Apple had accidentally shipped internal CLAUDE.md instruction files inside an update to the Apple Support app (version 5.13). Researchers inspecting the application package reportedly discovered internal AI instruction files bundled directly into the production release.

The leaked files allegedly contained references to:

- AI-assisted support workflows

- Internal conversational support architecture

- Backend integration details

- Session handling logic

- Internal tooling references

- Anthropic Claude usage patterns

Apple quickly pushed a follow-up update removing the files, suggesting they were never intended to be publicly distributed.
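Shipped app packages can be checked for the same files before release. iOS .ipa and Android .apk files are ZIP archives, so Python's zipfile module can list their members. This is a generic sketch that matches on file names only, not a reconstruction of how the Apple files were found.

```python
import zipfile

# Instruction-file names from this article, compared case-insensitively.
NAMES = {"claude.md", "gemini.md", "agents.md", "copilot-instructions.md"}


def instruction_files_in_archive(archive) -> list[str]:
    """List members of a ZIP-based package whose file name is a known instruction file.

    `archive` can be a path or a binary file object; .ipa and .apk packages
    are ZIP archives, so zipfile reads them directly.
    """
    with zipfile.ZipFile(archive) as zf:
        return [
            info.filename
            for info in zf.infolist()
            if not info.is_dir()
            and info.filename.rsplit("/", 1)[-1].lower() in NAMES
        ]
```

For example, instruction_files_in_archive("SomeApp.ipa") lists any matching members; the file name is a placeholder.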

The important takeaway wasn't whether the files contained critical secrets.

It was the fact that even one of the world's most security-conscious companies accidentally allowed AI operational context files to reach production artifacts.

That incident perfectly highlighted something many bug hunters are beginning to notice:

AI instruction files are quietly becoming a new class of information disclosure.

Traditionally, markdown files inside production bundles were considered low-risk.

But in the AI-assisted development era, files like:

CLAUDE.md

GEMINI.md

AGENTS.md

copilot-instructions.md

can reveal surprisingly deep operational context about:

- Architecture

- Internal workflows

- AI integrations

- Security assumptions

- Development practices

- Deployment pipelines

The Apple incident demonstrated that this is no longer a hypothetical issue or isolated mistake.

It is becoming an industry-wide pattern.