Introduction

A self-taught security enthusiast had been trying to break into bug bounty hunting for eleven months. She had no formal programming background, no computer science degree, and a deeply held belief that both of those absences were the primary reason her results were not improving. She was spending most of her available time trying to learn Python well enough to write the automation scripts she kept reading about in community forums.

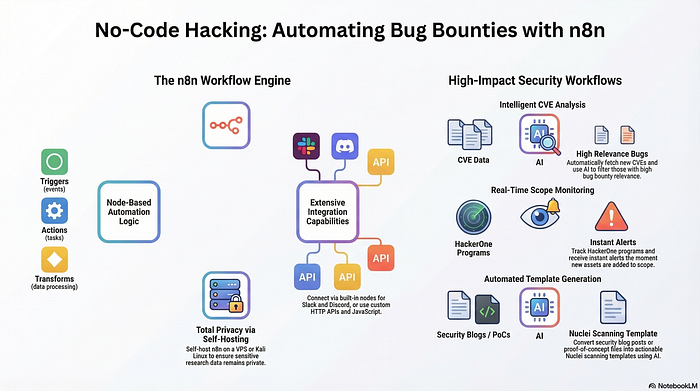

Then she did something that felt almost like cheating. She stopped trying to learn scripting and started building workflows visually. She connected security APIs, automated her reconnaissance sequence, integrated an AI layer for result analysis, and built a notification system that flagged genuinely interesting findings for manual review.

Within six weeks her submission quality had improved more than it had in the previous eleven months combined.

The myth she had been living inside, the one most people entering no-code bug bounty automation carry without questioning, is that coding ability is the primary barrier to effective ethical hacking automation, and that without scripting skills you are permanently at a disadvantage against hunters who can write their own tools.

The real issue was never her inability to code. It was her inability to build a coherent, repeatable workflow that connected the right data sources to the right analysis process to the right follow-through actions. That problem has a solution that does not require a single line of code.

The Coding Gatekeeping Myth and What It Is Actually Hiding

The belief that ethical hacking requires strong programming skills is so embedded in security culture that questioning it feels almost heretical. Forums, certification paths, and community content consistently reinforce the message that the serious practitioners are the ones who write their own tools, understand the code underneath the frameworks they use, and can build custom scripts for novel situations.

This belief is not entirely wrong. Deep technical knowledge is valuable, and in some categories of security work it is irreplaceable. Advanced exploit development, custom payload crafting for highly specific targets, and kernel-level vulnerability research all require programming fluency that no visual workflow builder can substitute for.

But bug bounty hunting, particularly at the reconnaissance, vulnerability scanning, and workflow automation layer, is not primarily those things. It is primarily about systematic coverage of attack surface, intelligent prioritization of where to invest manual investigation time, and efficient processing of the enormous volume of data that serious reconnaissance generates.

None of those things require you to write code. They require you to think systematically, connect the right tools and APIs in the right sequence, and build feedback loops that improve your process based on what you find.

The insight here is worth sitting with: the value in automation is not in the code that implements it but in the logic that designs it. A hunter who understands which data sources matter, in what sequence they should be queried, how the outputs should be filtered and prioritized, and what conditions should trigger manual investigation can implement that logic in a visual workflow builder just as effectively as in a Python script. In many cases the visual version is more effective, because the visual representation makes the logic easier to audit, modify, and share.

A concrete example: a hunter builds a no-code workflow that queries multiple subdomain enumeration APIs in parallel, deduplicates the results, filters out assets that match a known-inactive pattern, runs the remaining assets through a technology fingerprinting API, and then sends a structured summary to a review interface with assets grouped by technology stack. Another hunter writes a Python script that does the same thing. The outputs are functionally identical. In this scenario, the no-code version took three hours to build instead of eight, needs less upkeep when an API specification changes as long as the platform keeps its connector node current, and can be modified by anyone on a collaborative team without programming knowledge.
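For readers who want to see that logic spelled out, here is a minimal Python sketch of the same sequence. Each function corresponds to a node you would drag into a visual builder. Everything named here is an assumption for illustration: `source_a` and `source_b` stand in for real subdomain enumeration APIs, `FAKE_FINGERPRINTS` stands in for a fingerprinting service, and the inactive pattern is invented.

```python
import re
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor

# Hypothetical stand-ins for subdomain enumeration APIs. A real workflow
# would call actual services here; these return canned data.
def source_a(domain):
    return [f"www.{domain}", f"api.{domain}", f"old-staging.{domain}"]

def source_b(domain):
    return [f"API.{domain}", f"shop.{domain}", f"www.{domain}"]

# Hypothetical fingerprinting results, keyed by the first hostname label.
FAKE_FINGERPRINTS = {"www": "nginx", "api": "nginx", "shop": "wordpress"}

def fingerprint(host):
    return FAKE_FINGERPRINTS.get(host.split(".")[0], "unknown")

# Assumed naming convention for assets known to be inactive.
INACTIVE_PATTERN = re.compile(r"^(old|deprecated|decom)-")

def run_recon(domain, sources):
    # 1. Query every source in parallel.
    with ThreadPoolExecutor(max_workers=len(sources)) as pool:
        batches = list(pool.map(lambda src: src(domain), sources))
    # 2. Deduplicate (case-insensitively) and sort for stable output.
    hosts = sorted({h.lower() for batch in batches for h in batch})
    # 3. Drop assets matching the known-inactive pattern.
    active = [h for h in hosts if not INACTIVE_PATTERN.match(h)]
    # 4. Fingerprint what remains and group by technology stack.
    grouped = defaultdict(list)
    for host in active:
        grouped[fingerprint(host)].append(host)
    return dict(grouped)
```

Calling `run_recon("example.com", [source_a, source_b])` returns the surviving hosts grouped by stack, which is the structured summary a review interface would display.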

The code was never the point. The logic was the point. And logic is language-agnostic.

What AI Actually Adds to Security Workflows That Most Builders Miss

Integrating AI into bug bounty and ethical hacking workflows has become extremely popular very quickly, and as with most rapidly adopted capabilities, the gap between how it is being used and how it could be used is significant.

The most common use of AI in security automation workflows is summarization. Raw scanner output goes in, human-readable summary comes out. This is genuinely useful — scanner output is frequently verbose and poorly organized, and having an AI layer that identifies the most relevant findings and presents them clearly reduces manual review time meaningfully.

The higher-leverage applications involve using AI not to describe what the tools found, but to reason about what the findings mean in context. To look at a collection of discovered assets, technology fingerprints, and endpoint behaviors and generate hypotheses about where business logic vulnerabilities might exist. To cross-reference the current target's technology stack against known vulnerability patterns for that stack and identify which manual investigation paths are most likely to be productive. To analyze the structure of an application's API responses and identify inconsistencies in how different endpoints handle the same data that might indicate authorization boundary issues.

This is AI as an analytical collaborator rather than a formatting assistant, and it requires a fundamentally different prompt architecture than summarization does.

The counter-intuitive insight here is that the quality of AI contribution to a security workflow is almost entirely determined before the AI node runs — in the design of the context that gets passed to it and the specificity of what it is being asked to reason about. Generic input produces generic output. Highly structured, contextually rich input that frames the AI's task as a specific analytical problem produces output that can meaningfully influence which investigation paths a hunter prioritizes.

A practical illustration: a workflow passes a list of discovered endpoints to an AI node with the instruction to summarize what was found. The output is a formatted list with brief descriptions. A different workflow passes the same endpoint list along with the application's stated business function, the user roles the application supports, and a structured prompt asking the AI to identify which endpoints are most likely to handle sensitive operations based on their naming patterns and the business context, and to rank them by investigation priority. The second output is a prioritized investigation queue with reasoning that a human reviewer can evaluate and act on immediately.
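To make the second workflow concrete, here is a rough sketch of how the context for that AI node might be assembled. The function name, the prompt wording, and the JSON layout are all assumptions, not a prescribed format. The point is only that the business function, user roles, and endpoints travel together with a specific ranking task.

```python
import json

def build_prioritization_prompt(endpoints, business_function, user_roles):
    # Bundle the raw data with the context the model needs to reason about it.
    context = {
        "business_function": business_function,
        "user_roles": user_roles,
        "endpoints": endpoints,
    }
    # Frame a specific analytical task instead of asking for a summary.
    return (
        "You are assisting an authorized bug bounty review.\n"
        "Using the context below, identify which endpoints most likely "
        "handle sensitive operations, based on their naming patterns and "
        "the business context.\n"
        "Rank them by investigation priority and give one sentence of "
        "reasoning for each.\n\n"
        "Context (JSON):\n" + json.dumps(context, indent=2)
    )
```

In a visual builder, the equivalent is a template node that merges fields from earlier steps into the prompt before the AI node runs.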

Same data. Same AI capability. Completely different value. The difference lives entirely in how the workflow was designed before the AI node was reached.

The Workflow Architecture That Separates Serious Hunters From Busy Ones

There is a pattern in how effective no-code security automation gets built that is worth describing precisely, because most people building these workflows for the first time make an architectural mistake that limits everything downstream.

The mistake is building workflows as linear pipelines rather than as feedback loops.

A linear pipeline takes input at one end and produces output at the other. Run recon, get results, review results, end. This architecture is easy to build, easy to understand, and produces value for straightforward tasks. It also has a hard ceiling, because it cannot improve based on what it finds. Every execution starts from the same place and follows the same path regardless of what previous executions discovered.

A feedback loop architecture is different. It includes stages where the output of one phase is evaluated against criteria, and the result of that evaluation determines what happens next. Interesting findings trigger deeper investigation automatically. Patterns identified across multiple targets inform how future targets get prioritized. Results that fall below a relevance threshold get filtered out before they reach the manual review stage rather than after. The workflow gets smarter over time because the logic of each phase is informed by the outputs of previous ones.

This architecture is more complex to build initially. It requires thinking about the workflow not just as a sequence of steps but as a system with states, conditions, and branches. But it is also the architecture that produces sustainable results rather than one-time output, because it encodes learning into the process rather than requiring a human to re-apply the same judgment on every execution.
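The difference between a pipeline and a loop can be sketched in a few lines of Python. This is an illustration under invented assumptions: the relevance score, the two thresholds, and the 0.1 boost per confirmed finding are made-up numbers, and `WorkflowState` stands in for whatever storage a workflow platform offers between runs.

```python
from dataclasses import dataclass, field

@dataclass
class Finding:
    asset: str
    tech: str
    score: float  # hypothetical relevance score between 0 and 1

@dataclass
class WorkflowState:
    # Persisted between runs so later executions learn from earlier ones.
    tech_hits: dict = field(default_factory=dict)

REVIEW_THRESHOLD = 0.5     # below this, never reaches a human
DEEP_DIVE_THRESHOLD = 0.8  # at or above this, trigger deeper investigation

def route(finding, state):
    # Stacks that produced confirmed findings before get a priority boost.
    boost = 0.1 * state.tech_hits.get(finding.tech, 0)
    boosted = min(1.0, finding.score + boost)
    if boosted >= DEEP_DIVE_THRESHOLD:
        return "deep_investigation"
    if boosted >= REVIEW_THRESHOLD:
        return "manual_review"
    return "filtered_out"

def record_confirmed(finding, state):
    # The feedback step: a confirmed result changes future routing.
    state.tech_hits[finding.tech] = state.tech_hits.get(finding.tech, 0) + 1
```

A linear pipeline would have only `route` with fixed thresholds. The loop exists because `record_confirmed` writes back into the state that `route` reads on the next execution.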

The intellectual discipline required is designing the workflow backward from the decision you need to make rather than forward from the data you have available. What does a human reviewer need to see in order to decide whether something deserves deep investigation? Design the workflow to produce exactly that — not everything the tools can produce, but the specific filtered, analyzed, contextualized output that makes the human decision as efficient and accurate as possible.

Why Ethical Boundaries Are a Workflow Design Problem, Not Just a Legal One

There is a dimension to no-code bug bounty automation that most technical content treats as a disclaimer rather than a design consideration, and the gap between those two framings has real consequences.

The ethical and legal boundaries of bug bounty work — operating strictly within defined program scope, avoiding actions that could disrupt production systems, ensuring that automated activity does not cross the line from authorized testing into unauthorized access — are not just rules to be aware of. They are requirements that need to be encoded into the workflow architecture itself.

A workflow that is technically capable of scanning any target it discovers, without scope verification at each step, is a liability regardless of the hunter's intentions. Automation moves faster than manual review. If scope verification is something the hunter is supposed to do after the workflow runs rather than something the workflow enforces before each action, the gap between discovery and action is a window where things can go wrong in ways that are difficult to explain to a program owner.

Building ethical constraints into workflow architecture means treating scope as a filter that every discovered asset must pass before any active scanning action is taken. It means building explicit checks that compare discovered assets against program scope definitions before the workflow proceeds. It means designing notification and pause points for edge cases where scope is ambiguous rather than letting the automation make the judgment call.
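As a sketch of what that filter might look like, the Python below applies a default-deny scope check before anything is handed to an active scanning step. The scope lists are invented examples. A real workflow would load them from the program's published scope, and the wildcard matching shown here is an assumption about how that scope is expressed.

```python
from fnmatch import fnmatchcase

# Hypothetical scope definitions, for illustration only.
IN_SCOPE = ["*.example.com"]
OUT_OF_SCOPE = ["payments.example.com", "*.corp.example.com"]
AMBIGUOUS = ["*.example-cdn.net"]  # ownership unclear, so a human decides

def scope_decision(host):
    host = host.lower().rstrip(".")
    # Explicit exclusions win over everything else.
    if any(fnmatchcase(host, p) for p in OUT_OF_SCOPE):
        return "block"
    # Edge cases pause the workflow instead of letting it guess.
    if any(fnmatchcase(host, p) for p in AMBIGUOUS):
        return "pause_for_review"
    if any(fnmatchcase(host, p) for p in IN_SCOPE):
        return "allow"
    # Default deny: anything not explicitly in scope is never scanned.
    return "block"

def gate(hosts):
    # Sort every discovered asset into one of three buckets before scanning.
    buckets = {"allow": [], "pause_for_review": [], "block": []}
    for host in hosts:
        buckets[scope_decision(host)].append(host)
    return buckets
```

Only the `allow` bucket should ever reach a scanning node. The `pause_for_review` bucket maps to a notification step, and `block` is logged and dropped.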

This is not just ethically correct. It is professionally necessary. Programs that experience automated activity outside their defined scope lose trust in the hunter responsible for it, regardless of intent. That trust, once lost, is extraordinarily difficult to rebuild and affects access to future programs.

The hunters building no-code security workflows who are consistently welcomed back to programs, and who build genuine professional reputations, are the ones who made scope compliance a first-class architectural concern rather than an afterthought. Their workflows enforce the boundaries automatically, at every step, because they understood from the beginning that ethical operation is not something you remember to do. It is something you build in.

In 48 hours, I will reveal a simple workflow-readiness checklist that most no-code security builders skip before they run their first automated scan — and skipping it is the single most consistent reason their workflows produce results they cannot actually use or report.

If this changed how you are thinking about what no-code automation can actually do in a serious ethical hacking workflow, follow for more honest breakdowns of where the real capability is and how builders without traditional coding backgrounds are accessing it. Share this with someone who believes their lack of programming skills is the thing standing between them and effective security automation — this conversation might be the most useful one they have this month.

What is one belief about needing technical coding skills that held you back from building something in the security or automation space — and what changed when you finally started building anyway?