While hunting for broken-link takeover opportunities, I came across an old Google Cloud Storage bucket that had previously been used to distribute Helm binaries. The bucket was no longer owned by anyone, but references to it still existed across the ecosystem.

Out of curiosity, I searched GitHub and quickly found thousands of repositories still referencing this bucket in CI pipelines, Dockerfiles, and deployment scripts.
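
The same kind of search can be run against a local checkout to see whether your own pipelines still point at a dead bucket. Below is a minimal Python sketch, not the search I actually ran on GitHub. The bucket name `example-abandoned-bucket` is a placeholder (the real name is not given in this post), and the list of file suffixes is an illustrative assumption.

```python
import re
from pathlib import Path

# Hypothetical bucket name; the real one is not named in this post.
BUCKET_URL = re.compile(r"storage\.googleapis\.com/example-abandoned-bucket/\S+")

# File names that commonly hold download steps (illustrative, not exhaustive).
CANDIDATE_SUFFIXES = ("Dockerfile", ".yml", ".yaml", ".sh")


def find_references(root):
    """Return (path, line_number, matched_url) for every reference under root."""
    hits = []
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or not path.name.endswith(CANDIDATE_SUFFIXES):
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        for number, line in enumerate(text.splitlines(), start=1):
            for match in BUCKET_URL.finditer(line):
                hits.append((str(path), number, match.group(0)))
    return hits
```

Calling `find_references(".")` from the root of a repository returns one tuple per matching line, which is enough to decide whether a pipeline needs to be repointed.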

At this stage, I started reporting the issue directly to programs where I could clearly see usage in their GitHub repositories. Since the references were public, it was straightforward to demonstrate potential impact.

I received some initial bounties from these reports. The details are in my earlier writeup, "How I Took Over a Forgotten Google Storage Bucket Used to Distribute Helm Binaries."

However, this approach felt limited: I was only reporting based on visible references, not actual live usage.

That's when I decided to take a different approach.

Instead of continuing with static findings, I enabled access logging on the bucket and started monitoring real traffic.

Within a few days, I started seeing thousands of requests daily from a wide range of servers. This confirmed that many systems were still actively pulling binaries from this location.
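
To give a sense of how such logs can be turned into numbers, here is a minimal Python sketch. It assumes the logs are Cloud Storage usage logs, which are CSV files with columns such as `time_micros`, `c_ip`, and `cs_method`; this post does not state which logging mechanism was used, so treat the format as an assumption. The function name `summarize_usage_log` is made up for this example.

```python
import csv
import io
from collections import Counter
from datetime import datetime, timezone


def summarize_usage_log(csv_text):
    """Count GET requests per UTC day and collect the unique client IPs."""
    per_day = Counter()
    client_ips = set()
    for row in csv.DictReader(io.StringIO(csv_text)):
        if row["cs_method"] != "GET":
            continue  # only downloads matter here
        seconds = int(row["time_micros"]) / 1_000_000
        day = datetime.fromtimestamp(seconds, tz=timezone.utc).date().isoformat()
        per_day[day] += 1
        client_ips.add(row["c_ip"])
    return per_day, client_ips
```

Feeding it the text of each log file gives a per-day request count plus the set of client IPs, which is roughly the signal described above.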

That's when the real idea clicked.

If these systems were blindly downloading binaries, I could simulate a supply chain attack.
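
For contrast, "not blindly" would mean something like pinning a checksum and refusing to use a download that does not match it. The Python sketch below is a generic illustration of that check under my own assumptions, not something the affected pipelines were known to do; the function names are hypothetical.

```python
import hashlib
import hmac


def sha256_of(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of the given bytes."""
    return hashlib.sha256(data).hexdigest()


def verify_download(data: bytes, expected_sha256: str) -> bool:
    """Return True only if the downloaded bytes match the pinned digest."""
    return hmac.compare_digest(sha256_of(data), expected_sha256.lower())
```

A pipeline that pins the digest in its own repository would fail such a check as soon as the archive behind the URL changed.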

Using AI (Cursor), I generated a script that:

- Recreated Helm binary archives matching the original naming patterns

- Covered multiple OS/architecture combinations and versions

- Injected a small bash payload inside each archive

The payload was simple:

- Collect non-sensitive system information (hostname, IP, whoami, cwd)

- Send it to my controlled webhook

On the backend, I again used AI to quickly set up:

- A webhook receiver

- Database storage

- WHOIS lookup for incoming IPs

- A basic UI to visualize incoming data
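
The receiver and storage pieces can be sketched in a few dozen lines of standard-library Python. This is a simplified illustration, not the code that was actually generated: the table layout, the function names, and the port are assumptions, and the WHOIS lookup and UI are left out.

```python
import json
import sqlite3
from http.server import BaseHTTPRequestHandler, HTTPServer

FIELDS = ("hostname", "ip", "whoami", "cwd")  # the fields listed in this post


def open_db(path=":memory:"):
    """Open (or create) the SQLite database that stores incoming reports."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS hits ("
        "received_at TEXT DEFAULT CURRENT_TIMESTAMP, "
        "hostname TEXT, ip TEXT, whoami TEXT, cwd TEXT)"
    )
    return conn


def store_hit(conn, record):
    """Insert one report, keeping only the expected fields."""
    values = [str(record.get(name, "")) for name in FIELDS]
    conn.execute(
        "INSERT INTO hits (hostname, ip, whoami, cwd) VALUES (?, ?, ?, ?)", values
    )
    conn.commit()


def make_handler(conn):
    """Build a request handler class bound to the given database connection."""

    class Handler(BaseHTTPRequestHandler):
        def do_POST(self):
            length = int(self.headers.get("Content-Length", 0))
            try:
                record = json.loads(self.rfile.read(length) or b"{}")
            except json.JSONDecodeError:
                record = None
            if not isinstance(record, dict):
                self.send_response(400)  # reject anything that is not a JSON object
                self.end_headers()
                return
            store_hit(conn, record)
            self.send_response(204)
            self.end_headers()

    return Handler


def serve(db_path="hits.db", port=8080):
    """Run the receiver on localhost until interrupted (port is an assumption)."""
    HTTPServer(("127.0.0.1", port), make_handler(open_db(db_path))).serve_forever()
```

Keeping `store_hit` separate from the HTTP handler makes the storage logic easy to exercise without starting a server.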

The entire pipeline was ready in minutes.

As soon as I started uploading the crafted binaries to the bucket, I began receiving hits — even before the upload completed.

Within a week, I was seeing:

- More than 1,000 requests per day

- Thousands of unique machines

- Continuous traffic from production environments

This clearly showed real-world impact at scale.

One major challenge was attribution.

Most IPs resolved to cloud providers such as AWS and GCP, which made it difficult to identify the organization actually behind them.

To improve signal, I:

- Filtered out generic cloud IP ranges

- Focused only on IPs with identifiable ownership
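
A filter like this can be sketched with Python's `ipaddress` module. The CIDR blocks below are placeholders taken from the reserved documentation ranges, and the provider names are made up; a real run would load the ranges that cloud providers publish (AWS and Google Cloud both publish theirs as JSON). This is an illustration of the idea, not the exact filter I used.

```python
import ipaddress

# Placeholder CIDRs from the documentation ranges (RFC 5737); a real run would
# load the published ranges of each cloud provider instead.
CLOUD_RANGES = {
    "example-cloud-a": ["192.0.2.0/24"],
    "example-cloud-b": ["198.51.100.0/24"],
}


def cloud_provider_for(ip, ranges=CLOUD_RANGES):
    """Return the provider whose range contains ip, or None if there is none."""
    address = ipaddress.ip_address(ip)
    for provider, cidrs in ranges.items():
        if any(address in ipaddress.ip_network(cidr) for cidr in cidrs):
            return provider
    return None


def attributable_ips(ips, ranges=CLOUD_RANGES):
    """Keep only the IPs that do not fall inside a known cloud range."""
    return [ip for ip in ips if cloud_provider_for(ip, ranges) is None]
```

Whatever survives the filter is the short list worth running ownership lookups on.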

This helped surface traffic from recognizable organizations.

I began reporting affected companies through their bug bounty programs.

Responses varied:

- Some marked it as informational (test or isolated systems)

- Some acknowledged but rated it low

- Others treated it as a critical supply chain risk

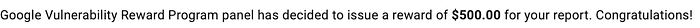

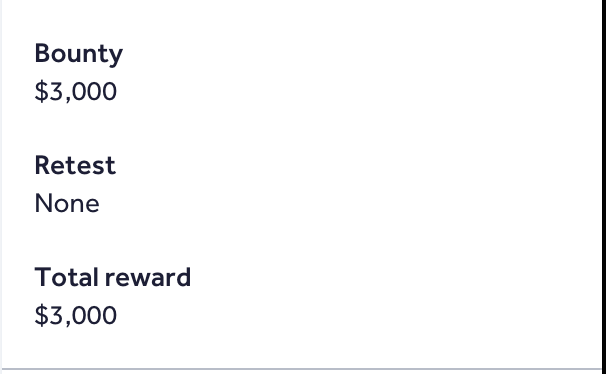

Over time, I received multiple bounties:

- Several private program rewards on HackerOne

- Additional payouts: $3K, $1K, $500, $100

- Some reports are still pending

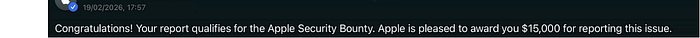

A few days later, I noticed traffic from an Apple-owned server.

That was a big moment.

I reported it immediately. Apple took it seriously and began an internal investigation. Eventually, they coordinated with Google and concluded that the best fix was to secure the bucket itself.

There was even discussion around ownership transfer.

In the end:

- Google took over the bucket

- The supply chain risk was neutralized at the root

Final numbers:

- Total earnings: ~$25,000

- Highest single bounty: $15,000 (Apple)

- Impact: Thousands of machines across multiple organizations

This could have gone even further if the bucket had remained unclaimed a bit longer 😉