There is a question at the center of autonomous systems governance that the technology industry has been trying to answer for several years now. The question is deceptively simple: under what authority does an AI agent act?

The technology industry has been answering it with identity infrastructure. Better credentials. Stronger tokens. Shorter lifetimes. More granular scopes. Zero trust pushed deeper into the stack. A growing ecosystem of detection, interception, and supervision layers designed to catch autonomous agents doing things they shouldn't.

These are serious efforts by serious people. They are also answers to the wrong question.

The right question was settled centuries ago. Not by computer scientists. Not by cryptographers. By lawyers.

The Trillion-Dollar Category Error

For thirty years, digital infrastructure has been built on a single foundational assumption: that governing what systems may do begins with establishing who they are.

Identity first. Authority follows.

This assumption is so deeply embedded that most practitioners never examine it. It produced the entire governance stack we rely on today: IAM, RBAC, SAML, OAuth, OpenID Connect, and Zero Trust. Each of these frameworks is a sophisticated elaboration of the same core logic. Establish the identity of the actor. Grant that identity a set of permissions. Supervise the exercise of those permissions. Revoke them when necessary.

That logic works reasonably well when humans are the primary actors. A human presents a credential. A system grants access. The human does something. If it goes wrong, the human is accountable. The governance gap between the credential and the action is papered over by the presence of the human, who can be questioned, restrained, and held responsible.

Autonomous systems remove the human entirely from that gap. An agent receives a credential, or inherits a set of permissions, or operates within a session that was authenticated at some earlier point, and then acts continuously, at machine speed, across systems and contexts, producing real-world effects that no human has individually reviewed or authorized at the moment they occur.

In that architecture, the identity-first model doesn't just become less effective. It becomes structurally incoherent. Because what identity establishes is who an actor is. And who an actor is tells you almost nothing about whether this specific action, at this specific moment, on behalf of this specific party, is authorized.

These are different questions. Law has always known they are different questions. Digital infrastructure collapsed them into a single token and called it governance.

That is the category error. And a trillion dollars of enterprise security infrastructure is built on it.

What Law Already Knows

Timothy Reiniger, co-chair of the American Bar Association's newly launched Autonomous Systems Governance Working Group, made an observation at the inaugural session in Atlanta last month that deserves to be the most-quoted sentence in AI governance this year.

"Legal doctrines already exist," he said, "refined over four centuries, in property, agency, contract, and products liability law. The problem is not the absence of legal doctrines. It is the current confusion of whether and how to apply these doctrines to emerging autonomous systems."

Read that carefully. The problem is not the absence of legal doctrines.

Law separated the question of identity from the question of authority a very long time ago. It did so for a reason that turns out to be precisely relevant to the governance of autonomous systems, even though autonomous systems didn't exist when the doctrine was developed.

The reason is this: knowing who someone is does not tell you whether they have the present right to act.

Property law has a concept called seisin [1, 2, 16], the present, specific right to act upon an interest, as distinct from merely holding a position in a chain of title. Standing in a governance chain does not confer the right to act. It establishes a position. The right to act is a separate question, evaluated at the moment of action, against the specific interest being affected.

Agency law has a parallel distinction. It separates attribution, who performed this action and who answers for it, from authority, whether the action was within the defined scope of delegated power [10]. These are not the same question. A party can be perfectly identifiable and still have committed an ultra vires act [7, 8]. Identity is established by the credential. Authority is established by something else entirely.

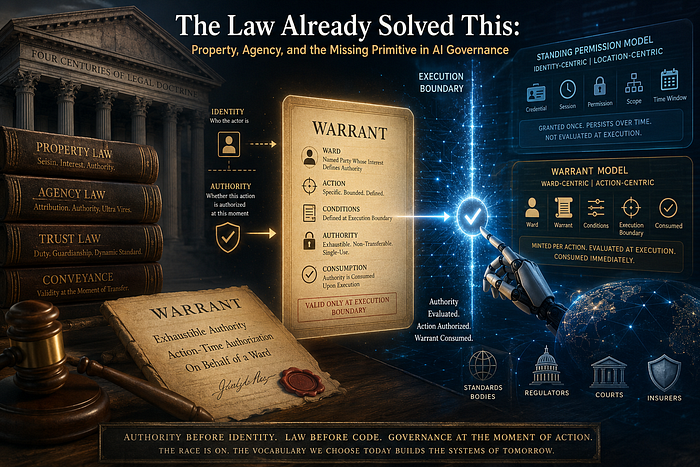

That something else is a warrant [16]. A bounded, specific, exhaustible grant of power to act upon a particular interest, under defined conditions, on behalf of a named party, consumed upon execution.

Courts have reasoned with this concept for centuries. Every jurisdiction that has developed a common law tradition has its own version. The question of whether an action was within the authorized scope of a protected interest is a property law question. The question of who performed the action and who answers for it is an agency law question. They require different instruments and different analyses.

Digital infrastructure has never implemented the first question at all. It has only ever implemented the second.

The Missing Primitive

Here is what this means in practice.

Every authorization framework in current use governs admission. OAuth governs the delegation of access. RBAC governs which roles have which permissions. Zero Trust governs whether a specific identity should be trusted at a specific network location. OIDC governs identity assertion for a session. These are all, in their different ways, answers to the question: Does this identity have scope to perform this class of actions?

None of them answers the question: Is this specific action, at this specific moment, within the authorized scope of the interest it will affect?

That question requires a different primitive. Not a credential that establishes identity. Not an access token, which establishes scope for a class of actions. Not a session, which establishes continuity. Something that activates for a single action, carries the authority for that action and no other, is evaluated at the exact moment the action is about to produce an effect, and is consumed immediately upon execution, regardless of outcome.

A warrant.

The warrant is not a refinement of the credential. It is not a credential with a shorter lifetime or a narrower scope. It is a structurally different instrument that answers a structurally different question. Where a credential says "this entity is known and has standing," a warrant says "this action is authorized, on behalf of this specific party, under these exact conditions, at this moment, and this authorization is consumed at execution."
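The properties listed above (single action, evaluated at the moment of effect, consumed at execution regardless of outcome) can be made concrete with a minimal sketch. Everything here is an assumption for illustration: the `Warrant` class, its fields, the `WarrantError` exception, and the use of an expiry time as a stand-in for "these exact conditions" are hypothetical names, not part of any existing standard or of the ABA working glossary. The sketch also assumes that a refused attempt does not consume the warrant, since nothing was executed.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Callable, Optional


class WarrantError(Exception):
    """Raised when no live warrant covers the attempted action."""


@dataclass
class Warrant:
    ward: str            # party on whose behalf the action is taken
    action: str          # the one action this warrant authorizes
    not_after: datetime  # stand-in for "these exact conditions" (assumption)
    consumed: bool = field(default=False, init=False)

    def execute(self, action: str, effect: Callable[[], object],
                now: Optional[datetime] = None) -> object:
        """Check the warrant at the execution boundary, then spend it."""
        now = now or datetime.now(timezone.utc)
        if self.consumed:
            raise WarrantError("warrant already exhausted")
        if action != self.action:
            raise WarrantError("action is outside the warrant")
        if now > self.not_after:
            raise WarrantError("conditions no longer hold")
        # Mark as consumed before running the effect, so that a failed
        # effect still exhausts the warrant (consumed regardless of outcome).
        self.consumed = True
        return effect()


deadline = datetime.now(timezone.utc) + timedelta(minutes=5)
warrant = Warrant(ward="acme-pension-fund", action="pay:invoice-1042",
                  not_after=deadline)
first = warrant.execute("pay:invoice-1042", lambda: "paid")

try:
    warrant.execute("pay:invoice-1042", lambda: "paid again")
    second = "allowed"
except WarrantError as exc:
    second = f"refused: {exc}"
```

The second call is refused even though the action, the actor, and the time window are unchanged. That is the structural difference from a credential: nothing about the agent's identity changed, but the authority no longer exists.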

The party on whose behalf the action is taken, the party whose interests define the outer boundary of what the authority may legitimately do, and who directly bears the consequences of the action, is the Ward. The Ward is not the agent. The Ward is not the credential holder. The Ward is the consequence-bearer, the entity whose proprietary interest generates the duty that constitutes the authority in the first place.

Authority flows from the Ward's interest, through a governance chain, to an agent, who may act only within the terms of a warrant traceable to that interest. When the warrant is exercised, it is gone. The agent does not accumulate authority. The agent does not carry standing permissions that persist across actions. Each action must stand on its own authorization, evaluated freshly at the execution boundary, against the Ward's current interests, not the assumptions that prevailed at some earlier point when the authority was first established.
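One way to picture "traceable to that interest" is as a check over the governance chain itself. The sketch below is a simplified model under stated assumptions: the `Link` record, the `traceable_to_ward` function, the party labels, and the rule that a link may never widen the scope it received are all hypothetical illustrations, not definitions drawn from the law or from any published protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    grantor: str       # who passes authority down the chain
    grantee: str       # who receives it
    scope: frozenset   # actions the grantee may be warranted for


def traceable_to_ward(chain, ward: str, agent: str, action: str) -> bool:
    """True only if the chain runs unbroken from the Ward to the agent
    and the action stays inside the scope at every step."""
    if not chain or chain[0].grantor != ward or chain[-1].grantee != agent:
        return False
    allowed = chain[0].scope
    for prev, link in zip(chain, chain[1:]):
        if link.grantor != prev.grantee:   # broken chain of delegation
            return False
        if not link.scope <= allowed:      # a link cannot widen what it received
            return False
        allowed = link.scope
    return action in allowed


chain = [
    Link("ward:acme-pension-fund", "delegate:treasury",
         frozenset({"pay:invoice-1042", "pay:invoice-1043"})),
    Link("delegate:treasury", "agent:payables-bot",
         frozenset({"pay:invoice-1042"})),
]

in_scope = traceable_to_ward(chain, "ward:acme-pension-fund",
                             "agent:payables-bot", "pay:invoice-1042")
out_of_scope = traceable_to_ward(chain, "ward:acme-pension-fund",
                                 "agent:payables-bot", "pay:invoice-1043")
```

In this model the agent is fully identified in both calls. Only the first action can be traced back to the Ward's interest, so only the first would be eligible for a warrant.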

This is not a new idea. It is the doctrine of exhaustion applied to governance [5, 6]. It is conveyance law applied to action [3, 4]. It is the legal principle that validity is evaluated at the moment of transfer, not at the moment of possession, and it applies to autonomous systems.

The law already has all of this. Digital infrastructure has simply never integrated it.

Why It Was Never Built

The absence of warrant-based governance in digital infrastructure is not an oversight. It is a structural consequence of the world in which that infrastructure was designed.

When OAuth was conceived, when SAML was standardized, when the identity-first model became the foundation of enterprise security, the assumption of human presence was so pervasive that it was invisible. Humans were in the loop. Humans could be questioned. Humans provided the governance judgment that the infrastructure didn't need to encode because it was always available from the person sitting at the keyboard.

Standing authority worked because the standing was human. The session persisted, but a human user was still attached to it. The token carried scope, but a human had requested that scope for a specific purpose. The role granted permissions, but a human was exercising them in a context they understood.

Remove the human, and standing authority becomes something different. It becomes ambient power. Permissions that were granted under one set of assumptions, exercised under another. Authority that was valid at delegation time, carrying forward into execution contexts that the original grant never contemplated.

This is what I called temporal drift in an earlier piece. The system granted authority under conditions that no longer obtain, and it has no mechanism to evaluate whether those conditions still hold at the moment of effect. Because the question was never built into the infrastructure. Because the infrastructure was always designed to assume a human would supply the answer.

Autonomous systems make that assumption unavailable. There is no human at the execution boundary. The agent acts continuously, across contexts, at speeds that preclude human review of individual actions. Standing authority, designed for human principals working at human speed, becomes structurally incompatible with machine-speed autonomy.

The governance stack that was adequate for the world it was designed for is not adequate for the world it now operates in. This is not a failure of implementation. It is a failure of legal architecture.

The Race That Is Already Happening

There is a concrete and urgent reason why this argument matters right now, and not merely in the abstract future tense that most AI governance writing inhabits.

A sophisticated technical community is currently working to solve the autonomous systems governance problem, and it is doing so with considerable speed and competence. Substrate-level identity frameworks are being developed with rigorous separability invariants, carefully designed governance envelopes, and serious engagement with the W3C, IETF, and European standards bodies. Patent stacks are being assembled. Vocabulary is being locked into JSON-LD contexts with @protected flags that prevent downstream redefinition. Working groups at W3C are reviewing these frameworks for inclusion in the standards that will govern how verifiable credentials work for autonomous agents at a global scale.

This work is genuinely impressive. The people doing it understand the governance problem with considerable clarity. They have correctly identified that the current credential-centric infrastructure is inadequate for autonomous systems. They have correctly identified that better provenance, lifecycle management, and revocation infrastructure are needed.

What they have not built is a warrant. What their frameworks do not contain is exhaustible authority. Their Permission Objects have validity windows. Their credentials have revocation infrastructure. These are real improvements to the standing authority model.

They are not a replacement of it.

Independent EU regulatory compliance analysis confirms precisely this distinction. Current runtime enforcement tools, the analysis finds, answer the question of permission. The EU AI Act requires answers to the question of authority. These are not the same question, and no amount of technical refinement of the permission model produces an authority model [17].

A Permission Object with a 90-day validity window and a revocation endpoint remains standing authority. It is standing authority with better governance. The action that occurs on day eighty-nine, under conditions that have materially changed since the permission was issued, is still authorized. The execution boundary is still not the site of fresh admissibility evaluation. The Ward's current interests are still not the operative test at the moment of effect.
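The day-eighty-nine case can be written out as a small comparison. This is a toy model, not the data model of any actual Permission Object specification: the function names, the `conditions_hold_now` flag, and the dates are assumptions chosen only to show where the two checks diverge.

```python
from datetime import date, timedelta


def standing_permission_allows(issued: date, today: date,
                               revoked: bool = False,
                               validity_days: int = 90) -> bool:
    """Permission-style check: only the window and revocation status matter."""
    return (not revoked) and issued <= today <= issued + timedelta(days=validity_days)


def evaluated_at_execution(issued: date, today: date,
                           conditions_hold_now: bool,
                           revoked: bool = False,
                           validity_days: int = 90) -> bool:
    """Action-time check: the Ward's current conditions are part of the test."""
    return (standing_permission_allows(issued, today, revoked, validity_days)
            and conditions_hold_now)


issued = date(2026, 1, 1)
day_89 = issued + timedelta(days=89)

# Conditions have materially changed since issuance, but nothing was revoked.
standing = standing_permission_allows(issued, day_89)
fresh = evaluated_at_execution(issued, day_89, conditions_hold_now=False)
```

Under the standing model the action proceeds, because nothing in the check looks at the present. Under the action-time model it does not, because the present is the test.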

The distinction matters enormously. And it matters right now, in particular, because the vocabulary being embedded in these frameworks is being locked into technical standards with mechanisms that will make it resistant to revision. Once that vocabulary is established in a W3C namespace with a protected flag, a downstream system that attempts to redefine those terms to carry warrant semantics faces a processing error rather than a conversation.

Technical standards create facts on the ground. Legal frameworks then have to accommodate those facts rather than shape them. This is the pattern that has repeated since the 1960s, every time a significant piece of digital infrastructure was standardized before the legal architecture that should have governed it was in place.

We are at that moment again. And this time, uniquely, we have a chance to get the sequence right.

The compliance gap this creates is not a theoretical concern. An analysis of AI agent compliance under EU law, posted as an arXiv preprint in April 2026 by researchers mapping the AI Act's essential requirements against the current governance tooling market, identified the missing layer with precision [17]. The study found that the entire AI trust, risk, and security management market currently comprises three functional tiers: governance platforms operating at the system level, runtime enforcement tools providing binary policy checks on model inputs and outputs, and information governance covering data classification and access management. Its conclusion is direct: none of these addresses the question that agentic systems make operationally urgent, namely who holds authority over a specific AI-proposed action, and how is that authority exercised and recorded?

The study names a fourth tier as absent: infrastructure governing human-agent and agent-to-agent interaction at runtime, capable of classifying individual actions against a structured ontology, computing risk from live telemetry, routing to the accountable stakeholder, and maintaining an immutable oversight record. Critically, the study frames this not only as a market gap but also as a compliance gap. The essential requirements in Articles 12 to 14 of the EU AI Act, it concludes, impose obligations that can only be demonstrated through action-level records of human authority exercise, not through system-level documentation of oversight design. Runtime enforcement tools, the study states explicitly, answer the question of permission but not authority [17].
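The four capabilities named for that missing tier (classify, compute risk, route, record) can be sketched as a single pipeline. This is an illustrative toy, not the architecture proposed in [17]: the ontology, the routing table, the risk formula, the threshold, and every function and class name are hypothetical. The hash-chained log is also only tamper-evident, which is a weaker property than the immutability the study calls for.

```python
import hashlib
import json

# Hypothetical ontology and routing table, for illustration only.
ONTOLOGY = {"payment.transfer": "financial", "email.send": "communication"}
ACCOUNTABLE = {"financial": "treasury-officer", "communication": "comms-lead"}
GENESIS = "0" * 64


def risk_from_telemetry(telemetry: dict) -> float:
    """Toy risk score: amount relative to a limit, capped at 1.0."""
    limit = telemetry.get("limit", 1) or 1
    return min(1.0, telemetry.get("amount", 0) / limit)


class OversightLog:
    """Append-only, hash-chained record: edits to past entries are detectable."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev: str, record: dict) -> str:
        body = json.dumps(record, sort_keys=True)
        return hashlib.sha256((prev + body).encode("utf-8")).hexdigest()

    def append(self, record: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        self.entries.append({"record": record, "prev": prev,
                             "hash": self._digest(prev, record)})

    def verify(self) -> bool:
        prev = GENESIS
        for entry in self.entries:
            expected = self._digest(prev, entry["record"])
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True


def route_action(action: str, telemetry: dict, log: OversightLog,
                 threshold: float = 0.5) -> dict:
    """Classify one action, score it, route it, and record the decision."""
    category = ONTOLOGY.get(action)
    if category is None:
        decision = {"action": action, "category": None, "risk": None,
                    "routed_to": None, "outcome": "blocked: unclassified action"}
    else:
        risk = risk_from_telemetry(telemetry)
        outcome = "escalate" if risk >= threshold else "proceed"
        decision = {"action": action, "category": category, "risk": risk,
                    "routed_to": ACCOUNTABLE[category], "outcome": outcome}
    log.append(decision)
    return decision


log = OversightLog()
small = route_action("payment.transfer", {"amount": 100, "limit": 1000}, log)
large = route_action("payment.transfer", {"amount": 900, "limit": 1000}, log)
unknown = route_action("db.drop", {}, log)
```

Each call produces one action-level record naming the accountable stakeholder, which is the kind of evidence the study says system-level documentation cannot supply.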

This finding did not come from legal doctrine scholarship. It came from researchers mapping regulatory compliance requirements. The convergence is not coincidental. It is what happens when two independent disciplines, one working from four centuries of property and agency law, the other working from the essential requirements of the EU AI Act, arrive at the same structural conclusion: the permission model cannot answer the authority question, and the authority question is the one that matters.

Four Centuries in the Room

On April 17, 2026, in Atlanta, the American Bar Association launched the Autonomous Systems Governance Working Group. It is a joint effort of the SciTech Risk and Trust Management Committee and the Cyber and Technology Law Committee of the Business Law Section. Its co-chairs are Timothy Reiniger and Jon Garon. Its mandate is to develop minimum competency frameworks for autonomous systems governance in data management, risk management, and operations management [15].

The WG has already recognized the central governance challenge with precision. The ABA's announcement of the inaugural session named it directly: current AI governance frameworks conflate attribution and accountability, as well as authority and identity. Identity credentials establish who is acting. They do not establish whether an agent is acting on behalf of a specific party, within that party's authority, at this particular moment. The authority question requires a different instrument.

The Working Group is developing a glossary of terms to harmonize legal, technical, and business usage across the governance space. A working glossary establishing that vocabulary has been completed and submitted to the ABA for consideration. It is grounded in the legal bifurcation that Reiniger named: authority in Property Law, attribution in Agency Law. It introduces vocabulary that does not currently exist in any digital governance framework: Ward, Warrant, Warden, Exhaustibility, Action-Time Authority, Governance Chain. The WG's substantive work on minimum competency frameworks and governance principles is now underway.

This vocabulary carries four centuries of legal refinement. Property Law's concept of the conveyance moment [3, 4], where validity is evaluated at the instant of transfer rather than retroactively, maps directly onto the execution boundary, where authority must be evaluated and consumed. Agency Law's doctrine of ultra vires action [7, 8], where authority exercised beyond delegated scope is void regardless of the identity of the actor, maps directly onto the invariant that a warrant must be traceable to a Ward's interest or the action does not proceed. Trust law's guardianship standard [11, 12, 14], where the duty to protect is assessed against the ward's present condition and not a historically fixed intent, maps directly onto the requirement that authority be evaluated against current conditions at the execution boundary, not the assumptions that prevailed at delegation time.

The legal framework is not trying to catch up with technology. It already has the concepts. It is being asked, for the first time, to make them operational as infrastructure.

The Paradigm Shift

Everything described above converges on a single inversion.

The current paradigm is location-centric. It asks: who is this actor, and do they have access at this point? Authority is granted at the location of credential presentation and persists thereafter. The governance question is an identity question. Standing permission is the natural result.

The paradigm that autonomous systems require is Ward-centric. It asks: Is this specific action within the authorized scope of the Ward's protected interest, at this moment? Authority is not granted at the location of credential presentation. It is minted per action, at the execution boundary, under conditions established by a warrant traceable to a named Ward. The governance question is not an identity question. It is a property law question. Exhaustible authority is the necessary result.

The inversion is not subtle. Identity before authority becomes authority before identity. The actor's credentials are not the primary governance instrument. The Ward's proprietary interest is. The Credential establishes who a party is and where they stand in the governance chain. The Warrant establishes whether this specific action is authorized, right now, on behalf of this specific Ward. These are different instruments answering different questions. Conflating them, as every current digital governance framework does, is the category error that makes autonomous systems ungovernable.

This shift has direct consequences for the insurance and liability landscape, where governance frameworks ultimately prove their worth. Insurance does not insure identity. It insures actions under defined conditions. A policy requires three things to be determinable: was the action within scope, were the coverage conditions satisfied at the moment of execution, and can liability be attributed to a specific party? Identity-centric governance can imperfectly answer the last question. It cannot answer the first two.

Warrant-based governance can answer all three. Each action is evaluated at the execution boundary against explicit conditions. An action is deterministically within or outside the authorized scope, not probabilistically, not on average. The coverage boundary is definable, enforceable, and verifiable at the moment of action. Bounded authority means bounded exposure. Bounded exposure is priceable.

The first domain where this becomes economically unavoidable is not the most dramatic application of autonomous systems. It is the most mundane: underwriting. Insurers cannot price what they cannot bound. They will adopt a governance model that makes autonomous systems insurable, namely Ward-centric governance with warrant-based authority, not a better-governed version of the standing permission model.

From insurance, the framework extends to every regulated domain where autonomous systems operate.

What Must Happen Now

The legal vocabulary being established by the ABA ASG-WG needs to serve as the reference frame for technical standards, not a late arrival forced to negotiate with vocabulary that has already been locked in.

This is not an argument against technical standards work. That work is necessary, and much of it is excellent. It is an argument about sequencing. When a JSON-LD context applies a @protected flag to a set of governance terms, it makes a claim about the vocabulary that will persist. When that vocabulary covers adjacent but not equivalent ground to the legal terms that courts and regulators will need, the result is a gap between what the technical standard encodes and what the law requires, with no clean mechanism to close it.
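The effect of a protected flag can be shown with a toy model of the JSON-LD 1.1 rule that a later context may not override a protected term. The sketch below is not a JSON-LD processor and deliberately simplifies the specification; the `merge_contexts` function, the exception name, and the `example.org` vocabulary IRIs are all hypothetical.

```python
class ProtectedTermRedefinition(Exception):
    """Toy stand-in for JSON-LD's 'protected term redefinition' error."""


def merge_contexts(base: dict, overlay: dict) -> dict:
    """Apply `overlay` on top of `base`, honoring @protected in `base`.

    Simplified: a term counts as protected if the base context sets
    "@protected": true at the top level or inside the term's own definition.
    """
    all_protected = base.get("@protected", False)
    merged = dict(base)
    for term, definition in overlay.items():
        if term.startswith("@"):
            continue  # keywords are ignored in this sketch
        existing = base.get(term)
        term_protected = all_protected or (
            isinstance(existing, dict) and existing.get("@protected", False))
        if existing is not None and term_protected and definition != existing:
            raise ProtectedTermRedefinition(term)
        merged[term] = definition
    return merged


standard = {
    "@protected": True,
    "PermissionObject": "https://example.org/gov#PermissionObject",
}

# Adding a term the standard never defined is accepted.
added = merge_contexts(standard, {"Warrant": "https://example.org/law#Warrant"})

# Re-pointing a protected term at different semantics is rejected.
try:
    merge_contexts(standard,
                   {"PermissionObject": "https://example.org/law#Warrant"})
    redefinition = "accepted"
except ProtectedTermRedefinition as exc:
    redefinition = f"rejected: {exc}"
```

In this model a new term can sit beside the protected vocabulary, but the protected term itself can never be made to mean something else downstream. That is why the choice of which terms get locked in, and with what meaning, is a sequencing question.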

The Warrant is the critical term. It is the concept that doesn't exist in any current digital governance framework. It cannot be mapped onto a Permission Object without losing its essential character, because a Permission Object is still standing authority, and a Warrant is not. When technical standards mature without a Warrant primitive, or, worse, with a Permission Object described as equivalent to a Warrant, every autonomous system built on those standards will be built on infrastructure that cannot answer the action-time governance question that the law will eventually require it to answer.

The ABA ASG-WG is the forum where that vocabulary is being established, with the doctrinal grounding, the legal machinery, and the institutional legitimacy to make it stick. The working glossary submitted to the ABA is the reference document. The three minimum competency frameworks under development are the operational expression. Practitioners, technologists, and standards contributors who want to engage with that process are encouraged to do so through the ABA Business Law Section and SciTech channels.

Technical standards practitioners, W3C working group members, IETF contributors, and the builders of identity and authorization infrastructure should engage with that process, not race ahead of it.

The EU AI Act's own essential requirements, as independent compliance analysis now confirms, cannot be satisfied by the permission model that current and proposed technical standards encode [17]. Standardizing that model more thoroughly does not close the compliance gap. It embeds it.

Tim Reiniger was right. Four centuries of legal doctrine are already in the room. The question is whether we are willing to let them lead.

The Bottom Line

The governance problem of autonomous systems is not a technology problem. It is a legal architecture problem that has been handed to technologists because technologists build the infrastructure. The result is that the infrastructure is being designed by experts in the current paradigm, who are, understandably, solving the problem within it.

The current paradigm cannot solve it. Identity-before-authority infrastructure cannot govern systems where authority must be evaluated at the moment of action against the current interests of the party who bears the consequences. The vocabulary it produces, credentials, permissions, access tokens, and sessions, does not contain the concepts needed to answer the question that matters.

The law does. Property law's warrant [16]. Agency law's ultra vires doctrine [7, 8]. Guardianship law's dynamic standard [12, 14]. The doctrine of exhaustion [5, 6]. The conveyance moment [3, 4]. These are not metaphors for what we need. They are the actual legal instruments, refined over four centuries, that encode exactly the governance logic that autonomous systems require.

The infrastructure that implements them does not yet exist at scale. The protocol layer is being specified. The first regulatory deployments are being designed. The legal vocabulary is being established by the ABA ASG-WG through its working documents and minimum competency frameworks.

The race between legal vocabulary and technical standards is real, it is happening now, and it is not yet decided.

It should not be decided by default.

© Secours.ai 2026

Legal References

Property Law: Seisin and Land Law Foundations

[1] Blackstone, W., Commentaries on the Laws of England (1765–1769), Book II, Chapter 1. Canonical common law treatment of seisin as the present right to act upon a freehold interest, distinct from mere possession or standing in a chain of title.

[2] Simpson, A.W.B., A History of the Land Law (2nd ed., Oxford University Press, 1986). Leading modern scholarly treatment of seisin, conveyance, and the evolution of property rights in the common law tradition.

Property Law: Conveyance Moment

[3] Stoebuck, W.B. and Whitman, D.A., The Law of Property (3rd ed., West Academic, 2000). Standard US treatise on conveyance, chain of title, and the evaluation of validity at the moment of transfer.

[4] Restatement (Third) of Property: Servitudes (American Law Institute, 2000). Governs the creation, transfer, and termination of property interests, establishing that the validity of a conveyance is evaluated at the moment of transfer against the conditions then obtaining, not retrospectively against conditions that may have changed.

Property Law and IP: Doctrine of Exhaustion

[5] Impression Products, Inc. v. Lexmark International, Inc., 581 U.S. 360 (2017). Controlling US Supreme Court authority on the doctrine of exhaustion in patent law, establishing that once a right is exercised upon an authorized sale it is consumed and cannot be reasserted. Cited here by analogy to the broader structural principle that authority is exhausted at the moment of exercise and does not persist beyond that moment.

[6] Quanta Computer, Inc. v. LG Electronics, Inc., 553 U.S. 617 (2008). Foundational Supreme Court reasoning on exhaustion as a structural doctrine rather than a contractual limitation, establishing that the doctrine operates automatically upon authorized exercise of rights regardless of any restrictions the rights-holder purports to impose. Cited here by analogy to the structural principle of authority exhaustion.

Agency Law: Ultra Vires and Scope of Delegated Authority

[7] Ashbury Railway Carriage and Iron Co v Riche [1875] LR 7 HL 653. Foundational common law authority establishing that acts beyond the authorized scope of delegated power are void, regardless of the identity of the actor.

[8] Restatement (Third) of Agency, §2.01 (Actual Authority) and §2.02 (Scope of Actual Authority), American Law Institute, 2006. Governing US authority on the boundaries of delegated power and the legal consequences of action beyond those boundaries.

[9] Restatement (Third) of Agency, §7.01 (Agent's Liability to Third Parties), American Law Institute, 2006. Governs attribution of action and accountability where an agent acts beyond the scope of delegated authority.

Agency Law: Attribution and Authority Bifurcation

[10] Restatement (Third) of Agency, §1.01 (Agency Defined) and §2.01–§2.02, American Law Institute, 2006. Establishes the foundational distinction between the identity of the actor (attribution) and the scope of their delegated authority (authority), treated as legally distinct questions requiring distinct analysis.

Trust and Guardianship Law: Fiduciary Duty and the Dynamic Standard

[11] Meinhard v. Salmon, 249 N.Y. 458, 164 N.E. 545 (1928) (Cardozo, C.J.). Most cited common law statement of fiduciary obligation: the duty of undivided loyalty, prohibition on self-dealing, and the standard of care owed to a beneficiary.

[12] Restatement (Third) of Trusts, §77 (Duty of Prudence), American Law Institute, 2007. Frames the trustee's duty as a continuing obligation requiring management decisions to account for the trust's current purposes and circumstances, not a historically fixed intent.

[13] Uniform Trust Code (Uniform Law Commission, 2000, as amended). Governing instrument for trust administration duties across US jurisdictions.

[14] Uniform Guardianship, Conservatorship, and Other Protective Arrangements Act (Uniform Law Commission, 2017). Establishes the guardianship standard as dynamically assessed against the ward's present best interests, providing the doctrinal grounding for the Ward-centric governance model's dynamic standard.

Institutional: ABA Resolution 604

[15] American Bar Association, Resolution 604, adopted at the ABA Midyear Meeting, February 6, 2023. Establishes three governing principles for AI developers and deployers: (1) human authority, oversight, and control must be maintained; (2) organizations must be accountable for legally cognizable harm caused by AI unless reasonable preventive steps were taken; and (3) transparency and traceability of AI systems must be ensured. Legal responsibility may not be shifted to an algorithm rather than to responsible persons and entities.

General Legal Terminology

[16] Garner, B.A. (ed.), Black's Law Dictionary (11th ed., Thomson Reuters, 2019). Authoritative definitions of seisin, ultra vires, warrant, conveyance, chain of title, and fiduciary duty as used in this article.

EU Regulatory Compliance Analysis

[17] Nannini, L., Smith, A.L., Maggini, M.J., Panai, E., Feliciano, S., Tiulkanov, A., Maran, E., Gealy, J., and Bisconti, P., "AI Agents Under EU Law: A Compliance Architecture for AI Providers." arXiv:2604.04604v1 [cs.CY], April 7, 2026. Section 9(10) identifies the absence of action-level authority governance infrastructure as both a market gap and a compliance gap under Articles 12 to 14 of EU Regulation 2024/1689 (AI Act), stating that runtime enforcement tools answer the question of permission but not authority, and that the essential requirements of the AI Act can only be demonstrated through action-level records of human authority exercise, not through system-level documentation of oversight design.
