In 2026, the risk is not adopting AI but losing control of it. While standalone agents offer speed, they lack the security and traceability that regulated enterprises demand. Discover why the future of enterprise AI depends on Orchestration Capital. Learn to integrate and govern multiple models under a secure framework that transforms isolated intelligence into an auditable, scalable corporate asset.

3 Takeaways:

- Standalone AI agents are effective at specific tasks but lack the governance, audit trails, and security architecture that regulated enterprises need for responsible deployment.

- Orchestration platforms don't compete with AI models. They make them enterprise-ready by adding PII masking, Human-in-the-Loop escalation, legacy system integration, and centralized compliance.

- Organizations building an orchestration layer now are accumulating Orchestration Capital. Those relying on ungoverned tools are accumulating risk at the same rate.

Every week, a new AI tool promises to hand autonomous power to individual users. Some let developers execute tasks from a terminal. Others control email, calendars, and entire operating systems. They're fast and impressive in demos. For any organization with real regulatory obligations, they're also insufficient on their own.

The question leadership is sitting with in 2026 isn't whether to adopt AI. Most organizations already have. The harder question is whether anyone actually owns what those tools do and what happens when something goes wrong.

We've seen both scenarios play out across healthcare, construction, finance, and government contracting. The difference in outcomes isn't subtle.

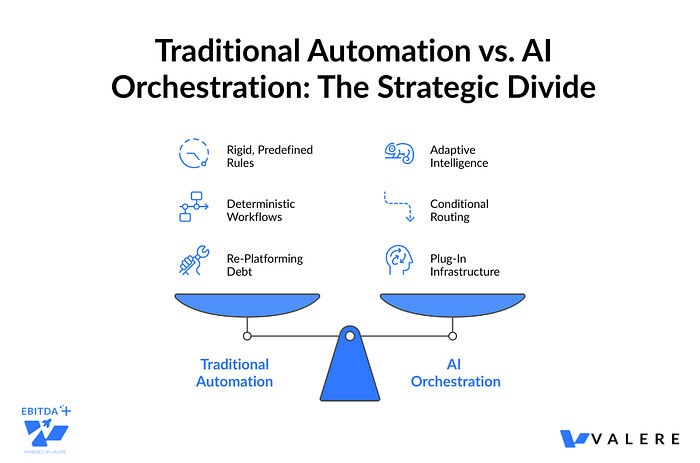

AI Agent vs. Orchestration Platform: What Actually Separates Them

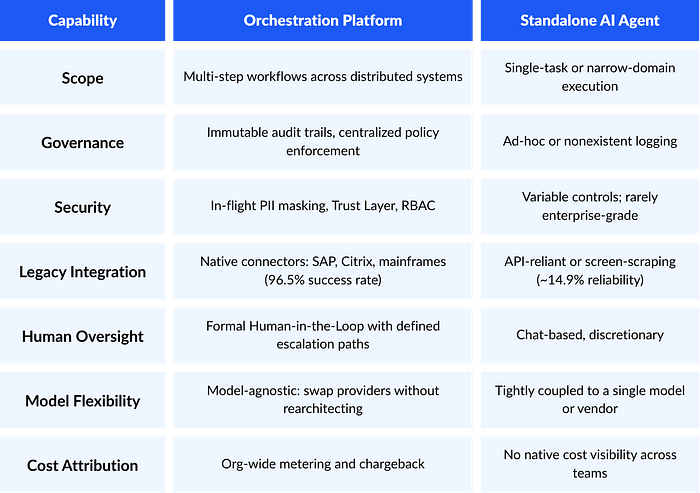

The gap between a point-solution AI agent and an orchestration platform isn't about which one uses a better model. It comes down to what the system can be held accountable for.

Point-Solution AI Agents

Tools like Claude Code are genuinely high-performing for specific tasks: software development, research, personal automation. They use protocols like MCP to access tickets, error logs, and other data sources. The reasoning capability is impressive. The problem is that without supporting infrastructure, they operate in isolation and don't provide:

- Integrated logging and observability

- Traceability across interactions

- Cost attribution by team or department

- Centralized security policy enforcement

AI Orchestration Platforms

Platforms like Conducto coordinate multiple models, agents, and people across distributed business processes. Orchestration is the connective tissue linking LLMs to legacy mainframes, SAP environments, and human approval workflows. The model is one component inside a governed system. It's not the system itself.

Everything we cover below, from governance gaps to legacy integration failures, traces back to that architectural difference.

Where the Difference Actually Shows Up

In practice, the question we ask clients isn't which approach they prefer. It's whether their current AI deployment could survive a compliance audit. That question tends to clarify things quickly.

IN PRACTICE: Construction Finance SaaS

A client needed to scale outreach to 250,000 contacts. A standalone agent attempting this would almost certainly have triggered spam filters, violated ISP thresholds, or botched unsubscribe compliance. The system we built managed inbox rotation capped at 1,000 sends per day, real-time unsubscribe processing, unified bridging across Snowflake, ZoomInfo, HubSpot, and SendGrid, and human-approved campaign oversight with live dashboards. The compliance layer wasn't a feature we bolted on. It was the reason the system could run at that scale without creating legal exposure.
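
To make the sending constraints concrete, here is a minimal sketch of one day's send plan. It assumes the 1,000-send cap applies per inbox, which is our reading rather than a stated detail, and the function name `plan_day` and its parameters are hypothetical. It is not the production system, only an illustration of why unsubscribe filtering has to happen before any send is scheduled.

```python
def plan_day(contacts, unsubscribed, inboxes, daily_cap=1000):
    """Assign contacts to inboxes for one day.

    Returns (assignments, deferred). Assumption: daily_cap is per inbox.
    """
    # Unsubscribes are filtered first, so an opted-out contact can never be scheduled.
    eligible = [c for c in contacts if c not in unsubscribed]

    # Total capacity for the day across all inboxes.
    capacity = daily_cap * len(inboxes)
    today, deferred = eligible[:capacity], eligible[capacity:]

    # Round-robin rotation keeps every inbox at or below its cap.
    assignments = {inbox: [] for inbox in inboxes}
    for i, contact in enumerate(today):
        assignments[inboxes[i % len(inboxes)]].append(contact)
    return assignments, deferred


contacts = [f"c{i}@example.com" for i in range(10)]
plan, later = plan_day(contacts, {"c3@example.com"}, ["inbox-a", "inbox-b"], daily_cap=2)
```

Anything returned in `deferred` rolls over to the next day's plan, which is where a human-approved campaign schedule would take over.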

Why Autonomous Tools Create Specific Problems in Enterprise Environments

The Governance Gap

When a developer runs an AI tool locally, the organization has no native mechanism to audit what prompts were submitted, what data was exposed to the model, or what decisions were made. In finance, healthcare, and insurance, every AI-driven decision needs to hold up under scrutiny. A tool without centralized logging isn't just inconvenient. It leaves the organization unable to show how a decision was made.

We ran into this directly working with a data annotation company that had been using unmanaged AI to review high-stakes annotations against a 50-page rulebook. The model was hallucinating compliance and missing edge cases often enough to be a real problem. We replaced that with a logic bridge built on AWS Bedrock, mapping human guidelines into precise JSON logic backed by a full audit trail and a validated golden dataset. The output was a mathematically consistent quality score instead of a probabilistic guess.
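
As a rough illustration of what "guidelines as JSON logic" can mean, the sketch below encodes a few rules as JSON and scores an annotation deterministically. The rule format, field names, and weights are hypothetical and much simpler than a 50-page rulebook. The point is only that the same input always produces the same score and the same list of failed rules.

```python
import json
import operator

# Hypothetical rule format: each rule checks one field and carries a weight.
RULES_JSON = """
[
  {"id": "label-present", "field": "label", "op": "ne", "value": "", "weight": 2},
  {"id": "box-min-area", "field": "box_area", "op": "ge", "value": 25, "weight": 1},
  {"id": "reviewer-set", "field": "reviewer", "op": "ne", "value": null, "weight": 1}
]
"""

OPS = {"eq": operator.eq, "ne": operator.ne, "ge": operator.ge, "le": operator.le}


def passes(rule, annotation):
    """A rule fails if the field is missing or the comparison is false."""
    if rule["field"] not in annotation:
        return False
    return OPS[rule["op"]](annotation[rule["field"]], rule["value"])


def score(annotation, rules):
    """Return (weighted pass ratio, ids of failed rules)."""
    total = sum(r["weight"] for r in rules)
    failed = [r for r in rules if not passes(r, annotation)]
    passed_weight = total - sum(r["weight"] for r in failed)
    return passed_weight / total, [r["id"] for r in failed]


rules = json.loads(RULES_JSON)
quality, failed_rules = score({"label": "crack", "box_area": 30, "reviewer": None}, rules)
```

A golden dataset fits naturally here: a set of annotations with known expected scores that the rule set must reproduce before any change to the rules ships.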

The Last Mile to Legacy Systems

Most Fortune 500 companies still run critical processes on SAP, Citrix, and mainframes. The computer use capabilities of modern agents, where the model interacts with a GUI by interpreting screenshots, have shown reliability rates as low as 14.9% in independent testing. They click the wrong interface elements, miss data in dropdowns, and misread visual layouts.

Orchestration platforms using deterministic UI automation with certified connectors achieve success rates above 96%. For business-critical processes, that gap has real operational consequences.

IN PRACTICE: National Building Materials Distributor

Engineers needed to query project information buried across more than 10TB of PDF documents and legacy SQL databases. A standalone LLM couldn't securely access that data or guarantee it was pulling the correct version of building codes. We built an orchestrated RAG system with natural-language answers backed by traceable citations to specific documents, Active Directory-based access control, and scoped retrieval ensuring users only see answers derived from data they're authorized to view.
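
The idea behind scoped retrieval can be sketched in a few lines. This is an illustration under stated assumptions, not the deployed system: plain group names stand in for Active Directory groups, keyword overlap stands in for a real vector search, and `Document` and `retrieve` are hypothetical names. What matters is the ordering: documents are filtered by permission before ranking, so unauthorized content never reaches the model's context.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset  # stand-in for directory group membership


def retrieve(query, user_groups, corpus, top_k=3):
    """Return (doc_id, text) pairs the user may see, ranked by keyword overlap."""
    # 1. Permission filter first: drop anything the user is not cleared for.
    visible = [d for d in corpus if d.allowed_groups & user_groups]

    # 2. Rank only the visible documents (toy scoring for illustration).
    terms = set(query.lower().split())
    scored = [(len(terms & set(d.text.lower().split())), d) for d in visible]
    scored = [(s, d) for s, d in scored if s > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)

    # 3. Return ids alongside text so every answer can cite its source.
    return [(d.doc_id, d.text) for _, d in scored[:top_k]]


corpus = [
    Document("code-2021.pdf", "fire rating requirements for exterior walls", frozenset({"engineering"})),
    Document("pricing.sql", "contract pricing for exterior walls", frozenset({"finance"})),
]
hits = retrieve("exterior walls fire rating", {"engineering"}, corpus)
```

Filtering after ranking, or asking the model to ignore restricted passages, would leave the restricted text in the prompt, which defeats the purpose.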

Isolation Breeds Shadow AI

Without an orchestration layer, autonomous tools tend to fragment across teams. Each developer configures differently, connects to different data sources, and operates under different security policies, or none at all. The result is what MIT researchers call shadow AI: a growing ecosystem of unsanctioned tools that IT departments can't see, govern, or shut down.

A Security Warning: When Open Means Exposed

The OpenClaw case shows where this risk goes when taken to its logical end. The open-source personal AI agent created by Peter Steinberger reached 196,000 GitHub stars and 2 million weekly users in a matter of weeks. It promised control over email, calendars, messaging platforms, and home appliances. Users loved it. The security community did not.

What the Analysis Found

- Cisco's AI security research team analyzed more than 31,000 community-created skills and found that 26% contained at least one vulnerability. One tested skill performed data exfiltration and prompt injection without the user's knowledge.

- Shodan scans found hundreds of instances connected to the internet with zero authentication. Anyone who found them had access to configuration files storing API tokens and keys for all connected services.

- Infostealer malware began specifically targeting these configuration files, extracting gateway tokens that give attackers access to a user's entire connected ecosystem.

- Gartner classified it as an unacceptable cybersecurity risk, even as its creator was hired by OpenAI to work on the next generation of personal agents.

The pattern is consistent: tools built for speed and individual empowerment, shipped without the governance architecture that organizations need. From what we've seen across client engagements, the biggest exposure isn't usually a sophisticated external attack. It's an employee who installs one of these tools on a work device, connects it to internal systems, and doesn't tell anyone.

The Trust Layer: What Real Governance Looks Like in Practice

Three elements separate an enterprise-grade orchestration platform from a tool that only looks enterprise-grade. No standalone agent provides all three by default.

1. In-Flight PII Masking

When a standalone agent sends a prompt to a cloud-hosted model, sensitive data travels with it. Orchestration platforms implement masking before any data reaches the model, pseudonymizing personally identifiable information and rehydrating output only after it returns to the secure enterprise environment. The model never sees the real data.
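
A minimal sketch of the mask-then-rehydrate flow, assuming regex detection of just two PII types. The patterns, token format, and function names are hypothetical, and real detection needs far broader coverage. The token-to-value mapping stays inside the enterprise boundary, and only the masked text would be sent to the model.

```python
import re

# Hypothetical patterns for illustration only.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def mask_pii(text):
    """Replace detected PII with placeholder tokens; return (masked_text, mapping)."""
    token_by_value = {}

    def make_replacer(kind):
        def replace(match):
            value = match.group(0)
            # Reuse the same token when a value repeats, so references stay consistent.
            if value not in token_by_value:
                token_by_value[value] = f"<{kind}_{len(token_by_value) + 1}>"
            return token_by_value[value]
        return replace

    masked = text
    for kind, pattern in PATTERNS.items():
        masked = pattern.sub(make_replacer(kind), masked)

    # The mapping never leaves the secure environment.
    mapping = {token: value for value, token in token_by_value.items()}
    return masked, mapping


def rehydrate(text, mapping):
    """Restore original values in model output after it returns."""
    for token, value in mapping.items():
        text = text.replace(token, value)
    return text


masked, mapping = mask_pii("Patient jane.doe@example.com, SSN 123-45-6789, asked about coverage.")
# `masked` is what the model would receive; a simulated reply is rehydrated locally.
reply = rehydrate("Reply to <EMAIL_1> confirming coverage.", mapping)
```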

2. Immutable Audit Trails

Every interaction, decision, and escalation is logged in a centralized, tamper-resistant record. When regulators ask why an AI system denied a claim, approved a transaction, or flagged a patient record, the organization can produce a complete chain of evidence: what prompt was sent, which business rules were applied, which model version responded, and who approved the final output.
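
One common way to make a log tamper-evident is hash chaining, where each entry's hash covers the previous entry's hash. The sketch below assumes that technique and uses hypothetical field names drawn from the evidence list above. It detects edits rather than preventing them, so a real deployment would still need write-once storage and access controls around it.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash preceding the first entry


def _digest(record, prev_hash):
    """Hash the record together with the previous entry's hash."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    def __init__(self):
        self.entries = []

    def append(self, prompt, rules_applied, model_version, approved_by):
        record = {
            "prompt": prompt,
            "rules_applied": rules_applied,
            "model_version": model_version,
            "approved_by": approved_by,
        }
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        self.entries.append(
            {"record": record, "prev_hash": prev_hash, "hash": _digest(record, prev_hash)}
        )

    def verify(self):
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _digest(entry["record"], prev_hash):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("Should claim 123 be denied?", ["rule-7"], "model-v1", "j.smith")
log.append("Flag patient record 88?", ["rule-2", "rule-9"], "model-v1", "a.lee")
```

Calling `log.verify()` returns `True` for an untouched log and `False` once any stored record is altered after the fact.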

3. Human-in-the-Loop as an Architectural Decision

Well-orchestrated workflows build mandatory human approval points directly into process design. When an agent encounters a low-confidence result, a rule breach, or a decision above its authorization threshold, it escalates through a predefined path rather than waiting for someone to notice.
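
The three escalation triggers named above can be expressed as an explicit routing function. This is a sketch with hypothetical thresholds and field names, not a prescribed policy. The design point is that the routing decision is made by deterministic code in the workflow, not left to the model's own judgment.

```python
from dataclasses import dataclass


@dataclass
class AgentResult:
    confidence: float      # model-reported or calibrated confidence, 0.0 to 1.0
    rule_violations: list  # ids of business rules the output breached
    amount: float          # monetary value of the decision, if any


def route(result, min_confidence=0.85, max_autonomous_amount=10_000.0):
    """Return ("auto_approve", []) or ("escalate", [reasons]).

    Thresholds are illustrative assumptions; each organization sets its own.
    """
    reasons = []
    if result.confidence < min_confidence:
        reasons.append("low_confidence")
    if result.rule_violations:
        reasons.append("rule_breach")
    if result.amount > max_autonomous_amount:
        reasons.append("above_authorization_threshold")
    return ("escalate", reasons) if reasons else ("auto_approve", [])


decision = route(AgentResult(confidence=0.60, rule_violations=["missing-consent"], amount=50_000.0))
```

Returning the reasons alongside the decision means the human reviewer sees why the item landed in their queue, and the same reasons can be written to the audit record.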

IN PRACTICE: Healthcare Technology

A client had automated AI communicating directly with patients about benefits with no oversight system, no audit capability, and no way to intercept a response before it reached the recipient. In healthcare, that's not a quality issue. It's a legal liability. We built a Human-in-the-Loop portal that acts as a firewall between the model and the patient: real-time review, modification, and approval of every AI-generated message before delivery, with full audit trails and integration with Salesforce and AWS Bedrock.

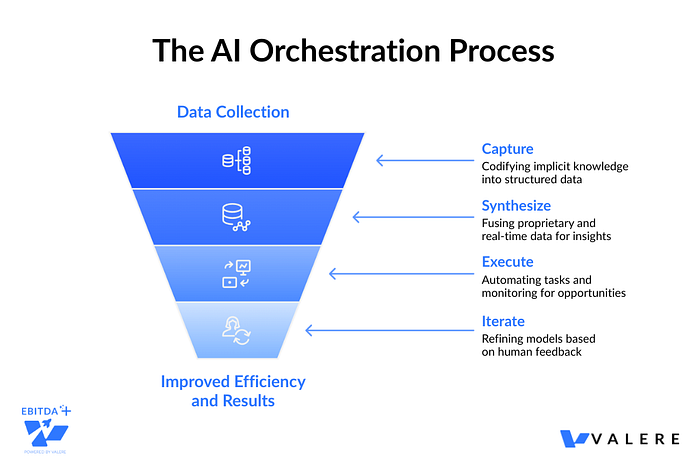

What This Looks Like When All the Components Work Together

The best AI models available today work well inside orchestration platforms precisely because orchestration handles what models can't: governance, memory, legacy connectivity, and accountability. Two recent projects illustrate what that looks like when knowledge capture, synthesis, and autonomous execution are all connected.

Government Contracting: From Reactive to Predictive

A government contracting firm was chasing opportunities reactively: manual searches, institutional memory scattered across dozens of people, no forward visibility into what was coming. Adding a smarter AI agent to that workflow wouldn't have fixed the underlying problem, which was that the information needed to act early was never captured or organized in the first place.

The system we built operates in four coordinated layers:

- Capture: Dactic conducts automated interviews with sales executives and engineers, turning 30 years of relationship history into a structured, searchable knowledge base covering win/loss patterns, technical capabilities, and unstated priorities from program managers.

- Synthesize: The Conducto Brain combines that proprietary intelligence with real-time data from SAM.gov, USAspending, and federal budgets, applying predictive scoring based on technical fit and competitive positioning.

- Execute: Autonomous agents monitor government portals continuously. When a matching solicitation drops, the system aggregates, normalizes, and maps it to known decision-makers.

- Iterate: A Human-in-the-Loop decision engine lets sales leaders review every AI recommendation. Each Bid/No-Bid decision feeds back into the scoring model, refining predictions over time.

Practical result: opportunities identified 30 to 90 days earlier than manual searching, with 80% less research time per opportunity.

Automotive SaaS: Turning Institutional Intuition into a Retention System

An automotive SaaS platform wanted to shift customer success from reactive account auditing to proactive churn intervention. Their most experienced CSRs could sense when an account was at risk. That intuition was real and valuable. It had just never been documented, so it lived entirely in people's heads and walked out the door when they did.

The system captured and operationalized exactly that:

- Dactic extracted the implicit patterns experienced CSRs recognized instinctively and mapped them into structured Intervention Playbooks

- The Conducto Brain combined that qualitative intelligence with Salesforce data and platform usage logs to generate continuous Account Health Scores, calibrated to the specific risk factors the team identified

- Agents monitor the full dealer portfolio autonomously, detecting risk patterns and routing the right playbook to the assigned rep

- Every successful save is recorded and fed back into the knowledge base, making the system progressively more accurate over time

Practical result: 15% of CS bandwidth freed up, no at-risk clients slipping through undetected, and a 20% reduction in early-stage churn.

Intelligence Without Governance Isn't an Asset

The enterprise AI landscape is splitting along a fairly predictable line. On one side are organizations treating AI as a collection of individual tools deployed ad hoc and governed by good intentions. On the other are organizations that have invested in an orchestration layer and treat AI models as swappable components inside a governed, auditable framework.

What we call Orchestration Capital is the foundation of trust, visibility, and control that accumulates with every deployment. Each audit trail makes the next compliance review easier. Each feedback loop makes the next prediction more accurate. Each human review point trains the system to be more capable without becoming less accountable.

"You can't automate what you can't document. And you can't govern what you can't see." (Valere Team)

Models will keep improving. New tools will keep arriving. But across everything we've built and deployed, the organizations that see durable returns from AI aren't necessarily using better models than the ones that don't. They've built better systems around them.

Frequently Asked Questions

Are standalone AI agents enough for regulated enterprises?

Generally, no. Individual autonomous tools lack the audit trails, PII masking, and formal human escalation paths that regulated environments require. Without an orchestration layer, each deployment becomes its own isolated compliance risk.

What makes up the Trust Layer of an orchestration platform?

Three things: in-flight PII masking before any data reaches the model, immutable audit trails capturing every decision and approval, and Human-in-the-Loop checkpoints built into workflow design rather than added as an afterthought.

What happens when ungoverned agents proliferate inside an organization?

The result is what MIT researchers call shadow AI: unsanctioned tools that IT cannot see, govern, or shut down. The risk accumulates across regulatory, security, and financial dimensions, usually quietly, until it becomes a very loud problem.

How does Valere structure AI orchestration for PE portfolios?

Through the Dactic to Conducto framework: tribal knowledge capture, synthesis with enterprise data, autonomous execution, and Human-in-the-Loop review. Each layer feeds back into the previous one, with traceable metrics aligned to the 18-month value creation mandate.

What is the practical difference between AI Theater and AI Value Delivery in orchestration?

AI Theater deploys autonomous tools without governance and calls it transformation. AI Value Delivery builds an orchestrated system with end-to-end ownership, a complete audit trail, and measurable ROI. The difference rarely comes down to which model you chose. It comes down to who owns the outcome.