1. Introduction: The Myth of the "Magic Payload"

In the cybersecurity world, there is a persistent myth that the right "magic payload" (a complex string of characters designed to bypass every filter) is the key to a high-criticality finding. Beginners often spend hours scrolling through GitHub repositories for exotic scripts or running automated scanners, hoping for a lucky hit.

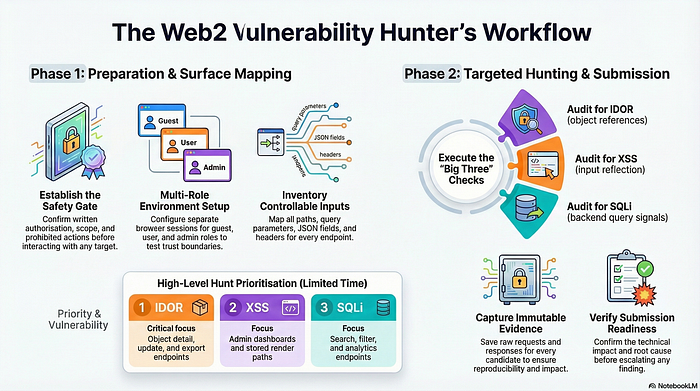

However, seasoned researchers know that automated tools often miss the most impactful vulnerabilities because they cannot reason about business logic or environment-specific behavior. The real secret weapon isn't a single payload; it is a systematic, disciplined approach to exploring an application's unique architecture. Professional hunters rely on a Comprehensive Vulnerability Hunting Checklist to map the attack surface and find the logical gaps that scanners cannot see. By moving beyond mindless scanning toward a rigorous methodology, you can uncover vulnerabilities that others overlook.

2. Takeaway 1: Context is the Silent Killer in XSS

Cross-Site Scripting (XSS) is frequently reduced to "my input was reflected back to the browser." In reality, whether a reflection is dangerous is dictated entirely by the "Exact Context" (Section 5.2) in which it occurs.

A reflection that is harmless when HTML-encoded into the body of a page can be lethal if it lands inside an SVG/XML context, a script block, or a "hydration mismatch" in a Single Page Application (SPA). Modern frameworks often introduce counter-intuitive sinks where a payload is mutated or reparsed after initial sanitization. Developers frequently apply a global encoding fix that secures one part of a page while leaving "Indirect Trust Surfaces" (such as client-side state hydration or DOM-based sinks) wide open.
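
To make the "global encoding fix" problem concrete, here is a minimal Python sketch, assuming a hypothetical server that runs every value through the standard library's `html.escape` before templating it into three different contexts. The `render` function and its three templates are illustrative assumptions, not any framework's API.

```python
import html


def render(context: str, value: str) -> str:
    """Embed `value` into one of three hypothetical page contexts after identical HTML escaping."""
    safe = html.escape(value)  # the one-size-fits-all "global fix"
    if context == "html-text":
        return f"<p>{safe}</p>"
    if context == "js-template-literal":
        return f"<script>const msg = `{safe}`;</script>"
    if context == "event-handler":
        return f"<button onclick=\"track('{safe}')\">Go</button>"
    raise ValueError(f"unknown context: {context}")


# HTML text: angle brackets become entities, so no tag is ever formed.
print(render("html-text", "<img src=x onerror=alert(1)>"))

# Template literal: html.escape leaves `$`, `{` and `}` untouched, so the
# ${...} expression survives and would be evaluated by the JavaScript engine.
print(render("js-template-literal", "${alert(1)}"))

# Event handler: browsers entity-decode attribute values before running them
# as JavaScript, so &#x27; turns back into a real quote that closes the string.
attr = render("event-handler", "');alert(1);//")
handler_source = html.unescape(attr.split('onclick="')[1].split('">')[0])
print(handler_source)  # track('');alert(1);//')
```

The same escaping call is correct in the first context and useless in the other two, which is why the context has to be identified before choosing a payload or judging a fix.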

Success in XSS hunting requires a mental shift, often referred to in professional checklists as the "Fast Hunt Loop":

"What input do I control? Where does it go? Is it reflected? Is any mitigation incomplete? Is the impact concrete?"

By identifying whether a reflection occurs in an event handler, a template literal, or a JSON blob inside a script tag, you can tailor your approach to the specific environment rather than relying on generic payloads.
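
That identification step can be sketched as a canary check: inject a unique marker (here the hypothetical string `CANARY`), fetch the response, and label every place the marker lands. The Python below is a deliberately crude heuristic built on string searches and one regular expression; it is not an HTML or JavaScript parser and will misclassify edge cases such as comments, CDATA sections, or nested quotes, so treat its labels as hints for manual review.

```python
import re

# Heuristic: an attribute whose value is still "open" at the end of the text.
OPEN_ATTR = re.compile(r"""\s([\w-]+)\s*=\s*(?:"[^"]*|'[^']*|[^\s>"']*)$""")


def reflection_contexts(body: str, canary: str) -> list[str]:
    """Label each occurrence of `canary` in `body` with a coarse rendering context."""
    labels = []
    for match in re.finditer(re.escape(canary), body):
        before = body[: match.start()]
        lower = before.lower()

        # 1. Inside a <script> element: an opening tag after the last closing one.
        last_open = lower.rfind("<script")
        if last_open > lower.rfind("</script"):
            open_end = lower.find(">", last_open)
            if open_end != -1:  # otherwise we are still inside the <script ...> tag
                open_tag = lower[last_open : open_end + 1]
                script_text = before[open_end + 1 :]
                if "json" in open_tag:
                    labels.append("json-in-script")
                elif script_text.count("`") % 2 == 1:
                    labels.append("js-template-literal")
                else:
                    labels.append("script-block")
                continue

        # 2. Inside an HTML tag: a '<' that has not yet been closed by '>'.
        last_lt = before.rfind("<")
        if last_lt > before.rfind(">"):
            attr = OPEN_ATTR.search(before[last_lt:])
            if attr and attr.group(1).lower().startswith("on"):
                labels.append("event-handler")
            else:
                labels.append("html-attribute")
            continue

        # 3. Otherwise: plain text between tags.
        labels.append("html-text")
    return labels


page = (
    "<p>Hi CANARY</p>"
    '<a href="/x?q=CANARY">link</a>'
    "<img src=x onerror=\"f('CANARY')\">"
    "<script>var s = `CANARY`;</script>"
    '<script type="application/json">{"q":"CANARY"}</script>'
)
print(reflection_contexts(page, "CANARY"))
```

A production tool would more likely walk the DOM with a real HTML parser; plain string heuristics are used here only to keep the sketch self-contained.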

3. Takeaway 2: IDOR is a Game of "Relationships," Not Just Numbers

Insecure Direct Object Reference (IDOR) is often taught as a simple exercise: change ID=1 to ID=2. Modern applications are rarely this simple, and professional-grade IDORs usually involve complex object relationships.

High-impact targets often involve nested relationship IDs, UUIDs, or object IDs embedded in JavaScript state blobs. A subtler pattern highlighted in the checklist (Section 4.5) is the "downstream service" failure: object ownership is checked correctly at the primary API gateway, but the authorization check is forgotten when the request is passed on to a downstream microservice.
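
A minimal sketch of that gateway/downstream gap, assuming a hypothetical "merge invoices into one PDF" feature: the gateway authorizes only the invoice ID in the URL path, then forwards extra IDs from the request body to a PDF service that trusts whatever it receives. Every name here (`INVOICES`, `gateway_export`, the two `pdf_service_*` functions) is invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    owner: str
    total: str


# Hypothetical shared data store reachable by both services.
INVOICES = {1: Invoice("alice", "$120"), 2: Invoice("bob", "$9,800")}


def pdf_service_render(invoice_ids):
    """Downstream service that trusts whatever IDs the gateway forwards."""
    return [INVOICES[i].total for i in invoice_ids]


def pdf_service_render_checked(user, invoice_ids):
    """Downstream service that re-verifies ownership of every object it loads."""
    totals = []
    for i in invoice_ids:
        invoice = INVOICES.get(i)
        if invoice is None or invoice.owner != user:
            raise PermissionError(f"invoice {i} is not accessible to {user}")
        totals.append(invoice.total)
    return totals


def gateway_export(user, primary_id, extra_ids, checked=False):
    """Gateway: authorizes only the invoice ID that appears in the URL path."""
    invoice = INVOICES.get(primary_id)
    if invoice is None or invoice.owner != user:
        raise PermissionError("forbidden")
    ids = [primary_id, *extra_ids]
    return pdf_service_render_checked(user, ids) if checked else pdf_service_render(ids)


# Alice owns invoice 1, so the gateway lets the request through, and the
# unchecked downstream service renders Bob's invoice 2 as well.
print(gateway_export("alice", 1, [2]))  # ['$120', '$9,800']
```

When testing, the interesting request is the one that keeps the outer, authorized ID valid and varies only the IDs the downstream service consumes.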

Seasoned hunters also look for "Indirect Trust Surfaces" where IDORs hide in plain sight:

- Caches and Background Jobs: Testing if an unauthorized object can be accessed via an async refresh endpoint or a background export process.

- Opaque Tokens: Determining if IDs can be predicted from sequence or timing, or leaked through a secondary endpoint like a search autocomplete.

- State Manipulation: Swapping a single nested reference while keeping the outer object valid to see if the backend fails to verify the entire hierarchy.
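
For the opaque-token case, a quick first pass is to work out what kind of identifier you are looking at. The Python sketch below uses only the standard `uuid` and `datetime` modules; the labels it returns are hypothetical shorthand. It flags plain integers as likely enumerable and version-1 UUIDs as time-based (their 60-bit timestamp can be read straight out of the value), while version-4 UUIDs are random. A hard-to-guess ID is not an authorization check: it only changes how an attacker obtains the ID, which is why leaks through secondary endpoints still matter.

```python
import re
import uuid
from datetime import datetime, timezone

# 100-ns intervals between the UUID epoch (1582-10-15) and the Unix epoch.
UUID_EPOCH_OFFSET = 0x01B21DD213814000


def classify_identifier(value: str):
    """Return a (label, creation_time_or_None) hint about how guessable an ID is."""
    if re.fullmatch(r"[0-9]+", value):
        return ("numeric", None)  # likely enumerable by incrementing
    try:
        parsed = uuid.UUID(value)
    except ValueError:
        return ("opaque", None)  # unknown format: look for leaks elsewhere
    if parsed.version == 1:
        # Version-1 UUIDs embed their creation time in 100-ns ticks.
        seconds = (parsed.time - UUID_EPOCH_OFFSET) / 10_000_000
        return ("uuid1-time-based", datetime.fromtimestamp(seconds, tz=timezone.utc))
    if parsed.version == 4:
        return ("uuid4-random", None)
    return ("uuid-other", None)


print(classify_identifier("1042"))  # ('numeric', None)
print(classify_identifier(str(uuid.uuid1())))
print(classify_identifier(str(uuid.uuid4())))  # ('uuid4-random', None)
```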

4. Takeaway 3: The "Hidden Surface" of Exports and Reports

One of the most consistent findings in professional assessments is that "background" features — such as CSV exports, analytics dashboards, and PDF reports — are the weakest links in an application.

These features are frequently built for internal use and often bypass the standard security protections applied to the main user interface. As the checklist highlights:

"Admin/search/stored render paths and export paths are top-tier priorities."

The irony is that the very tools designed for data visibility often cause the most significant data leaks. This is particularly true for SQL Injection (SQLi). A developer might use prepared statements for data values in the primary UI, yet fall back to string concatenation in report builders for the structural parts of a query, such as ORDER BY columns, LIMIT clauses, or table aliases, where placeholders either cannot be used at all (identifiers and keywords) or are less convenient. Finding SQLi in the sort parameter of a CSV export is a classic professional "win" that automated scanners frequently miss.
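
A minimal sketch of the sort-parameter problem, using Python's built-in `sqlite3` module and an invented `orders`/`secrets` schema. Because placeholders bind values rather than column names, the vulnerable export function interpolates the sort field directly, and an injected `CASE` expression turns the row order into a one-bit oracle. The safer variant checks the input against an allowlist of known columns. Table, column, and function names are all hypothetical.

```python
import sqlite3

# Hypothetical report columns a user is allowed to sort by.
ALLOWED_SORT_COLUMNS = {"customer", "total", "created"}


def make_db() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, total REAL, created TEXT);
        CREATE TABLE secrets (token TEXT);
        INSERT INTO orders (customer, total, created) VALUES
            ('alice', 30.0, '2024-01-02'),
            ('bob', 10.0, '2024-01-01');
        INSERT INTO secrets VALUES ('s3cr3t');
        """
    )
    return conn


def export_csv_rows_vulnerable(conn, sort):
    # The sort identifier is concatenated straight into the SQL text.
    return conn.execute(f"SELECT customer, total FROM orders ORDER BY {sort}").fetchall()


def export_csv_rows_safe(conn, sort, descending=False):
    # Identifiers cannot be bound as parameters, so validate against an allowlist.
    if sort not in ALLOWED_SORT_COLUMNS:
        raise ValueError(f"unsupported sort column: {sort!r}")
    direction = "DESC" if descending else "ASC"
    return conn.execute(
        f"SELECT customer, total FROM orders ORDER BY {sort} {direction}"
    ).fetchall()


conn = make_db()
# Boolean oracle: if the guessed first character of the secret is right, rows
# sort by total (bob first); otherwise they sort by customer (alice first).
probe = "CASE WHEN (SELECT substr(token, 1, 1) FROM secrets) = '{}' THEN total ELSE customer END"
print(export_csv_rows_vulnerable(conn, probe.format("s")))  # [('bob', 10.0), ('alice', 30.0)]
print(export_csv_rows_vulnerable(conn, probe.format("x")))  # [('alice', 30.0), ('bob', 10.0)]
```

Note that no error message is ever produced: the only signal is the order of rows in an otherwise valid export, which is exactly the kind of difference a scanner looking for SQL errors tends to overlook.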

5. Takeaway 4: The Art of "Response Diffing" Across Roles

Professional bug hunting moves from "guessing" to "scientific observation" through a process called access-control comparison (Section 4.4). Instead of just looking for an error message, a hunter compares the structural "shape" of responses across different roles, such as Guest, User, and Admin.

To make this effective, you must first normalize the responses, removing or canonicalizing the "noise" that would otherwise produce false positives in a diff:

- Removing CSRF tokens and nonces

- Removing timestamps and request/trace IDs

- Canonicalizing JSON structure (for example, sorting keys so that field order does not register as a difference)

Once the noise is removed, you look for structural anomalies: Does a low-privileged user's response have the "same body shape" or contain the "same sensitive fields" as an Admin's? This methodology uncovers structural logic flaws where the backend verifies the user's identity but fails to properly scope the data returned, essentially moving the hunt from finding errors to finding data leaks in valid responses.
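
The normalize-then-compare step can be sketched in Python for JSON APIs. The noise keys, the ISO-8601 timestamp pattern, and the helper names below are assumptions to adapt per target. Each response is reduced to a set of key paths (its "shape"), and those sets are compared across a high-privilege and a low-privilege role making the same request.

```python
import json
import re

# Hypothetical noise: adjust these to the target's actual field names.
NOISE_KEYS = {"csrf_token", "nonce", "timestamp", "request_id", "trace_id"}
ISO_TIMESTAMP = re.compile(r"^\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2}")


def normalize(value):
    """Drop noisy keys and mask timestamp-like strings, keeping the structure."""
    if isinstance(value, dict):
        return {k: normalize(v) for k, v in value.items() if k.lower() not in NOISE_KEYS}
    if isinstance(value, list):
        return [normalize(item) for item in value]
    if isinstance(value, str) and ISO_TIMESTAMP.match(value):
        return "<timestamp>"
    return value


def shape(value, prefix=""):
    """Flatten a document into the set of key paths it contains."""
    paths = set()
    if isinstance(value, dict):
        for key, child in value.items():
            path = f"{prefix}.{key}" if prefix else key
            paths.add(path)
            paths |= shape(child, path)
    elif isinstance(value, list):
        for item in value:
            paths |= shape(item, f"{prefix}[]")
    return paths


def diff_roles(high_priv_body: str, low_priv_body: str) -> dict:
    """Compare two roles' responses to the same request after normalization."""
    high = normalize(json.loads(high_priv_body))
    low = normalize(json.loads(low_priv_body))
    high_shape, low_shape = shape(high), shape(low)
    return {
        # Canonical JSON (sorted keys) makes key order irrelevant.
        "identical": json.dumps(high, sort_keys=True) == json.dumps(low, sort_keys=True),
        "same_shape": high_shape == low_shape,
        "high_only": sorted(high_shape - low_shape),
        "low_only": sorted(low_shape - high_shape),
    }
```

A `same_shape` result of `True` for a guest or regular user against an admin baseline, especially with sensitive paths such as `users[].ssn` present in both, is the structural anomaly worth investigating by hand.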

6. Takeaway 5: The "Do Not Fool Yourself" Rule

A hunter's reputation is built on the validity and concrete impact of their reports, not the volume of submissions. A critical part of the professional process is the "validity gate," which requires a researcher to challenge their own findings before escalating them.

It is easy to be misled by "caching noise," intended business logic, or generic 500 errors. Professional hunters also understand the "One-Fix-One-Reward" reality: reporting ten different routes that all share the same "Root Cause" (Section 9) will often result in a single payout. True professionals aim to identify the underlying failure rather than spamming variants.

To avoid filing "noise," every report must meet the standard defined in Section 10:

"The report is scoped, concise, and not speculative. It must demonstrate a concrete technical and business impact."

Before reporting, ask: Is this reproducible? Is the difference meaningful or just cosmetic? Is the behavior already neutralized by a downstream security control? By being your own harshest critic, you ensure that every report you file represents a real, actionable risk.
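
Parts of that gate can be mechanized. Below is a hypothetical Python helper, assuming you can wrap the suspicious request (`probe`) and a legitimate baseline (`control`) as zero-argument callables that return an already-normalized, hashable observation. It passes a finding only when the probe reproduces on every run, the baseline is stable, and the two actually differ. It cannot judge intended business logic or downstream mitigations; those remain manual questions.

```python
import itertools
from typing import Callable, Hashable


def passes_validity_gate(
    probe: Callable[[], Hashable],
    control: Callable[[], Hashable],
    runs: int = 5,
) -> bool:
    """True only if the probe is reproducible, the control is stable, and they differ."""
    probe_results = {probe() for _ in range(runs)}
    control_results = {control() for _ in range(runs)}
    reproducible = len(probe_results) == 1
    stable_baseline = len(control_results) == 1
    return reproducible and stable_baseline and probe_results != control_results


# A flaky "finding", e.g. a cache alternating between two states, is rejected.
flip = itertools.cycle(["leaked", "denied"])
print(passes_validity_gate(lambda: next(flip), lambda: "denied"))  # False

# A consistent, meaningful difference passes.
print(passes_validity_gate(lambda: "leaked", lambda: "denied"))  # True
```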

7. Conclusion: From Checklist to Instinct

Successful bug hunting is not a matter of luck; it is a disciplined process of environment readiness, surface mapping, and rigorous validation. While tools can assist, the most effective hunters are those who can navigate the nuances of logic and context.

As applications grow more complex and automated defenses improve, the human ability to "diff" logic and understand context remains the most critical asset in a security assessment. Is that ability to analyze logical relationships the last true line of defense? By following a systematic checklist, you transform hunting from a game of chance into a reliable, professional craft.