Why Traditional Tools Fail at Business Logic Vulnerabilities

Imagine a penetration tester sitting in front of an e-commerce application. They open the checkout flow. They add an item to the cart, apply a discount coupon, and complete the purchase. Then they try something simple: they go back and apply the same coupon again.

It works.

They try it a third time. Still works. The system has no memory of who already used the coupon — it only checks whether the code exists, not whether it has already been redeemed.

No SQL injection. No XSS. No malformed request. Just a logical gap in how the application was designed. And every automated scanner running against that same application would have missed it completely.

This is the business logic problem.

And it is not a niche concern. According to HackerOne's 9th Annual Hacker-Powered Security Report, "The Rise of the Bionic Hacker", valid reports for Business Logic Errors rose 19% year-over-year and generated over $2.26 million in bounty payouts in 2025 alone [1]. More importantly, when security researchers were asked which vulnerabilities automated AI tools and scanners are currently weakest at identifying, 58% pointed to business logic flaws, making it the #1 blind spot in modern security automation [1].

The same report paints a broader picture: logic-based and access-control flaws dominate the list. IDOR reports rose 29% YoY, Improper Access Control climbed 18% YoY — while commodity vulnerabilities like XSS declined 14% and SQL Injection dropped 23%. The attack surface is shifting. The bugs that scanners can find are becoming less common. The bugs they cannot find are becoming more prevalent.

The OWASP API Security Top 10 (2023) reflects the same shift: several of its entries are authorization or logic flaws, including API1 (Broken Object Level Authorization, BOLA), API5 (Broken Function Level Authorization, BFLA), and API6 (Unrestricted Access to Sensitive Business Flows) [2]. These are not theoretical risks. They are high-impact vulnerabilities hiding behind syntactically perfect requests.

What Automated Scanners Are Actually Good At

To understand why scanners fail here, we first need to understand what they are designed to do.

Tools like OWASP ZAP, Burp Suite's active scanner, and Nikto excel at finding syntactic vulnerabilities — flaws that exist in the structure or content of individual requests, independent of application context.

SQL injection is the clearest example. A scanner sends a payload like ' OR 1=1 -- to every input field it finds. If the database throws an error or returns unexpected data, the scanner flags a vulnerability. It does not need to understand what the application does. It only needs to observe that this string breaks something.

The same logic applies to cross-site scripting, path traversal, command injection, and most CVE-based checks. These are pattern matching problems. Given enough payloads and enough endpoints, a scanner can find them reliably and at scale.

But business logic vulnerabilities are different in a fundamental way.

What Business Logic Vulnerabilities Actually Are

Business logic vulnerabilities occur when an attacker abuses the intended functionality of an application in unintended ways. In formal application security terms, they represent invariant violations, state inconsistencies, and trust boundary misuses. They do not exploit a broken parser or a misconfigured library. They exploit assumptions — assumptions made by developers about how users will behave.

The OWASP Top 10: 2021 report reinforces this concern. Broken Access Control, a category that overlaps heavily with business logic flaws, was ranked as the #1 most critical web application security risk. OWASP reports that 94% of applications in its dataset were tested for some form of broken access control, and that the category had more occurrences than any other [3]. OWASP has since launched a dedicated "OWASP Top 10 for Business Logic Abuse" project to address these issues specifically [4].

Some of the most common examples:

IDOR — Insecure Direct Object Reference: A user views their own order at /api/orders/1042. They change the ID to 1041. The server returns another user's private order data without any error. The request is syntactically perfect. The logic is completely broken. This is not hypothetical — in a publicly disclosed HackerOne report, a researcher discovered that Starbucks India's card.starbucks.in endpoint allowed any authenticated user to view other customers' card data and monetary balances simply by manipulating a cardId parameter [5]. In a separate incident, researchers found that a flaw in Starbucks' Backend for Frontend (BFF) proxy logic could have exposed nearly 100 million customer records through an internal API traversal [6].
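The missing check can be illustrated with a minimal sketch. The in-memory `ORDERS` table, the user names, and both handler functions are hypothetical stand-ins, not code from either cited report; the only point is the absent ownership comparison.

```python
# Hypothetical order store: order ID -> record with an owner.
ORDERS = {
    1041: {"owner": "bob", "total": 120.00},
    1042: {"owner": "alice", "total": 35.50},
}


def get_order_vulnerable(order_id, current_user):
    """Returns any order that exists, regardless of who is asking."""
    order = ORDERS.get(order_id)
    return (200, order) if order else (404, None)


def get_order_checked(order_id, current_user):
    """Returns the order only if it belongs to the authenticated user."""
    order = ORDERS.get(order_id)
    if order is None or order["owner"] != current_user:
        # Same status for "missing" and "not yours", so IDs cannot be enumerated.
        return (404, None)
    return (200, order)


# Alice requests Bob's order by changing 1042 to 1041.
print(get_order_vulnerable(1041, "alice")[0])
print(get_order_checked(1041, "alice")[0])
```

Both handlers receive an identical, well-formed request. Only the second one compares the record's owner to the caller, which is exactly the kind of rule a payload-based scanner has no way to know about.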

Workflow Bypass: A registration flow requires three steps: create account, verify email, set up profile. An attacker completes step one and then jumps directly to the authenticated dashboard. Step two was never enforced server-side.

Price Manipulation: A checkout API trusts a price parameter sent from the client. An attacker intercepts the request and changes {"price": 49.99} to {"price": 0.01}. The order is processed at the modified price.
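As a rough sketch of the difference, assume a hypothetical `CATALOG` keyed by SKU and a request body that carries `sku`, `quantity`, and the client-supplied `price`. None of these names come from a real system; they only illustrate where the trusted value should come from.

```python
from decimal import Decimal

# Hypothetical server-side price list; the client never gets to write to it.
CATALOG = {"sku-123": Decimal("49.99")}


def checkout_vulnerable(request):
    """Charges whatever price the client put in the request body."""
    return Decimal(str(request["price"])) * request["quantity"]


def checkout_checked(request):
    """Ignores the client-supplied price and looks it up server-side."""
    return CATALOG[request["sku"]] * request["quantity"]


# The attacker intercepts the request and rewrites the price field.
tampered = {"sku": "sku-123", "quantity": 1, "price": 0.01}
print(checkout_vulnerable(tampered))
print(checkout_checked(tampered))
```

The tampered request is valid JSON with a valid number in it, so nothing about its syntax signals an attack.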

Coupon Abuse: This is the scenario from the opening of this article. The coupon validation checks existence, not redemption history, so the same code works indefinitely. A related flaw was reported in Stripe's fee discount system, where a race condition let concurrent requests bypass the single-use restriction on a $20,000 discount [7].

The severity of these flaws is often critical. Because the requests are well-formed, they typically pass through Web Application Firewalls (WAFs) and syntactic filters unnoticed, and they can lead directly to financial losses (through coupon abuse or price manipulation), large data breaches (via IDOR), and fraud. None of these vulnerabilities involve malformed syntax. They are all semantically valid requests that violate business rules. And that distinction is exactly why scanners cannot find them.

The Core Problem: Scanners Have No Context

For over two decades, the security industry has successfully automated the detection of syntactic flaws. But why has the business logic problem remained unsolved for so long? The answer is simple: you cannot write a regular expression for human intent. While syntactic bugs could be modeled mathematically or matched via signatures, semantic understanding requires cognition.

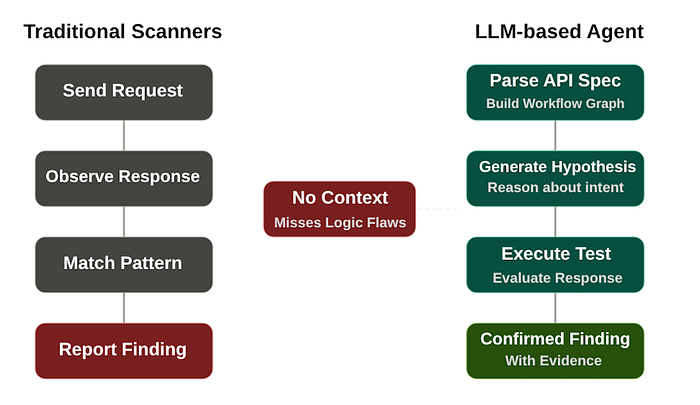

Traditional scanners operate on a simple mechanical model:

Send request → Observe response → Match pattern → Report finding

This works when a vulnerability lives inside a single request-response pair. But business logic flaws require understanding across multiple requests, multiple states, and the intended behavior of the system as a whole.
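The mechanical model above can be sketched in a few lines. This is a deliberately simplified illustration, not how any particular scanner is implemented: the payload list, the error signatures, and the `fake_send` stand-in for an HTTP client are all assumptions made for the example.

```python
import re

# Hypothetical payloads and signatures; real scanners ship far larger rule sets.
PAYLOADS = ["' OR 1=1 --", "<script>alert(1)</script>"]
ERROR_SIGNATURES = [
    re.compile(r"SQL syntax", re.IGNORECASE),
    re.compile(r"unterminated quoted string", re.IGNORECASE),
]


def scan(endpoints, send):
    """Send each payload to each endpoint and flag responses that match a signature."""
    findings = []
    for endpoint in endpoints:
        for payload in PAYLOADS:
            body = send(endpoint, payload)                         # send, observe
            if any(sig.search(body) for sig in ERROR_SIGNATURES):  # match pattern
                findings.append((endpoint, payload))               # report finding
    return findings


def fake_send(endpoint, payload):
    """Stand-in for an HTTP client: /search leaks a DB error, /coupon always succeeds."""
    if endpoint == "/search" and "'" in payload:
        return "You have an error in your SQL syntax"
    return "200 OK"


findings = scan(["/search", "/coupon"], fake_send)
print(findings)
```

Each request is judged in isolation. The `/coupon` endpoint produces no finding because nothing in any single response looks wrong.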

Consider the coupon abuse example again. A scanner testing the /coupon endpoint will send it a request, observe a 200 OK response, and move on. It has no way of knowing that the correct behavior should be to reject a coupon that has already been used. It does not know what "already used" means. It has no model of application state.

To detect this vulnerability, a tool would need to:

- Understand that coupons are intended for single use

- Apply the coupon once and observe the result

- Apply it again and compare the behavior

- Recognize that the second success is a violation of the expected business rule

This is not pattern matching. This is reasoning about intent.
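Once the single-use rule is known, the four steps above are easy to express as a stateful test. The sketch below assumes a toy in-memory `CouponService` and a hypothetical coupon code `SAVE10`; the hard part, which the code does not solve, is knowing that "one redemption per user" is the rule to check in the first place.

```python
class CouponService:
    """Toy in-memory service; track_redemptions=False reproduces the flaw."""

    def __init__(self, track_redemptions):
        self.valid_codes = {"SAVE10"}  # hypothetical coupon code
        self.redeemed = set()
        self.track_redemptions = track_redemptions

    def apply(self, user, code):
        if code not in self.valid_codes:
            return 404
        if self.track_redemptions and (user, code) in self.redeemed:
            return 409  # already redeemed by this user
        self.redeemed.add((user, code))
        return 200


def coupon_is_reusable(apply, user, code):
    """Apply the same coupon twice and compare: a second success violates the rule."""
    first = apply(user, code)
    second = apply(user, code)
    return first == 200 and second == 200


print(coupon_is_reusable(CouponService(False).apply, "alice", "SAVE10"))
print(coupon_is_reusable(CouponService(True).apply, "alice", "SAVE10"))
```

The test needs two things a stateless scanner lacks: memory of the first request and an expectation about what the second response should be.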

Multi-Step Workflows Break Automation Further

The problem gets worse when vulnerabilities span multiple endpoints in sequence. Consider a payment flow:

- Step 1: POST /api/orders → create order

- Step 2: POST /api/orders/verify → confirm order details

- Step 3: POST /api/payments → process payment

If an attacker can call Step 3 directly without completing Steps 1 and 2, they may be able to trigger payment processing in an unexpected state. But detecting this requires a tool that understands the intended sequence of the workflow — not just that these three endpoints exist.

Traditional scanners do not build workflow models. They test endpoints independently. The relationship between steps, the required state transitions, the expected ordering — none of this is captured or reasoned about.
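To make "workflow model" concrete, here is a minimal sketch that encodes the three-step payment flow as an ordered list and probes whether later steps can be called from a fresh session. The `ToyBackend` class and the 409 status are assumptions for illustration; a real test would issue HTTP requests against the target instead.

```python
# The intended order of the payment flow described above.
WORKFLOW = [
    "POST /api/orders",
    "POST /api/orders/verify",
    "POST /api/payments",
]


class ToyBackend:
    """Stand-in for the application; enforce_order=False reproduces the flaw."""

    def __init__(self, enforce_order):
        self.enforce_order = enforce_order
        self.done = 0  # number of workflow steps completed in this session

    def call(self, step):
        expected = WORKFLOW[self.done] if self.done < len(WORKFLOW) else None
        if self.enforce_order and step != expected:
            return 409  # step requested out of sequence
        self.done += 1
        return 200


def find_skippable_steps(make_backend):
    """Call each later step from a fresh session and record the ones that succeed."""
    skippable = []
    for step in WORKFLOW[1:]:
        backend = make_backend()  # fresh session, nothing completed yet
        if backend.call(step) == 200:
            skippable.append(step)
    return skippable


print(find_skippable_steps(lambda: ToyBackend(enforce_order=False)))
print(find_skippable_steps(lambda: ToyBackend(enforce_order=True)))
```

The probe itself is trivial. What it depends on is the `WORKFLOW` list, and that ordering is precisely the knowledge an endpoint-by-endpoint scanner never acquires.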

Why Human Testers Still Find These Bugs

This is why experienced penetration testers remain irreplaceable for business logic testing. A human tester brings something that no pattern-matching tool can replicate: the ability to model how an application should behave and then systematically probe whether it actually does.

A good tester looks at a parameter named user_id and immediately asks: what happens if I change this to someone else's ID? They see a multi-step checkout flow and wonder: can I skip step two? They notice a price field in a POST body and think: should the server really be trusting this?

Consider a real-world scenario. A penetration tester is auditing a SaaS platform. The application has a role hierarchy: Admin → Manager → Viewer. They notice that role assignments are sent as plain integers in API requests: {"role": 3} for Viewer, {"role": 1} for Admin. They change a self-update request from 3 to 1. Suddenly, a Viewer has Admin privileges. A scanner might mutate that field, but it would have no basis for flagging the result, because it does not know what those integers mean within the business context. A human tester, having studied the application's role model, recognizes the semantic gap instantly.
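A minimal sketch of the flaw and one possible server-side check follows. The values 1 and 3 come from the scenario; the value 2 for Manager, both function names, and the rule "nobody may grant a role above their own" are assumptions for illustration.

```python
# 1 (Admin) and 3 (Viewer) come from the scenario; 2 for Manager is assumed.
ADMIN, MANAGER, VIEWER = 1, 2, 3


def self_update_vulnerable(current_role, body):
    """Accepts whatever role the client sends in the request body."""
    return body.get("role", current_role)


def self_update_checked(current_role, body):
    """Rejects any attempt to move to a more privileged role."""
    requested = body.get("role", current_role)
    # Lower number means more privilege, so a smaller value is an escalation.
    if requested < current_role:
        raise PermissionError("cannot grant a role above your own")
    return requested


# A Viewer edits the self-update request from 3 to 1.
print(self_update_vulnerable(VIEWER, {"role": 1}))
```

The request body `{"role": 1}` is indistinguishable from a legitimate one unless the checker knows that a smaller integer means more privilege.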

These insights come from reasoning about system design — not from a payload list.

The problem is that human testing does not scale. A large API with hundreds of endpoints, complex user roles, and intricate workflows can take weeks to test manually. Coverage is always incomplete. Results vary between testers. Repeating that manual effort continuously, on every release, is practically impossible.

Why Large Language Models Change the Equation

Recent advances in Large Language Models (LLMs) open a genuinely new possibility here.

LLMs have been trained on vast amounts of text including API documentation, security research, bug bounty writeups, and application code. As a result, they have developed something that no traditional scanner has ever had: an implicit model of how applications are supposed to behave.

When you show an LLM an endpoint like:

GET /api/orders/{orderId}

Authorization: Bearer <token>

It does not just see a URL pattern. It recognizes that orderId is likely a sequential identifier, that the endpoint probably returns data belonging to a specific user, and that returning another user's data when the ID is modified would represent an access control failure. It brings context that was never explicitly provided.

This is exactly the kind of semantic reasoning that business logic vulnerability detection has always required — and never had access to in an automated tool.
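One way this might be wired up is sketched below. It is provider-agnostic on purpose: `ask_model` is a hypothetical callable that takes a prompt string and returns the model's text, and the prompt wording and the JSON shape with `hypothesis` and `test` keys are assumptions, not an established format.

```python
import json


def build_prompt(method, path, auth):
    """Describe one endpoint and ask for testable business logic hypotheses."""
    return (
        "You are reviewing an API endpoint for business logic flaws.\n"
        f"Endpoint: {method} {path}\n"
        f"Auth: {auth}\n"
        'Reply with a JSON list of objects with keys "hypothesis" and "test".'
    )


def generate_hypotheses(ask_model, method, path, auth):
    """Query the model and keep only well-formed hypothesis objects."""
    raw = ask_model(build_prompt(method, path, auth))
    try:
        items = json.loads(raw)
    except json.JSONDecodeError:
        return []  # model output is not guaranteed to be valid JSON
    if not isinstance(items, list):
        return []
    return [
        item for item in items
        if isinstance(item, dict) and {"hypothesis", "test"} <= item.keys()
    ]


def fake_model(prompt):
    """Canned reply standing in for a real LLM call."""
    return json.dumps([
        {"hypothesis": "orderId is not bound to the caller",
         "test": "request another user's orderId with the same token"},
        {"note": "malformed entry without the required keys"},
    ])


hypotheses = generate_hypotheses(
    fake_model, "GET", "/api/orders/{orderId}", "Bearer <token>"
)
print(len(hypotheses))
```

The defensive parsing matters in practice: model output is probabilistic, so anything that consumes it should tolerate malformed or incomplete replies rather than assume a fixed structure.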

A necessary caveat: Whether LLMs perform "true reasoning" or an extraordinarily sophisticated form of statistical pattern matching remains an active debate in AI research. LLMs can be fragile when confronted with novel, out-of-distribution scenarios, and they lack a persistent internal world model that guarantees logical consistency across complex multi-step chains [8]. They do not understand business rules the way a human does — they approximate understanding through probabilistic inference over learned patterns. But for the purpose of vulnerability detection, this distinction may matter less than the practical outcomes. What matters is whether the model can generate valid hypotheses, construct meaningful test cases, and recognize violations — and current evidence suggests that, within structured workflows, it can.

The natural next question is: can this reasoning be turned into an autonomous testing loop? Can an LLM not just identify potential vulnerabilities but actually generate hypotheses, execute tests, evaluate responses, and refine its approach — all without human involvement?

That is the question this project is built to answer.

References

[1] HackerOne, 9th Annual Hacker-Powered Security Report — "The Rise of the Bionic Hacker" (2025) — Business Logic Errors: 2,001 valid reports (↑19% YoY), $2.26M in rewards. 58% of researchers say AI misses business logic flaws. Based on 580K+ validated vulnerabilities across 1,950+ programs. hackerone.com

[2] OWASP, API Security Top 10–2023 — API1:2023 Broken Object Level Authorization (BOLA), API5:2023 Broken Function Level Authorization (BFLA), API6:2023 Unrestricted Access to Sensitive Business Flows. owasp.org

[3] OWASP, OWASP Top 10: 2021 — A01: Broken Access Control — Ranked #1 most critical risk; 94% of applications were tested for some form of broken access control, and the category had the most occurrences in the contributed dataset. owasp.org

[4] OWASP, OWASP Top 10 for Business Logic Abuse — Dedicated project to categorize and address complex business logic vulnerabilities using a Turing-machine-based modeling approach. owasp.org

[5] HackerOne Report #701160, Starbucks India — IDOR on card.starbucks.in — An authenticated user could view other customers' card data and balances by manipulating a cardId parameter. hackerone.com

[6] Sam Curry, Hacking Starbucks and Accessing Nearly 100 Million Customer Records — Researchers exploited a BFF proxy routing flaw to traverse internal API paths and access a Microsoft Graph instance containing ~99.3M customer records. Bounty: $4,000. samcurry.net

[7] HackerOne Report #1849626, Stripe — Fee Discounts Can Be Redeemed Multiple Times via Race Condition — A researcher discovered that concurrent requests could redeem a single-use $20,000 fee discount multiple times. Bounty: $5,000. hackerone.com

[8] Dziri, N. et al. (2023), Faith and Fate: Limits of Transformers on Compositionality — Research showing LLMs solve compositional tasks via linearized subgraph matching rather than systematic reasoning, with performance decaying as task complexity increases. arxiv.org