I thought I had discovered a genuinely novel research technique and even submitted a CFP that I'll now have to retract. Still, I think there's little awareness of this technique and few actual implementations, so I'm putting it out there in a blog post for general awareness.

A few weeks ago I was sitting there thinking through AiTM (Adversary-in-the-Middle) proxy phishing, and how most red teams just hope their victims don't choose a FIDO2 phishing-resistant MFA method, when a thought hit me. I was already proxying traffic bidirectionally. Every request the victim sends and every response the IdP sends back goes through my server before either side sees it. Instead of just snooping and intercepting session tokens, what if I rewrote the server's response before forwarding it?

Specifically, what if I rewrote the auth-method picker the IdP renders so the victim never sees the passkey option at all? They would just see push, SMS, TOTP, and password, all things I could intercept. They would pick one, because the picker would look completely normal, and I would capture whatever they picked through the proxy as usual. The cryptography of the passkey would never be engaged. I would not have to defeat anything FIDO2 cares about. I would just edit the menu.

I built it. Tested end-to-end against Google, Microsoft and Okta. Baked it into the PhishU Framework, the spear-phishing simulation and training platform I have been building for over a decade (formerly PhishAPI), as a default capability. I even submitted a CFP to DEF CON, convinced I had something fully unique to demonstrate on stage. 😅

Then I sat down to research prior work before publishing my long-form blog. Turns out at least one research group and a black hat phishing kit had a similar idea recently.

The technique even has a name in the public security research lexicon: Authentication Method Redaction Attacks. I've blogged and presented on MFA downgrade attacks before, but this is more specific.

Even though I built mine independently, the convergent finding actually says something interesting about the gap itself. Anyone who looks at bypassing passkey deployments long enough is going to hit the same observation.

So this writeup is not about discovery. It is about awareness. And there are a few specific pieces of my implementation I have not seen covered in the prior literature, which I will flag as we go.

The 95% gap

Across Microsoft Entra, Google Workspace, and Okta, fewer than 5 percent of organizations that rolled out passkeys also enforce phishing-resistant authentication at the policy layer. The other 95 percent shipped passkey enrollment and called it phishing-resistant authentication. It is not.

If you are on a security team that pushed passkeys this year, I would bet money you are in that 95 percent. Not because you do not know what you are doing, but because every major IdP's admin documentation explicitly recommends enrolling a backup factor alongside the passkey for account recovery. SMS. Voice. Push. TOTP. Authenticator app. The vendor literally tells you to do it.

That backup factor is the entire attack surface.

Surface 1: Hide the row

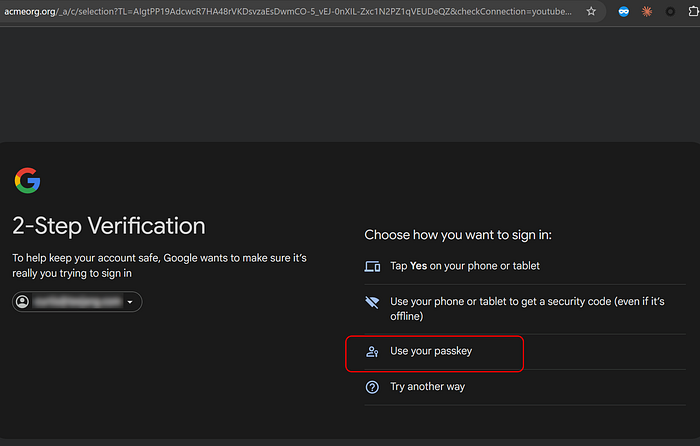

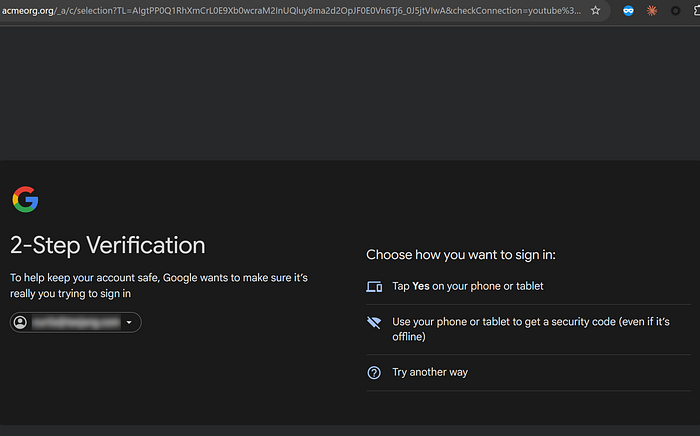

When the IdP renders the auth-method picker as clickable HTML, the proxy walks the rendered DOM after page load and hides rows whose text matches passkey labels: "Use your passkey." "Security key." "Hardware security key." The rows are hidden with combined display, visibility, and height styles, so sibling JavaScript that iterates the picker does not break, and the victim sees a picker with the phishing-resistant option silently absent. No error. No warning. No UI artifact. Everything else on the page renders unchanged.

This surface is the most-covered one in the public prior art. The Evilginx PoC against GitHub does exactly this, and Push Security's writeup describes the potential technique. My contribution at this surface is not the technique itself. It is that PhishU now ships it as an integrated default-on capability inside a defender-side phishing-simulation platform rather than as a one-off offensive phishlet. Every PhishU customer running an AiTM landing page against an IdP that supports passkeys gets the strip automatically, and the captures land in the same per-user campaign reports as every other engagement result. That packaging matters because defenders need a measurable answer to "what would actually happen if this hit our org?", not a custom-built tool per simulation.

Surface 2: Catch the API

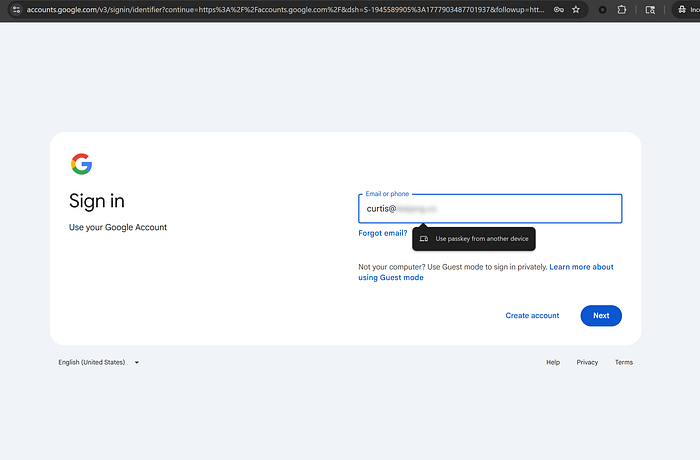

The DOM-stripping technique fails on flows that never render a clickable picker. When the IdP invokes navigator.credentials.get directly to launch the browser's native passkey UI, there is no DOM element to hide. Same problem with Chrome's conditional UI hint, the autofill-style "Use passkey from another device" suggestion that appears below the email field when the input has autocomplete="username webauthn" and the page calls navigator.credentials.get with mediation: conditional. That hint surfaces on every page load on the legitimate Google sign-in page even in incognito, and the trigger is browser-native, not DOM-rendered.

The PhishU runtime injects a JavaScript shim at script-load time that intercepts both navigator.credentials.get and navigator.credentials.create. When either is called with a publicKey argument, that is, a WebAuthn ceremony, the shim returns an immediate rejection with the standard NotAllowedError. Non-WebAuthn credential types (password autofill, federated, OTP) pass through unchanged. The browser receives the rejection before any platform UI surfaces. The conditional UI hint never appears in the autofill dropdown. The native passkey prompt never opens. The page's existing error-fallback branch fires, the user falls through to another method or sees the password field, and they sign in the way they always do.

This is where I will humbly stake a novelty claim. The published prior art on this attack class focuses on DOM-level picker stripping. WebAuthn API hijacking has been described elsewhere as a malicious-browser-extension technique, but I have not found public coverage of intercepting these calls from inside an AiTM-proxied page via response-injected JavaScript. The WebAuthn API shim is, as far as I can tell, original to PhishU's implementation. If anyone has a reference that contradicts this, I would genuinely like to see it. I will update this post.

Surface 3: The IdPs help us out

Even before the shim runs, the IdPs are doing some of the work for us. Google's "passkeys instead of passwords" feature checks server-side signals before deciding whether to route a user to passkey-first sign-in: prior session cookies on the legitimate origin, device-state hints, and platform conditional UI availability. None of these signals exist on a proxy origin. Real victims arriving at the proxy origin look to Google's backend exactly like any other unfamiliar device, and Google defaults to password-first for unfamiliar devices.

To test, I enrolled my own Google Workspace account in "passkeys instead of passwords," logged out, and signed in via the AiTM proxy in incognito Chrome. Google did not surface passkey-first at all. It went straight to a password prompt, then 2SV picker. Exactly what an unfamiliar-device sign-in looks like. The IdP made the technique easier without us doing anything.

I have not seen this structural-assist observation called out in the public literature. It is not a finding that takes much to verify (anyone can reproduce it in 15 minutes with a Workspace account and an AiTM proxy), but it changes the framing. For many real-world AiTM scenarios against Google, the proxy does not have to fight passkey-first routing because the IdP refuses to offer it on unknown-device origins automatically.

What you should actually do

If you rolled out passkeys, here is the no-BS test. Open your IdP admin console.

Microsoft Entra: Did you set up a Conditional Access policy with Authentication Strength = Phishing-resistant MFA? AND did you also use Authentication Methods Policy to disable SMS, voice, and Authenticator-app fallback for users in scope?

Google Workspace: Did you enroll the relevant users in Advanced Protection Program?

Okta: Did you set Authenticator Policy = Possession + Hardware-protected? AND did you set a Sign-on Policy that requires it for sensitive apps? Or did you restrict users to Okta Verify only? (There's a separate blog coming for an issue I discovered specific to Okta Verify.)

If the answer to any part of your IdP's question is no, your passkey rollout is incomplete. Authentication is only as strong as its weakest enrolled fallback method. We have been saying this for years. Enrolling passkeys without disabling phishable fallbacks is a passkey enrollment program. It is not a phishing-resistant authentication program. There is a real difference.
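To make the "weakest enrolled fallback" point concrete, here is a minimal sketch of the kind of audit you could run against an export of enrolled methods per user. Everything in it is an assumption for illustration: the method labels, the `phishable_fallbacks` and `audit` helpers, and the sample data are made up, and no IdP exports data in exactly this shape. Map your own export onto it before trusting the output.

```python
# Hypothetical method labels for illustration only; real IdP exports
# use their own names, so adjust this set to match your data.
PHISHING_RESISTANT = {"passkey", "fido2_security_key"}


def phishable_fallbacks(enrolled_methods):
    """Return the enrolled methods an AiTM proxy could still relay, sorted.

    Anything not explicitly listed as phishing-resistant is treated as
    phishable, which errs on the side of flagging too much.
    """
    return sorted(set(enrolled_methods) - PHISHING_RESISTANT)


def audit(enrollments):
    """Map each user with at least one phishable method to those methods."""
    report = {}
    for user, methods in enrollments.items():
        weak = phishable_fallbacks(methods)
        if weak:
            report[user] = weak
    return report


if __name__ == "__main__":
    # Made-up sample data, not real findings.
    sample = {
        "alice": ["passkey"],
        "bob": ["passkey", "sms"],
        "carol": ["totp", "push"],
    }
    for user, weak in audit(sample).items():
        print(f"{user}: still phishable via {', '.join(weak)}")
```

In this sample, bob is the interesting case: he has a passkey enrolled, but the SMS fallback means the passkey never has to be engaged. A list like this only tells you what is enrolled. Whether policy actually blocks those methods at sign-in is a separate question.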

And, fair warning, I am unavoidably biased about my own tool here. Once you have made those policy changes, you actually need to verify they hold under fire. That means simulating the technique against an authorized cohort of your own users. Drop a PhishU AiTM landing page in front of your test population, run a campaign, and let the dashboard tell you who picked the weak fallback and who got blocked at the policy enforcement layer. If your enforcement is working, the report tells you so. If 80 percent of your engineering team picked SMS, that is also extremely useful information. Either result beats assuming.

More

If you want the full technical breakdown, surface walkthrough with code references, the empirical findings written up in detail, the tiered defensive playbook for orgs that cannot do strict enforcement everywhere overnight, and side-by-side proof-of-concept screenshots, it is on the PhishU corporate blog: Passkeys Are Nearly Useless Against Live AiTM: The 95% Enforcement Gap (https://phishu.net/blogs/blog-testing-passkey-fallback-abuse-in-the-phishu-framework.html).

If you want to learn more about the PhishU Framework itself, what other AiTM, OAuth consent grant, device code, B2B invite, and ClickFix scenarios it covers, pricing, etc., that is at https://phishu.net. And if you sign up for a demo or trial right now I will mail you a signed copy of my book, "S is for Spear Phishing", for free (you cover the $5 shipping). First 50 sign-ups while supplies last.

Thanks to Push Security, eSentire, and the rest of the researchers who wrote about this before me. The work is real. I just got there later. What is mostly missing now is awareness, along with implementation and training in a tool, and that is where I think there is still room to push.

Stay safe out there!

— Curtis Brazzell