Recap: I gave AI access to a production server hosting a website that had been poorly managed for the past 12 years. The aim was to evaluate whether AI is ready to take over developer jobs, or whether humans still have the upper hand.

In Round 1, AI failed miserably. Read more about it in Part 1 of this blog series.

AI Fixed the Right Problems the Wrong Way

In the previous part, AI had assessed the codebase thoroughly and produced an implementation plan that easily bested what my years of coding experience would have come up with. But the actual implementation was disappointing.

Claude had introduced some serious server-side rendering improvements, but it didn't account for the fact that React was still re-hydrating content aggressively. Pages were effectively loading everything twice. The site flickered. Distracting. Wrong. And that was just one of many problems. The expected outcome, an improvement in Core Web Vitals, also remained a distant dream.

At this point, we could have done what most engineers (who are getting really nervous about how good AI is getting at coding) would do and declared: "Look! AI is still lame! No one can replace human engineers!"

But we are gentlemen of science. We don't draw conclusions so quickly. I decided to give AI another chance.

Round 2: Let's give AI another chance

I told Claude exactly what the outcome was. How disappointed I was. How frustrated the client was.

Claude took the feedback to heart. It rendered the website itself. Confirmed the issues. Adjusted its approach.

Another 30–40 minutes later, the site started to stabilize.

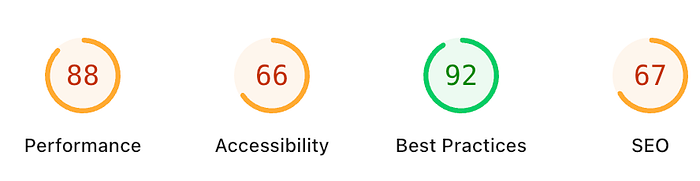

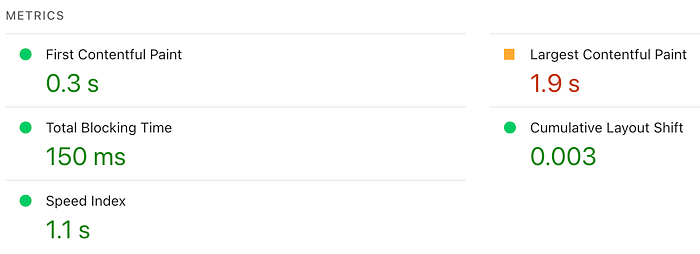

And this time, the results were MUCH better!

The performance score had jumped from an abysmal 20–30 to over 70. Load time dropped from 8–10 seconds to under 450 milliseconds. The site felt instant, even though it was still image-heavy.

And the best part? The implementation, this time, seemed stable.

The AI had learnt from its mistakes. I could see that for every change it made, it first tested the website to check whether the implementation felt right, whether any new console logs appeared, and whether any unintended edge cases had been introduced.

The first time around, the AI acted like an arrogant intern. This time, it behaved more like a cautious senior developer.

The experiment was officially complete. AI had clearly done a great job of improving Core Web Vitals, and the website seemed stable.

At this point, it would be tempting to declare AI a GREAT webmaster that was coming for every engineer's job. After all, it had achieved the goals of the experiment with very little human intervention.

But rewarding AI at that moment would have been a BIG mistake.

Two Days Later, Reality Kicked In

Users started reporting broken pages. Google Search Console emailed us that several pages were stuck in infinite redirect loops. Many pages were showing the header BELOW the footer!

The client called: "We are ending up with egg on our faces. People are laughing at us!"

Uhhhh. I kept thinking: I guess it really was a bad idea to let AI make all the decisions. I should have learnt my lesson after the first attempt. :(

So, here are my thoughts on letting AI be the webmaster for a day

I think the coding game has changed forever. Letting AI take over as the webmaster showed me what it is capable of. And what its shortcomings are.

I don't think it has any shortcomings.

WHAAAT? What are you saying, Pankaj? Didn't you just tell us about all the unintended issues users had to face? How super frustrated the client was?

Well, here is what I think:

This Was Not an AI Failure

It's tempting to frame this story as: "AI still breaks more things than it fixes. Humans end up cleaning up."

Well, that's lazy thinking.

The reality is that large-scale modifications always surface edge cases later. This happens with human teams too. No matter how much testing is done, some bugs are always identified after deployment.

But humans usually work slowly. Changes go live over weeks and months. If a team of human engineers had made the same volume of changes the AI made, we would have discovered the same class of bugs. The only difference is timing.

AI compressed three months of human engineering work into a couple of hours of effort. So three months' worth of edge cases showed up all at once.

Most production websites aren't even maintained by senior engineers. They're patched by whoever is available. Bugs accumulate without anyone noticing. Performance degrades and no one is truly accountable until the client or users notice. And by that time, it's already too late.

During my experiment, I realised that AI didn't cut corners. It didn't avoid the hard work.

That matters a great deal.

When I later asked the AI to fix the newly identified edge cases reported by users, it did a wonderful job! It already knew the system. It already understood the decisions it had made. And so it fixed issues quickly AND documented why they happened.

That documentation alone is a goldmine. Most developers consider documentation the worst part of their job. Rarely does anyone do it properly, let alone with the clarity the AI showed when it documented every change in detail. Just by reading it, I had a pretty clear understanding of what changed, why each change was made, where the changes were made, how they were tested, and what risks remain.

The Conclusion?

Systems are bound to break when they change at scale.

With AI, what changed was not the number of bugs introduced, but the speed and surface area of the improvements.

An AI-accelerated development cycle moves much faster than we humans can comprehend. So, naturally, a lot of bugs show up in a short span of time.

But over time, the overall system will be a lot more manageable. More reliable. Less prone to problems. And if integrated well, AI will be able to pre-emptively detect and resolve issues. With near-zero human intervention.

Businesses and engineers who still plan to rely on human teams to manage and maintain their codebases are going to become outdated very soon, in the same way that businesses that didn't adopt the Internet in its early years ended up shutting shop soon after.

My client is MUCH happier knowing that his website is in good hands. Core Web Vitals are improving. The changes he requests are being implemented much faster without cutting corners. And everything is being documented, so it's easier to maintain and scale the system for future needs.

All because I let AI run the production website instead of trying to do everything manually. My work now focuses on evaluating the AI's work, planning the future roadmap, and converting business goals into tasks for AI. I am not getting replaced. But my job role has definitely evolved.

Email me at reachpankajbaranwal@gmail.com to figure out how you or your business can adopt AI for maintaining your codebase and digital projects.

I'm curious how this lands with you. Does this feel exciting? Reckless? Inevitable? Would you trust AI with production systems, or is there a line you'd never cross?

I'd genuinely love to hear your perspective, especially if you've tried (or strongly resisted) something similar. Drop your thoughts in the comments. This space is evolving fast, and none of us have all the answers yet.