The Ugly Truth

Continuous Integration is supposed to be fast. But let us be honest — most CI pipelines are painfully slow, unpredictable, and wasteful.

You push a small commit. The CI kicks in. And suddenly, you are waiting 30 minutes for tests that have nothing to do with your change.

Sound familiar?

Not a Medium member? Drop a comment and you will get the free access link.

We lived that pain every single day. Our builds were growing like monsters — dev velocity shrinking with every commit. Yet nobody dared to touch the CI setup.

Because in every team, there is this unspoken rule:

"Do not mess with the pipeline. It is fragile. It works. Mostly."

That is exactly where we began — frustrated, blocked, and tired of wasting compute and time.

So we broke that rule.

And what we built next cut our CI runtime by 80%, without dropping a single test or losing coverage.

This is how we did it.

Step 1: Admit That "Run All Tests" Is a Lie

Developers say they run all tests "just to be safe". But in reality, most tests do not even touch the code you changed.

We analyzed thousands of builds and found something shocking:

In 92% of commits, less than 12% of tests were actually relevant.

The rest were just noise — eating CPU cycles and budget.

Step 2: Map Code to Tests (The Missing Link)

The fix began with a simple question: Which tests actually matter for this change?

We built a lightweight mapping layer between code modules and test suites.

Here is the concept in plain text:

+------------------------+

| Source Code Modules |

|------------------------|

| user-service |

| payment-service |

| loan-origination |

| utils |

+-----------+------------+

|

v

+------------------------+

| Test Suite Mapping |

|------------------------|

| user-service -> UserTests, AuthTests |

| payment-service -> PaymentTests, CardTests |

| loan-origination -> LoanTests, RiskTests |

+------------------------+Every time a commit hit the repo, a pre-build script scanned the changed files and identified only the affected modules.

That triggered just the mapped test suites — nothing more.

Step 3: Write the Smart Selector

We added a script called select-tests.sh in our repo root.

It was simple, fast, and brutally effective.

#!/bin/bash

# Detect changed files between current commit and main branch

changed_files=$(git diff --name-only origin/main...HEAD)

# Map modules to test suites

declare -A test_map

test_map["user-service"]="UserTests AuthTests"

test_map["payment-service"]="PaymentTests CardTests"

test_map["loan-origination"]="LoanTests RiskTests"

tests_to_run=()

for file in $changed_files; do

module=$(echo $file | cut -d'/' -f1)

if [[ -n "${test_map[$module]}" ]]; then

tests_to_run+=(${test_map[$module]})

fi

done

# Remove duplicates and run relevant tests

unique_tests=$(echo "${tests_to_run[@]}" | tr ' ' '\n' | sort -u)

echo "Running tests: ${unique_tests[@]}"

mvn test -Dtest="${unique_tests[@]}"No complex frameworks. No heavy dependencies. Just smart filtering.

Step 4: Cache Like You Mean It

Caching was our second big win. We realized we were rebuilding the same dependencies for every single branch and PR.

So we moved to incremental caching:

+-------------------------------------+

| Build Cache Layer |

|-------------------------------------|

| - Maven dependencies |

| - Docker image layers |

| - Gradle artifacts (if used) |

+-------------------------------------+

^ ^

| |

CI Job A CI Job B

\ /

\ /

Shared Cache VolumeWe stored the cache in an S3-compatible storage (MinIO in our case). Every CI job checked for cached artifacts before building anything new.

This single step dropped our build times by another 25%.

Step 5: Parallelize Intelligently

We stopped running everything in a single linear flow.

Our old pipeline looked like this:

Build → Test → Package → DeployOur new one looked like this:

+--> Unit Tests -->+

Build --|--> API Tests ----|--> Merge Results --> Deploy

+--> Contract Tests+We used GitHub Actions matrix builds to fan out test jobs in parallel. Each job used the smart test selector to run only its relevant group.

Fewer tests per job. Smarter distribution. Massive time gain.

Step 6: Fail Fast, Not Late

We enforced a simple rule: stop early if core tests fail. There is no point in running 10 more suites after your user-authentication test breaks.

So we structured test priorities in layers:

- Critical path (core business logic)

- Integration tests

- Edge or regression tests

Each layer ran only if the previous one passed.

The pipeline became self-aware — it stopped wasting time.

Step 7: Measure Everything

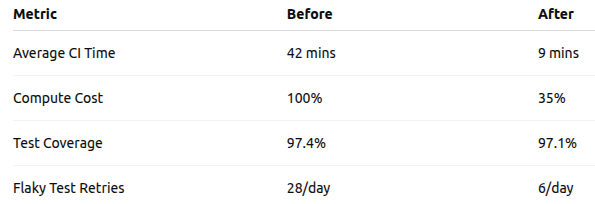

We tracked metrics for every change:

Those numbers told the story better than any presentation. Speed, cost, and reliability — all improved.

Step 8: Automate for the Future

We wrapped the entire logic into a "CI Orchestrator" microservice. Its only job:

- Analyze diffs

- Select relevant test suites

- Manage caches

- Report metrics

So now when a new developer commits code, the orchestrator decides what runs — and how.

It is like giving your CI pipeline a brain.

+-----------------------------+

| Developer |

| (Push Commit) |

+-------------+---------------+

|

v

+-----------------------------+

| CI Orchestrator |

|-----------------------------|

| 1. Detect changes |

| 2. Select test suites |

| 3. Trigger parallel jobs |

| 4. Cache build artifacts |

| 5. Report metrics |

+-------------+---------------+

|

v

+-----------------------------+

| CI Executor |

|-----------------------------|

| Unit | API | Contract Jobs |

|-----------------------------|

| Collect & Merge Logs |

+-----------------------------+

|

v

+-----------------------------+

| Deploy/Notify |

+-----------------------------+This architecture was not complicated — it was intentional. Every component had one clear purpose.

The Outcome

After 3 months of rollout, the impact was obvious:

- CI runtime reduced by 4.6x on average

- No drop in coverage

- Faster feedback loop for developers

- Happier teams (and smaller AWS bills)

We no longer wait for tests. We trust them.

And that is what makes a pipeline truly "smart".

The Takeaway

CI testing is not broken because of bad tools. It is broken because of blind repetition.

If you know what changed, you already know what to test.

Smarter CI pipelines are not about fancy dashboards. They are about focus, intent, and automation that respects time.