I've been in offensive security long enough to roll my eyes at most "watershed moment" announcements. Something big happens, social media explodes, everyone writes a hot take, and three weeks later, we're back to the same problems we had before.

April 7 felt different. It still does.

Anthropic didn't release their most powerful model. They built it, tested it, and then locked it away because they genuinely didn't think it was safe to let it out. Not a PR stunt. Not a delayed launch dressed up as caution. The model found zero-days in every major OS and browser in a matter of weeks, and Anthropic looked at those results and said: not yet.

That decision tells you more about what Mythos can do than any benchmark ever could.

What actually happened

The initiative around Mythos is called Project Glasswing. Twelve partner organizations, among them AWS, Apple, Microsoft, CrowdStrike, and Google, got restricted access for one purpose: to find vulnerabilities in critical software before attackers do.

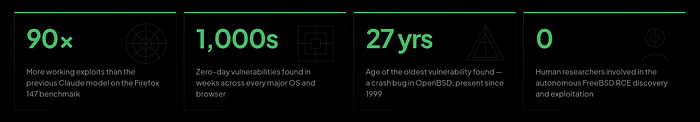

They found them. Thousands of zero-days. A 17-year-old remote code execution bug in FreeBSD. A 27-year-old bug in OpenBSD, a system famous for its security hardening. Both were fully exploited, with no humans involved after the initial prompt.

Nicholas Carlini, one of Anthropic's senior researchers, said he found more bugs in two weeks than in the rest of his career combined. I read that and sat with it for a while.

But the number that actually stopped me was 90. As in, 90 times as many working exploits as the previous model produced on the same Firefox benchmark. Not incrementally better. Not a step forward. Ninety times.

What makes this harder to dismiss than typical AI hype is what Anthropic said about how these capabilities appeared:

"We did not explicitly train Mythos Preview to have these capabilities. Rather, they emerged as a downstream consequence of general improvements in code, reasoning, and autonomy."

Nobody built a cyberweapon. They built a smart model, and a cyberweapon fell out the other side. If these capabilities fall out of general progress in code and reasoning, the next model will probably be even more capable at this. So will the one after that. And Anthropic isn't the only lab on this path.

The assumption that quietly broke

Here's the thing nobody is saying plainly enough.

The entire logic of annual and quarterly pentesting rests on one idea: that the time between a vulnerability appearing and an attacker finding it is long enough for you to act. Weeks, maybe months. Enough time to scan, triage, patch, and move on.

Mythos just demonstrated that a sufficiently capable model can compress that window to almost nothing.
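To make that window concrete, here is a deliberately simple Python model of point-in-time testing. It is a sketch under loud assumptions, not a measurement: a bug ships at a uniformly random moment between two tests, the next test always catches it, the fix lands a fixed number of days later, and an attacker needs a fixed number of days to find the same bug. The function name and every number in it are hypothetical.

```python
def share_fixed_before_attacker(test_interval_days: float,
                                patch_days: float,
                                attacker_days: float) -> float:
    """Hypothetical model: fraction of bugs fixed before an attacker finds them.

    Assumptions (illustrative only): bugs ship at a uniformly random time
    between tests, the next scheduled test always finds them, fixes take
    `patch_days`, and an attacker needs exactly `attacker_days` to find the bug.
    """
    if test_interval_days <= 0:
        raise ValueError("test_interval_days must be positive")
    # A bug shipped `o` days after a test is found T - o days later and fixed
    # p days after that, so it is closed T - o + p days after shipping.
    # The defender wins when T - o + p < A, i.e. when o > T + p - A.
    # Those winning offsets cover min(T, A - p) days of the interval (never < 0).
    winning_days = max(0.0, min(test_interval_days, attacker_days - patch_days))
    return winning_days / test_interval_days


# Quarterly testing (90 days) with a 30-day patch cycle, against attackers
# who need six months, two months, or one week to find the same bug.
for attacker_days in (180, 60, 7):
    share = share_fixed_before_attacker(90, 30, attacker_days)
    print(f"attacker needs {attacker_days:>3} days -> defender first {share:.0%}")
```

Under these assumptions the defender goes from winning 100% of the time, to 33%, to 0%. And once the attacker's time drops below your patch time, no testing cadence helps at all in this model.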

The FreeBSD and OpenBSD bugs weren't hiding in some obscure library nobody uses. They were sitting in widely deployed, actively maintained codebases that thousands of expert researchers had looked at, repeatedly, for decades. Mythos found them in weeks.

Now think about your internal applications. Your dependencies. Your infrastructure. The APIs your team shipped in a hurry last quarter. They almost certainly have bugs just as old, just as serious, sitting just as quietly.

The question was never whether those bugs exist. The question is who finds them first.

Three things that aren't going back to normal

The 90-day disclosure pipeline is already cracking.

Coordinated disclosure was designed around human-speed discovery. One researcher, one bug, ninety days to patch. When a model finds thousands of critical vulnerabilities in weeks, that whole process seizes up. The patch queue, the triage bandwidth, the internal prioritization conversations: none of it was built for this volume.
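A rough way to see why the pipeline seizes up is to treat one vendor's fix capacity as a fixed-rate queue. The Python sketch below is a deterministic fluid approximation (no randomness, first-in-first-out, constant rates) with hypothetical numbers. It only asks how many weeks pass before a newly reported bug would wait longer than the 90-day window for its fix.

```python
from typing import Optional


def weeks_until_window_missed(reports_per_week: float,
                              fixes_per_week: float,
                              window_days: float = 90) -> Optional[float]:
    """Hypothetical fluid-queue model of a vendor's fix pipeline.

    Returns the number of weeks after a surge starts at which a newly arriving
    report would wait longer than `window_days` for a fix, or None if capacity
    keeps up (in which case no backlog forms in this model).
    """
    if fixes_per_week <= 0:
        raise ValueError("fixes_per_week must be positive")
    if reports_per_week <= fixes_per_week:
        return None
    window_weeks = window_days / 7
    # Backlog after w weeks is (reports - fixes) * w. A report arriving then
    # waits backlog / fixes weeks, which exceeds the window once
    # w > window_weeks * fixes / (reports - fixes).
    return window_weeks * fixes_per_week / (reports_per_week - fixes_per_week)


# Hypothetical team that can fix 10 critical bugs a week, hit with 50 reports a week.
print(round(weeks_until_window_missed(50, 10), 1))  # 3.2
```

In this toy model, a five-fold surge breaks the 90-day promise for new reports in about three weeks, and the backlog keeps growing from there.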

The cost of a sophisticated attack is about to collapse.

Right now, finding a zero-day in a hardened enterprise application takes months of expert researcher time and significant resources. That keeps the most dangerous attacks out of reach for most threat actors. When Mythos-class capabilities become more broadly available (and Anthropic themselves say it's a matter of when, not if), that changes. A well-structured prompt and a cloud bill will be enough. Nation-state-grade attacks stop requiring nation-state resources.

Your AI-written code has never been properly reviewed.

If your engineers use Copilot, Cursor, or anything similar, a real chunk of your codebase was generated by a model that doesn't think about security by default. OpenAI's own research puts the share of AI-generated commits that introduce security bugs at roughly 1.2%. That sounds small until you multiply it by how fast your team ships. Valid AI-generated vulnerability reports on HackerOne grew 210% last year, and a recent study found that 82% of security researchers now use AI in their workflows. The attack side is already moving.
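Here is that multiplication as a small Python sketch. Only the 1.2% rate comes from the figure above. The weekly commit volume is a hypothetical placeholder, and treating each commit as an independent coin flip is a simplifying assumption.

```python
def expected_vulnerable_commits(ai_commits_per_week: int, weeks: int,
                                bug_rate: float = 0.012) -> float:
    """Expected number of AI-generated commits that introduce a security bug."""
    return ai_commits_per_week * weeks * bug_rate


def chance_of_at_least_one(ai_commits: int, bug_rate: float = 0.012) -> float:
    """P(at least one vulnerable commit), assuming commits are independent."""
    return 1 - (1 - bug_rate) ** ai_commits


# Hypothetical team: 200 AI-assisted commits a week over one quarter (13 weeks).
print(round(expected_vulnerable_commits(200, 13), 1))  # 31.2
print(f"{chance_of_at_least_one(100):.0%}")  # 70%
```

Under those assumptions, a quarter of AI-assisted commits carries roughly thirty expected security bugs, and even a hundred commits make at least one more likely than not.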

What to actually do in the next 30 days

I know the temptation is to watch how this plays out before committing to anything. I'd push back on that instinct pretty hard.

Your threat environment changed on April 7. The question is just how fast you catch up.

Figure out your actual testing gap. Take every application, API, and infrastructure component and map it against when it was last properly assessed versus how often it changes. For most organizations, that gap is embarrassingly large, often measured in quarters or years, not weeks.
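One way to start that mapping is a plain inventory ranked by how much has changed since the last real assessment. The Python sketch below uses a hypothetical `Asset` record and approximates "how often it changes" with deploys per week. The asset names and dates are made up; swap in whatever change signal you actually track (merged PRs, releases, infrastructure changes).

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Asset:
    name: str
    last_assessed: Optional[date]  # None = never properly assessed
    deploys_per_week: float        # hypothetical change-rate signal


def untested_changes(asset: Asset, today: date) -> float:
    """Approximate number of changes shipped since the last assessment."""
    if asset.last_assessed is None:
        return float("inf")  # never assessed: always at the top of the list
    days = (today - asset.last_assessed).days
    return days / 7 * asset.deploys_per_week


def rank_by_testing_gap(assets: List[Asset], today: date) -> List[Asset]:
    """Largest gap first: these are the next candidates for assessment."""
    return sorted(assets, key=lambda a: untested_changes(a, today), reverse=True)


# Hypothetical inventory.
inventory = [
    Asset("marketing-site", date(2026, 3, 1), 1),
    Asset("billing-api", date(2025, 11, 1), 10),
    Asset("internal-agent-gateway", None, 5),
]
today = date(2026, 5, 1)
for asset in rank_by_testing_gap(inventory, today):
    gap = untested_changes(asset, today)
    print(f"{asset.name}: ~{gap:.0f} changes since last assessment")
```

Ranking by untested changes rather than by calendar age alone pushes fast-moving services ahead of stale but quiet ones, which is the gap the paragraph above describes.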

Find your AI-generated code. If your team uses coding assistants, someone needs to know which parts of your codebase are primarily AI-generated, and those parts need to move up the assessment queue. They tend to have systematic vulnerability patterns that are easy to find once you look.
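If your tooling or commit policy leaves a marker, a first pass can be as simple as scanning commit messages. This Python sketch assumes such markers exist: the `Co-authored-by` patterns and the `AI-Assisted: true` trailer below are hypothetical examples to replace with whatever your assistants or your team actually write. Many assistants leave no trace in git at all, so treat the result as a floor, not an inventory.

```python
import re
import subprocess
from typing import List, Tuple

# Hypothetical markers: replace with the trailers your tools or policy really add.
AI_MARKERS = [
    re.compile(r"^Co-authored-by:.*\b(claude|copilot|cursor|aider)\b", re.I | re.M),
    re.compile(r"^AI-Assisted:\s*true\s*$", re.I | re.M),
]


def read_git_log(repo: str = ".") -> str:
    """Full commit messages, one record per commit: <sha> NUL <message> RS."""
    return subprocess.run(
        ["git", "-C", repo, "log", "--format=%H%x00%B%x1e"],
        capture_output=True, text=True, check=True,
    ).stdout


def parse_log(raw: str) -> List[Tuple[str, str]]:
    """Split the log output into (sha, message) pairs."""
    commits = []
    for record in raw.split("\x1e"):
        record = record.strip("\n")
        if not record:
            continue
        sha, _, message = record.partition("\x00")
        commits.append((sha, message))
    return commits


def ai_flagged(commits: List[Tuple[str, str]]) -> List[str]:
    """SHAs of commits whose message matches any marker."""
    return [sha for sha, msg in commits if any(p.search(msg) for p in AI_MARKERS)]


# In a real repo: ai_flagged(parse_log(read_git_log("path/to/repo")))
sample = (
    "abc123\x00Add export endpoint\n\nCo-authored-by: Claude <noreply@anthropic.com>\n\x1e\n"
    "def456\x00Fix typo in README\n\x1e\n"
    "987fed\x00Refactor auth middleware\n\nAI-Assisted: true\n\x1e\n"
)
print(ai_flagged(parse_log(sample)))
```

From the flagged commits, `git show --stat <sha>` lists the files they touched, which gives you a starting map of where the AI-heavy code lives.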

Actually look at your AI agents. Most security teams I talk to have at least piloted some kind of agentic AI workflow: models connected to internal tools, databases, and APIs. OWASP put out the first real taxonomy of agentic AI risks in December 2025. Goal hijacking, identity abuse, memory poisoning: these aren't theoretical. Most of these deployments have never had a security assessment.
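A quick first-pass review of an agent deployment is to list each agent's tools and flag any agent that combines three capabilities: it reads untrusted content, it can reach private data, and it can send data outward. Simon Willison calls that combination the "lethal trifecta", and it is what turns goal hijacking through prompt injection into data exfiltration. This is a swapped-in heuristic, not OWASP's taxonomy, and the tool names and capability tags in the Python sketch below are hypothetical.

```python
from typing import Dict, List, Set

UNTRUSTED_INPUT = "untrusted_input"  # e.g. email, web pages, tickets
PRIVATE_DATA = "private_data"        # e.g. CRM, internal docs, databases
EXTERNAL_COMMS = "external_comms"    # e.g. outbound HTTP, chat, sending email
TRIFECTA = {UNTRUSTED_INPUT, PRIVATE_DATA, EXTERNAL_COMMS}

# Hypothetical tool catalogue: tag each tool with the capabilities it grants.
TOOL_CAPS: Dict[str, Set[str]] = {
    "read_email": {UNTRUSTED_INPUT, PRIVATE_DATA},
    "query_crm": {PRIVATE_DATA},
    "browse_web": {UNTRUSTED_INPUT, EXTERNAL_COMMS},
    "send_slack": {EXTERNAL_COMMS},
    "search_docs": {PRIVATE_DATA},
}


def agent_caps(tools: List[str]) -> Set[str]:
    """Union of the capabilities granted by an agent's tools."""
    caps: Set[str] = set()
    for tool in tools:
        # Unknown tools fail closed: assume they grant everything.
        caps |= TOOL_CAPS.get(tool, TRIFECTA)
    return caps


def high_risk_agents(agents: Dict[str, List[str]]) -> List[str]:
    """Agents whose combined tools cover all three risky capabilities."""
    return sorted(name for name, tools in agents.items()
                  if TRIFECTA <= agent_caps(tools))


# Hypothetical deployment.
agents = {
    "inbox-assistant": ["read_email", "send_slack"],
    "docs-bot": ["search_docs"],
    "sales-helper": ["query_crm", "browse_web"],
    "ops-agent": ["run_shell"],  # not in the catalogue
}
print(high_risk_agents(agents))
```

Note that no single tool is flagged on its own; the risk only appears when you look at what each agent holds in combination, which is exactly the view most agent pilots never get reviewed from.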

Check your MCP configurations. Model Context Protocol is now connecting AI models to enterprise systems at scale. Over a third of MCP servers analyzed in one study were vulnerable to server-side request forgery. AWS credential theft via MCP has shown up in the wild. If you've deployed any AI tooling using MCP and nobody has reviewed those integrations from a security standpoint, that's a gap worth closing quickly.
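A common SSRF shape in MCP servers is a tool that fetches whatever URL the model hands it. Pointed at a cloud metadata endpoint such as `169.254.169.254`, that is a classic route to AWS credential theft on instances that still allow IMDSv1. One review question is whether such tools refuse non-public destinations. Here is a minimal standard-library Python check; `is_safe_fetch_target` is a hypothetical helper name. Because it resolves DNS separately from the actual fetch, a real server should also connect to the exact address it validated (otherwise DNS rebinding can bypass it) and refuse redirects to internal hosts.

```python
import ipaddress
import socket
from urllib.parse import urlsplit


def is_safe_fetch_target(url: str) -> bool:
    """Return True only if every address the URL's host resolves to is public.

    Sketch only: it does not pin the resolved address for the actual request,
    so it does not by itself stop DNS rebinding or redirect-based bypasses.
    """
    parts = urlsplit(url)
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    try:
        port = parts.port or (443 if parts.scheme == "https" else 80)
        infos = socket.getaddrinfo(parts.hostname, port, type=socket.SOCK_STREAM)
    except (ValueError, socket.gaierror):
        return False  # malformed port or unresolvable host: refuse
    for *_, sockaddr in infos:
        addr = ipaddress.ip_address(sockaddr[0].split("%")[0])  # drop IPv6 zone id
        # Rejects loopback, RFC 1918, link-local (incl. 169.254.169.254), etc.
        if not addr.is_global:
            return False
    return True


for url in ("http://169.254.169.254/latest/meta-data/",
            "http://127.0.0.1:8080/admin",
            "file:///etc/passwd",
            "https://8.8.8.8/"):
    print(url, is_safe_fetch_target(url))
```

Checking every resolved address, rather than the first, matters because a hostname can return both a public and a private record.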

Use Glasswing to have a better board conversation. Security leaders have been trying to make the case for continuous testing for years. A model that found a 27-year-old bug in OpenBSD, a system literally built around security, is a more compelling argument than anything I've seen. Mythos isn't publicly available today. It won't stay locked away forever. That window is the argument.

How this changes the way you need to think

Point-in-time pentesting was already struggling to keep up before any of this. A quarterly test, a PDF report, a remediation backlog that nobody gets through before the next test. Most teams know this model is broken; they just haven't had a reason urgent enough to change it.

Glasswing is that reason.

An annual pentest gives you a snapshot of your attack surface on one specific day. In a world where AI can find vulnerabilities faster than your team can get the kickoff call on the calendar, that snapshot is stale before the final report arrives.

Continuous testing isn't a luxury anymore. It's the only posture that makes sense given what we now know is possible.

I want to be clear: this isn't me pitching a product. It's the only conclusion I can reach from what April 7 showed us. The security industry has always been a race. What changed is the pace.

AI will find the vulnerabilities in your software. The only question is which AI gets there first.