In today's fast-moving software world, we rely heavily on automation, AI, and security tools to keep our systems safe. But sometimes, the very tools meant to help us can create confusion, noise, and unnecessary stress.

Let me start with a very real situation.

The Problem We All Face

Imagine this:

A security tool flags a vulnerability with a 9.8 critical score. Naturally, everything stops. Teams scramble. Priorities shift. Pressure builds.

But later… you discover something surprising:

👉 That vulnerable code was never actually used in your application.

If this sounds familiar, you're not alone.

This is exactly the question I raised after coming across a discussion from JFrog:

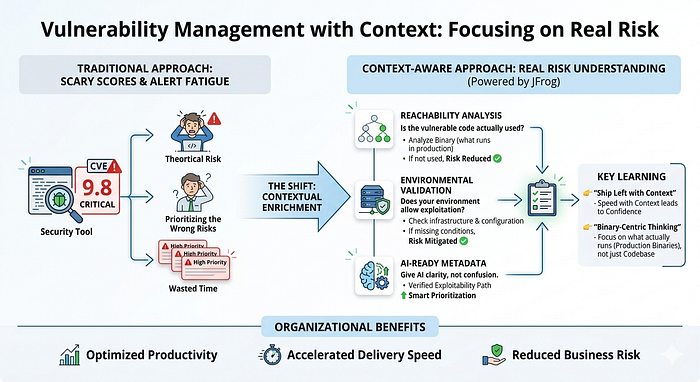

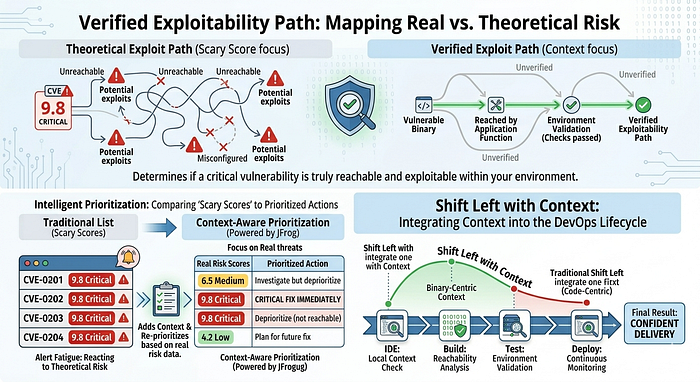

How do we give AI the real situation and context of each issue so it understands the actual risk — instead of just depending on risk scores, which can be wrong, misleading, or inconsistent?

The Reality Behind Traditional Security Tools

Scanners like Tenable are excellent at identifying known vulnerabilities (CVEs). But they have a limitation:

They don't understand your application's real-world behavior.

This leads to something many teams struggle with:

👉 Alert Fatigue

Where:

- Developers waste time fixing issues that don't matter

- AI systems prioritize the wrong risks

- Teams lose focus on what is actually critical

In simple terms: We are reacting to theoretical risk, not actual risk.

The Shift: From Raw Data to Real Context

This is where JFrog introduces a powerful idea:

Contextual Enrichment

Instead of just showing a vulnerability score, the system adds real-world context through three key layers:

1. Reachability Analysis

👉 Is the vulnerable code actually being used?

JFrog analyzes your application's binary (what actually runs in production) to check if the vulnerable function is ever called.

If it's not used: ➡️ The risk is automatically reduced
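To make the idea concrete, here is a minimal sketch of a reachability check in Python. It is not how JFrog's binary analysis works internally; it only shows the underlying question as a graph search. The call graph, the function names, and the `main` entry point are all made up for illustration.

```python
from collections import deque


def reachable_functions(call_graph, entry_points):
    """Return every function reachable from the entry points (breadth-first)."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        current = queue.popleft()
        for callee in call_graph.get(current, ()):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


# Hypothetical call graph: each caller maps to the functions it calls.
CALL_GRAPH = {
    "main": ["load_config", "handle_request"],
    "handle_request": ["parse_json"],
    "load_config": [],
    "parse_json": [],
    "legacy_import": ["parse_yaml_unsafe"],  # nothing reachable from main calls this
    "parse_yaml_unsafe": [],
}


def is_reachable(vulnerable_function, call_graph=CALL_GRAPH, entry_points=("main",)):
    """True if the vulnerable function can be reached from any entry point."""
    return vulnerable_function in reachable_functions(call_graph, entry_points)


print(is_reachable("parse_yaml_unsafe"))
print(is_reachable("parse_json"))
```

In this toy graph, `parse_yaml_unsafe` ships with the application but is never called from `main`, which is exactly the "9.8 that was never used" situation described earlier.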

2. Environmental Validation

👉 Does your environment even allow this vulnerability to be exploited?

This layer checks your infrastructure, configurations, and secrets to see whether the conditions an attack requires actually exist.

If conditions are missing: ➡️ The risk is not as critical as it appears
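A rough sketch of the same idea in Python: compare what an exploit needs against what the environment actually looks like. The precondition names (`admin_port_exposed`, `debug_mode`) and the environment values are assumptions for illustration, not fields from any real tool.

```python
def unmet_preconditions(required, environment):
    """Return the exploit preconditions that the environment does not satisfy."""
    return [key for key, value in required.items() if environment.get(key) != value]


# Hypothetical: the exploit needs the admin port exposed AND debug mode enabled.
REQUIRED = {"admin_port_exposed": True, "debug_mode": True}

# Hypothetical snapshot of the deployed environment.
ENVIRONMENT = {"admin_port_exposed": False, "debug_mode": True, "tls_enabled": True}

missing = unmet_preconditions(REQUIRED, ENVIRONMENT)
exploitable_here = not missing  # exploitable only if every precondition is met

print(missing, exploitable_here)
```

Here one precondition is missing, so the finding would be treated as less urgent in this environment even though the raw score stays the same.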

3. AI-Ready Metadata

👉 Give AI clarity, not confusion

Instead of feeding AI a "scary score," JFrog provides a verified path of exploitability.

This allows AI systems (and teams) to:

- Focus on real threats

- Prioritize intelligently

- Avoid unnecessary work
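Here is one way this kind of metadata could look, sketched in Python. The field names, the priority labels, and the rule that combines them are my own assumptions, not JFrog's schema or scoring; the CVE identifier is a placeholder. The point is only that the score travels together with its context.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Finding:
    cve_id: str              # placeholder identifier in the examples below
    cvss_score: float        # the raw "scary score"
    reachable: bool          # result of reachability analysis
    preconditions_met: bool  # result of environmental validation


def priority(finding):
    """Illustrative rule: context decides urgency, the score only ranks within it."""
    if finding.reachable and finding.preconditions_met:
        return "fix-now" if finding.cvss_score >= 9.0 else "fix-soon"
    if finding.reachable or finding.preconditions_met:
        return "review"
    return "backlog"


def to_ai_context(finding):
    """Serialize the finding plus its derived priority as JSON for an AI assistant."""
    record = asdict(finding)
    record["priority"] = priority(finding)
    return json.dumps(record, sort_keys=True)


unused = Finding("CVE-0000-0001", 9.8, reachable=False, preconditions_met=False)
print(to_ai_context(unused))
```

With this rule, the 9.8 finding that is neither reachable nor exploitable in the environment lands in the backlog instead of stopping the team.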

A Bigger Realization: We Need to Rethink "Shift Left"

For years, we've heard:

👉 "Shift Left" — fix issues earlier in development

But now, I see it differently:

💡 We should "Shift Left with Context"

Because speed without context leads to noise. But speed with context leads to confidence.

My Key Learning: Binary-Centric Thinking

One of the most important mindset shifts for me has been:

👉 Moving from code-level thinking to binary-centric thinking

What does that mean in simple terms?

- Code is what we write

- Binaries are what actually run in production

And security should focus on what truly runs, not just what exists in the codebase.

Learning from Great Minds

This journey also connects deeply with insights from two people I've been learning from:

Sathya

He emphasizes:

- Thinking beyond individual tasks

- Focusing on organization-level outcomes

- Understanding how Agentic AI is transforming software development culture

This shift is important because AI is no longer just assisting — it is participating.

Srinivasan Santhanam

His practical insights stood out:

- Managing open source licensing responsibly

- Ensuring tamper-proof binary artifact management

- Implementing strong operational quality gates (what he calls "hard gates")

These are the foundations of trust in modern software systems.

Bringing It All Together: Governance Meets Speed

With tools like:

- JFrog MCP Server

- JFrog Curation

we move toward a more controlled and intelligent development ecosystem:

✅ Only approved packages are used during development

✅ Developers can query OSS package risks, versions, and licenses in real-time

✅ Projects and artifacts are tracked with full visibility

✅ Security and governance are built into the workflow, not added later
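To show what an "only approved packages" gate means in practice, here is a small Python sketch. It does not call JFrog Curation or the JFrog MCP Server; the license allowlist, the blocked package, and the package records are invented for illustration.

```python
# Hypothetical policy: licenses the organization accepts.
APPROVED_LICENSES = {"MIT", "Apache-2.0", "BSD-3-Clause"}

# Hypothetical policy: specific (name, version) pairs that are blocked outright.
BLOCKED_PACKAGES = {("example-pkg", "1.0.0")}


def gate(package):
    """Return (allowed, reasons) for a package record with name, version, license."""
    reasons = []
    if (package["name"], package["version"]) in BLOCKED_PACKAGES:
        reasons.append("package version is blocked")
    if package["license"] not in APPROVED_LICENSES:
        reasons.append(f"license {package['license']} is not approved")
    return (not reasons, reasons)


allowed, reasons = gate({"name": "example-pkg", "version": "1.0.0", "license": "GPL-3.0"})
print(allowed, reasons)
```

A gate like this is "hard" in the sense that a failing check blocks the package before it enters the build, rather than raising an alert afterwards.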

Why This Matters (For Everyone, Not Just Engineers)

Even if you're not technical, here's the core idea:

- Not every "high-risk" alert is actually dangerous

- Without context, teams waste time and energy

- With context, organizations make smarter, faster decisions

This impacts:

- Business risk

- Delivery speed

- Team productivity

- Customer trust

Final Thoughts

I'm genuinely thankful to JFrog for pushing this conversation forward.

Because the future of AI and DevOps is not about:

❌ More alerts

❌ Higher scores

❌ Faster reactions

It's about:

✅ Better context

✅ Smarter prioritization

✅ Real-world risk understanding

The One Line That Stayed With Me

👉 "Don't fix what looks dangerous. Fix what actually is."

If you've faced alert fatigue or struggled with prioritizing vulnerabilities, I'd love to hear your thoughts.