Introduction: The Hunter Who Found Everything and Earned Nothing

There's a type of bug bounty hunter that every experienced researcher has met at some point — usually in a community forum, usually frustrated, always working hard.

They submit report after report. They run comprehensive recon. They test methodically. They're genuinely skilled. And at the end of every month, their earnings are inconsistent at best, nonexistent at worst. Meanwhile, other hunters — some with less technical knowledge — seem to earn with a reliability that looks almost unfair.

The hunter who finds everything and earns nothing isn't failing because of a skill gap. They're failing because of a strategy gap.

Here's the myth sitting at the center of the Bug Bounty Success Blueprint 2026 conversation: "The more vulnerabilities you find, the more money you earn." It sounds like an iron law. In practice, it's one of the most reliably misleading beliefs in the entire bug bounty ecosystem.

The real issue isn't output volume. It's value: specifically, whether the vulnerabilities you're finding are the ones programs actually reward, on targets where your effort has genuine leverage, communicated in ways that maximize their assessed impact. High-payout findings and consistent earnings start with understanding that distinction.

This blueprint is built around that understanding.

What the Bug Bounty Success Blueprint 2026 Is Really Built On

Most bug bounty guides are organized around techniques. Learn this vulnerability class, use this tool, follow this methodology. The implicit assumption is that technical knowledge is the primary variable separating high earners from low earners.

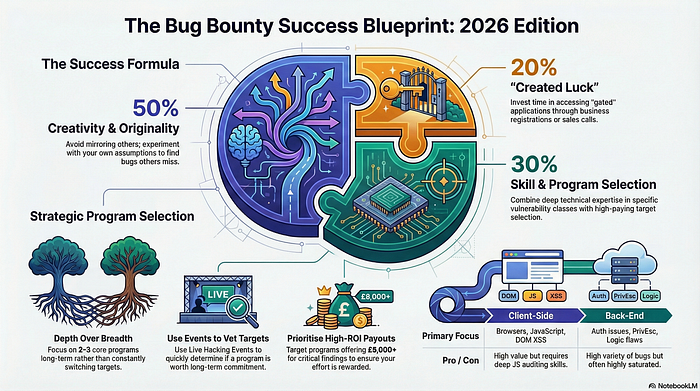

The Bug Bounty Success Blueprint 2026 is organized around a different variable: strategic decision-making. The four pillars covered in this guide — program selection, vulnerability targeting, systematic consistency, and report quality — are each strategic disciplines. They determine the context in which your technical skills operate. And context, it turns out, matters more than skill in determining actual earnings.

Think of it this way. A technically average hunter operating with a sharp strategy — targeting the right programs at the right time, focusing on the vulnerability classes programs reward most highly, writing reports that maximize impact assessment — will consistently outperform a technically excellent hunter operating without strategic clarity. The skill ceiling matters. The strategic floor matters more.

The Volume Myth That Keeps Hunters Perpetually Broke

The volume myth is seductive because it maps onto a logic that works in many other contexts. More work equals more output. More output equals more reward. It's the linear productivity model, and it fails spectacularly in bug bounty for a specific reason.

Not all findings are equal. A single critical finding can pay more than a hundred low-severity reports combined. The effort required to find that critical finding is often comparable to the effort required to find ten low-severity issues, but the reward differential is enormous. Hunters chasing volume optimize for the wrong variable. They work harder to earn less, and they exhaust themselves doing it.
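To make the arithmetic concrete, here's a rough sketch of expected earnings per hour for the two approaches. Every number in it (payouts, hours, and the chance a report is paid rather than closed as a duplicate) is a made-up assumption for illustration, not data from any real program.

```python
def expected_hourly(payout: float, hours: float, p_paid: float) -> float:
    """Expected payout per hour of effort for one kind of finding.

    p_paid is the assumed probability that the report is accepted and paid
    (not a duplicate, not out of scope).
    """
    return payout * p_paid / hours


# Hypothetical figures, chosen only to show the shape of the trade-off.
# One low-severity finding: $100, 4 hours of work, 50% chance of being paid.
low = expected_hourly(payout=100, hours=4, p_paid=0.5)

# One critical finding: $5,000, 40 hours of work (about ten low findings'
# worth of effort), 80% chance of being paid.
critical = expected_hourly(payout=5000, hours=40, p_paid=0.8)

print(f"low-severity: ${low:.2f}/hour, critical: ${critical:.2f}/hour")
```

Plug in your own numbers from past submissions. The point isn't the specific result, it's that payout size and duplicate risk belong in the same calculation as hours spent.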

The insight here is uncomfortable but important: deliberately pursuing fewer, better findings is more profitable than submitting many mediocre ones. This requires resisting the psychological pull of activity (the feeling that submitting reports means progress) in favor of the discipline of targeted, patient, deep hunting.

Why Strategy Beats Skill in the Payout Economy

Here's a concrete illustration. Imagine two hunters targeting the same program. Hunter A has stronger technical skills but approaches the program without preparation — running automated tools, testing the most obvious attack surfaces, submitting whatever comes up. Hunter B has moderate technical skills but spends time before hunting studying the program's recent feature releases, reading its publicly disclosed vulnerability history, and identifying which areas of the application are newest and therefore least tested.

Hunter B finds fewer things overall. But what they find is higher severity, less likely to be a duplicate, and submitted with context that demonstrates genuine understanding of the program's architecture. Hunter B earns more. Not because they're technically superior. Because they made better decisions before they ever opened a browser.

Strategy is a multiplier on skill. Without it, skill earns its raw value. With it, skill earns significantly more.

Pillar One — Strategic Program Selection: The Decision Before All Decisions

Every hour you spend hunting on the wrong program is an hour you can never recover. Program selection is the foundational strategic decision in the Bug Bounty Success Blueprint 2026 — and it deserves far more deliberate attention than most hunters give it.

The wrong program isn't necessarily a bad program. It might be perfectly legitimate, well-run, and generous with payouts. It's wrong for you specifically if the competitive landscape is too dense, the scope doesn't match your skills, or the timing of your entry means the most accessible findings are already gone.

Reading a Program Like a Business Analyst, Not a Hacker

Pro hunters read programs the way a business analyst reads a company — looking for structural signals about where value and opportunity exist. This means examining the scope definition not just for what's included but for what's recently been added. New scope additions represent fresh territory that hasn't been saturated by competing hunters.

It means looking at the program's response time metrics and payout history. A program that triages quickly and pays fairly is worth more of your time than one that triages slowly and disputes severity assessments regularly, even if the nominal payout amounts look similar on paper.

It means understanding what kind of organization is running the program. A financial services company has a very different risk profile and vulnerability priority list than a social media platform or a developer tools company. Understanding that profile helps you anticipate which finding categories they'll reward most aggressively.
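One way to make these signals comparable across programs is a simple scoring sheet. The sketch below is an assumption-heavy illustration: the field names, the 180-day and 30-day cutoffs, and the equal weighting are all hypothetical choices, and it deliberately leaves out organization type, which is harder to reduce to a number.

```python
from dataclasses import dataclass


@dataclass
class ProgramSignals:
    name: str
    days_since_scope_change: int   # how recently scope was added or expanded
    median_triage_days: float      # typical time to a triage response
    severity_dispute_rate: float   # share of your reports downgraded, 0.0 to 1.0


def clamp01(x: float) -> float:
    """Keep a value inside the range 0.0 to 1.0."""
    return max(0.0, min(1.0, x))


def program_score(p: ProgramSignals) -> float:
    """Average three signals into a 0-to-1 score. Weights are illustrative."""
    freshness = clamp01(1 - p.days_since_scope_change / 180)
    responsiveness = clamp01(1 - p.median_triage_days / 30)
    fairness = clamp01(1 - p.severity_dispute_rate)
    return (freshness + responsiveness + fairness) / 3


fresh = ProgramSignals("fresh-scope", 14, 3, 0.1)
stale = ProgramSignals("stale-scope", 400, 21, 0.4)
ranked = sorted([stale, fresh], key=program_score, reverse=True)
print([p.name for p in ranked])
```

The exact formula matters less than writing one down. Once the criteria are explicit, you can adjust the weights as you learn which signals actually predicted good outcomes for you.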

The Competitive Density Problem and How to Work Around It

Every public bug bounty program has a competitive density problem. The more well-known and long-running a program is, the more experienced hunters have already mapped its attack surface, tested its most obvious weaknesses, and submitted its most accessible findings. Entering that program as a beginner or even an intermediate hunter means competing against researchers who have months or years of target-specific knowledge.

The practical solution is to seek programs where your entry timing gives you an advantage. Organizations that have recently launched public programs, recently expanded their scope significantly, or recently deployed major new product features are all worth prioritizing. In each case, you're entering a landscape that's less saturated with prior hunting work.

This isn't about avoiding competition permanently. It's about choosing your competitive context deliberately rather than defaulting to the most visible targets.

Timing Your Entry Into a Program for Maximum Impact

Timing is an underappreciated variable in program selection strategy. The optimal moment to begin hunting on a program is shortly after a significant change — a major product release, a scope expansion, a recent acquisition that brought new infrastructure under the program's umbrella.

These moments create windows of genuine opportunity. The new surface hasn't been fully mapped. The triage team is often primed to prioritize findings in the new areas. And the organization itself is typically more motivated to understand its security posture in recently changed infrastructure than in systems that have been stable for years.

Monitor programs you're interested in. Track their changelog announcements and scope updates. When a significant change occurs, that's your signal to move quickly.
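Tracking scope updates can be as simple as saving a program's in-scope asset list and comparing it against a later copy. Here's a minimal sketch, assuming you've already collected the two lists yourself (by hand or by whatever export the platform offers); the asset names are placeholders.

```python
def normalize(asset: str) -> str:
    """Trim whitespace and lowercase so cosmetic differences don't count as changes."""
    return asset.strip().lower()


def diff_scope(previous: list[str], current: list[str]) -> dict[str, list[str]]:
    """Return the assets added to and removed from scope between two snapshots."""
    prev = {normalize(a) for a in previous}
    curr = {normalize(a) for a in current}
    return {"added": sorted(curr - prev), "removed": sorted(prev - curr)}


# Hypothetical snapshots of one program's scope, a week apart.
last_week = ["*.example.com", "api.example.com"]
today = ["*.example.com", "api.example.com", "Pay.example.com ", "*.example-acquired.io"]

changes = diff_scope(last_week, today)
print("New in scope:", changes["added"])
print("Dropped from scope:", changes["removed"])
```

Anything that shows up under "added" is a candidate for the kind of early, low-competition hunting described above.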

Pillar Two — High-Payout Vulnerability Targeting: Where the Real Money Lives

With program selection handled strategically, the next question becomes: where do you focus your hunting effort within a given program to maximize the probability of high-payout findings?

This pillar of the Bug Bounty Success Blueprint 2026 is about understanding which vulnerability categories produce the highest returns relative to effort, and why those categories remain consistently underexplored despite their value.

Why Automation Will Never Find the Bugs Worth Finding

Automated scanning tools have a well-defined ceiling. They're effective at identifying known patterns — specific misconfigurations, standard injection points, common header omissions. They're completely blind to anything that requires contextual understanding, creative reasoning, or knowledge of how a specific business's rules are supposed to work.

The implication is direct: if a vulnerability can be found by automated tooling, it either has already been found and reported, or it will be found and reported almost immediately by one of the many hunters running those tools across the same targets simultaneously. Automation produces a race to the bottom — whoever runs the scan first gets credit, and the payout for these findings is typically low because the finding is inherently low-sophistication.

High-payout findings live in the territory that automation cannot reach. That territory requires manual analysis, creative thinking, and genuine understanding of target-specific context. This is where deliberate hunters invest their time — and why that investment pays off disproportionately.

The Business Logic Goldmine: Underexplored and Consistently Rewarded

Business logic vulnerabilities represent the clearest illustration of the automation gap — and the clearest opportunity for hunters willing to do the manual work required to find them.

A business logic flaw exists when an application's implementation of its own rules produces outcomes the organization didn't intend and wouldn't sanction if they understood them. These aren't code bugs in the traditional sense. They're design and implementation gaps that live in the space between how a feature was specified and how it was built.

Here's an illustrative example: consider a subscription platform that allows users to upgrade or downgrade their plan at any time, with prorated billing applied automatically. A logic flaw might allow a user to time a series of rapid plan changes in a specific sequence so that the credit appearing on their account exceeds what any legitimate transaction would produce. No scanner identifies this. It requires a hunter who reads the billing documentation carefully, understands the intended behavior, and methodically tests edge cases in the upgrade and downgrade flow.
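To show how a flaw like this can arise, here's a toy model of prorated billing with one deliberate mistake. It's a sketch of one plausible cause, not a description of any real platform: the plan prices, the 30-day cycle, and the rounding behavior are all assumptions. The mistake is that refunds for the old plan are rounded up while charges for the new plan are rounded down.

```python
# Hypothetical plan prices in cents; not taken from any real platform.
PRICES = {"basic": 999, "pro": 1999}
PERIOD_DAYS = 30


def ceil_div(a: int, b: int) -> int:
    """Integer division that rounds up."""
    return -(-a // b)


def change_plan(balance: int, old: str, new: str, days_left: int) -> int:
    """Return the account credit balance (cents) after a mid-cycle plan change.

    Deliberately flawed: the refund for the unused part of the old plan is
    rounded UP, while the charge for the new plan is rounded DOWN.
    """
    credit = ceil_div(PRICES[old] * days_left, PERIOD_DAYS)
    charge = (PRICES[new] * days_left) // PERIOD_DAYS
    return balance + credit - charge


def round_trips(n: int, days_left: int = 7) -> int:
    """Upgrade then immediately downgrade, n times, and return the net credit."""
    balance = 0
    for _ in range(n):
        balance = change_plan(balance, "basic", "pro", days_left)
        balance = change_plan(balance, "pro", "basic", days_left)
    return balance


print(round_trips(1))    # 2 cents of credit from a change that should net to zero
print(round_trips(50))   # 100 cents after fifty round trips
```

A correct implementation would return the user to a zero balance after every round trip. Testing for this kind of drift means doing exactly what the model does: repeating a reversible action and checking whether the account ends up where it started.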

When found and reported clearly, with full impact articulation, findings like this are frequently rewarded at high severity. They affect the organization's revenue directly, they're often systemic rather than isolated to a single user, and they demonstrate a level of target understanding that triage teams genuinely respect.

Chaining Low-Severity Findings Into Critical Impact

One of the most powerful and underutilized techniques in the strategic hunting toolkit is vulnerability chaining — the practice of combining multiple individually low-severity findings into a single report that demonstrates critical-level impact.

A reflected cross-site scripting vulnerability on a subdomain might be rated informational or low severity in isolation. An open redirect on the same application might similarly be considered low value alone. But combined, they can tell a different story: the open redirect turns a trusted-looking link into a delivery mechanism for the crafted XSS URL, and the XSS then runs in the victim's session, where it can steal session tokens that are readable by script or perform actions as the victim. The chain produces a demonstrated account takeover scenario that triage teams will find hard to dismiss.
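When you document a chain for a report, it helps to show exactly how the pieces nest. The sketch below builds a proof-of-concept link for one possible wiring of such a chain, assuming the open redirect forwards to whatever URL it is given and the reflected parameter echoes its value unescaped. The hosts, paths, and parameter names are hypothetical placeholders, and the payload is the usual harmless alert used to demonstrate script execution.

```python
from urllib.parse import parse_qs, urlencode, urlsplit


def build_chain_link(redirect_endpoint: str, redirect_param: str,
                     xss_endpoint: str, xss_param: str, payload: str) -> str:
    """Nest the XSS URL inside the open redirect's destination parameter."""
    xss_url = f"{xss_endpoint}?{urlencode({xss_param: payload})}"
    return f"{redirect_endpoint}?{urlencode({redirect_param: xss_url})}"


def unpack_chain_link(link: str, redirect_param: str, xss_param: str) -> tuple[str, str]:
    """Decode a chain link back into (inner XSS URL, payload) for the write-up."""
    outer = parse_qs(urlsplit(link).query)
    inner_url = outer[redirect_param][0]
    inner = parse_qs(urlsplit(inner_url).query)
    return inner_url, inner[xss_param][0]


# Hypothetical endpoints on a placeholder domain.
PAYLOAD = "<script>alert(document.domain)</script>"
link = build_chain_link(
    "https://app.example.com/redirect", "next",
    "https://beta.example.com/search", "q",
    PAYLOAD,
)
inner_url, payload = unpack_chain_link(link, "next", "q")
print(link)
print(inner_url)
```

Including both the full link and its decoded layers in the report lets the triage analyst see each hop without reconstructing the encoding themselves. Only test links like this against targets and accounts that the program's rules allow.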

Chaining requires understanding how vulnerabilities interact, which demands the kind of deep, manual target analysis that volume-focused hunters never stop long enough to perform. It's one of the clearest examples of how strategic depth produces earnings that strategic breadth never reaches.

Pillar Three — Consistent Earnings Through Systems, Not Luck

The difference between hunters who earn consistently and those who earn sporadically often has nothing to do with talent. It has everything to do with whether they operate from a repeatable system or from ad hoc effort.

Consistent earnings in bug bounty come from consistent processes — structured approaches to program selection, recon, hunting, and reporting that produce reliable output over time rather than occasional bursts of activity separated by fallow periods.

Building a Personal Hunting Framework That Scales

A personal hunting framework is a documented, repeatable methodology that you refine over time based on what works for your specific skills, target preferences, and available time. It answers questions like: which program attributes tell me this target is worth my time? What's my recon sequence for a new target? Which vulnerability classes do I investigate first and why? How do I structure my hunting sessions to maintain focus?

The framework doesn't need to be elaborate. It needs to be explicit — written down rather than held loosely in your head — so that it can be followed consistently and improved deliberately. Hunters who operate from explicit frameworks make better decisions under pressure, maintain quality during long hunting sessions, and improve faster because they can identify specifically what's working and what isn't.

Managing Your Hunting Pipeline Like a Professional

Pro hunters treat their active programs, their in-progress findings, and their submitted reports as a pipeline — a structured set of work at different stages of completion that they manage actively rather than reactively.

This means maintaining awareness of which programs you're currently invested in, what findings you have in progress, what reports are awaiting triage response, and when to move on from a program that's no longer producing results. It means setting deliberate limits on how many programs you actively hunt simultaneously — depth across two or three targets will consistently outperform shallow attention spread across ten.
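A pipeline doesn't need special tooling. Here's a minimal sketch of one as plain data, with two checks: whether you're spread across too many programs, and which submitted reports are due for a follow-up. The stage names, the three-program limit, and the 14-day follow-up window are assumptions to tune, and the program names are placeholders.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Stage(Enum):
    IN_PROGRESS = "in_progress"   # still being investigated
    SUBMITTED = "submitted"       # awaiting triage response
    RESOLVED = "resolved"         # paid, closed, or abandoned


@dataclass
class Finding:
    program: str
    title: str
    stage: Stage
    last_update: date


MAX_ACTIVE_PROGRAMS = 3      # illustrative limit
FOLLOW_UP_AFTER_DAYS = 14    # illustrative waiting period


def active_programs(findings: list[Finding]) -> set[str]:
    """Programs with at least one finding that isn't resolved."""
    return {f.program for f in findings if f.stage is not Stage.RESOLVED}


def over_capacity(findings: list[Finding], limit: int = MAX_ACTIVE_PROGRAMS) -> bool:
    return len(active_programs(findings)) > limit


def needs_follow_up(findings: list[Finding], today: date,
                    after_days: int = FOLLOW_UP_AFTER_DAYS) -> list[Finding]:
    """Submitted reports with no update for at least after_days."""
    return [f for f in findings
            if f.stage is Stage.SUBMITTED and (today - f.last_update).days >= after_days]


pipeline = [
    Finding("acme", "IDOR in invoice export", Stage.SUBMITTED, date(2026, 1, 2)),
    Finding("acme", "Coupon stacking edge case", Stage.IN_PROGRESS, date(2026, 1, 20)),
    Finding("globex", "Password reset token reuse", Stage.SUBMITTED, date(2026, 1, 18)),
    Finding("initech", "Verbose error page", Stage.RESOLVED, date(2025, 12, 1)),
]
stale = needs_follow_up(pipeline, today=date(2026, 1, 21))
print(sorted(active_programs(pipeline)), over_capacity(pipeline), [f.title for f in stale])
```

A spreadsheet with the same four columns works just as well. What matters is that the state of your work lives somewhere you can review it, not only in your memory.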

Managing a pipeline this way transforms bug bounty from a series of isolated efforts into a professional practice with momentum, visibility, and compounding returns over time.

Pillar Four — Report Quality as a Revenue Strategy

The fourth and final pillar of the Bug Bounty Success Blueprint 2026 reframes report writing from an administrative task into a direct revenue lever. Because that's exactly what it is.

The same vulnerability, reported with different levels of clarity, specificity, and impact framing, will receive different severity assessments and different payout amounts. This isn't a flaw in the system. It reflects the reality that impact is partly objective and partly a function of how well it's communicated. Hunters who communicate impact brilliantly earn more than hunters who communicate it poorly, holding the technical finding constant.

How Report Framing Directly Affects Payout Amounts

Triage analysts are not mind readers. When they read a report, they assess severity based on what's written, not on what the hunter observed or understood internally. A report that describes a vulnerability technically but fails to connect it to business risk — data exposure, financial impact, user harm, regulatory implications — leaves the analyst to make that connection themselves. Many won't. They'll default to a conservative severity assessment that protects them from over-rewarding an ambiguous finding.

A report that does that connection work explicitly — that says not just "an attacker could access user data" but describes specifically which users, which data, through what mechanism, with what downstream consequences — removes the ambiguity. The analyst isn't interpreting. They're confirming. That confirmation process produces faster triage and better severity assessments.
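You can turn that standard into a pre-submission check. The sketch below flags which impact details a draft report is still missing. The four field names are a hypothetical way of encoding "which users, which data, through what mechanism, with what downstream consequences", not a format any platform requires.

```python
REQUIRED_IMPACT_FIELDS = ("affected_users", "data_exposed", "mechanism", "consequences")


def missing_impact_details(report: dict) -> list[str]:
    """Return the impact fields that are absent or blank in a draft report."""
    return [field for field in REQUIRED_IMPACT_FIELDS
            if not str(report.get(field, "")).strip()]


# A hypothetical draft that describes the bug but not its business impact.
draft = {
    "title": "IDOR in invoice export",
    "mechanism": "Changing the invoice_id parameter returns another account's invoice",
    "data_exposed": "",
}
print(missing_impact_details(draft))
```

If the list isn't empty, the analyst will be left to fill those gaps on their own, and a conservative severity rating is the likely result.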

The framing of impact is a skill. It's learnable. And its return on investment, measured in actual payout amounts across a year of hunting, is substantial.

The Reputation Flywheel That Unlocks Private Program Access

The bug bounty ecosystem has a flywheel dynamic that most beginners don't fully appreciate until they've been in it long enough to feel its effects. Quality report submissions build reputation. Reputation earns private program invitations. Private programs offer better competitive conditions, more responsive triage, and often higher payout ceilings. Better conditions produce better findings. Better findings reinforce reputation. The flywheel accelerates.

The critical insight is that this flywheel starts with your very first submission. Every report you write either adds momentum to the flywheel or creates drag against it. The hunter who treats their first low-severity submission with the same professional standards as their first critical finding is building the foundation of a reputation that will compound into meaningful advantages over months and years.

Most beginners don't see the flywheel because the effects aren't immediately visible. But it's turning from the start. Make sure it's turning in the right direction.

Frequently Asked Questions

Q1: Is it realistic to pursue bug bounty as a primary income source in 2026? It's realistic for hunters who combine strong technical skills with the strategic approach outlined in this blueprint and invest significant consistent time over an extended period. It's not realistic as a quick income solution or for hunters who approach it casually. The earnings potential is genuine, but it requires treating hunting as a professional practice rather than an occasional activity.

Q2: How do I identify which vulnerability classes are highest priority for a specific program? Study the program's publicly available vulnerability disclosure history where accessible, read their security documentation and engineering communications, and pay attention to what types of findings their scope definition emphasizes or specifically excludes. Programs often signal their priorities — directly or indirectly — through the language of their scope rules and the categories they've historically rewarded most visibly.

Q3: What's the most common reason valid findings get low severity assessments? Weak impact articulation. The finding is technically valid but the report doesn't connect it clearly to business risk, user harm, or data exposure in terms that a triage analyst can immediately act on. Improving impact statement quality is one of the fastest ways to increase average payout per finding without changing anything about your technical hunting approach.

Q4: How many programs should an intermediate hunter actively target simultaneously? Two to three programs at a time is a reasonable range for most hunters. With fewer, you may not have enough active pipeline to maintain consistent output. With more, attention dilutes to the point where depth becomes impossible to maintain on any single target. Adjust based on how time-intensive each program's scope is and how much hunting time you have available weekly.

Q5: Are vulnerability chains worth the extra time they require to develop? Yes, with the right target and the right component findings. A well-documented vulnerability chain that demonstrates critical impact from individually low-severity components can produce a payout that exceeds what either component would earn separately by a significant margin. The time investment in developing a chain is justified when the component findings are solid, the combined impact is genuinely critical, and the report communicates the full scenario clearly.

Q6: How should hunters handle programs with slow or poor triage communication? Document your findings and submissions carefully, follow up once through the program's official channel after a reasonable waiting period, and don't let the slowness of one program prevent you from actively hunting on others. If a program consistently demonstrates poor triage practices over an extended period, factor that into your program selection criteria going forward. Time is your scarcest resource — allocate it toward programs that respect it.

Conclusion: The Blueprint Is Simple — Execution Is the Edge

The Bug Bounty Success Blueprint 2026 is not a collection of secret techniques. It's a strategic framework built around four pillars — program selection, vulnerability targeting, systematic consistency, and report quality — that together create the conditions for high-payout findings and reliable earnings.

The myth that volume and technical breadth drive income has been dismantled across every pillar of this guide. What actually drives consistent earnings is strategic depth: choosing the right programs at the right time, hunting in the vulnerability categories where your effort has genuine leverage, building repeatable systems that maintain quality over time, and communicating your findings with the clarity and impact framing they deserve.

None of this is complicated. All of it requires discipline — the discipline to specialize before you generalize, to prepare before you hunt, to write carefully before you submit, and to think strategically before you act technically.

The hunters earning consistently in 2026 aren't the ones with the most tools or the most hours. They're the ones who made better decisions. This blueprint is the map. The execution is yours.

