AI has moved into security research quickly, but most discussions focus only on how powerful it is. The more important question is simpler:

Which AI tools are reliable enough for security research?

Because in security, dependencies matter more than features.

First Principle: Not All AI Tools Are Equal

Today, hundreds of new "AI security tools" launch every month. Many promise automated pentesting, AI reconnaissance, or instant vulnerability discovery.

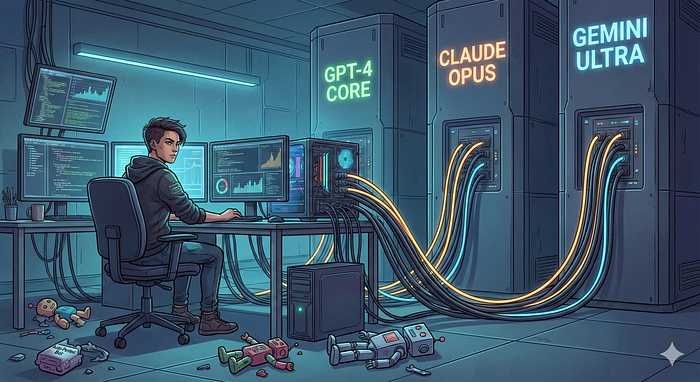

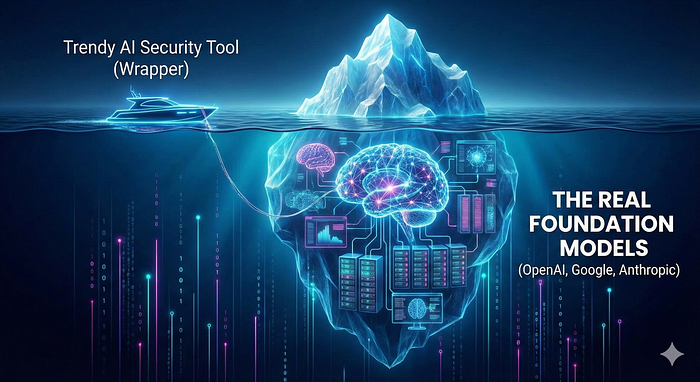

But technically, most of them are not independent systems.

They are:

- interfaces powered by GPT APIs

- wrappers around Google Gemini

- tools running on Claude models

- automation layers sitting on someone else's infrastructure

They look like standalone products, but underneath they are renting intelligence.

Why Wrapper AI Tools Are Risky

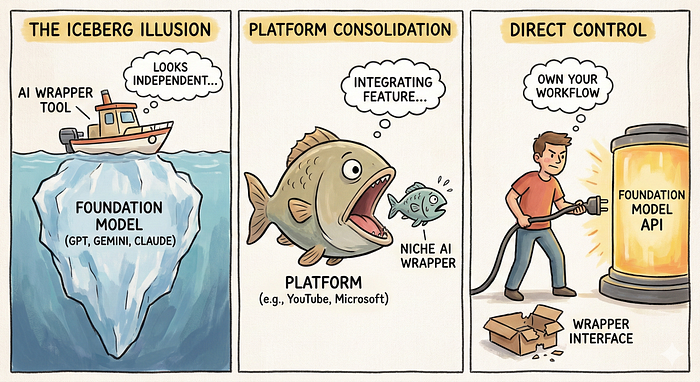

This pattern is already happening across the AI ecosystem.

Example 1: YouTube AI Summaries

A number of startups built tools where a user pasted a YouTube link and received:

- transcript extraction

- summarized explanation

- key insights generated by AI

These services charged subscriptions, sometimes $10/month or more, because they automated a useful workflow using YouTube data + AI APIs.

Then Google introduced Gemini-based video summaries directly inside YouTube.

Now:

- no extra website needed

- no external subscription

- available directly to YouTube Premium or Google One users

- faster because it runs natively on the platform

Result: independent tools lost traffic almost overnight, and people who had paid for yearly subscriptions had spent money on something they didn't have to.

The platform didn't copy code; it integrated the validated use case.

Example 2: AI Writing Assistants vs Built-In Editors

Many AI writing startups grew rapidly using GPT APIs to improve emails and documents.

Soon after:

- Microsoft integrated Copilot into Word and Outlook

- Google added AI writing directly into Docs and Gmail

Users naturally preferred built-in features because:

- no extra login

- trusted ecosystem

- already included in subscriptions

Standalone wrappers lost differentiation.

Example 3: Image Generation Tools

Multiple websites sold AI image generation subscriptions built entirely on external APIs.

Later:

- image generation became native inside ChatGPT

- integrated into operating systems and productivity suites

Again, distribution beat innovation.

What This Means for Security Researchers

If your pentesting workflow depends heavily on niche AI tools built on external APIs, you inherit their instability.

Any day:

- pricing changes

- rate limits appear

- features disappear

- or the main platform launches the same capability internally

Security research requires consistency. Your tools should not vanish mid-workflow.

The Safer Strategy: Use Foundation Models Directly

Instead of chasing every new AI product, rely on the systems that actually own the models:

- OpenAI (GPT models, Codex)

- Google (Gemini, Antigravity IDE, Vertex AI ecosystem)

- Anthropic (Claude models, including Opus and Sonnet)

- Local open-source LLMs for sensitive research

These platforms control:

- infrastructure, data centers

- training

- long-term roadmap

- scalability

They are not temporary layers; they are the base layer.
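Relying on the base layer does not have to mean relying on a single vendor. A minimal sketch, assuming each model is wrapped in a plain function of your own (the `hosted_model` and `local_model` stand-ins below are hypothetical, not real SDK calls), shows how a workflow could fall back from one backend to another when a rate limit or outage appears:

```python
from typing import Callable, Sequence

# A backend is any callable that takes a prompt and returns text.
# In practice each one would wrap a provider API or a local model;
# the two defined below are hypothetical stand-ins for illustration.
Backend = Callable[[str], str]


class AllBackendsFailed(RuntimeError):
    """Raised when no configured backend could answer."""


def ask_with_fallback(prompt: str, backends: Sequence[tuple[str, Backend]]) -> tuple[str, str]:
    """Try each (name, backend) in order; return (name, answer) from the first that works."""
    errors = []
    for name, backend in backends:
        try:
            return name, backend(prompt)
        except Exception as exc:  # rate limit, removed feature, outage, ...
            errors.append(f"{name}: {exc}")
    raise AllBackendsFailed("; ".join(errors))


def hosted_model(prompt: str) -> str:
    # Hypothetical: simulates a hosted API that has started rate limiting.
    raise RuntimeError("429 rate limited")


def local_model(prompt: str) -> str:
    # Hypothetical: simulates a local open-source model.
    return f"[local] summary of: {prompt}"
```

Calling `ask_with_fallback("group these endpoints", [("hosted", hosted_model), ("local", local_model)])` skips the failing hosted stand-in and returns the local answer. The rest of the workflow only ever sees the `(name, answer)` pair, so swapping providers is a one-line change.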

How AI Should Actually Be Used in Pentesting

1.) Recon & Data Reduction

The AI surfaces the interesting signals; the researcher decides which ones matter.

Example: You run subdomain enumeration and collect 15,000 URLs.

Instead of filtering manually, one AI can:

- group endpoints by functionality

- highlight authentication-related paths

- identify unusual parameters

A second can:

- visit each endpoint

- test multiple behaviours

In the end, you still test manually to confirm; AI just removes noise.
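Part of that noise reduction can happen locally before any model sees the data, which also keeps the prompt small. The sketch below is one possible pre-pass, not a prescribed method: it groups URLs by their first path segment, flags auth-looking paths, and lists uncommon parameter names. The keyword lists and the `summarize_urls` name are assumptions chosen for illustration.

```python
from collections import defaultdict
from urllib.parse import urlsplit, parse_qsl

# Illustrative keyword lists (assumptions); tune them for the target.
AUTH_HINTS = ("login", "logout", "signin", "oauth", "token", "session", "password", "reset")
COMMON_PARAMS = {"id", "page", "q", "lang", "sort", "limit", "offset"}


def summarize_urls(urls):
    """Group URLs by first path segment, flag auth-related paths and uncommon parameters."""
    groups = defaultdict(set)
    auth_paths = set()
    unusual_params = defaultdict(set)
    for url in urls:
        parts = urlsplit(url)
        path = parts.path or "/"
        segments = [s for s in path.split("/") if s]
        bucket = segments[0].lower() if segments else "(root)"
        groups[bucket].add(path)
        # Substring match on the path; crude, but cheap for a first pass.
        if any(hint in path.lower() for hint in AUTH_HINTS):
            auth_paths.add(path)
        # Record every parameter name that is not on the "boring" list.
        for name, _ in parse_qsl(parts.query, keep_blank_values=True):
            if name.lower() not in COMMON_PARAMS:
                unusual_params[name].add(path)
    return {
        "groups": {k: sorted(v) for k, v in sorted(groups.items())},
        "auth_paths": sorted(auth_paths),
        "unusual_params": {k: sorted(v) for k, v in sorted(unusual_params.items())},
    }
```

The resulting dictionary is far smaller than the raw URL list, so it is cheaper to paste into a model prompt and easier to audit for anything you would rather not send.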

2.) Code Review Acceleration

Example: During source review, AI can quickly explain:

- JWT validation logic

- OAuth flows

- serialization handling

This reduces hours of reading unfamiliar code before real testing begins.

3.) Payload Ideation

AI expands exploration, but the researcher must still understand why a payload works to avoid dangerous side effects.

Example: When testing input filters, AI can generate variations:

- encoding combinations

- edge-case payload structures

- alternate injection attempts

AI widens the search; it does not replace skill.
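The "encoding combinations" idea is simple enough to script yourself, which makes it clear what each variant actually is. This is a small sketch using only the Python standard library; `encoding_variants` is an illustrative name, and the set of encodings is a starting point rather than a complete list.

```python
import html
from urllib.parse import quote


def encoding_variants(payload: str) -> dict[str, str]:
    """Return common re-encodings of one payload for testing an input filter."""
    url_once = quote(payload, safe="")  # percent-encode every reserved character
    return {
        "raw": payload,
        "url": url_once,
        "double_url": quote(url_once, safe=""),  # encodes the '%' signs again
        "html_entities": html.escape(payload, quote=True),
        # \uXXXX form; assumes characters in the Basic Multilingual Plane.
        "unicode_escape": "".join(f"\\u{ord(ch):04x}" for ch in payload),
    }
```

For the harmless marker `<a>`, the `url` variant is `%3Ca%3E` and the `double_url` variant is `%253Ca%253E`. Knowing which layer decodes which form is the part a model cannot do for you.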

4.) Report Writing

Example: Turning raw notes like:

IDOR via user_id parameter → access other invoices

into a structured vulnerability report with clear impact and remediation.

This saves time without changing technical accuracy.
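One way to protect that accuracy is to constrain the prompt itself. The sketch below wraps a raw note in instructions that forbid invented details; the section names and the `build_report_prompt` helper are assumptions for illustration, not a standard report format.

```python
# Illustrative section names (assumption); match them to your program's template.
REPORT_SECTIONS = ("Summary", "Steps to Reproduce", "Impact", "Remediation")


def build_report_prompt(raw_note: str, sections=REPORT_SECTIONS) -> str:
    """Wrap a raw finding note in instructions for a model to expand it into a report."""
    headings = "\n".join(f"- {name}" for name in sections)
    return (
        "Rewrite the finding below as a vulnerability report with these sections:\n"
        f"{headings}\n"
        "Do not add endpoints, parameters, or impact that are not in the note. "
        "Mark anything you are unsure of as TODO for the researcher.\n\n"
        f"Finding note:\n{raw_note.strip()}\n"
    )
```

The output is just a string, so it can be sent to whichever model you use and kept in version control alongside your other prompts.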

What to Avoid

Be cautious of tools claiming:

- "AI pentester"

- "automatic bug bounty hunter"

- "fully autonomous exploitation"

Most are interfaces calling the same models you can access directly, with added dependency risk.

Convenience today can become a limitation tomorrow.

The Bigger Insight

Large platforms don't need to steal ideas. They watch how builders use their ecosystems.

When thousands of users independently build similar workflows, that signals demand. Eventually the feature becomes native, just like AI summaries inside YouTube.

Innovation at the edge often becomes integration at the core.

Own Your Workflow

New AI wrapper tools look attractive with polished UIs and packaged features, but they are a trap of convenience. Don't depend on them.

Instead, invest time in building your own lightweight setup that queries model APIs directly. Keep your prompts private, curate your own context files, and understand exactly what data you are sending and receiving. That control is what allows you to explore deeper, test more freely, and avoid being rug-pulled by a startup's pricing change or pivot.
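A lightweight setup can be as small as a few functions. The sketch below builds a request for an OpenAI-style chat completions endpoint using only the standard library, and keeps building separate from sending so the exact payload can be inspected first. The base URL, model name, and helper names are placeholders and assumptions; check your provider's or local server's documentation for the real values.

```python
import json
import urllib.request


def build_chat_request(prompt: str, model: str, api_key: str, base_url: str) -> urllib.request.Request:
    """Build (but do not send) a chat-completions request so the payload can be inspected."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def extract_reply(response_json: dict) -> str:
    """Pull the assistant text out of an OpenAI-style chat completions response."""
    return response_json["choices"][0]["message"]["content"]


def send(request: urllib.request.Request, timeout: float = 60) -> str:
    """Send the request and return the reply text (performs a real network call)."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return extract_reply(json.load(resp))
```

Before calling `send`, printing `request.data` shows exactly what leaves your machine. Pointing `base_url` at a local server that speaks the same protocol keeps sensitive targets off third-party infrastructure entirely.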

Choose foundations that will keep evolving, and build your own methodology on top. When the platforms inevitably move downstream to swallow the wrappers, only the foundations, and the researchers who know how to wield them directly, will remain.