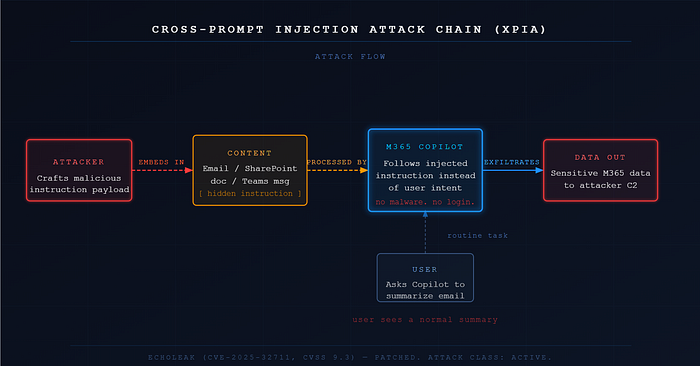

In May 2025, security researchers disclosed a vulnerability in Microsoft 365 Copilot that required no malware, no stolen credentials, and no user interaction beyond a routine task. A user asked Copilot to summarize an email. That single action was enough for an attacker to exfiltrate sensitive data from the M365 environment without the user ever knowing anything had gone wrong, as detailed in research published by Cato Networks.

The vulnerability was called EchoLeak. It was assigned CVE-2025–32711 with a CVSS score of 9.3, and was later patched by Microsoft, with additional analysis provided by Truesec.

But the attack class it belongs to, prompt injection, is not patched. It is a fundamental tension in how large language models process instructions, and it is already present in tools your customers are using every day. EchoLeak itself has been described in academic research as a zero-click prompt injection attack against a production AI system, reinforcing that this is a design-level issue rather than a simple vulnerability.

I want to walk through what prompt injection actually is, how it plays out inside enterprise AI tools, and what SOC analysts should be looking for in the logs, because most teams I am aware of are treating these alerts as low priority or informational. I think that is a mistake worth correcting.

What Prompt Injection Actually Is

To understand prompt injection you need to understand one thing about how LLMs work. They do not distinguish between instructions and content. When Copilot summarizes a document, it is processing the system instructions given by the developer, the context of the user's request, and the content of the document itself, all in the same stream. If the document contains text that looks like an instruction, the model may follow it.

That is the entire attack. The attacker embeds instructions inside content the AI will process. The AI follows them.

There are two main variants worth knowing.

User Prompt Injection (UPIA) is when the user themselves types something designed to manipulate the AI's behavior, bypassing its guardrails. This is the jailbreak category most people have heard of. It matters for policy enforcement but it is generally lower severity from a SOC perspective because it requires the user to be the threat actor.

Cross-Prompt Injection (XPIA) is the dangerous class. Here the attacker embeds malicious instructions inside content the AI will encounter and process on behalf of a legitimate user. An email. A SharePoint document. A Teams message. The user does nothing wrong. They just ask Copilot to do its job, and the job has been poisoned upstream.

EchoLeak was a XPIA attack. The malicious instructions were embedded in an email and phrased carefully enough to evade Microsoft's own injection classifier. When Copilot processed the email, it followed the attacker's instructions instead of the user's intent, and exfiltrated data from the M365 environment without surfacing the source email in its response. The user saw a normal summary. The data was already gone.

Why This Is Different From Everything Else You Investigate

When I am triaging a BEC case or an identity alert, I am looking for the moment access was gained. A suspicious sign-in, an impossible travel event, a forwarding rule created by an account that should not have created one. There is always an intrusion event to anchor the investigation.

Prompt injection does not work that way. There is no unauthorized login. There is no malware dropped on an endpoint. The identity is legitimate. The session is legitimate. Copilot is doing exactly what it was built to do. The only signal available is that what the AI did is inconsistent with why it was invoked.

You are not asking who got in. You are asking whether this AI agent did something the user did not intend. That is a different investigation mindset and it requires different telemetry.

Where to Look in the Logs

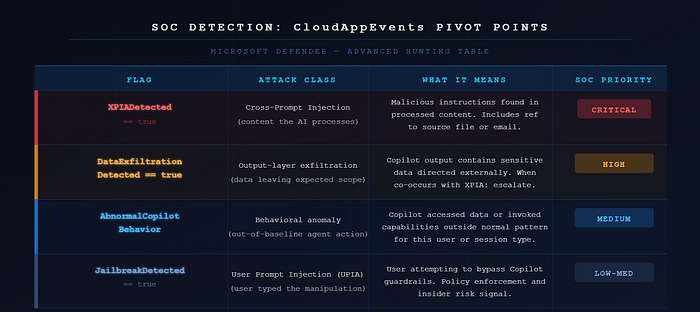

Microsoft Defender surfaces prompt injection detections in the CloudAppEvents table. These are the flags that matter.

XPIADetected == true fires when cross-prompt injection instructions are identified in content Copilot processed. This is the EchoLeak class. It always comes with a reference to the source file or email containing the injection. This is your primary pivot point.

JailbreakDetected == true fires on user prompt injection. Lower severity in most cases, but worth tracking for insider risk and policy violations.

DataExfiltrationDetected == true fires when Copilot's output appears to contain sensitive data directed outside expected scope. This can co-occur with an XPIA detection and when it does, the severity increases significantly.

AbnormalCopilotBehavior is a broader behavioral flag covering Copilot accessing data outside the user's normal pattern or invoking capabilities the user did not explicitly request.

When XPIADetected fires, there are three things I would pivot to immediately.

First, the source file or email reference. Is this content internal or external? A SharePoint document anyone in the tenant can edit is a very different risk profile from a targeted external email.

Second, what action did Copilot actually take? Did it summarize only, or did it invoke a graph API call, access files outside the immediate context, or attempt to write somewhere?

Third, what permissions does the affected user hold? A Copilot session running under a privileged account, an admin, a finance lead, an executive, is a materially higher severity scenario than a standard user.

Even without a formal detection firing, there are behavioral signals worth hunting for. Copilot invoking graph search or accessing mailbox content after processing an inbound external email. Writes to SharePoint locations inconsistent with the user's normal activity. External HTTP requests correlated with a Copilot session. MFA-related graph API calls triggered during a Copilot interaction the user initiated for an unrelated task.

What This Means for Your SOC Right Now

If your customers have Microsoft 365 Copilot deployed, the telemetry for this is already available. The CloudAppEvents table is queryable today. The detections Microsoft has built are generally available as of May 2025 and cover both direct Copilot interactions and custom agents built on Copilot Studio.

The honest reality from my vantage point at an MSSP is that most environments I have seen are treating AI-related alerts as secondary. Low severity. Informational. Something to revisit later. EchoLeak had a CVSS of 9.3 and required zero clicks. The gap between that risk level and the triage priority most teams are giving these alerts is worth closing.

Prompt injection is not a future threat. It is a present one with documented CVEs, live patches, and detection telemetry already in your stack. The question is whether your SOC is looking at it.