In cryptocurrency, there is an almost sacred formula: "Don't trust, verify." Every user can run their own node, check the network's rules themselves, and depend on neither the development team nor any central authority.

But this formula has a serious limitation. No matter how carefully I audit the code, I won't catch a vulnerability that takes a genuine hacker to find — I simply don't have the expertise. So I'm forced to trust anyway: to trust the people who check everything on my behalf and tell me what they find.

On May 5, 2026, the Bitcoin Core developers disclosed vulnerability CVE-2024-52911. It affected versions of Bitcoin Core from 0.14.0 onward and was fixed in 29.0. The bug allowed a miner to remotely crash other people's nodes.

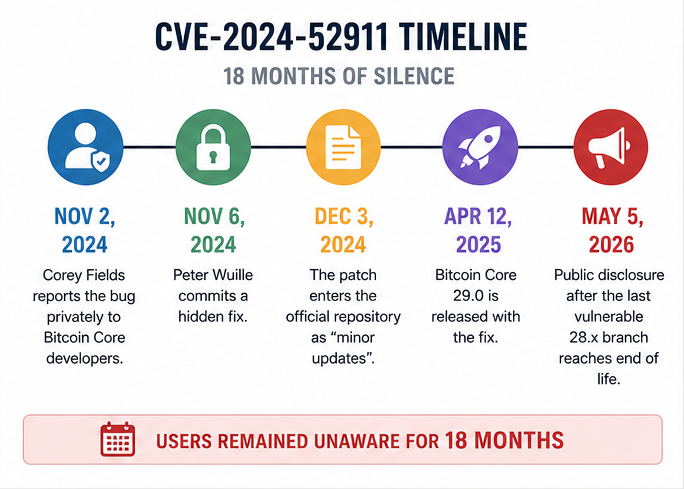

But the most striking detail here is how long the bug had been known. Cory Fields privately reported it to the developers on November 2, 2024. By November 6, 2024, Pieter Wuille had committed a fix that was not yet public. On December 3, 2024, the patch appeared in the official repository without any public announcement, described merely as "minor updates." On April 12, 2025, Bitcoin Core 29.0 shipped with the fix included. The public disclosure came only on May 5, 2026, after the last vulnerable branch, 28.x, reached end of support.

For a year and a half, the developers of the most widely trusted Bitcoin client knew about a vulnerability that the node operators running it did not. This is no isolated incident: developers across many crypto projects have been following the same pattern for years.

I want to walk through other examples of the same thing, and ultimately answer the question: how safe is it, really, to keep your savings in cryptocurrency?

Bitcoin: How a DoS Bug Turned Out to Be an Inflation Bug

In September 2018, Bitcoin Core rushed out version 0.16.3. Users were told it patched a denial-of-service vulnerability.

Two days later, it turned out that was only half the story. In versions 0.15.0 through 0.16.2, the same flaw could let a miner inflate the bitcoin supply by mining a block containing a transaction that spent the same output twice, pocketing extra BTC. Developers acknowledged that they had initially disclosed only the less dangerous DoS component, deliberately holding back the full description of the inflation threat to give miners, businesses, and other critical participants time to update.
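The check that went missing is conceptually simple: a transaction must not list the same previous output (a transaction ID plus an output index) among its inputs more than once. The sketch below is a simplified Python model, not Bitcoin Core's code; the `OutPoint` type and the `has_duplicate_inputs` helper are hypothetical names chosen for illustration. Bitcoin Core performs the equivalent check in C++ inside `CheckTransaction`; according to the project's later disclosure, CVE-2018-17144 arose because that check was skipped during block validation as a performance optimization.

```python
from typing import NamedTuple, Sequence


class OutPoint(NamedTuple):
    """Reference to a previous transaction output (simplified model)."""
    txid: str
    vout: int


def has_duplicate_inputs(inputs: Sequence[OutPoint]) -> bool:
    """Return True if any previous output is spent more than once
    within the same transaction."""
    seen: set[OutPoint] = set()
    for outpoint in inputs:
        if outpoint in seen:
            return True
        seen.add(outpoint)
    return False


# A well-formed transaction: two distinct outputs are spent.
ok_tx = [OutPoint("aa" * 32, 0), OutPoint("aa" * 32, 1)]

# The CVE-2018-17144 pattern: the same output appears twice as an input.
bad_tx = [OutPoint("aa" * 32, 0), OutPoint("aa" * 32, 0)]

print(has_duplicate_inputs(ok_tx))   # False
print(has_duplicate_inputs(bad_tx))  # True
```

A set keeps the check linear in the number of inputs. A node that skips it for speed must rely on later validation steps to catch the duplicate, which is exactly what failed here.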

In the worst case, the bug would have enabled an attack not on any individual wallet but on Bitcoin's core promise: a fixed supply. If a bug in the client can break the 21 million rule, the entire economic mythology of Bitcoin crumbles.

The vulnerability was never exploited. But CVE-2018-17144 illustrates the ethical dilemma perfectly. On one hand, full immediate disclosure could have handed attackers a ready-made playbook. On the other, users were effectively not told the truth about how critical the update was.

Zcash: Invisible Counterfeit Coins

The Zcash story was even more unsettling, because this is a privacy coin where not everything is visible on the blockchain. In 2018, the team discovered a vulnerability in the cryptography underlying its zero-knowledge proofs. Before it was fixed, an attacker could have created fake ZEC in a way that no one would have been able to detect. With a transparent chain, an inflation bug is at least theoretically visible through balance analysis; a privacy system makes that kind of verification far harder.
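On a transparent chain such as Bitcoin, that balance analysis can be sketched briefly: the subsidy schedule fixes the maximum number of coins that can exist at any block height, and the total value of all unspent outputs must never exceed it. The sketch below assumes Bitcoin's schedule (a 50 BTC subsidy, halved every 210,000 blocks using integer arithmetic in satoshis) and ignores transaction fees, which move existing coins rather than create new ones. The function names are hypothetical; a real audit might take the observed total from Bitcoin Core's `gettxoutsetinfo` RPC (its `total_amount` field), while here it is simply a parameter.

```python
HALVING_INTERVAL = 210_000          # blocks between subsidy halvings
INITIAL_SUBSIDY = 50 * 100_000_000  # 50 BTC expressed in satoshis


def block_subsidy(height: int) -> int:
    """Subsidy in satoshis for the block at `height` (integer halving)."""
    halvings = height // HALVING_INTERVAL
    if halvings >= 64:
        return 0
    return INITIAL_SUBSIDY >> halvings


def max_supply_at_height(height: int) -> int:
    """Upper bound on satoshis that can exist after blocks 0..height."""
    total = 0
    era_start = 0
    while era_start <= height:
        subsidy = block_subsidy(era_start)
        if subsidy == 0:
            break  # the subsidy has reached zero; no new coins after this
        era_end = min(height, era_start + HALVING_INTERVAL - 1)
        total += subsidy * (era_end - era_start + 1)
        era_start += HALVING_INTERVAL
    return total


def supply_looks_sound(observed_utxo_total: int, height: int) -> bool:
    """An observed UTXO total above the schedule's cap signals inflation.

    Passing this check does not prove soundness: lost, burned, or
    unclaimed coins keep the real total below the cap.
    """
    return observed_utxo_total <= max_supply_at_height(height)


# The "21 million" cap is really slightly less, measured in satoshis:
print(max_supply_at_height(10_000_000))  # 2099999997690000
```

In a shielded pool, individual amounts are encrypted, so this straightforward sum over all outputs is not available.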

Zcash fixed the issue in the Sapling upgrade, activated on October 28, 2018, and disclosed it publicly only months later. In the official disclosure, the team wrote plainly: "eleven months ago we discovered a vulnerability" — and acknowledged that, before the fix, an attacker could have minted counterfeit Zcash without detection.

The case for silence here is easy to understand. Disclosing the flaw before it was patched would have handed someone a recipe for destroying Zcash. But the flip side is just as clear: for nearly a year, users held an asset in which invisible counterfeiting was theoretically possible, and they had no idea.

This points to a real paradox:

- The more serious the vulnerability, the stronger the argument for secrecy.

- But the greater the secrecy, the more it undermines the idea of verifiability. (What is the point of "verify everything" if I lack the skills to understand what I'm looking at, and the qualified auditors won't tell me what they've found?)

Monero: The Truth Hidden Behind a Non-Existent DoS

In 2017, Monero ran into a similar threat. The team discovered a critical bug in CryptoNote, the base protocol powering Monero and a number of other privacy coins. The vulnerability allowed the creation of an unlimited number of coins in a way that would have been invisible to any observer who didn't know exactly what to look for.

The patch in Monero was introduced covertly. What's more, the release was publicly explained as protection against a RingCT denial-of-service attack — one that, by the team's own subsequent admission, never actually existed. It was a cover story, constructed to get exchanges and mining pools to update without revealing the real, inflationary risk.

For Monero itself, the story ended well: the team reported that it had checked the blockchain and found no signs of exploitation. The vulnerability, however, extended beyond Monero. After Monero updated, the team began notifying other CryptoNote-based projects, and one of them, Bytecoin, was soon attacked: 693 million additional coins were created on its chain.

This opens up another layer of the problem with keeping vulnerabilities secret. When code is shared across dozens of projects, announcing a discovered threat in one place can become a tip-off for attackers targeting another. Developers have to decide not just when to tell their own users, but which competing projects to warn, and in what order — knowing that a competitor who gets the information first could potentially use it against one who hasn't yet received it.

Monero Again: The Bug That Let Attackers Burn Other People's Money

In 2018, Monero faced a different, less fundamental — but highly instructive — incident known as the burning bug. It didn't allow anyone to print XMR from thin air or break the supply schedule. But it did allow an attacker to cause real financial harm to exchanges, merchants, and other services. The bug made it possible to send funds in such a way that some of the received outputs became unspendable.

A swapping service like rabbit.io could have been vulnerable to such an attack.

Here is how cryptocurrency swaps work on rabbit.io:

- A user sends cryptocurrency, for example XMR, to the deposit address displayed in the order form;

- Our automated system detects the incoming payment and sends the requested cryptocurrency to the payout address specified by the user.

Our service did not exist at the time, but had it existed, the attack could have looked like this:

- An attacker creates an order to swap XMR for BTC;

- The attacker sends XMR and receives BTC;

- We have paid out the BTC, but most of the XMR we received is unspendable;

- The attacker gains nothing directly, but can drain the businesses that accept XMR.
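In the 2018 burning bug, the attacker made several outputs share the same one-time public key. In Monero, each one-time key yields exactly one key image when spent, so once one of those outputs is spent the others never can be; a service that credits every output separately pays out for coins it cannot use. The sketch below shows one defensive crediting policy for a deposit handler. The `IncomingOutput` type and the `creditable_amount` function are hypothetical, and keeping only the largest output per key is an illustrative assumption, not a description of the Monero team's actual patch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IncomingOutput:
    """A received output as a wallet might see it (simplified model)."""
    one_time_key: str  # one-time public key (placeholder strings in the demo)
    amount: int        # amount in piconero (1 XMR = 10**12 piconero)


def creditable_amount(outputs: list[IncomingOutput]) -> int:
    """Sum only the outputs that can actually be spent.

    Outputs sharing a one-time key produce the same key image, so at most
    one of them is spendable. Keep the largest per key, ignore the rest.
    """
    best_per_key: dict[str, int] = {}
    for out in outputs:
        current = best_per_key.get(out.one_time_key, 0)
        best_per_key[out.one_time_key] = max(current, out.amount)
    return sum(best_per_key.values())


# Attack pattern: three 1 XMR outputs reuse one key; only one can be spent.
deposit = [
    IncomingOutput("k1", 1_000_000_000_000),
    IncomingOutput("k1", 1_000_000_000_000),
    IncomingOutput("k1", 1_000_000_000_000),
]
print(creditable_amount(deposit))  # 1000000000000, not 3000000000000
```

A swap service using this policy would credit the order only with the creditable amount and pay out accordingly, so a deposit built this way cannot extract more than the service is actually able to spend.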

The Monero team prepared a quiet patch and directly notified the exchanges and known XMR merchants they could reach. In the post-mortem, the developers honestly acknowledged that this approach inevitably left out organizations they had no existing contact with, and created an impression of privileged access to information.

This is the practical reality of selective disclosure: some market participants will always learn about a risk before others. That asymmetry is itself a security problem.

Stellar: A Hidden Bug That Had Already Been Exploited

The Stellar story differs from most of the others because the bug wasn't just silently present, unknown to anyone but the developers. It had already been used.

In 2017, an attacker exploited a concurrency flaw in the Stellar protocol and created 2.25 billion XLM — roughly $10 million at the time, and about a quarter of all tokens in circulation. According to Messari, the newly minted XLM was transferred to exchanges and presumably sold.

The Stellar Development Foundation later burned an equivalent quantity of XLM from its own reserves to prevent supply dilution. But it made no public announcement — until Messari surfaced the incident in 2019, two years after the fact. In response, Stellar representatives said they had mentioned the bug in their protocol update release notes. Technically, that's not complete silence. But a quiet entry in technical release notes that the market didn't learn about until a Messari investigation two years later is, in practice, silence.

This case is particularly uncomfortable for the industry. You can't say, "We kept the vulnerability secret so no one could exploit it." Someone already had. The silence did not protect users from an attack; it protected developers from scrutiny.

Ethereum Geth: How a Silent Patch Split the Network

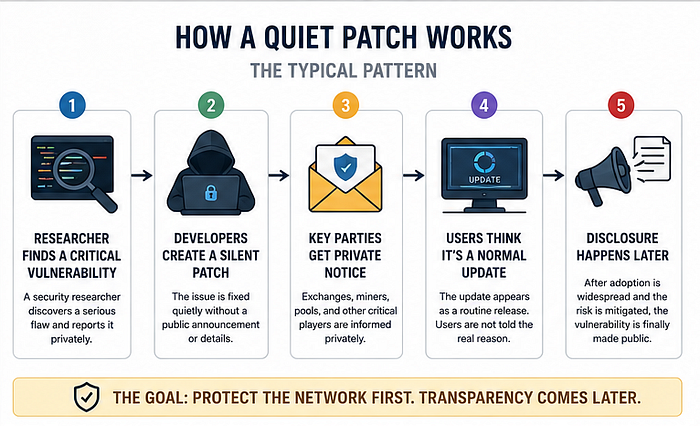

Geth, the most widely used Ethereum client, has essentially formalized the practice of silent patching. The team's documentation states explicitly that for vulnerabilities threatening the health of the Ethereum mainnet, it reserves the right to quietly ship fixes in new releases without disclosing the vulnerabilities themselves. The reasoning is straightforward: node operators may go weeks or months without updating, and if you spell out exactly which bug a release fixes, someone may attempt to exploit it faster than most nodes can be upgraded.

But in August 2021, this logic backfired. Geth patched CVE-2021-39137, releasing the fix on August 24 without disclosing details. Someone, however, figured out what had been fixed, and on August 27 the vulnerability was exploited, causing a portion of older Geth nodes to fork off from the main chain.

This is the other edge of the secrecy blade. Say too much, and you may accelerate an attack. Say too little, and some operators won't understand that updating is urgent. The network can end up with exactly what it was trying to avoid: active exploitation of the vulnerability.

Polygon: $24 Billion at Risk, Real Losses Incurred

In December 2021, Polygon faced the same dilemma. Security researchers reported a critical vulnerability in the Polygon PoS genesis contract — one that could allow an attacker to drain approximately $24 billion worth of MATIC tokens. The team decided to proceed quietly: as they later put it, "given the nature of this upgrade, it needed to be executed without drawing too much attention." Validators and full-node operators were notified, and the network upgraded quickly.

But even a fast response wasn't fast enough to close the window entirely. Before the upgrade took effect, an attacker managed to steal 801,601 MATIC — just under $2 million. The developers confirmed that the Polygon Foundation would cover the loss. The researchers who had reported the vulnerability were paid approximately $3.46 million in bounty rewards.

The team didn't try to bury the issue, but users learned the full scale of what had nearly happened only after it was over. Two reasons drove the approach: first, the priority was to act, not to deliberate; second, before the problem was resolved, every additional person who knew about it was someone who could potentially abuse that knowledge.

Perhaps this is what a reasonable compromise looks like: don't disclose an attack before it's patched, but don't turn the fix into a secret that lasts months or years either.

Are Developers Protecting Users, or Deceiving Them?

Sometimes secrecy genuinely saves the network. Without a silent fix, Zcash might have suffered invisible inflation. Without a covert patch, Monero could have faced undetectable counterfeiting. Without Polygon's swift, behind-closed-doors action, the potential damage to that network would have been incomparably worse.

But secrecy isn't free. It creates tiers of insiders and outsiders. It gives an advantage to whoever the team manages to warn privately. It reduces node operators' urgency to update when a release looks like routine maintenance. And it undermines one of the most important psychological foundations of using cryptocurrencies — the sense that the rules of the game are equally known to everyone at the same time.

That sense, it turns out, is an illusion. Most of these stories follow the same arc: a quiet patch, a long silence, and then the admission that all along, the security of the network had been resting on trust in a small group of people who knew more than everyone else.