Replacing the last human gate with assurance scores and environmental constraints. Part 5 of the Assisted Continuous Assurance series — rethinking AppSec from first principles

Deployment approval is the last surviving human gate in most modernized AppSec programs. Teams that have automated scanning, adopted CI/CD, and shifted left on testing still route their final release decision through a human. Why? Because nobody has built a better mechanism for answering the question: "is this service safe enough to go to production?"

In practice, this usually looks like a security engineer reviewing your app against a checklist of tests, then approving your request and stamping it release-ready. The request may also sit in a queue for days before anyone picks it up, or it needs to be 'escalated' for rush-hour service. Does that make the deployment safer? Not necessarily. It definitely makes the deployment slower.

Let's continue our design (if you have been following the series, this is the design introduced in Part 1) and fix this. Once our Validation Core (aka the LLM Brain) produces its output, that information flows into what I call the Output Funnel. The Output Funnel replaces deployment approval with a continuous assurance score and graduated environmental constraints. Yes, no humans are involved here: the environment responds proportionally to the risk signal. Here is the flow within the flow:

Every app gets an assurance score → environmental constraints are applied based on that score → risk acceptance options are available (if an acceptance expires, the constraints flood back in) → the score and constraints feed into the business planning process and are surfaced to leadership

The Assurance Score

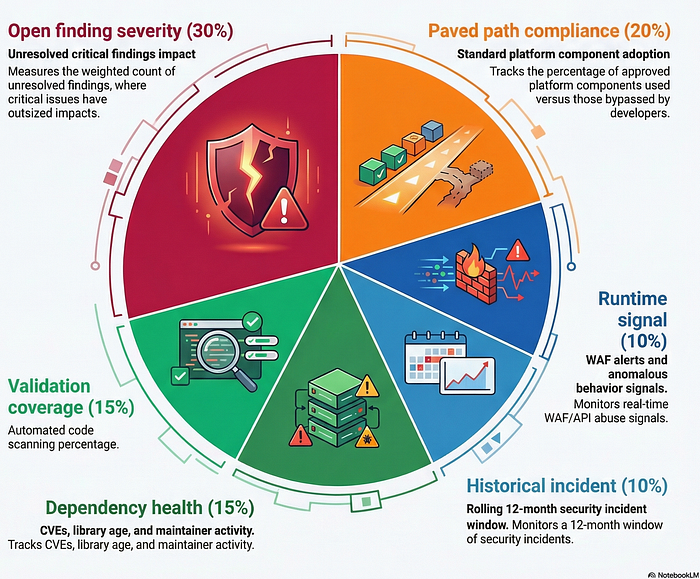

Every service (an app, or a collection of apps/services) gets an assurance score from 0 to 100. Think of it as a credit score for security posture: the higher the score, the better. My suggestion is to calculate this score from six weighted factors:

Note: This assumes that Paved Paths are available and usable. If they are not, their weight can be redistributed across the remaining five factors.
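To make the weighting and the redistribution concrete, here is a minimal sketch. It assumes each factor is already scored on a 0–100 scale and that a missing factor's weight is spread proportionally over the others (the note above only says the weight "can be redistributed", not how). Apart from `paved_paths`, the factor names and all weights are hypothetical placeholders, not the six factors from the figure.

```python
from collections.abc import Mapping


def assurance_score(
    factor_scores: Mapping[str, float],
    weights: Mapping[str, float],
    unavailable: frozenset[str] = frozenset(),
) -> float:
    """Combine per-factor scores (each assumed 0-100) into a 0-100 assurance score.

    Factors listed in `unavailable` (e.g. paved paths when none exist) are dropped,
    and their weight is redistributed proportionally across the remaining factors.
    """
    active = {name: w for name, w in weights.items() if name not in unavailable}
    total_weight = sum(active.values())
    if total_weight <= 0:
        raise ValueError("at least one factor must remain with a positive weight")
    # Dividing by the remaining total renormalizes the weights so they sum to 1.
    score = sum(factor_scores[name] * w / total_weight for name, w in active.items())
    # Clamp to the 0-100 range and round for display.
    return round(min(100.0, max(0.0, score)), 1)


# Hypothetical weights: "paved_paths" is implied by the note above; the other
# five names are placeholders, not the article's actual factors.
WEIGHTS = {"paved_paths": 20, "factor_1": 16, "factor_2": 16,
           "factor_3": 16, "factor_4": 16, "factor_5": 16}
scores = {"paved_paths": 40, "factor_1": 80, "factor_2": 80,
          "factor_3": 80, "factor_4": 80, "factor_5": 80}

print(assurance_score(scores, WEIGHTS))                              # 72.0
print(assurance_score(scores, WEIGHTS, frozenset({"paved_paths"})))  # 80.0
```

Renormalizing keeps the score on the same 0–100 scale whether five or six factors are in play, so services with and without paved paths stay comparable.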

This score is recomputed on a regular cadence and also updates whenever findings are created, resolved, or suppressed, so it never goes stale between runs. No human needs to check the score before a deployment: the deployment pipeline reads it directly and makes the release decision automatically.

Graduated Constraints: The Environment Is the Gate

Since one of our key design goals is to make the environment both the gate and the enforcer, graduated constraints are the primary enforcement mechanism we will focus on.

When the assurance score drops, the system doesn't block the deployment. It constrains the deployment environment proportionally. The developer can always ship; we never block them from releasing their changes. The only question is how much blast radius the system allows.

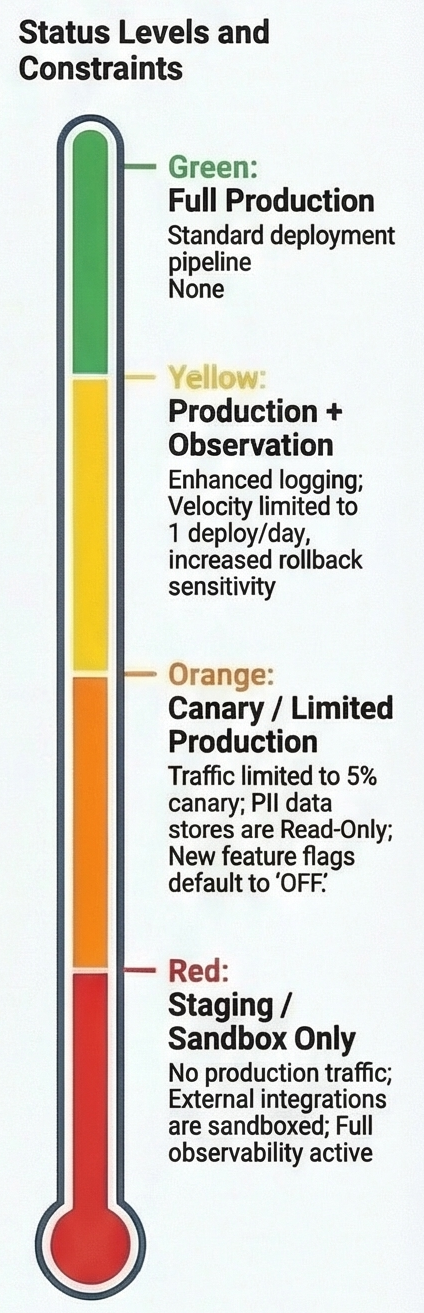

Mapping it out: 90–100 → Green, 70–89 → Yellow, 50–69 → Orange, below 50 → Red.
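The band mapping is simple enough to express directly. This sketch treats each band by its lower bound, so a fractional score such as 89.5 lands in Yellow; that handling of non-integer scores is an assumption, since the bands above are written as integer ranges. `Tier` and `tier_for` are illustrative names.

```python
from enum import Enum


class Tier(Enum):
    GREEN = "Green"
    YELLOW = "Yellow"
    ORANGE = "Orange"
    RED = "Red"


def tier_for(score: float) -> Tier:
    """Map a 0-100 assurance score to its constraint tier.

    Lower bounds follow the bands: 90+ Green, 70+ Yellow, 50+ Orange,
    anything lower Red. Fractional scores fall into the band below the next bound.
    """
    if not 0 <= score <= 100:
        raise ValueError(f"assurance score out of range: {score}")
    if score >= 90:
        return Tier.GREEN
    if score >= 70:
        return Tier.YELLOW
    if score >= 50:
        return Tier.ORANGE
    return Tier.RED


# Example: print the tier for a few sample scores.
for s in (97, 90, 89.5, 70, 64, 12):
    print(s, tier_for(s).value)
```

In the pipeline, the tier would only select which environmental constraints to attach; in keeping with the design, nothing here refuses the deployment itself.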

The same principle applies again. A security engineer never gets a request to "approve this deploy." Instead, they see a dashboard showing which services are constrained and which risk patterns are driving those constraints. They work on patterns (or simply note them) rather than individual deploys, so one policy update can resolve constraints across dozens of services.
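The pattern-first view can be sketched as a simple grouping: take every constrained service, bucket it under each risk pattern pulling its score down, and rank patterns by how many services they affect. The `ServiceStatus` record and the pattern names are hypothetical; a real dashboard would pull them from wherever findings and scores are stored.

```python
from collections import defaultdict
from collections.abc import Iterable
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceStatus:
    name: str
    tier: str                          # "Green", "Yellow", "Orange", or "Red"
    driving_patterns: tuple[str, ...]  # risk patterns pulling the score down


def constrained_by_pattern(services: Iterable[ServiceStatus]) -> list[tuple[str, list[str]]]:
    """Group constrained (non-Green) services by risk pattern, most widespread first."""
    groups: dict[str, list[str]] = defaultdict(list)
    for svc in services:
        if svc.tier == "Green":
            continue  # unconstrained services are left off the view
        for pattern in svc.driving_patterns:
            groups[pattern].append(svc.name)
    # sorted() is stable, so patterns with equal counts keep first-seen order.
    return sorted(groups.items(), key=lambda item: len(item[1]), reverse=True)


fleet = [
    ServiceStatus("checkout", "Orange", ("outdated-base-image", "missing-authz-check")),
    ServiceStatus("search", "Yellow", ("outdated-base-image",)),
    ServiceStatus("profile", "Green", ()),
]
for pattern, names in constrained_by_pattern(fleet):
    print(f"{pattern}: {len(names)} service(s) -> {', '.join(names)}")
```

The top entry is where a single policy or paved-path fix buys the most relief.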

Constraints Tighten Over Time

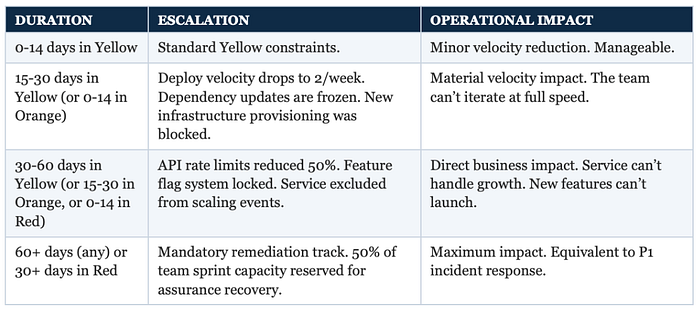

When I think about why scores like this have so far failed to make their mark across our industry, I suspect the bulk of the problem is that they go stale after a while. So let's make the score dynamic in our design, and let the constraints tied to it tighten over time.

Why? Because a team can learn to live in Yellow indefinitely if the constraints are mild. The accountability mechanism is that constraints escalate automatically when the score doesn't recover.
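One way to encode "constraints escalate when the score doesn't recover" is to let the effective tier drift one step tighter for every fixed period a service stays below Green. This is a hypothetical sketch: the 30-day step, the reset-on-return-to-Green rule, and the function name are assumptions, not the schedule described next.

```python
from datetime import date

# Tiers ordered from least to most constrained.
TIERS = ["Green", "Yellow", "Orange", "Red"]

# Hypothetical: constraints tighten one tier for every 30 days without recovery.
ESCALATION_STEP_DAYS = 30


def effective_tier(current_tier: str, below_green_since: date, today: date) -> str:
    """Return the tier whose constraints apply, after time-based escalation.

    `below_green_since` is the date the score last dropped out of Green; a
    recovery to Green is assumed to reset it.
    """
    if current_tier == "Green":
        return "Green"
    days_unrecovered = (today - below_green_since).days
    steps = max(0, days_unrecovered) // ESCALATION_STEP_DAYS
    # Escalate from the current tier, but never past Red.
    index = min(TIERS.index(current_tier) + steps, len(TIERS) - 1)
    return TIERS[index]


start = date(2024, 1, 1)
print(effective_tier("Yellow", start, date(2024, 1, 20)))  # Yellow (19 days)
print(effective_tier("Yellow", start, date(2024, 2, 15)))  # Orange (45 days)
print(effective_tier("Yellow", start, date(2024, 4, 1)))   # Red (91 days, capped)
```

A team parked in Yellow therefore cannot stay comfortable there: the constraints it lives with keep getting heavier until the score itself recovers.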

I asked Claude to whip up a sample constraint-tightening schedule for our design, and here is the approach it presented:

Yes, I take pride in making the loop hard to escape and hard to find a loophole in. But these constraints matter, IMHO, because they are genuine risk containment. A service with unresolved critical vulnerabilities should not be scaling to handle more traffic, since that increases its blast radius. A service with unvetted dependencies (think OSS) should not be adding more. The constraints are logically connected to the risk, which makes them defensible and resistant to political override.
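The two examples above can be written as checks that tie each constraint to the risk that justifies it. This sketch holds back specific actions (scaling up, adding dependencies) rather than the deployment as a whole, which matches the "constrain, don't block" principle. The `ServiceRisk` and `ChangeRequest` shapes and the messages are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ServiceRisk:
    open_critical_vulns: int
    unvetted_dependencies: int


@dataclass
class ChangeRequest:
    scale_up: bool = False      # requests more replicas or capacity
    new_dependencies: int = 0   # third-party packages being added


def constraint_violations(risk: ServiceRisk, change: ChangeRequest) -> list[str]:
    """Return the reasons parts of a change are held back; empty means none apply."""
    reasons = []
    # Scaling a service with unresolved critical vulnerabilities widens blast radius.
    if change.scale_up and risk.open_critical_vulns > 0:
        reasons.append("scale-up held: unresolved critical vulnerabilities")
    # Adding dependencies while existing ones are unvetted compounds supply-chain risk.
    if change.new_dependencies > 0 and risk.unvetted_dependencies > 0:
        reasons.append("new dependencies held: existing dependencies are unvetted")
    return reasons


risky = ServiceRisk(open_critical_vulns=2, unvetted_dependencies=3)
print(constraint_violations(risky, ChangeRequest(scale_up=True, new_dependencies=1)))
print(constraint_violations(ServiceRisk(0, 0), ChangeRequest(scale_up=True)))
```

Because every message names the risk behind it, the constraint explains itself, which is what makes it hard to argue away.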

Automated Risk Acceptance

This is where we get to reap the fruits of our labor — Automation!!

There will be edge cases. Sometimes a team needs to deploy with a known risk. The traditional model routes this through an approval chain: the developer writes a justification, the manager approves, the director approves, and maybe the CISO approves. That is three to five days, sometimes weeks, of queue time for a decision that could be automated.

In our design, there's no approval chain! The developer declares a risk acceptance through the system, specifying the finding, the justification, and the expected remediation timeline. The system applies a time-bound acceptance: 30 days for high severity, 90 days for medium. While the acceptance is active, the finding is excluded from the assurance score calculation.
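Here is a minimal sketch of a time-bound acceptance, using the 30- and 90-day windows stated above. The record fields mirror what the developer declares; the class and function names are hypothetical, and since no window is given for other severities (such as critical), the sketch rejects them rather than guessing.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Windows from the text: 30 days for high severity, 90 days for medium.
ACCEPTANCE_WINDOW_DAYS = {"high": 30, "medium": 90}


@dataclass(frozen=True)
class RiskAcceptance:
    finding_id: str
    severity: str
    justification: str
    expected_remediation: date
    declared_on: date

    def __post_init__(self) -> None:
        # Only high and medium windows are defined; other severities are rejected.
        if self.severity not in ACCEPTANCE_WINDOW_DAYS:
            raise ValueError(f"no acceptance window defined for severity {self.severity!r}")

    @property
    def expires_on(self) -> date:
        return self.declared_on + timedelta(days=ACCEPTANCE_WINDOW_DAYS[self.severity])

    def is_active(self, today: date) -> bool:
        # The acceptance lapses on its expiry date; there is no renewal step.
        return today < self.expires_on


def scored_findings(open_findings: list[str], acceptances: list[RiskAcceptance],
                    today: date) -> list[str]:
    """Open findings that count toward the assurance score today."""
    accepted = {a.finding_id for a in acceptances if a.is_active(today)}
    return [f for f in open_findings if f not in accepted]


acceptance = RiskAcceptance("F-101", "high", "vendor patch pending",
                            expected_remediation=date(2024, 3, 20),
                            declared_on=date(2024, 3, 1))
print(acceptance.expires_on)                                                  # 2024-03-31
print(scored_findings(["F-101", "F-102"], [acceptance], date(2024, 3, 15)))  # ['F-102']
print(scored_findings(["F-101", "F-102"], [acceptance], date(2024, 3, 31)))  # ['F-101', 'F-102']
```

Feeding the output of `scored_findings` into the score computation is all it takes for an expired acceptance to pull the finding, and its constraints, back in.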

So wait, we allow stragglers then? Not exactly. The key mechanism that still holds the door for us is that the acceptance auto-decays. At expiration, the finding re-enters the score and the constraints reactivate. There's no renewal process: fix it or declare a new acceptance. And the bonus? Chronically re-accepted findings are auto-flagged as systemic issues for paved path remediation.
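Flagging chronic re-acceptance can be as simple as counting acceptances per finding type across the whole acceptance log. This is a hypothetical sketch: keying on a finding type (such as a scanner rule ID) rather than a single finding, and the threshold of three, are both assumptions about what "chronically re-accepted" means.

```python
from collections import Counter

# Hypothetical threshold: acceptances of the same finding type that mark it systemic.
CHRONIC_THRESHOLD = 3


def systemic_candidates(acceptance_log: list[tuple[str, str]],
                        threshold: int = CHRONIC_THRESHOLD) -> list[str]:
    """Return finding types accepted at least `threshold` times, sorted by name.

    Each log entry is (service, finding_type); counting across services surfaces
    problems that a paved path could fix once for everyone.
    """
    counts = Counter(finding_type for _service, finding_type in acceptance_log)
    return sorted(ftype for ftype, n in counts.items() if n >= threshold)


log = [
    ("checkout", "weak-tls-config"),
    ("checkout", "weak-tls-config"),   # re-accepted after the first one expired
    ("search", "weak-tls-config"),
    ("search", "outdated-base-image"),
]
print(systemic_candidates(log))  # ['weak-tls-config']
```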

All of this needs to be tracked somewhere. Every acceptance is visible in a governance dashboard with aging metrics, which is how leadership gets visibility into it.

But how would it really work in the end? Where's the accountability?

How would we ensure engineering leadership actually prioritizes security health?

Here's the answer: plug assurance scores into the existing planning process. At quarterly planning, the system automatically surfaces each team's assurance score distribution alongside velocity, reliability, and other engineering health metrics (this may be tough to build out, depending on the size of the org). Teams below a threshold get a percentage of their capacity auto-allocated to recovery work.
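The auto-allocation step might look like the following sketch. The threshold is hypothetical (chosen here to match the Yellow boundary), the 30% share mirrors the 70/30 split used as an example in this article, and reducing a team's score distribution to its median is an assumption; a real program might use a percentile or a graduated share instead.

```python
from statistics import median

# Hypothetical policy values: no threshold is specified, and the 30% share
# mirrors the 70/30 example used in this article.
SCORE_THRESHOLD = 70
RECOVERY_SHARE = 0.30


def plan_capacity(service_scores: list[float], total_points: int) -> dict[str, int]:
    """Split a team's quarterly capacity between feature work and recovery work.

    The team-level score is taken as the median of its services' scores (an assumption).
    """
    team_score = median(service_scores)
    recovery = round(total_points * RECOVERY_SHARE) if team_score < SCORE_THRESHOLD else 0
    return {"features": total_points - recovery, "security_recovery": recovery}


print(plan_capacity([55, 62, 88], total_points=100))  # {'features': 70, 'security_recovery': 30}
print(plan_capacity([91, 78, 95], total_points=100))  # {'features': 100, 'security_recovery': 0}
```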

The framing matters. "Your team has 70% capacity for features this quarter because 30% is allocated to security health recovery" is fundamentally different from "the security team is asking you to fix these vulnerabilities." One is an engineering health metric. The other is a cross-team request that gets deprioritized.

Together, the environmental constraints and the planning integration create a self-reinforcing incentive: the fastest way to maximize feature velocity is to maintain a healthy assurance score.

The Takeaway

There's something almost philosophical about removing the last human gate from the deployment process. It forces you to ask: what was that gate actually protecting? If a human can be replaced by a score and a set of automated constraints without any loss in security — and in fact with a gain, because the system is faster, more consistent, and never takes a day off — then the gate was never about security. It was about comfort. The comfort of knowing someone looked at it and signed off on it.

The Output Funnel replaces that comfort with something better: a system that is always looking at it. A score that updates continuously. Constraints that match the risk. Escalation that creates real consequences over time. And a planning integration that makes security health feel like what it actually is — an engineering problem, not a security ask.

The fastest path to shipping is keeping your score green. And that, I think, is exactly the right incentive.

Next: Part 6 — Feedback Loops. The last stage. The five loops that make the entire system smarter every month, and the metric that tells you whether it's working.

Read the series design: Assisted Continuous Assurance