Introduction: The Growing Challenge of Software Vulnerabilities

Modern software is evolving faster than ever — but so are security threats.

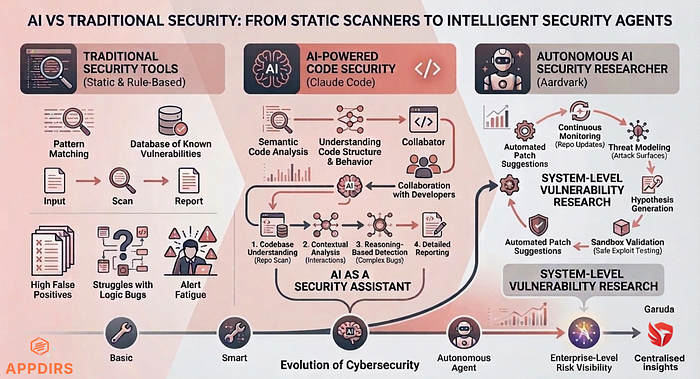

Today's applications are built on cloud infrastructure, APIs, microservices, and numerous third-party integrations. While this architecture enables scalability and flexibility, it also significantly increases the attack surface. As systems grow more complex, identifying vulnerabilities becomes increasingly challenging.

Traditional security tools have played an important role in helping developers detect common issues. However, they are struggling to keep pace with modern development speed and complexity.

This is where AI-powered security tools are beginning to redefine how vulnerabilities are identified and addressed.

Recently, companies have introduced new solutions that demonstrate how AI can assist developers and security teams more effectively. Two notable examples include:

- Claude Code Security, introduced by Anthropic

- Aardvark, a vulnerability research system developed by OpenAI

These innovations signal a shift in cybersecurity — from rule-based detection to intelligent, AI-driven security analysis.

Traditional Vulnerability Detection Methods

Static and Rule-Based Security Tools

For many years, vulnerability detection has relied on static analysis tools and rule-based scanners.

These tools examine source code and compare it against databases of known vulnerability patterns. If a match is found, an alert is generated.
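To make the pattern-matching idea concrete, here is a minimal sketch of a rule-based scanner. The two rules and their IDs (`PY-EVAL`, `PY-SHELL`) are hypothetical and chosen only for illustration; production rule sets are much larger and usually work on parsed code rather than raw lines.

```python
import re
from dataclasses import dataclass


@dataclass
class Rule:
    rule_id: str
    pattern: re.Pattern
    message: str


# Hypothetical rules for illustration only.
RULES = [
    Rule("PY-EVAL", re.compile(r"\beval\s*\("), "eval() on possibly untrusted input"),
    Rule("PY-SHELL", re.compile(r"shell\s*=\s*True"), "subprocess call with shell=True"),
]


def scan(source: str) -> list[tuple[int, str, str]]:
    """Return (line_number, rule_id, message) for every line that matches a rule."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for rule in RULES:
            if rule.pattern.search(line):
                findings.append((lineno, rule.rule_id, rule.message))
    return findings


sample = (
    "import subprocess\n"
    "subprocess.run(cmd, shell=True)\n"
    "result = eval(user_input)\n"
)
for finding in scan(sample):
    print(finding)
```

The scanner raises an alert only when a line matches a stored pattern. It has no notion of what the surrounding code is meant to do.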

Common approaches include:

- Static Application Security Testing (SAST)

- Software Composition Analysis (SCA)

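- Fuzz testing, a dynamic technique that runs the program against malformed or random inputs

These techniques remain valuable for identifying well-known vulnerabilities and are still widely used across the industry.

However, SAST and SCA primarily rely on matching known patterns rather than understanding how the code actually behaves, while fuzzing observes crashes and failures without knowing what the code is meant to do.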

Limitations of Traditional Approaches

Despite their usefulness, traditional security tools present several challenges:

- High volume of false positives

- Difficulty detecting logic-based vulnerabilities

- Limited efficiency when analyzing large codebases

- Alert fatigue for developers and security teams

For example, a traditional scanner might flag a known vulnerability pattern but completely miss an authentication bypass caused by flawed business logic.
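A minimal sketch of such a logic flaw follows. The `Request` model and both function names are hypothetical. The flawed check contains no dangerous call or suspicious string for a signature to match; the bug is a single wrong boolean operator.

```python
from dataclasses import dataclass


@dataclass
class Request:
    # Hypothetical request model, used only for this illustration.
    session_valid: bool  # True when the caller presented a valid session
    claimed_role: str    # role value taken from a client-supplied field


def may_access_admin_flawed(req: Request) -> bool:
    # Logic flaw: `or` lets a caller with no valid session through
    # as long as the client-supplied role says "admin".
    return req.session_valid or req.claimed_role == "admin"


def may_access_admin_fixed(req: Request) -> bool:
    # Intended rule: a valid session AND the admin role are both required.
    return req.session_valid and req.claimed_role == "admin"


attacker = Request(session_valid=False, claimed_role="admin")
print(may_access_admin_flawed(attacker))  # True: the check is bypassed
print(may_access_admin_fixed(attacker))   # False
```

Spotting the difference requires knowing the intended rule, which is context a signature database does not hold.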

This gap is exactly where AI begins to make a difference.

The Rise of AI-Powered Security Analysis

Why AI Is Entering Cybersecurity

Artificial Intelligence is becoming an integral part of the software development lifecycle.

Developers already use AI for code generation, code reviews, and automation. Extending AI into security is a natural progression.

Unlike traditional tools, AI systems can analyze:

- Relationships between different code components

- How data flows across applications

- Interactions between functions and services

This deeper level of analysis allows AI to identify vulnerabilities that traditional tools may miss.
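To illustrate what "data flow across components" means, the sketch below walks a small, hypothetical data-flow graph and reports paths where request data reaches a sensitive function without passing a sanitizer. This is classical graph reachability, not an AI model, and the function names in `FLOWS` are invented; it only shows the kind of cross-component relationship that file-by-file pattern matching does not see.

```python
from collections import deque

# Hypothetical data-flow graph: an edge A -> B means data from A reaches B.
FLOWS = {
    "http_request": ["parse_form"],
    "parse_form": ["validate_input", "build_query"],
    "validate_input": ["save_profile"],
    "build_query": ["run_sql"],
    "save_profile": [],
    "run_sql": [],
}
SOURCES = {"http_request"}        # where untrusted data enters
SINKS = {"run_sql", "save_profile"}  # where untrusted data is dangerous
SANITIZERS = {"validate_input"}   # nodes assumed to clean the data


def tainted_paths(flows, sources, sinks, sanitizers):
    """Return every source-to-sink path that never passes through a sanitizer."""
    paths = []
    queue = deque([src] for src in sorted(sources))
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node in sinks:
            paths.append(path)
            continue
        for nxt in flows.get(node, []):
            if nxt in sanitizers or nxt in path:
                continue  # sanitized branch, or a cycle
            queue.append(path + [nxt])
    return paths


for path in tainted_paths(FLOWS, SOURCES, SINKS, SANITIZERS):
    print(" -> ".join(path))
```

Here only the `build_query` branch is reported, because the route to `save_profile` goes through `validate_input`.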

AI as a Security Assistant

To be clear, AI is not replacing developers or security engineers; it is augmenting them.

These tools help teams:

- Identify vulnerabilities earlier in development

- Reduce manual security review effort

- Detect risks more quickly and accurately

This enables a shift toward a more proactive and efficient security approach.

Claude Code Security: AI-Assisted Vulnerability Detection

What It Does

Claude Code Security, introduced by Anthropic, is designed to analyze codebases using advanced AI reasoning.

Unlike traditional tools that rely solely on predefined rules, Claude attempts to understand the structure and behavior of the entire codebase. This allows it to analyze large repositories and detect more complex vulnerabilities.

How It Works

Step 1: Codebase Understanding. The system scans the repository to understand its structure, including files, dependencies, and relationships.

Step 2: Contextual Code Analysis. Instead of analyzing files in isolation, Claude evaluates how different parts of the application interact. This helps detect issues related to authentication, input validation, data handling, and permissions.

Step 3: Reasoning-Based Detection. Claude applies AI reasoning to identify vulnerabilities that may not match known patterns, such as logic flaws or improper data handling.

Step 4: Reporting and Recommendations. The system generates detailed reports explaining:

- Where the issue exists

- Why it is risky

- How it can be fixed

Developers can then review and apply the suggested fixes.
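One way to picture such a report is as a small record with a "where", a "why", and a "how to fix", plus a flag that only a human reviewer sets. The `Finding` schema and the example values below are hypothetical. They are not the actual output format of Claude Code Security, which this article does not show.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    # Hypothetical schema for illustration only.
    file: str               # where the issue exists
    line: int
    risk: str               # why it is risky
    suggested_fix: str      # how it can be fixed
    approved: bool = False  # set by a human reviewer, never by the tool


def render(finding: Finding) -> str:
    """Format one finding as a short, human-readable report entry."""
    return (
        f"{finding.file}:{finding.line}\n"
        f"  Risk: {finding.risk}\n"
        f"  Suggested fix: {finding.suggested_fix}"
    )


def fixes_to_apply(findings: list[Finding]) -> list[Finding]:
    """Only fixes a developer has explicitly approved are applied."""
    return [f for f in findings if f.approved]


findings = [
    Finding("auth/views.py", 42, "role check uses 'or' instead of 'and'",
            "require a valid session and the admin role"),
    Finding("db/query.py", 17, "query built by string concatenation",
            "use a parameterized query"),
]
findings[0].approved = True  # a developer reviewed and accepted this fix
print(render(findings[0]))
print(len(fixes_to_apply(findings)))
```

Keeping approval as a separate, human-set field mirrors the review step described above: the tool suggests, the developer decides.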

Impact on Development

By integrating AI into development workflows, Claude Code Security helps teams:

- Detect vulnerabilities earlier

- Reduce manual review effort

- Improve overall software security

Aardvark: An Autonomous AI Security Researcher

Introduction

Aardvark, introduced by OpenAI, takes a more autonomous approach to AI-driven security.

While Claude focuses on assisting developers, Aardvark operates as an autonomous security researcher — continuously analyzing systems and discovering vulnerabilities.

How It Works

Step 1: Threat Modeling. Aardvark analyzes system architecture to identify attack surfaces, entry points, and potential attack paths.

Step 2: Continuous Code Monitoring. It monitors repository changes and scans new commits for potential vulnerabilities.

Step 3: Hypothesis Generation. The system generates hypotheses about possible vulnerabilities based on its analysis.

Step 4: Sandbox Validation. Aardvark tests vulnerabilities in a controlled sandbox environment to determine whether they are exploitable.

Step 5: Patch Suggestions. If a vulnerability is confirmed, the system suggests potential fixes or patches.
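The control flow of steps 2 through 5 can be sketched as a small pipeline. Everything below is an assumption made for illustration: the `Hypothesis` record, the `run_pipeline` function, and the three stub stages are hypothetical and do not reflect Aardvark's real interfaces or internals. The point is only the ordering: a patch is suggested only for hypotheses that validation confirmed.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass
class Hypothesis:
    commit: str
    description: str
    confirmed: bool = False
    patch: Optional[str] = None


def run_pipeline(
    commits: Iterable[str],
    generate: Callable[[str], list[Hypothesis]],
    validate: Callable[[Hypothesis], bool],
    suggest_patch: Callable[[Hypothesis], str],
) -> list[Hypothesis]:
    """Scan each commit, hypothesize, validate, and patch only what is confirmed."""
    results = []
    for commit in commits:                      # step 2: new commits
        for hyp in generate(commit):            # step 3: hypotheses
            hyp.confirmed = validate(hyp)       # step 4: sandbox validation
            if hyp.confirmed:
                hyp.patch = suggest_patch(hyp)  # step 5: patch suggestion
            results.append(hyp)
    return results


# Stub stages standing in for the real analysis, which this sketch does not model.
def generate(commit: str) -> list[Hypothesis]:
    return [Hypothesis(commit, f"possible injection introduced in {commit}")]


def validate(hyp: Hypothesis) -> bool:
    return hyp.commit == "abc123"  # pretend only this one reproduced in the sandbox


def suggest_patch(hyp: Hypothesis) -> str:
    return f"parameterize the query changed in {hyp.commit}"


results = run_pipeline(["abc123", "def456"], generate, validate, suggest_patch)
for hyp in results:
    print(hyp.commit, hyp.confirmed, hyp.patch)
```

Unconfirmed hypotheses stay in the results with no patch attached, so a reviewer can still see what was considered and rejected.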

Key Capabilities

- Continuous monitoring of codebases

- Autonomous vulnerability discovery

- Exploit validation through sandbox testing

- AI-generated patch recommendations

This approach enables Aardvark to function similarly to a human security researcher — operating continuously and at scale.

Claude vs Aardvark vs Traditional Security

While all three approaches aim to improve software security, they differ significantly in methodology and automation.

| Aspect | Traditional Tools | Claude Code Security | Aardvark |
|--------|-------------------|----------------------|----------|
| Approach | Rule-based scanning | AI-driven analysis | Autonomous AI research |
| Focus | Known vulnerabilities | Code logic & behavior | System-level vulnerabilities |
| Automation | Limited | Assisted | Highly autonomous |
| Validation | Manual | AI-supported | Sandbox testing |
| Fixes | Rare | Suggested | AI-recommended |

Beyond Code: Enterprise Security Platforms

While tools like Claude and Aardvark focus on code-level vulnerabilities, organizations also need broader visibility.

Enterprise platforms aim to provide:

- Centralized visibility into security risks

- Monitoring across applications and infrastructure

- Risk tracking across digital assets

Security is no longer just about code — it's about the entire ecosystem.

Appdirs and its platforms, such as Garuda, are designed to address these broader challenges by providing insights into application-level risks and helping organizations strengthen their overall security posture.

The Future of AI in Cybersecurity

AI-powered security tools are still evolving, but their impact is already clear.

In the coming years, we can expect:

- Faster vulnerability detection

- Earlier identification of security risks

- Stronger collaboration between AI systems and security teams

Rather than replacing human expertise, AI will continue to augment and enhance security operations.

Conclusion

The evolution of cybersecurity reflects the growing complexity of modern software systems.

Traditional security tools remain essential, but they are no longer sufficient on their own. AI-powered solutions like Claude Code Security and Aardvark demonstrate how intelligent systems can analyze code more deeply, identify vulnerabilities earlier, and improve overall security outcomes.

The future of cybersecurity lies in combining human expertise with AI-driven intelligence. Organizations that adopt this hybrid approach will be better positioned to build secure, resilient systems in an increasingly complex digital landscape.